AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

The Return of Supersample AA

Over the years, the methods used to implement anti-aliasing on video cards have bounced back and forth. The earliest generation of cards, such as the 3Dfx Voodoo 4/5 and ATI and NVIDIA’s DirectX 7 parts, implemented supersampling (SSAA), which involved rendering a scene at a higher resolution and scaling it down for display. Supersampling did a great job of removing aliasing while also slightly improving the overall quality of the image, because the scene was sampled at a higher resolution.

But supersampling was expensive, particularly on those early cards. So the next generation implemented multisampling (MSAA), which instead of rendering a scene at a higher resolution, rendered it at the desired resolution and then sampled polygon edges to find and remove aliasing. The overall quality wasn’t quite as good as supersampling, but it was much faster, with that gap increasing as MSAA implementations became more refined.
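To make the cost and the benefit concrete, here is a minimal sketch of the supersampling idea described above: render at a multiple of the target resolution, then average each block of samples down to one displayed pixel. The `scene` function, the function names, and the ordered 2x2 sample layout are assumptions chosen for illustration; they are not how any particular card implements it.

```python
def scene(x, y):
    """Hypothetical scene: a hard diagonal edge, lit (1.0) where y > 0.5 * x."""
    return 1.0 if y > 0.5 * x else 0.0


def render(width, height, factor):
    """Point-sample the scene at factor x the target resolution in each axis."""
    w, h = width * factor, height * factor
    return [
        [scene((i + 0.5) / factor, (j + 0.5) / factor) for i in range(w)]
        for j in range(h)
    ]


def downsample(image, factor):
    """Average each factor x factor block of samples into one output pixel."""
    out_h = len(image) // factor
    out_w = len(image[0]) // factor
    out = []
    for j in range(out_h):
        row = []
        for i in range(out_w):
            total = 0.0
            for dj in range(factor):
                for di in range(factor):
                    total += image[j * factor + dj][i * factor + di]
            row.append(total / (factor * factor))
        out.append(row)
    return out


def supersample(width, height, factor):
    """Full-scene supersampling: factor**2 shaded samples per displayed pixel."""
    return downsample(render(width, height, factor), factor)


if __name__ == "__main__":
    for row in supersample(4, 4, 2):
        print(" ".join(f"{v:.2f}" for v in row))
```

With `factor=1` every pixel is either fully lit or fully dark, which is the stair-stepping that shows up as aliasing. With `factor=2` pixels along the edge take intermediate values, but the renderer has shaded four times as many samples, which is why the technique was so expensive on early hardware.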

Lately we have seen a slow bounce back in the other direction, as MSAA’s imperfections became more noticeable and in need of correction. Here supersampling saw a limited reintroduction, with AMD and NVIDIA using it on certain parts of a frame as part of their Adaptive Anti-Aliasing (AAA) and Supersample Transparency Anti-Aliasing (SSTr) schemes respectively. In these schemes SSAA is used to smooth out semi-transparent textures, where the textures themselves are the source of the aliasing and MSAA cannot work on them since they are not polygon edges. This still didn’t completely resolve MSAA’s shortcomings compared to SSAA, but it solved the transparent texture problem. With these technologies the differences between MSAA and SSAA were reduced to two: MSAA is unable to anti-alias shader output, and MSAA does not have the advantage of sampling textures at a higher resolution.

With the 5800 series, things have finally come full circle for AMD. Based upon their SSAA implementation for Adaptive Anti-Aliasing, they have re-implemented SSAA as a full screen anti-aliasing mode. Now gamers can once again access the higher quality anti-aliasing offered by a pure SSAA mode, instead of being limited to the best of what MSAA + AAA could do.

Ultimately the inclusion of this feature on the 5870 comes down to two matters: the card has lots and lots of processing power to throw around, and shader aliasing was the last obstacle that MSAA + AAA could not solve. With the reintroduction of SSAA, AMD is not dropping or downplaying their existing MSAA modes; rather it’s offered as another option, particularly one geared towards use on older games.

“Older games” is an important qualifier here, as there is a catch to AMD’s SSAA implementation: it only works under OpenGL and DirectX 9. As we found out in our testing, after much head-scratching, it does not work on DX10 or DX11 games. Attempting to use it there will result in the game switching to MSAA.

When we asked AMD about this, they cited the fact that DX10 and later give developers much greater control over anti-aliasing patterns, and that using SSAA with these controls may create incompatibility problems. Furthermore the games that can best run with SSAA enabled from a performance standpoint are older titles, making the use of SSAA a more reasonable choice with older games as opposed to newer games. We’re told that AMD will “continue to investigate” implementing a proper version of SSAA for DX10+, but it’s not something we’re expecting any time soon.

Unfortunately, in our testing of AMD’s SSAA mode, there are clearly a few kinks to work out. Our first AA image quality test was going to be the railroad bridge at the beginning of Half-Life 2: Episode 2. That scene is full of aliased metal bars, cars, and trees. However, as we’re going to lay out in this screenshot, while AMD’s SSAA mode eliminated the aliasing, it also gave the entire image a smooth makeover – too smooth. SSAA isn’t supposed to blur things; it’s only supposed to make things smoother by removing all aliasing in geometry, shaders, and textures alike.

As it turns out this is a freshly discovered bug in their SSAA implementation that affects newer Source-engine games. Presumably we’d see something similar in the rest of The Orange Box, and possibly other HL2 games. This is an unfortunate engine to have a bug in, since Source-engine games tend to be heavily CPU limited anyhow, making them perfect candidates for SSAA. AMD is hoping to have a fix out for this bug soon.

“But wait!” you say. “Doesn’t NVIDIA have SSAA modes too? How would those do?” And indeed you would be right. While NVIDIA dropped official support for SSAA a number of years ago, it has remained as an unofficial feature that can be enabled in Direct3D games, using tools such as nHancer to set the AA mode.

Unfortunately NVIDIA’s SSAA mode isn’t even in the running here, and we’ll show you why.

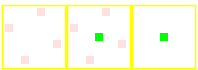

5870 SSAA

GTX 280 MSAA

GTX 280 SSAA

At the top we have the view from DX9 FSAA Viewer of ATI’s 4x SSAA mode. Notice that it’s a rotated grid with 4 geometry samples (red) and 4 texture samples. Below that we have NVIDIA’s 4x MSAA mode, a rotated grid with 4 geometry samples and a single texture sample. Finally we have NVIDIA’s 4x SSAA mode, an ordered grid with 4 geometry samples and 4 texture samples. For reasons that we won’t delve into, rotated grids are a better layout than ordered grids from a quality standpoint. This is why early implementations of AA using ordered grids were dropped for rotated grids, and why no one uses ordered grids these days for MSAA.
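One commonly cited reason for the rotated grid's advantage can be sketched in a few lines. In an ordered 2x2 grid the four samples share only two distinct horizontal (and two distinct vertical) offsets, so a near-vertical or near-horizontal edge sweeping across a pixel produces fewer intermediate shades. The sample positions below are textbook-style illustrative values, assumed for this example; they are not the actual positions used by either vendor's hardware.

```python
# Sample positions inside a unit pixel; illustrative values only.
ORDERED_GRID = [(0.25, 0.25), (0.75, 0.25), (0.25, 0.75), (0.75, 0.75)]
ROTATED_GRID = [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]


def edge_coverage(pattern, edge_x):
    """Fraction of samples lying left of a perfectly vertical edge at edge_x."""
    covered = sum(1 for (x, _y) in pattern if x < edge_x)
    return covered / len(pattern)


def coverage_levels(pattern, steps=64):
    """Distinct coverage values seen as the edge sweeps across the pixel."""
    return sorted({edge_coverage(pattern, k / steps) for k in range(steps + 1)})


if __name__ == "__main__":
    print("ordered:", coverage_levels(ORDERED_GRID))
    print("rotated:", coverage_levels(ROTATED_GRID))
```

Under these assumptions the ordered grid yields three coverage levels for a vertical edge, while the rotated grid yields five from the same four samples, giving smoother gradients on exactly the edges where stair-stepping is most visible.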

Furthermore, when actually using NVIDIA's SSAA mode, we ran into some definite quality issues with HL2: Ep2. We're not sure if these are related to the use of an ordered grid or not, but it's a possibility we can't ignore.

If you compare the two shots, with MSAA 4x the scene is almost perfectly anti-aliased, except for some trouble along the bottom/side edge of the railcar. If we switch to SSAA 4x that aliasing is solved, but we have a new problem: all of a sudden a number of fine tree branches have gone missing. While MSAA properly anti-aliased them, SSAA anti-aliased them right out of existence.

For this reason we will not be taking a look at NVIDIA’s SSAA modes. Besides the fact that they’re unofficial in the first place, the use of an ordered grid and the problems in HL2 cement the fact that they’re not suitable for general use.

327 Comments


SiliconDoc - Monday, September 28, 2009 - link

When the GTX295 still beats the latest ati card, your wish probably won't come true. Not only that, ati's own 4870x2 just recently here promoted as the best value, is a slap in its face.

It's rather difficult to believe all those crossfire promoting red ravers suddenly getting a different religion...

Then we have the no DX11 released yet, and the big, big problem...

NO 5870'S IN THE CHANNELS, reports are it runs hot and the drivers are beta problematic.

---

So, celebrating a red revolution of market share - is only your smart aleck fantasy for now.

LOL - Awwww...

silverblue - Monday, September 28, 2009 - link

It's nearly as fast as a dual GPU solution. I'd say that was impressive.

DirectX 11 comes out in less than a month... hardly a wait. It's not as if the card won't do DX9/10.

Hot card? Designed to be that way. If it was a real issue they'd have made the exhaust larger.

Beta problematic drivers? Most ATI launches seem to go that way. They'll be fixed soon enough.

SiliconDoc - Monday, September 28, 2009 - link

Gee, I thought the red rooster said nvidia sales will be low for a while, and I pointed out why they won't be, and you, well you just couldn't handle that.

I'd say a 60.96% increase in a next gen gpu is "impressive", and that's what Nvidia did just this last time with GT200.

http://www.anandtech.com/video/showdoc.aspx?i=3334...

--

BTW - the 4870 to 4890 move had an additional 3M core transistors, and we were told by you and yours that was not a "rebrand".

BUT - the G80 move to G92 added 73M core transistors, and you couldn't stop shrieking "rebrand".

---

nearly as fast= second best

DX11 in a month = not now and too early

hot card = IT'S OK JUST CLAIM ATI PLANNED ON IT BEING HOT ! ROFL, IT'S OK TO LIE ABOUT IT IN REVIEWS, TOO ! COCKA DOODLE DOOO!

beta drivers = ALL ATI LAUNCHES GO THAT WAY, NOT "MOST"

----

Now, you can tell smart aleck this is a paper launch like the 4870, the 4770, and now this 5870 and no 5850, because....

"YOU'LL PUT YOUR HEAD IN THE SAND AND SCREAM IN CAPS BECAUSE THAT'S HOW YOU ROLL IN RED ROOSERVILLE ! "

(thanks for the composition Jared, it looks just as good here as when you add it to my posts, for "convenience" of course)

ClownPuncher - Monday, September 28, 2009 - link

It would be awesome if you were to stop posting altogether.

SiliconDoc - Monday, September 28, 2009 - link

It would be awesome if this 5870 was 60.96% better than the last ati card, but it isn't.

JarredWalton - Monday, September 28, 2009 - link

But the 5870 *is* up to 65% faster than the 4890 in the tested games. If you were to compare the GTX 280 to the 9800 GX2, it also wasn't 60% faster. In fact, 9800 GX2 beat the GTX 280 in four out of seven tested games, tied it in one, and only trailed in two games: Enemy Territory (by 13%) and Oblivion (by 3%), making ETQW the only substantial win for the GT200.

So we're biased while you're the beacon of impartiality, I suppose, since you didn't intentionally make a comparison similar to comparing apples with cantaloupes. Comparing ATI's new card to their last dual-GPU solution is the way to go, but NVIDIA gets special treatment and we only compare it with their single GPU solution.

If you want the full numbers:

1) On average, the 5870 is 30% faster than the 4890 at 1680x1050, 35% faster at 1920x1200, and 45% faster at 2560x1600.

2) Note that the margin goes *up* as resolution increases, indicating the major bottleneck is not memory bandwidth at anything but 2560x1600 on the 5870.

3) Based on the old article you linked, GTX 280 was on average 5% slower than 9800X2 and 59% faster than the 9800 GTX - the 9800X2 was 6.4% faster than the GTX 280 in the tested titles.

4) Making the same comparisons, 5870 is only 3.4% faster than the 4870X2 in the tested games and 45% faster than the 4890HD.

Now, the games used for testing are completely different, so we have to throw that out. DoW2 is a huge bonus in favor of the 5870 and it scales relatively poorly with CF, hurting the X2. But you're still trying to paint a picture of the 5870 as a terrible disappointment when in fact we could say it essentially equals what NVIDIA did with the GTX 280.

On average, at 2560x1600, if NVIDIA's GT300 were to come out and be 60% faster than the GTX 285, it will beat ATI's 5870 by about 15%. If it's the same price, it's the clear choice... if you're willing to wait a month or two. That's several "ifs" for what amounts to splitting hairs. There is no current game that won't run well on the HD 5870 at 2560x1600, and I suspect that will hold true of the GT300 as well.

(FWIW, Crysis: Warhead is as bad as it gets, and dropping 4xAA will boost performance by at least 25%. It's an outlier, just like Crysis, since the higher settings are too much for anything but the fastest hardware. "High" settings are more than sufficient.)

SiliconDoc - Tuesday, September 29, 2009 - link

In other words, even with your best fudging and whining about games and all the rest, you can't even bring it with all the lies from the 15-30 percent people are claiming up to 60.96%--

Yes, as I thought.

zshift - Thursday, September 24, 2009 - link

My thoughts exactly ;)

I knew the 5870 was gonna be great based on the design philosophy that AMD/ATi had with the 4870, but I never thought I'd see anything this impressive. LESS power, with MORE power! (pun intended), and DOUBLE the speed, at that!

Funny thing is, I was actually considering an Nvidia gpu when I saw how impressive PhysX was on Batman AA. But I think I would rather have near double the frame rates compared to seeing extra paper fluffing around here and there (though the scenes with the scarecrow are downright amazing). I'll just have to wait and see how the GT300 series does, seeing as I can't afford any of this right now (but boy, oh boy, is that upgrade bug itching like it never has before).

SiliconDoc - Thursday, September 24, 2009 - link

Fine, but performance per dollar is on the very low end, often the lowest of all the cards. That's why it was omitted here.

http://www.techpowerup.com/reviews/ATI/Radeon_HD_5...

THE LOWEST overall, or darn near it.

erple2 - Friday, September 25, 2009 - link

So what you're saying then is that everyone should buy the 9500 GT and ignore everything else? If that's the most important thing to you, then clearly, that's what you mean.

I think that the performance per dollar metrics that are shown are misleading at best and terrible at worst. It does not take into account that any frame rates significantly above your monitor refresh are for all intents and purposes wasted, and any frame rates significantly below 30 should be heavily weighted negatively. I haven't seen how techpowerup does their "performance per dollar" or how (if at all) they weight the FPS numbers in the dollar category.

SLI/Crossfire has always been a lose-lose in the "performance per dollar" category. Curiously, I don't see any of the nvidia SLI cards listed (other than the 295).

That sounds like biased "reporting" on your part.