AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST- Posted in

- GPUs

More GDDR5 Technologies: Memory Error Detection & Temperature Compensation

As we previously mentioned, for Cypress AMD’s memory controllers have implemented a greater part of the GDDR5 specification. Beyond gaining the ability to use GDDR5’s power saving abilities, AMD has also been working on implementing features to allow their cards to reach higher memory clock speeds. Chief among these is support for GDDR5’s error detection capabilities.

One of the biggest problems in using a high-speed memory device like GDDR5 is that it requires a bus that’s both fast and fairly wide - properties that generally run counter to each other in designing a device bus. A single GDDR5 memory chip on the 5870 needs to connect to a bus that’s 32 bits wide and runs at a base speed of 1.2GHz, which requires a bus that can meet exceedingly precise tolerances. Adding to the challenge is that for a card like the 5870 with a 256-bit total memory bus, eight of these buses will be required, leading to more noise from adjoining buses and less room to work in.
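To put rough numbers on this, here is a back-of-the-envelope sketch in Python. It assumes GDDR5's usual behavior of moving four bits per pin per base clock cycle, a multiplier that is not stated above, and the function and constant names are purely illustrative.

```python
# Back-of-the-envelope bus arithmetic for a 5870-style memory configuration.
# Assumption (not from the article): GDDR5 moves 4 bits per pin per base clock.

CHIP_BUS_WIDTH = 32       # bits, one bus per GDDR5 chip
TOTAL_BUS_WIDTH = 256     # bits, total memory bus on the 5870
BASE_CLOCK_GHZ = 1.2      # base memory clock
TRANSFERS_PER_CLOCK = 4   # assumed GDDR5 data-rate multiplier


def chip_bus_count(total_width: int, chip_width: int) -> int:
    """Number of per-chip buses needed to build the full memory bus."""
    return total_width // chip_width


def peak_bandwidth_gb_per_s(total_width: int, base_clock_ghz: float,
                            transfers_per_clock: int) -> float:
    """Theoretical peak bandwidth in GB/s, ignoring all protocol overhead."""
    per_pin_gbps = base_clock_ghz * transfers_per_clock
    return total_width * per_pin_gbps / 8  # 8 bits per byte


if __name__ == "__main__":
    print(chip_bus_count(TOTAL_BUS_WIDTH, CHIP_BUS_WIDTH), "buses")
    print(peak_bandwidth_gb_per_s(TOTAL_BUS_WIDTH, BASE_CLOCK_GHZ,
                                  TRANSFERS_PER_CLOCK), "GB/s peak")
```

Under that assumption every one of the 256 data lines has to switch several billion times per second, which is why the tolerances are so tight.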

Because of the difficulty in building such a bus, the memory bus has become the weak point for video cards using GDDR5. The GPU’s memory controller can do more and the memory chips themselves can do more, but the bus can’t keep up.

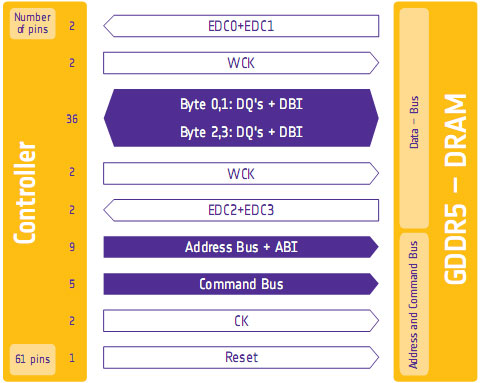

To combat this, GDDR5 memory controllers can perform basic error detection on both reads and writes by implementing a CRC-8 hash function. With this feature enabled, for each 64-bit data burst an 8-bit cyclic redundancy check hash (CRC-8) is transmitted via a set of four dedicated EDC pins. This CRC is then used to check the contents of the data burst, to determine whether any errors were introduced into the data burst during transmission.

The specific CRC function used in GDDR5 can detect 1-bit and 2-bit errors with 100% accuracy, with that accuracy falling with additional erroneous bits. This is due to the fact that the CRC function used can generate collisions, which means that the CRC of an erroneous data burst could match the proper CRC in an unlikely situation. But as the odds decrease for additional errors, the vast majority of errors should be limited to 1-bit and 2-bit errors.
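As a rough illustration of how such a check behaves, the Python sketch below computes a CRC-8 over an 8-byte (64-bit) burst and then flips every possible single bit and pair of bits to see whether any corruption slips through. The polynomial used here (x^8 + x^2 + x + 1, or 0x07) is our assumption for the sketch, and the code treats the burst as a plain byte string rather than modelling how real GDDR5 hardware maps data and EDC pins. All function names are hypothetical.

```python
from itertools import combinations

CRC8_POLY = 0x07  # assumed polynomial: x^8 + x^2 + x + 1


def crc8(data: bytes, poly: int = CRC8_POLY) -> int:
    """Bitwise CRC-8, MSB first, initial value 0, no final XOR."""
    crc = 0
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 0x80:
                crc = ((crc << 1) ^ poly) & 0xFF
            else:
                crc = (crc << 1) & 0xFF
    return crc


def flip_bits(data: bytes, positions) -> bytes:
    """Return a copy of data with the given bit positions inverted."""
    corrupted = bytearray(data)
    for pos in positions:
        corrupted[pos // 8] ^= 1 << (pos % 8)
    return bytes(corrupted)


def undetected_errors(burst: bytes, max_flips: int) -> int:
    """Count corruptions of 1..max_flips bits whose CRC still matches."""
    good_crc = crc8(burst)
    n_bits = len(burst) * 8
    missed = 0
    for k in range(1, max_flips + 1):
        for positions in combinations(range(n_bits), k):
            if crc8(flip_bits(burst, positions)) == good_crc:
                missed += 1
    return missed


if __name__ == "__main__":
    burst = bytes(range(8))  # an arbitrary 64-bit data burst
    print("undetected 1- and 2-bit errors:", undetected_errors(burst, 2))
```

With only 256 possible CRC values and far more possible bursts, collisions are unavoidable once enough bits are wrong. The point of a well-chosen polynomial is to guarantee that none of them occur for the small errors that are most likely.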

Should an error be found, the GDDR5 controller will request a retransmission of the faulty data burst, and it will keep doing this until the data burst finally goes through correctly. A retransmission request is also used to re-train the GDDR5 link (once again taking advantage of fast link re-training) to correct any potential link problems brought about by changing environmental conditions. Note that this does not involve changing the clock speed of the GDDR5 (i.e. it does not step down in speed); rather it’s merely reinitializing the link. If the errors are due to the bus being outright unable to perfectly handle the requested clock speed, errors will continue to happen and be caught. Keep this in mind as it will be important when we get to overclocking.
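The retry behavior can be sketched as a simple loop. This is a toy model in Python, not AMD's implementation: the `FlakyBus` class, its fixed error rate, and the `max_attempts` cap are all our own inventions for illustration (the real controller simply keeps retrying).

```python
import random


class FlakyBus:
    """Toy bus: each transfer fails its CRC check with a fixed probability."""

    def __init__(self, error_rate: float, seed: int = 0):
        self.error_rate = error_rate
        self.rng = random.Random(seed)
        self.retrains = 0

    def transfer_ok(self) -> bool:
        """Simulate one burst and report whether its CRC check passed."""
        return self.rng.random() >= self.error_rate

    def retrain_link(self) -> None:
        """Reinitialize the link; the clock speed is left untouched."""
        self.retrains += 1


def read_burst(bus: FlakyBus, max_attempts: int = 1000) -> int:
    """Retry until the burst passes its CRC; return the attempts used.

    max_attempts exists only so the sketch cannot loop forever.
    """
    for attempt in range(1, max_attempts + 1):
        if bus.transfer_ok():
            return attempt
        bus.retrain_link()  # a failed CRC triggers a retrain, then a retry
    raise RuntimeError("bus never delivered a clean burst")


if __name__ == "__main__":
    bus = FlakyBus(error_rate=0.3)
    attempts = read_burst(bus)
    print(f"clean burst after {attempts} attempt(s), {bus.retrains} retrain(s)")
```

Note that nothing in the loop lowers the clock. If the error rate comes from a bus that simply can't handle the requested speed, retraining won't bring it down and the retries keep coming.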

Finally, we should also note that this error detection scheme is only for detecting bus errors. Errors in the GDDR5 memory modules or errors in the memory controller will not be detected, so it’s still possible to end up with bad data should either of those two devices malfunction. By the same token this is solely a detection scheme, so there are no error correction abilities. The only way to correct a transmission error is to keep trying until the bus gets it right.

Now in spite of the difficulties in building and operating such a high speed bus, error detection is not necessary for its operation. As AMD was quick to point out to us, cards still need to ship defect-free and not produce any errors. Or in other words, the error detection mechanism is a failsafe mechanism rather than a tool specifically to attain higher memory speeds. Memory supplier Qimonda’s own whitepaper on GDDR5 pitches error correction as a necessary precaution due to the increasing amount of code stored in graphics memory, where a failure can lead to a crash rather than just a bad pixel.

In any case, for normal use the ramifications of using GDDR5’s error detection capabilities should be non-existent. In practice, this is going to lead to more stable cards since memory bus errors have been eliminated, but we don’t know to what degree. The full use of the system to retransmit a data burst would itself be a catch-22 after all – it means an error has occurred when it shouldn’t have.

Like the changes to VRM monitoring, the significant ramifications of this will be felt with overclocking. Overclocking attempts that previously would push the bus too hard and lead to errors now will no longer do so, making higher overclocks possible. However this is a bit of an illusion as retransmissions reduce performance. The scenario laid out to us by AMD is that overclockers who have reached the limits of their card’s memory bus will now see the impact of this as a drop in performance due to retransmissions, rather than crashing or graphical corruption. This means assessing an overclock will require monitoring the performance of a card, along with continuing to look for traditional signs as those will still indicate problems in memory chips and the memory controller itself.
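To see why a "stable" overclock can end up slower, consider a simplified model in which every burst independently fails with some probability and is resent until it succeeds. The Python sketch below is only that model: the bandwidth figures and the 15% error rate are illustrative inputs we made up, not measurements, and the cost of re-training the link is ignored entirely.

```python
def expected_transmissions(retry_probability: float) -> float:
    """Mean number of sends per burst when each send fails independently."""
    if not 0.0 <= retry_probability < 1.0:
        raise ValueError("retry_probability must be in [0, 1)")
    return 1.0 / (1.0 - retry_probability)


def effective_bandwidth(raw_gb_per_s: float, retry_probability: float) -> float:
    """Useful bandwidth once retransmitted bursts are accounted for."""
    return raw_gb_per_s / expected_transmissions(retry_probability)


if __name__ == "__main__":
    # Hypothetical numbers: a clean bus at stock speed versus a faster
    # overclock on which 15% of bursts need to be resent.
    stock = effective_bandwidth(153.6, 0.0)
    overclocked = effective_bandwidth(172.8, 0.15)
    print(f"stock: {stock:.2f} GB/s, overclocked: {overclocked:.2f} GB/s")
```

In this made-up case the overclocked card delivers less useful bandwidth than the stock one despite the higher clock, which is exactly the kind of regression an overclocker would have to catch by benchmarking.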

Ideally there would be a more absolute and expedient way to check for errors than looking at overall performance, but at this time AMD doesn’t have a way to deliver error notices. Maybe in the future they will?

Wrapping things up, we have previously discussed fast link re-training as a tool to allow AMD to clock down GDDR5 during idle periods, and as part of a failsafe method to be used with error detection. However it also serves as a tool to enable higher memory speeds through its use in temperature compensation.

Once again due to the high speeds of GDDR5, it’s more sensitive to memory chip temperatures than previous memory technologies were. Under normal circumstances this sensitivity would limit memory speeds, as temperature swings would change the performance of the memory chips enough to make it difficult to maintain a stable link with the memory controller. By monitoring the temperature of the chips and re-training the link when there are significant shifts in temperature, higher memory speeds are made possible by preventing link failures.
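In the abstract, the policy looks something like the Python sketch below: remember the temperature at which the link was last trained, and re-train whenever the chips drift far enough from it. The 5°C threshold and the sample readings are invented for illustration; AMD hasn't told us what counts as a "significant" shift.

```python
def retrain_points(temps_c, threshold_c: float = 5.0):
    """Return the sample indices at which the link would be re-trained.

    The link counts as trained at the first reading. A re-train fires when
    the temperature has moved at least threshold_c away from the reading
    at which the link was last trained. The threshold is a made-up value.
    """
    if not temps_c:
        return []
    trained_at = temps_c[0]
    points = []
    for index, temp in enumerate(temps_c[1:], start=1):
        if abs(temp - trained_at) >= threshold_c:
            points.append(index)
            trained_at = temp  # the link is now tuned for this temperature
    return points


if __name__ == "__main__":
    readings = [50, 52, 56, 57, 62, 61, 55]  # hypothetical chip temps in C
    print(retrain_points(readings))
```

Comparing against the last training point rather than the previous sample means slow, steady drift still triggers a re-train eventually.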

And while temperature compensation may not sound complex, that doesn’t mean it’s not important. As we have mentioned a few times now, the biggest bottleneck in memory performance is the bus. The memory chips can go faster; it’s the bus that can’t. So anything that can help maintain a link along these fragile buses becomes an important tool in achieving higher memory speeds.

327 Comments


ilnot1 - Wednesday, September 23, 2009 - link

In fact, going by the lowest Newegg prices, this is how the top setups would stack up today:

5870 CF .......= $760

GTX 285 SLI .= $592

GTX 295 .......= $470

GTX 275 SLI .= $420

5870 ............= $380

4890 CF ........= $360

4870 X2 ........= $330

This would make the 4890 CF or the 275 SLI setups the best value. And yes I realize there will be availability issues and price adjustments over the next month or so.

DominionSeraph - Thursday, September 24, 2009 - link

you forgot:

4870 CF: $260-280

and how about the $180 4850 CF, which is probably the best price/performance for sub-1920x1200 gaming. http://www.anandtech.com/video/showdoc.aspx?i=3517... You can even get 1GB versions for ~$190.

ilnot1 - Wednesday, September 23, 2009 - link

Another very good review, thanks.

But piggy backing on what wicko said, I'm surprised you didn't include two 4890 in CF. Seeing as how you can get two 4890 for less than a 5870 (whenever they are actually available). $180 x 2 = $360 < $379. And this from Newegg, not some super special sale price.

http://www.newegg.com/Product/Product.aspx?Item=N8...

Ryan Smith - Wednesday, September 23, 2009 - link

It's something we would have included if we had the cards. I don't have 2 4890s, and we couldn't get a second one in time.

AnotherGuy - Wednesday, September 23, 2009 - link

as always anandtech rox

nbjsl2000 - Wednesday, September 23, 2009 - link

Finally time to upgrade..

SiliconDoc - Wednesday, September 23, 2009 - link

Not only all that, but when there were 13 big titles for PhysX and a hundred smaller ones, we were told here, "Meh", who needs it.

Now, we have a papery and unavailable (egg)except by pre-order(tiger) 5870 launch, a not-existing 5850, with guess what ? NO DX11 games!

Oh wait, there is actually just ONE - see page 7 of review. LOL

---

Conclusion ?: " It looks like NO(err.. just one) DX11 games ready, so... it also looks like NVidia is launching at the right time, and ATI blew their dry unimpressive wad on a piece of paper porn. "

---

Gee no one crowing about the first DX11 card... imagine that...

Good thing , too, considering how 13 big or a hundred titles small of PhysX enhanced games was "nothing to change one's purchase decision over". At least Anand got addicted to Mirror's Edge with PhysX enabled before concluding in the article "meh" this PhysX thing is ok if you like this game, but who cares...

---

Now we have the DX11 pre DX11 games launch with a paper product, so crowing about it wouldn't be too fitting, huh.

monomer - Wednesday, September 23, 2009 - link

Why would a developer release a DX11 game before DX11 is even available?

SiliconDoc - Friday, September 25, 2009 - link

Why would a developer release a DX11 card before DX11 is even available ?

(I suppose you'll have to unscrew your hate nvidia foil cap, and grind in the red spikes in its place to answer that one.)

However, allow me, instead.

1.I have been running windows 7 32&64 for quite some time now, not sure why you haven't been.

2. Battleforge, an ATI promo game, as noted in the review, has released their DX11 patch, hence, with W7 from MSFT (the beta+ free trial good till March 1st 2010 or something like that) I believe any gamer has had a reasonable chance to preview DX11.

---

So anyway...

cactusdog - Friday, September 25, 2009 - link

Ya , ATI has done it again. Excellent performance for a fair price. If Nvidia released this exact card it would be $150-$200 more expensive.

LOL, Nvidia sales are gonna be slow for a while.