AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST. Posted in GPUs

Eyefinity

Somewhere around 2006 - 2007 ATI was working on the overall specifications for what would eventually turn into the RV870 GPU. These GPUs are designed by combining the views of ATI's engineers with the demands of the developers, end-users and OEMs. In the case of Eyefinity, the initial demand came directly from the OEMs.

ATI was working on the mobile version of its RV870 architecture and realized that it had a number of DisplayPort (DP) outputs at the request of OEMs. The OEMs wanted up to six DP outputs from the GPU, but with only two active at a time. The six came from two for internal panel use (if an OEM wanted to do a dual-monitor notebook, which has happened since), two for external outputs (one DP and one DVI/VGA/HDMI for example), and two for passing through to a docking station. Again, only two had to be active at once, so the GPU had six sets of DP lanes but only enough display engines to drive two simultaneously.

ATI looked at the effort required to enable all six outputs at the same time and made it so, thus the RV870 GPU can output to a maximum of six displays at the same time. Not all cards support this as you first need to have the requisite number of display outputs on the card itself. The standard Radeon HD 5870 can drive three outputs simultaneously: any combination of the DVI and HDMI ports for up to 2 monitors, and a DisplayPort output independent of DVI/HDMI. Later this year you'll see a version of the card with six mini-DisplayPort outputs for driving six monitors.
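The output rule for the standard card can be written down as a small check. This is a minimal Python sketch of the rule as stated above, not anything taken from ATI's driver; the function name `is_valid_combo` and the output labels `"DVI"`, `"HDMI"` and `"DP"` are assumptions made for illustration, and the sketch does not model how many physical ports of each type a given card has.

```python
from collections import Counter

# Rule as described in the article for the standard Radeon HD 5870:
# at most two monitors across the DVI and HDMI ports, plus one DisplayPort.
MAX_DVI_HDMI = 2      # hypothetical constant names, for illustration only
MAX_DISPLAYPORT = 1


def is_valid_combo(outputs):
    """Return True if the listed outputs could be active at the same time."""
    counts = Counter(o.upper() for o in outputs)
    unknown = set(counts) - {"DVI", "HDMI", "DP"}
    if unknown:
        raise ValueError(f"unknown output type(s): {sorted(unknown)}")
    dvi_hdmi = counts["DVI"] + counts["HDMI"]
    return dvi_hdmi <= MAX_DVI_HDMI and counts["DP"] <= MAX_DISPLAYPORT


if __name__ == "__main__":
    print(is_valid_combo(["DVI", "HDMI", "DP"]))   # one of each type
    print(is_valid_combo(["DVI", "DVI", "HDMI"]))  # three on DVI/HDMI
```

Under this rule, one DVI, one HDMI and one DisplayPort monitor is an allowed set, while three monitors spread across only DVI and HDMI is not.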

It's not just hardware; there's a software component as well. The Radeon HD 5000 series driver allows you to combine all of these display outputs into one single large surface, visible to Windows and your games as a single display with tremendous resolution.

I set up a group of three Dell 24" displays (U2410s). This isn't exactly what Eyefinity was designed for since each display costs $600, but the point is that you could group three $200 1920 x 1080 panels together and potentially have a more immersive gaming experience (for less money) than a single 30" panel.

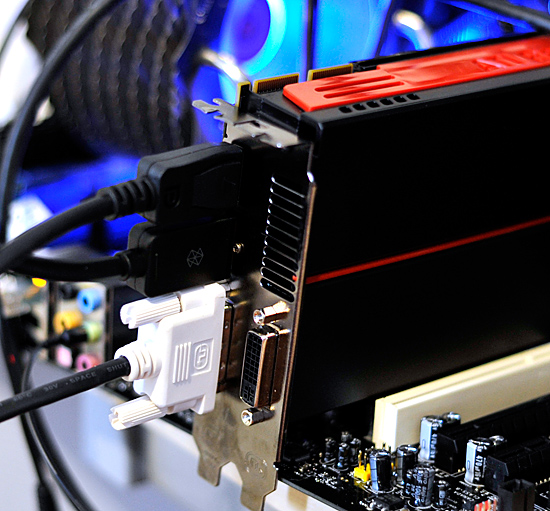

For our Eyefinity tests I chose to use every single type of output on the card, that's one DVI, one HDMI and one DisplayPort:

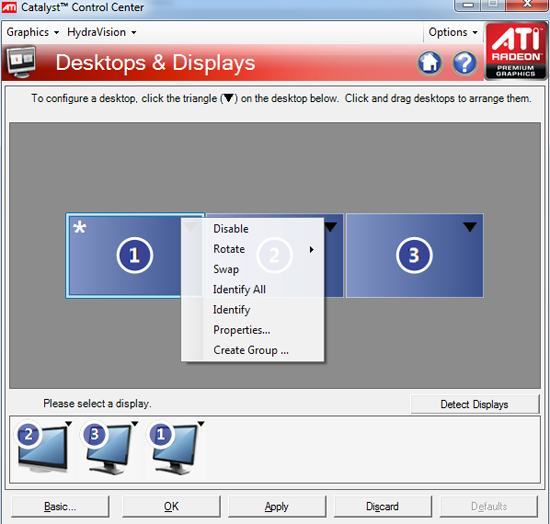

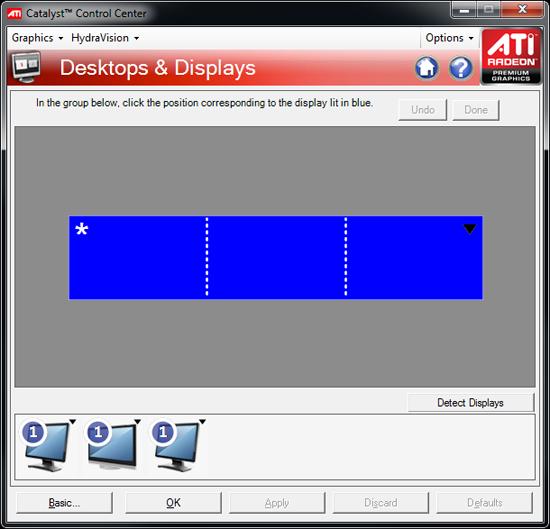

With all three outputs connected, Windows defaults to cloning the display across all monitors. Going into ATI's Catalyst Control Center lets you configure your Eyefinity groups:

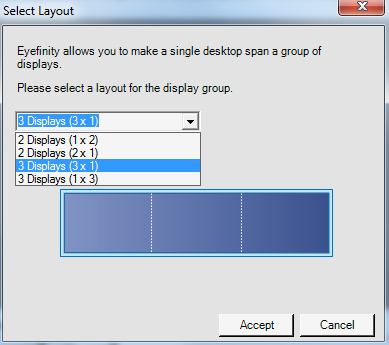

With three displays connected I could create a single 1x3 or 3x1 arrangement of displays. I also had the ability to rotate the displays first so they were in portrait mode.

You can create smaller groups, although the ability to do so disappeared after I created my first Eyefinity setup (even after deleting it and trying to recreate it). Once you've selected the type of Eyefinity display you'd like to create, the driver will make a guess as to the arrangement of your panels.

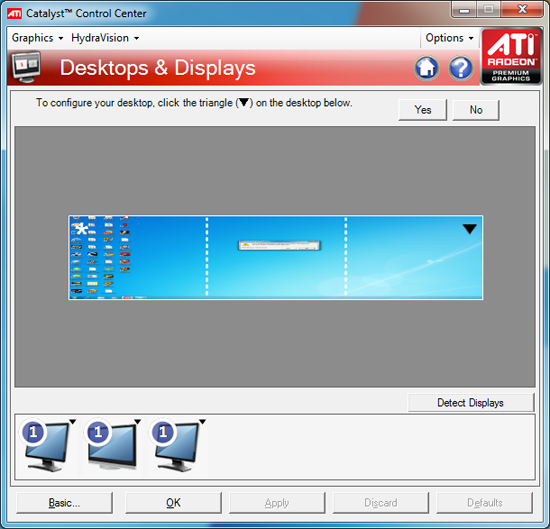

If it guessed correctly, just click Yes and you're good to go. Otherwise ATI has a handy way of determining the location of your monitors:

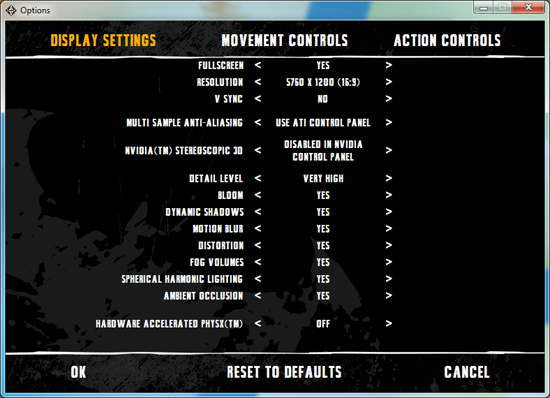

With the software side taken care of, you now have a Single Large Surface as ATI likes to call it. The display appears as one contiguous panel with a ridiculous resolution to the OS and all applications/games:

Three 24" panels in a row give us 5760 x 1200
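The arithmetic behind that figure is simple enough to sketch. The Python below is only an illustration: the helper names `surface_resolution` and `aspect_ratio` are made up for this example, and it assumes identical panels with no bezel compensation.

```python
from math import gcd


def surface_resolution(panel_w, panel_h, cols, rows=1, portrait=False):
    """Resolution of a grid of identical panels treated as one surface.

    Assumes no bezel compensation; portrait=True rotates each panel first.
    """
    if portrait:
        panel_w, panel_h = panel_h, panel_w
    return panel_w * cols, panel_h * rows


def aspect_ratio(width, height):
    """Reduce width:height to lowest terms."""
    g = gcd(width, height)
    return width // g, height // g


if __name__ == "__main__":
    w, h = surface_resolution(1920, 1200, cols=3)
    print(w, h, aspect_ratio(w, h))
    w, h = surface_resolution(1920, 1200, cols=3, portrait=True)
    print(w, h, aspect_ratio(w, h))
```

Three 1920 x 1200 panels side by side come out to 5760 x 1200, a 24:5 aspect ratio. The same three panels rotated to portrait give 3600 x 1920, or 15:8.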

The screenshot above should clue you in to the first problem with an Eyefinity setup: aspect ratio. While the Windows desktop simply expands to provide you with more screen real estate, some games may not increase how much you can see; they may just stretch the viewport to fill all of the horizontal resolution. The resolution is correctly listed in Batman Arkham Asylum, but the aspect ratio is not (5760:1200 reduces to 24:5, nowhere near 16:9). In these situations my Eyefinity setup made me feel downright sick; the weird stretching of characters as they moved towards the outer edges of my vision left me feeling ill.
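To put rough numbers on the difference between stretching and expanding the view, here is a minimal Python sketch. It uses the common "Hor+" convention, in which the vertical field of view is held fixed and the horizontal field of view grows with aspect ratio. None of this comes from the games tested: the 60 degree vertical FOV is an assumed value, and the function names are hypothetical.

```python
import math


def hor_plus_hfov(vfov_deg, width, height):
    """Horizontal FOV in degrees when the vertical FOV is held fixed."""
    half_v = math.radians(vfov_deg) / 2
    return math.degrees(2 * math.atan(math.tan(half_v) * width / height))


def stretch_factor(width, height, assumed_aspect=16 / 9):
    """Horizontal distortion when a game renders for assumed_aspect
    but the image is scaled to fill a width x height surface."""
    return (width / height) / assumed_aspect


if __name__ == "__main__":
    vfov = 60  # assumed vertical FOV, not taken from any game in the article
    print(hor_plus_hfov(vfov, 1920, 1200))  # single panel
    print(hor_plus_hfov(vfov, 5760, 1200))  # three-panel surface
    print(stretch_factor(5760, 1200))       # 16:9 image stretched to fit
```

With that assumed 60 degree vertical FOV, a single 1920 x 1200 panel shows roughly 85 degrees horizontally, and the 5760 x 1200 surface roughly 140 degrees. A game that instead renders a 16:9 view and stretches it to fill the surface draws everything about 2.7 times wider than intended.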

Despite Oblivion's support for ultra wide aspect ratio gaming, by default the game stretches to occupy all horizontal resolution

Other games have their own quirks. Resident Evil 5 correctly identified the resolution but appeared to maintain a 16:9 aspect ratio without stretching. In other words, while my display was only 1200 pixels high, the game rendered as if it were 3240 pixels high and only fit what it could onto my screens. This resulted in unusable menus and a game that wasn't actually playable once you got into it.
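The 3240 figure follows directly from holding 16:9 at a width of 5760 pixels. A quick Python sketch of that arithmetic (the helper names are made up for this example):

```python
def implied_height(width, aspect_w=16, aspect_h=9):
    """Height a frame would need to keep aspect_w:aspect_h at this width."""
    return width * aspect_h / aspect_w


def visible_fraction(width, actual_height, aspect_w=16, aspect_h=9):
    """Share of that frame's height that fits on the actual surface."""
    return actual_height / implied_height(width, aspect_w, aspect_h)


if __name__ == "__main__":
    print(implied_height(5760))          # height needed for 16:9
    print(visible_fraction(5760, 1200))  # share that fits in 1200 rows
```

At 5760 pixels wide, a 16:9 frame needs 3240 rows, so a 1200-pixel-high surface can show only about 37% of its height, which is consistent with menus running off the screen.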

Games with pre-rendered cutscenes generally don't mesh well with Eyefinity either. In fact, anything that's not rendered on the fly tends to only occupy the middle portion of the screens. Game menus are a perfect example of this:

There are other issues with Eyefinity that go beyond just properly taking advantage of the resolution. While the three-monitor setup pictured above is great for games, it's not ideal in Windows. You'd want your main screen to be the one in the center, however since it's a single large display your start menu would actually appear on the leftmost panel. The same applies to games that have a HUD located in the lower left or lower right corners of the display. In Oblivion your health, magic and endurance bars all appear in the lower left, which in the case above means that the far left corner of the left panel is where you have to look for your vitals. Given that each panel is nearly two feet wide, that's a pretty far distance to look.

The biggest issue that everyone worried about was bezel thickness hurting the experience. To be honest, bezel thickness was only an issue for me when I oriented the monitors in portrait mode. Sitting close to an array of wide enough panels, the bezel thickness isn't that big of a deal. Which brings me to the next point: immersion.

The game that sold me on Eyefinity was actually one that I don't play: World of Warcraft. The game handled the ultra wide resolution perfectly: it didn't stretch any content, it just expanded my viewport. With the left and right displays tilted inwards slightly, WoW was more immersive. It's not so much that I could see what was going on around me, but that whenever I moved forward I had the game world in more of my peripheral vision than I usually do. Running through a field felt more like running through a field, since there was more field in my vision. It's the only example where I actually felt like this was the first step towards the holy grail of creating the Holodeck. The effect was pretty impressive, although costly given that I only really attained it in a single game.

Before using Eyefinity for myself I thought I would hate the bezel thickness of the Dell U2410 monitors and I felt that the experience wouldn't be any more engaging. I was wrong on both counts, but I was also wrong to assume that all games would just work perfectly. Out of the four that I tried, only WoW worked flawlessly - the rest either had issues rendering at the unusually wide resolution or simply stretched the content and didn't give me as much additional viewspace to really make the feature useful. Will this all change given that in six months ATI's entire graphics lineup will support three displays? I'd say that's more than likely. The last company to attempt something similar was Matrox and it unfortunately didn't have the graphics horsepower to back it up.

The Radeon HD 5870 itself is fast enough to render many games at 5760 x 1200 even at full detail settings. I managed 48 fps in World of Warcraft and a staggering 66 fps in Batman Arkham Asylum without AA enabled. It's absolutely playable.
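For a sense of scale, the frame rates above can be turned into raw pixel counts. This is a back-of-the-envelope Python sketch only; the 2560 x 1600 comparison resolution is an assumption (a typical 30" panel resolution, not a figure from the tests above), and pixels per second is a crude measure that ignores everything else a game does per frame.

```python
def pixels_per_frame(width, height):
    """Number of pixels in one rendered frame."""
    return width * height


def pixels_per_second(width, height, fps):
    """Pixels rendered per second at a given frame rate."""
    return pixels_per_frame(width, height) * fps


if __name__ == "__main__":
    eyefinity = pixels_per_frame(5760, 1200)
    single_30 = pixels_per_frame(2560, 1600)  # assumed 30" panel resolution
    print(eyefinity, eyefinity / single_30)
    print(pixels_per_second(5760, 1200, 48))  # World of Warcraft result
    print(pixels_per_second(5760, 1200, 66))  # Batman Arkham Asylum result
```

Each 5760 x 1200 frame is about 6.9 million pixels, roughly 1.7 times the pixel count of a 2560 x 1600 frame.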

327 Comments


dieselcat18 - Saturday, October 3, 2009

It truly amazes me that AnandTech allows a Troll like you to keep posting...but there is always one moron that comes to a forum like this and shows his a** to the world...So we all know it to be you...nice work not bringing anything resembling an intelligent discussion to the table..Oh and please don't tell me what it is that I bring to the conversation...my thoughts about this topic have nothing to do with my reply to you about your vulgar manner and lack of respect for anyone that has a difference of opinion.

Oh and as for your paper launch...well sites like Newegg were sold out immediately because of the overwhelming demand for this card and I'll bet you anything there are cards available and in good supply at this very moment...Why don't you take a look and give us all another update.....I guess having that big "L" stamped on your forehead sums it up.....

SiliconDoc - Wednesday, September 23, 2009

No, they didn't, because the 5870's just showed up last night, 4 of them, and just a bit ago the ONE of them actually became "available", the Powercolor brand.

The other three 5870's are NOT AVAILABLE but are listed....

So "ATI paper launch" is the key idea here (for non red roosters).

1:43 PM CST, Wed. Sept. 23rd, 2009.

---

Yes, I watched them appear on the egg last night(I'm such a red fanboy I even love paper launches)... LOL

crimson117 - Wednesday, September 23, 2009

Current cheapest GTX 295 at Newegg is $469.99.

http://www.newegg.com/Product/Product.aspx?Item=N8...

B3an - Wednesday, September 23, 2009

Ryan, on your AA page, you have an example of the unofficial Nvidia SSAA where the tree branches have gone missing in HL2, and you say that because of this it's not suitable for general use.

But for both the ATI pics, on either MSAA or SSAA, the tree branches are missing as well. Did you not notice this? Because you do not comment on it.

Either way it looks like ATI AA is still worse, or there is a bug.

Ryan Smith - Wednesday, September 23, 2009

We used the same save game, but not the same computer. These were separate issues we were chasing down at the same time, so they're not meant to be comparable. In this case I believe some of the shots were at 1600x1200, and others were at 1680x1050. The result of which is that the widescreen shots are effectively back a bit farther due to the use of the same FOV at all times in HL2.

As you'll see in our Crysis shots, there's no difference. I can look into this issue later however, if you'd like.

chizow - Wednesday, September 23, 2009

Really enjoyed the discussion of the architecture, new features, DX11, Compute Shaders, the new AF algorithm and the reintroduction of SSAA on ATI parts.

As for the card itself, it's definitely impressive for a single-GPU but the muted enthusiasm in your conclusion seems justified. It's not the definite leader for single-card performance as the 295 is still consistently faster and the 5870 even fails to consistently outperform its own predecessor, the 4870X2.

Its scaling problems are really odd given its internals and overall specs seem to indicate it's just RV790 CF on a single die, yet it scales worse than the previous generation in CF. I'd say you're probably onto something thinking AMD underestimated the 5870's bandwidth requirements.

Anyways, nice card and nice effort from AMD, even if its stay at the top is short-lived. AMD did a better job pricing this time around and will undoubtedly enjoy high sales volume with little competition in the coming months with Win 7's launch, up until Nvidia is able to counter with GT300.

chizow - Wednesday, September 23, 2009

Holy....lol

I didn't even realize til I read another comment that Ryan Smith wrote this and not Anand/Derek collaboration. That's a compliment btw, it read very Anand-esque the entire time! ;-) Really enjoyed it similar to some of your earlier efforts like the 3-part Vista memory investigation.

formulav8 - Wednesday, September 23, 2009

I wouldn't be surprised if most of us already knew what was going to take place with performance and what-not. But it's still a nice card whether I knew the specs before its official release or not. (And viewed many purposely leaked benches). :)

Jason

PJABBER - Wednesday, September 23, 2009

Another fine review and nice to see it hit today. Your reviews are one reason I keep coming back to AT!

Unfortunately, at MSRP the 5870 doesn't offer enough for me to move past the 4890 I am currently using, and bought for $130 during one of the sales streaks a month or so ago. Will re-evaluate when we actually start seeing price drops and/or DX11 games hit the shelves.

wicko - Wednesday, September 23, 2009

It would have been nice to see 4890 in CF against 5870 in CF. 500$ spent vs 800$ spent :p