AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST, posted in GPUs

Eyefinity

Somewhere around 2006 - 2007 ATI was working on the overall specifications for what would eventually turn into the RV870 GPU. These GPUs are designed by combining the views of ATI's engineers with the demands of the developers, end-users and OEMs. In the case of Eyefinity, the initial demand came directly from the OEMs.

ATI was working on the mobile version of its RV870 architecture and realized that, at the request of OEMs, the chip already had a number of DisplayPort (DP) outputs. The OEMs wanted up to six DP outputs from the GPU, but with only two active at a time. The six came from two for internal panel use (in case an OEM wanted to build a dual-monitor notebook, which has since happened), two for external outputs (one DP and one DVI/VGA/HDMI, for example), and two for passing through to a docking station. Since only two had to be active at once, the GPU had six sets of DP lanes but only enough display engines to drive two simultaneously.

ATI looked at the effort required to enable all six outputs at the same time and decided to do it, so the RV870 GPU can drive a maximum of six displays simultaneously. Not all cards support this, since a card first needs the requisite number of display outputs. The standard Radeon HD 5870 can drive three outputs simultaneously: any combination of its DVI and HDMI ports for up to two monitors, plus a DisplayPort output that is independent of DVI/HDMI. Later this year you'll see a version of the card with six mini-DisplayPort outputs for driving six monitors.
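The standard HD 5870's output rule can be written down as a simple check. This is a minimal sketch that encodes only the rule as stated above (at most two displays across the DVI/HDMI ports, plus one independent DisplayPort output); the function name `can_drive` is hypothetical and this is not how AMD's driver actually validates configurations.

```python
def can_drive(dvi_hdmi_active: int, dp_active: int) -> bool:
    """Return True if a standard HD 5870 could drive this mix of displays.

    Hypothetical helper modelling the rule described in the text: the DVI
    and HDMI ports together drive at most two monitors, and the single
    DisplayPort output is independent of them (three displays maximum).
    """
    if dvi_hdmi_active < 0 or dp_active < 0:
        raise ValueError("output counts must be non-negative")
    return dvi_hdmi_active <= 2 and dp_active <= 1


# The three-display test setup used in this article: DVI + HDMI + DisplayPort.
print(can_drive(dvi_hdmi_active=2, dp_active=1))  # True
# Three monitors on DVI/HDMI alone would exceed the two-display limit.
print(can_drive(dvi_hdmi_active=3, dp_active=0))  # False
```

The six-output mini-DisplayPort card mentioned above would need a different rule; this sketch covers only the standard board.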

It's not just hardware; there's a software component as well. The Radeon HD 5000 series driver lets you combine all of these display outputs into a single large surface that Windows and your games see as one display with a tremendous resolution.

I set up a group of three Dell 24" displays (U2410s). This isn't exactly what Eyefinity was designed for since each display costs $600, but the point is that you could group three $200 1920 x 1080 panels together and potentially have a more immersive gaming experience (for less money) than a single 30" panel.
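A quick pixel count shows what the three-panel group buys you over a single big screen. This is a rough sketch that assumes the 2560 x 1600 resolution typical of 30" panels at the time (the article doesn't state it) and counts only displayed pixels, not physical size or bezels.

```python
# Panel resolutions used in the comparison; the 30-inch figure is an assumption.
PANEL_1080P = (1920, 1080)
PANEL_U2410 = (1920, 1200)
PANEL_30IN = (2560, 1600)


def total_pixels(width: int, height: int, count: int = 1) -> int:
    """Pixels across `count` identical panels placed side by side."""
    return width * height * count


configs = {
    "3 x 1920x1080": total_pixels(*PANEL_1080P, count=3),
    "3 x 1920x1200 (U2410)": total_pixels(*PANEL_U2410, count=3),
    "1 x 2560x1600 (30-inch)": total_pixels(*PANEL_30IN),
}

for name, pixels in configs.items():
    print(f"{name}: {pixels:,} pixels")
# 3 x 1920x1080: 6,220,800 pixels
# 3 x 1920x1200 (U2410): 6,912,000 pixels
# 1 x 2560x1600 (30-inch): 4,096,000 pixels
```

Even the cheaper 1080p trio has roughly 1.5x the pixels of a single 30" panel.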

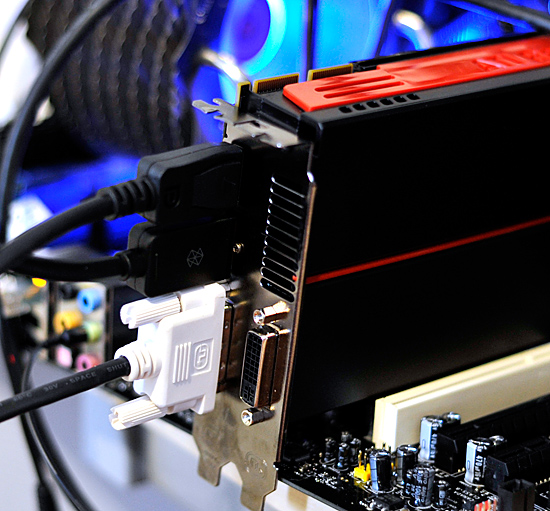

For our Eyefinity tests I chose to use every type of output on the card, one each of DVI, HDMI and DisplayPort:

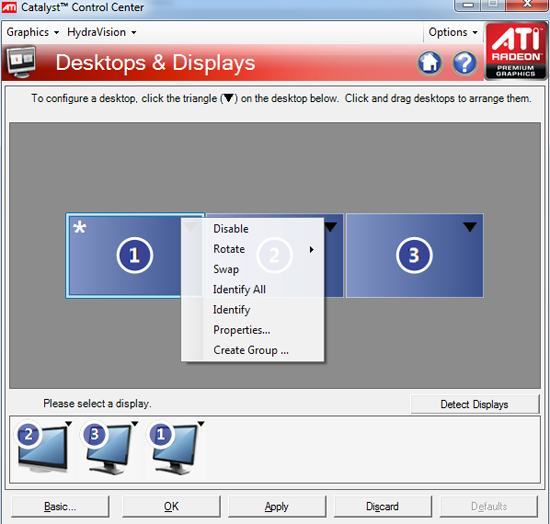

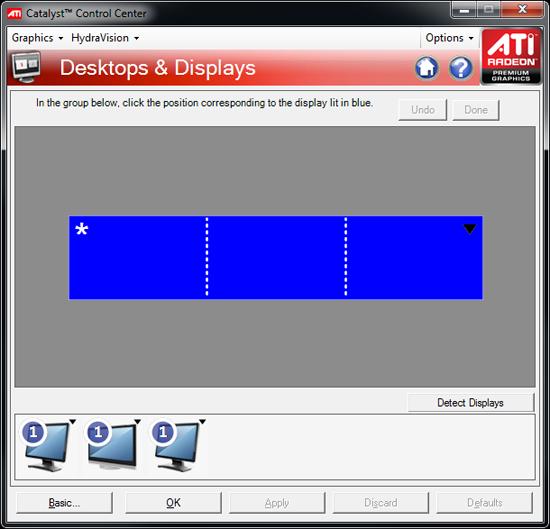

With all three outputs connected, Windows defaults to cloning the display across all monitors. Going into ATI's Catalyst Control Center lets you configure your Eyefinity groups:

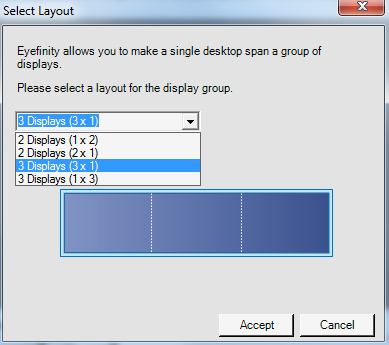

With three displays connected I could create a single 1x3 or 3x1 arrangement of displays. I also had the ability to rotate the displays first so they were in portrait mode.
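The resolution of the combined surface follows directly from the grid shape and the panel orientation. A minimal sketch, assuming identical panels and reading "3x1" as three columns by one row (the driver's own naming may differ); rotating to portrait simply swaps each panel's width and height before tiling. The function name `group_resolution` is hypothetical.

```python
def group_resolution(cols: int, rows: int, panel_w: int, panel_h: int,
                     portrait: bool = False) -> tuple[int, int]:
    """Resolution of a cols x rows group of identical panels.

    Hypothetical helper: portrait mode swaps each panel's width and height
    before the panels are tiled into one surface.
    """
    if portrait:
        panel_w, panel_h = panel_h, panel_w
    return cols * panel_w, rows * panel_h


# Three Dell U2410s (1920 x 1200 each):
print(group_resolution(3, 1, 1920, 1200))                 # (5760, 1200) side by side
print(group_resolution(3, 1, 1920, 1200, portrait=True))  # (3600, 1920) rotated
print(group_resolution(1, 3, 1920, 1200))                 # (1920, 3600) stacked
```

Portrait mode trades width for height, and it doubles the number of vertical bezel seams per unit of width, which is consistent with the bezels being more noticeable in that orientation.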

You can create smaller groups, although the ability to do so disappeared after I created my first Eyefinity setup (even after deleting it and trying to recreate it). Once you've selected the type of Eyefinity display you'd like to create, the driver will make a guess as to the arrangement of your panels.

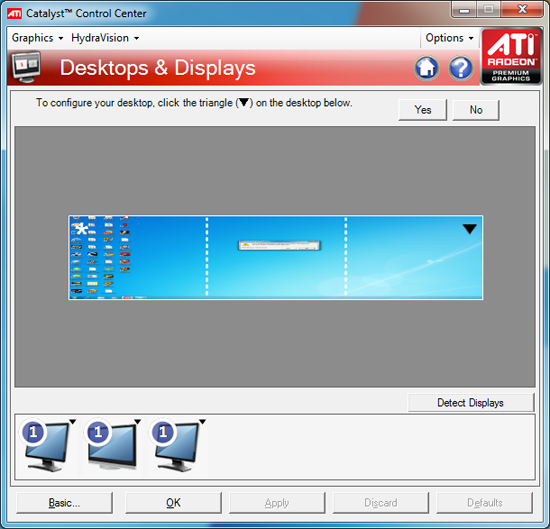

If it guessed correctly, just click Yes and you're good to go. Otherwise ATI has a handy way of determining the location of your monitors:

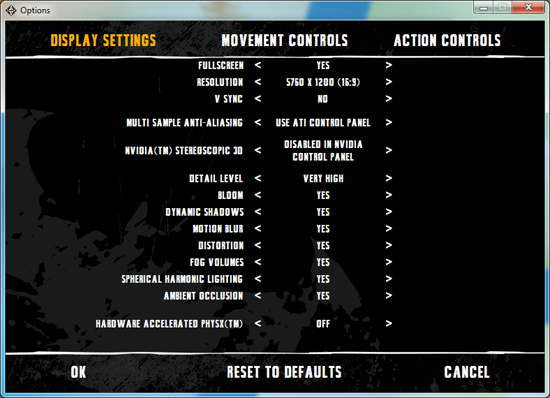

With the software side taken care of, you now have a Single Large Surface as ATI likes to call it. The display appears as one contiguous panel with a ridiculous resolution to the OS and all applications/games:

Three 24" panels in a row give us 5760 x 1200

The screenshot above should clue you into the first problem with an Eyefinity setup: aspect ratio. While the Windows desktop simply expands to give you more screen real estate, some games may not increase how much you can see; they may just stretch the viewport to fill all of the horizontal resolution. Batman: Arkham Asylum lists the resolution correctly, but not the aspect ratio (5760:1200 works out to 4.8:1, nowhere near 16:9). In these situations my Eyefinity setup made me feel downright sick: the weird stretching of characters as they moved toward the outer edges of my vision left me feeling ill.
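To see why stretching is so jarring, compare a game that keeps its vertical field of view fixed and widens the horizontal one (often called "Hor+") with one that renders a 16:9 view and stretches it across the surface. This is a sketch using the standard perspective-projection relationship; the 60-degree vertical FOV is an arbitrary assumption, not a value from any of the games tested.

```python
import math


def horizontal_fov(vertical_fov_deg: float, aspect: float) -> float:
    """Horizontal FOV in degrees for a perspective view with a fixed vertical FOV."""
    half_v = math.radians(vertical_fov_deg) / 2
    return math.degrees(2 * math.atan(math.tan(half_v) * aspect))


VFOV = 60.0            # assumed vertical field of view, for illustration only
WIDE = 5760 / 1200     # 4.8:1 Eyefinity surface
HD = 16 / 9            # what many games assume

print(f"16:9 view: {horizontal_fov(VFOV, HD):.1f} deg wide")
print(f"4.8:1 view (Hor+): {horizontal_fov(VFOV, WIDE):.1f} deg wide")
# A game that keeps the 16:9 view and stretches it instead distorts
# everything horizontally by this factor:
print(f"stretch factor: {WIDE / HD:.2f}x")  # stretch factor: 2.70x
```

A Hor+ game shows more of the world at the sides; a stretching game shows the same world 2.7 times wider, which is exactly the distortion described above.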

Despite Oblivion's support for ultra-wide aspect ratio gaming, by default the game stretches to occupy all of the horizontal resolution

Other games have their own quirks. Resident Evil 5 correctly identified the resolution but appeared to maintain a 16:9 aspect ratio without stretching. In other words, while my display was only 1200 pixels high, the game rendered as if it were 3240 pixels high and only fit what it could onto my screens. This resulted in unusable menus and a game that wasn't actually playable once you got into it.
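The 3240-pixel figure comes from keeping the full 5760-pixel width at 16:9. A minimal sketch of that arithmetic, using exact fractions; it only computes how many rows fit, not which part of the frame the game actually showed.

```python
from fractions import Fraction


def fixed_aspect_height(width: int, aspect: Fraction = Fraction(16, 9)) -> Fraction:
    """Height needed for a frame `width` pixels wide to keep the given aspect ratio."""
    return Fraction(width) / aspect


width, screen_height = 5760, 1200
needed = fixed_aspect_height(width)         # 5760 * 9 / 16
visible = Fraction(screen_height) / needed  # share of those rows the screens can show

print(f"rendered height: {needed} px")      # rendered height: 3240 px
print(f"visible rows: {float(visible):.1%}")  # visible rows: 37.0%
```

With only about 37% of the rendered rows on screen, menus and HUD elements placed near the top or bottom of the frame can end up off-screen entirely.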

Games with pre-rendered cutscenes generally don't mesh well with Eyefinity either. In fact, anything that's not rendered on the fly tends to only occupy the middle portion of the screens. Game menus are a perfect example of this:
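Fixed 16:9 content such as a video cutscene is typically scaled to the screen height and centered, which is why it sits in the middle of the group. A sketch assuming aspect-preserving "fit and center" scaling with pillarbox bars; individual games may scale their menus and videos differently. The function name `fit_centered` is hypothetical.

```python
def fit_centered(src_w: int, src_h: int, dst_w: int, dst_h: int) -> tuple[int, int, int, int]:
    """Fit a src_w x src_h image inside dst_w x dst_h without distortion, centered.

    Hypothetical helper; returns (x, y, width, height) of the placed image.
    """
    scale = min(dst_w / src_w, dst_h / src_h)
    w, h = round(src_w * scale), round(src_h * scale)
    return (dst_w - w) // 2, (dst_h - h) // 2, w, h


# A 1920 x 1080 cutscene on the 5760 x 1200 surface:
x, y, w, h = fit_centered(1920, 1080, 5760, 1200)
print(x, y, w, h)  # 1813 0 2133 1200
```

The video spans pixels 1813 to 3945: essentially the whole center panel plus a sliver of each side panel, with over 1800 pixels of black on either side.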

There are other issues with Eyefinity beyond properly taking advantage of the resolution. While the three-monitor setup pictured above is great for games, it's not ideal in Windows. You'd want your main screen to be the center one; however, since it's a single large display, your Start menu actually appears on the leftmost panel. The same applies to games that place a HUD in the lower-left or lower-right corner of the display. In Oblivion your health, magic and endurance bars all appear in the lower left, which in the setup above means you have to look to the far left corner of the left panel for your vitals. Given that each panel is nearly two feet wide, that's a long way to look.
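Because the driver presents one 5760-pixel-wide surface, anything anchored at x = 0 (the Start button, a lower-left HUD) lands on the leftmost panel. A sketch mapping a horizontal coordinate to a panel, assuming three 1920-pixel-wide panels in landscape and no bezel compensation; `panel_for_x` is a hypothetical name.

```python
PANEL_WIDTH = 1920
PANELS = ("left", "center", "right")


def panel_for_x(x: int) -> str:
    """Which physical panel shows horizontal pixel x of the single large surface."""
    if not 0 <= x < PANEL_WIDTH * len(PANELS):
        raise ValueError(f"x={x} is outside the surface")
    return PANELS[x // PANEL_WIDTH]


print(panel_for_x(0))     # left: Start button and lower-left HUD elements
print(panel_for_x(2880))  # center: the middle of the surface, where you're looking
print(panel_for_x(5759))  # right: lower-right HUD elements
```

The gap between a lower-left HUD and the center of your view is 2880 pixels, a panel and a half.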

The biggest worry everyone had was that bezel thickness would hurt the experience. To be honest, bezel thickness was only an issue for me when I oriented the monitors in portrait mode. When you sit close to an array of sufficiently wide panels, the bezels aren't that big of a deal. Which brings me to the next point: immersion.

The game that sold me on Eyefinity was actually one that I don't play: World of Warcraft. The game handled the ultra-wide resolution perfectly; it didn't stretch any content, it just expanded my viewport. With the left and right displays tilted slightly inward, WoW was more immersive. It's not so much that I could see what was going on around me, but that whenever I moved forward I had more of the game world in my peripheral vision than I usually do. Running through a field felt more like running through a field, since there was more field in my vision. It's the only example where I actually felt like this was the first step toward the holy grail of creating the Holodeck. The effect was pretty impressive, although costly given that I only really attained it in a single game.

Before using Eyefinity for myself I thought I would hate the bezel thickness of the Dell U2410 monitors, and I felt that the experience wouldn't be any more engaging. I was wrong on both counts, but I was also wrong to assume that all games would just work perfectly. Out of the four that I tried, only WoW worked flawlessly; the rest either had issues rendering at the unusually wide resolution or simply stretched the content and didn't give me enough additional view space to make the feature useful. Will this all change given that in six months ATI's entire graphics lineup will support three displays? I'd say that's more than likely. The last company to attempt something similar was Matrox, and it unfortunately didn't have the graphics horsepower to back it up.

The Radeon HD 5870 itself is fast enough to render many games at 5760 x 1200 even at full detail settings. I managed 48 fps in World of Warcraft and a staggering 66 fps in Batman Arkham Asylum without AA enabled. It's absolutely playable.
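To put those frame rates in perspective, here is the rendered-pixel throughput they imply, compared with a single 1920 x 1200 panel at 60 fps. This is a rough sketch: it counts only final displayed pixels and ignores overdraw, shading cost and anti-aliasing, so it is not a measure of actual GPU work.

```python
def pixels_per_second(width: int, height: int, fps: float) -> float:
    """Final displayed pixels per second at a given resolution and frame rate."""
    return width * height * fps


SURFACE = (5760, 1200)                                             # three U2410s side by side
RESULTS = {"World of Warcraft": 48, "Batman: Arkham Asylum": 66}   # fps from the text
BASELINE = pixels_per_second(1920, 1200, 60)                       # one panel at 60 fps

for game, fps in RESULTS.items():
    rate = pixels_per_second(*SURFACE, fps)
    print(f"{game}: {rate / 1e6:.0f} Mpixels/s, {rate / BASELINE:.1f}x one panel at 60 fps")
# World of Warcraft: 332 Mpixels/s, 2.4x one panel at 60 fps
# Batman: Arkham Asylum: 456 Mpixels/s, 3.3x one panel at 60 fps
```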

327 Comments



shaolin95 - Wednesday, October 7, 2009

So Eyefinity may use 100 monitors, but if we are still gaming on a flat plane then it makes no difference to me. Come on ATI, go with real 3D games already... been waiting since the Radeon 64 SE days for you to get on with it.... :-(

GTX 295 for this boy as it is the only way to real 3D on a 60" DLP.

Nice that they have a fast product at good prices to keep the competition going. If either company goes down we all lose so support them both! :-)

Regards

raptorrage - Tuesday, October 6, 2009

Wow, what a joke this review is. By that I mean the reviewer's stance on the 5870 sounds like he's an Nvidia fan. Just because it's what, 2-3 fps off the GTX 295, doesn't mean it can't catch that GPU as driver updates come out and let it actually compete. And if I remember correctly, wasn't the GTX 295 the same when it came out? It was good, but it wasn't where we all thought it should have been, then BAM, a few months go by and it finds the performance it was missing. I don't know if this was a fail on AnandTech's part or in the testing practices, but I question them, as I've read many other review sites and they had a clear view that the 5870 and GTX 295 were neck and neck, which I've seen first hand. So I'll go ahead and state it here: they're head to head at 1920x1200. At 2560x1600 the dual-GPU cards do take the top slot, which is expected, but as I see it the margin isn't as big.

And clearly he missed the whole point: YES, the 5870 does compete with the GTX 295. I just believe your testing practices come into question here, because I've seen many sites that didn't form the opinion you have here. It seems completely dismissive, like AMD has failed, and I just don't see that, in my opinion. I'll just take this review with a grain of salt, as it's completely meaningless.

dieselcat18 - Saturday, October 3, 2009

@Silicon Doc: Nvidia fan-boy, troll, loser....take your GeForce cards and go home...we can now all see how terrible ATi is, thanks to you...so I really don't understand why people are beating down their doors for the 5800 series, just like people did for the 4800 and 3800 cards. I guess Nvidia fan-boy trolls like you have only one thing left to do, and that's complain and cry like the itty-bitty babies that some of you are about the competition that's beating you like a drum.....so you just wait for your 300 series cards to be released (can't wait to see how many of those are available) so you can pay the overpriced premiums that Nvidia will be charging AGAIN!...hahaha...just like all that re-badging BS they pulled with the 9800 and 200 cards...what a joke!.. Oh my, I must say you have me in a mood, and the ironic thing is I do like Nvidia as much as ATi; I currently own and use both. I just can't stand fools like you who spout nothing but mindless crap while waving your team flag (my card is better than yours..WhaaWhaaWhaa)...just take yourself along with your worthless opinions and slide back under that slimy rock you came from.


Scali - Thursday, October 1, 2009

I have the GPU Computing SDK as well, and I ran the Ocean test on my 8800GTS320. I got 40 fps with the card at stock, with 4xAA and 16xAF on. Fullscreen or windowed didn't matter. How can your score be only 47 fps on the GTX285? And why does the screenshot say 157 fps on a GTX280?

157 fps is more along the lines of what I'd expect than 47 fps, given the performance of my 8800GTS.

Ryan Smith - Thursday, October 1, 2009

Full screen, 2560x1600 with everything cranked up. At that resolution, it can be a very rough benchmark. The screenshot you're seeing is just something we took in windowed mode with the resolution turned way down so that we could fit a full-sized screenshot of the program into our document engine.

Scali - Friday, October 2, 2009

I've just checked the source code and experimented a bit with changing some constants. The CS part always uses a hardcoded dimension of 512, so it's not related to the screen size.

So the CS load is constant: the larger you make the window, the less you're measuring GPGPU performance, since it becomes graphics-limited.

Technically you should make the window as small as possible to get a decent GPGPU benchmark, not as large as possible.

Scali - Friday, October 2, 2009

Hum, I wonder what you're measuring, though. I'd have to study the code to see whether higher resolutions increase only the onscreen polycount, or also the GPGPU part of generating it.

Scali - Thursday, October 1, 2009

That's 152 fps, not 157, sorry.