Intel's Core i7 870 & i5 750, Lynnfield: Harder, Better, Faster Stronger

by Anand Lal Shimpi on September 8, 2009 12:00 AM EST - Posted in CPUs

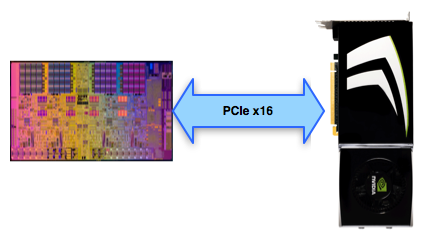

Multi-GPU SLI/CF Scaling: Lynnfield's Blemish

When running in single-GPU mode, the on-die PCIe controller maintains a full x16 connection to your graphics card:

Hooray.

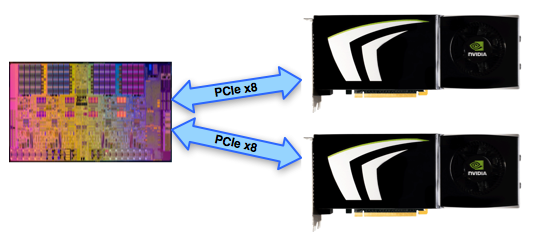

In multi-GPU mode, the 16 lanes have to be split in two:

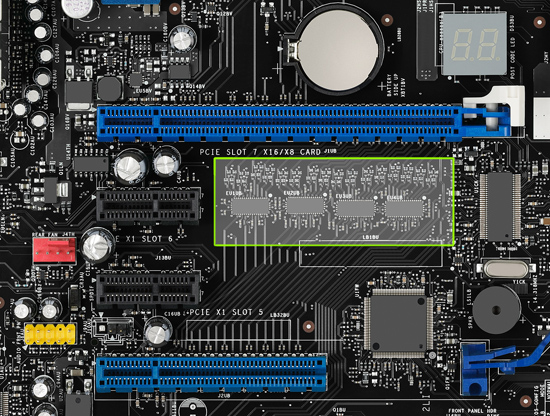

To support this, the motherboard maker needs to put down roughly $3 worth of PCIe switches.

Now SLI and Crossfire can work, although the motherboard maker also needs to pay NVIDIA a few dollars to legally make SLI work.

The question is: do you give up any performance when going with Lynnfield's 2 x8 implementation vs. Bloomfield/X58's 2 x16 PCIe configuration? In short, at the high end, yes.
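For a rough sense of what the split costs in raw bandwidth, here is a minimal sketch. It assumes PCIe 2.0 signaling (5 GT/s per lane with 8b/10b encoding), which is not stated above, and the function name is purely illustrative. The results are theoretical per-direction peaks, not measured throughput.

```python
# Theoretical per-direction PCIe bandwidth for a given link width.
# Assumption (not from the article): PCIe 2.0 signaling, i.e. 5 GT/s per lane
# with 8b/10b encoding, so only 8 of every 10 transferred bits carry payload.

GT_PER_S_PER_LANE = 5.0        # assumed PCIe 2.0 transfer rate per lane
ENCODING_EFFICIENCY = 8 / 10   # 8b/10b line code


def link_bandwidth_gb_s(lanes: int) -> float:
    """Return the peak one-way bandwidth in GB/s for a link with `lanes` lanes."""
    gbit_per_s = lanes * GT_PER_S_PER_LANE * ENCODING_EFFICIENCY
    return gbit_per_s / 8  # bits -> bytes


if __name__ == "__main__":
    for lanes in (16, 8):
        print(f"x{lanes}: {link_bandwidth_gb_s(lanes):.1f} GB/s per direction")
```

Under those assumptions each GPU on a 2 x8 split has half the peak link bandwidth of a GPU on a full x16 slot, which only matters when a game actually moves enough data across the bus to approach that ceiling.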

I looked at scaling in two games that scaled the best with multiple GPUs: Crysis Warhead and FarCry 2. I ran all settings at their max, resolution at 2560 x 1600 but with no AA.

I included two multi-GPU configurations. A pair of GeForce GTX 275s from EVGA for NVIDIA:

A coupla GPUs and a few cores can go a long way

And to really stress things, I looked at two Radeon HD 4870 X2s from Sapphire. Note that each card has two GPUs, so this is actually a 4-GPU configuration, enough to push a PCIe x8 interface hard.

First, the dual-GPU results from NVIDIA.

| NVIDIA GeForce GTX 275 | Crysis Warhead (ambush) | Crysis Warhead (avalanche) | Crysis Warhead (frost) | FarCry 2 Playback Demo Action |
| --- | --- | --- | --- | --- |
| Intel Core i7 975 (X58) - 1 GPU | 20.8 fps | 23.0 fps | 21.4 fps | 41.0 fps |
| Intel Core i7 870 (P55) - 1 GPU | 20.8 fps | 22.9 fps | 21.5 fps | 40.5 fps |
| Intel Core i7 975 (X58) - 2 GPUs | 38.4 fps | 42.3 fps | 38.0 fps | 73.2 fps |
| Intel Core i7 870 (P55) - 2 GPUs | 38.0 fps | 41.9 fps | 37.4 fps | 65.9 fps |

The important data is in the next table. What you're looking at here is the % speedup from one to two GPUs on X58 vs. P55. In theory, X58 should have higher percentages because each GPU gets 16 PCIe lanes while Lynnfield only provides 8 per GPU.

| GTX 275 -> GTX 275 SLI Scaling | Crysis Warhead (ambush) | Crysis Warhead (avalanche) | Crysis Warhead (frost) | FarCry 2 Playback Demo Action |
| --- | --- | --- | --- | --- |
| Intel Core i7 975 (X58) | 84.6% | 83.9% | 77.6% | 78.5% |
| Intel Core i7 870 (P55) | 82.7% | 83.0% | 74.0% | 62.7% |

For the most part, the X58 platform was only a couple of percentage points better in scaling. That changes with the Far Cry 2 results, where X58 manages 78.5% scaling while P55 only delivers 62.7%. It's clearly not the most common case, but it can happen. If you're going to be building a high-end dual-GPU setup, X58 is probably worth it.
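The scaling percentages are simply the relative gain of the two-GPU frame rate over the one-GPU frame rate. As a minimal sketch, the Far Cry 2 column can be reproduced from the frame rates in the first GTX 275 table. The function and variable names are illustrative only.

```python
# Percent speedup from one GPU to two, using the GTX 275 frame rates above.

def scaling_percent(single_fps: float, multi_fps: float) -> float:
    """Return the percentage gain of multi_fps over single_fps."""
    return (multi_fps / single_fps - 1.0) * 100.0


# (1 GPU fps, 2 GPU fps) for the FarCry 2 Playback Demo Action run
far_cry_2 = {
    "Core i7 975 (X58)": (41.0, 73.2),
    "Core i7 870 (P55)": (40.5, 65.9),
}

if __name__ == "__main__":
    for platform, (one_gpu, two_gpus) in far_cry_2.items():
        print(f"{platform}: {scaling_percent(one_gpu, two_gpus):.1f}%")
    # Prints 78.5% for X58 and 62.7% for P55.
```

A perfectly scaling second GPU would show 100% here, so both platforms fall short of ideal and the gap between them is the part attributable to the platform.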

Next, the quad-GPU results from AMD:

| AMD Radeon HD 4870 X2 | Crysis Warhead (ambush) | Crysis Warhead (avalanche) | Crysis Warhead (frost) | FarCry 2 Playback Demo Action |
| --- | --- | --- | --- | --- |
| Intel Core i7 975 (X58) - 2 GPUs | 25.8 fps | 31.3 fps | 27.0 fps | 70.9 fps |
| Intel Core i7 870 (P55) - 2 GPUs | 24.4 fps | 31.1 fps | 26.6 fps | 71.4 fps |
| Intel Core i7 975 (X58) - 4 GPUs | 27.0 fps | 57.4 fps | 47.9 fps | 117.9 fps |
| Intel Core i7 870 (P55) - 4 GPUs | 24.2 fps | 50.0 fps | 36.5 fps | 116 fps |

Again, what we really care about is the scaling. Note how performance with a single card is close between Bloomfield and Lynnfield, but performance with both cards installed is noticeably lower on Lynnfield. This isn't going to be good:

| 4870 X2 -> 4870 X2 CF Scaling | Crysis Warhead (ambush) | Crysis Warhead (avalanche) | Crysis Warhead (frost) | FarCry 2 Playback Demo Action |
| --- | --- | --- | --- | --- |
| Intel Core i7 975 (X58) | 4.7% | 83.4% | 77.4% | 66.3% |
| Intel Core i7 870 (P55) | -1.0% | 60.8% | 37.2% | 62.5% |

Ouch. Maybe Lynnfield is human after all. Almost across the board, the quad-GPU results significantly favor X58. It makes sense given how data-hungry these GPUs are. Again, the conclusion here is that for a high-end multi-GPU setup you'll want to go with X58/Bloomfield.

A Quick Look at GPU Limited Gaming

With all of our CPU reviews we try to strike a balance between CPU and GPU limited game tests in order to show which CPU is truly faster at running game code. In fact all of our CPU tests are designed to figure out which CPUs are best at a number of tasks.

However, the vast majority of games today will be limited by whatever graphics card you have in your system. The performance differences we talked about earlier will all but disappear in these scenarios. Allow me to present data from Crysis Warhead running at 2560 x 1600 with maximum quality settings:

| NVIDIA GeForce GTX 275 | Crysis Warhead (ambush) | Crysis Warhead (avalanche) | Crysis Warhead (frost) |
| --- | --- | --- | --- |
| Intel Core i7 975 | 20.8 fps | 23.0 fps | 21.4 fps |
| Intel Core i7 870 | 20.8 fps | 22.9 fps | 21.5 fps |
| AMD Phenom II X4 965 BE | 20.9 fps | 23.0 fps | 21.5 fps |

They're all the same. This shouldn't come as a surprise to anyone; it's always been the case. Any CPU near the high end, when faced with the same GPU bottleneck, will perform the same in game.
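To put a number on "the same", here is a minimal sketch that computes the gap between the fastest and slowest CPU in each run, using the frame rates from the table above. The helper name is illustrative, and whether a gap this small is within run-to-run variance is an assumption rather than something measured here.

```python
# Relative spread between the fastest and slowest CPU in each GPU-limited run.

results_fps = {
    "ambush": {"Core i7 975": 20.8, "Core i7 870": 20.8, "Phenom II X4 965 BE": 20.9},
    "avalanche": {"Core i7 975": 23.0, "Core i7 870": 22.9, "Phenom II X4 965 BE": 23.0},
    "frost": {"Core i7 975": 21.4, "Core i7 870": 21.5, "Phenom II X4 965 BE": 21.5},
}


def spread_percent(fps_by_cpu: dict[str, float]) -> float:
    """Return how much faster the best CPU is than the worst, in percent."""
    slowest = min(fps_by_cpu.values())
    fastest = max(fps_by_cpu.values())
    return (fastest - slowest) / slowest * 100.0


if __name__ == "__main__":
    for run, fps_by_cpu in results_fps.items():
        print(f"{run}: {spread_percent(fps_by_cpu):.2f}% spread")
```

Every run comes out below half a percent, which is 0.1 fps in absolute terms.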

Now that doesn't mean you should ignore performance data and buy a slower CPU. You always want to purchase the best performing CPU you can at any given price point. That ensures that, regardless of the CPU/GPU balance in applications and games, you're always left with the best performance possible.

The Test

| Component | Details |
| --- | --- |
| Motherboard: | Intel DP55KG (Intel P55); Intel DX58SO (Intel X58); Intel DX48BT2 (Intel X48); Gigabyte GA-MA790FXT-UD5P (790FX) |
| Chipset: | Intel X48; Intel X58; Intel P55; AMD 790FX |
| Chipset Drivers: | Intel 9.1.1.1015 (Intel); AMD Catalyst 9.8 |
| Hard Disk: | Intel X25-M SSD (80GB) |
| Memory: | Qimonda DDR3-1066 4 x 1GB (7-7-7-20); Corsair DDR3-1333 4 x 1GB (7-7-7-20); Patriot Viper DDR3-1333 2 x 2GB (7-7-7-20) |
| Video Card: | eVGA GeForce GTX 280 |
| Video Drivers: | NVIDIA ForceWare 190.62 (Win764); NVIDIA ForceWare 180.43 (Vista64); NVIDIA ForceWare 178.24 (Vista32) |
| Desktop Resolution: | 1920 x 1200 |
| OS: | Windows Vista Ultimate 32-bit (for SYSMark); Windows Vista Ultimate 64-bit; Windows 7 64-bit |

Turbo mode is enabled for the P55 and X58 platforms.

343 Comments


Gary Key - Wednesday, September 9, 2009

Actually the manufacturers wanted Clarkdale desperately for the school/holiday shopping seasons. It is delayed as they are still debugging the platform, unofficially I think that means the drivers are not ready. ;) Believe me, if we had a stable Clarkdale platform worthy of a preview, you would have read about it already.

justme2009 - Wednesday, September 9, 2009

You are incorrect sir. The manufacturers were complaining to Intel that they couldn't get rid of the current stock before Intel released mobile Nehalem, so Intel caved.

http://techreport.com/discussions.x/16152

http://www.techspot.com/news/33065-notebook-vendor...

http://www.brighthub.com/computing/hardware/articl...

http://gizmodo.com/5123632/notebook-makers-want-in...

Needless to say, I'm waiting for mobile Nehalem (Clarkdale/Arrandale). It brings a 32nm manufacturing process, and starting in 2010 Intel will begin to move both the northbridge and southbridge chips onto the processor die. The move should complete some time around 2011 as far as I can tell.

It will be far better than what we have today, and I'm really ticked off at the manufacturers for holding back progress because of their profit margin.

Gary Key - Wednesday, September 9, 2009

I spoke directly with the manufacturers, not unnamed sources. The story is quite different than the rumors that were posted. I will leave it at that until we have product for review.

justme2009 - Wednesday, September 9, 2009

Of course the manufacturers wouldn't fess up to it. It's bad business, and it makes them look bad. It already angered a great many people. I don't think they are rumors at all.

justme2009 - Wednesday, September 9, 2009

Personally I'm holding off on buying a new system until the northbridge/southbridge migration to the processor die is complete, ~2 years from now. That will definitely be the time to buy a new system.

ClagMaster - Tuesday, September 8, 2009

“These things are fast and smart with power. Just wait until Nehalem goes below 65W...”

I surely will, Mr. Shimpi, with this exceptional processor. I am going to wait until the summer of 2010, when prices are the lowest and rebates are the sweetest, before I buy my i7 860. By that time, hopefully, there will be 65W versions available on an improved stepping. It’s worth the wait.

I would wager the on-chip PCIe controller could use some additional optimization which would result in lower power draw for a given frequency.

Intel sure delivered the goods with Lynnfield.

cosminliteanu - Tuesday, September 8, 2009

Well done Anandtech for this article... :)

ereavis - Tuesday, September 8, 2009

Great article. Good replies to all the bashing, most seem to have misread.

Now, we want to see results in AnandTech Bench!

MODEL3 - Tuesday, September 8, 2009

Wow, the i5 750 is even better than what I was expecting...

For the vast, vast majority of consumers (not enthusiasts, overclocking guys, etc...), with this processor Intel effectively erased the above-$200 CPU market...

I hope this move has the effect of killing their ASP also... (except AMD's...) (not that this will hurt Intel much with so much cash, but it is better than nothing...)

I see that the structure/composition in this review and in many others tech sites reviews is very good, maybe this time Intel helped more in relation with the past regarding info / photos / diagrams / review guide etc...

One question that i have (out of the conspiracy book again...) is,

if the integration of the PCI-Express controller in the CPU die on the mainstream LGA-1156 platform will be a permanent strategy from now on...

and if the recent delay for the PCI-Express standard 3.0 has a connection with the timing of the launch of mainstream LGA-1156 based CPUs with PCI-Express 3.0 controller integrated...

Sure, they can launch future LGA-1156 motherboard chipsets with PCI-Express 3.0 controller, but doesn't this contradict the integration strategy that Intel just started with the new processors?

MODEL3 - Tuesday, September 8, 2009

I can't edit...

I just want to clarify that the PCI-Express 3.0 question is for LOL reasons, not to be taken seriously...