The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST

Posted in: Storage

Why You Absolutely Need an SSD

Compared to mechanical hard drives, SSDs continue to be a disruptive technology. These days it’s difficult to convince folks to spend more money, but I can’t stress enough the difference in user experience between a mechanical HDD and a good SSD. In every major article I’ve written about SSDs I’ve provided at least one benchmark that sums up exactly why you’d want an SSD over even a RAID array of HDDs. Today’s article is no different.

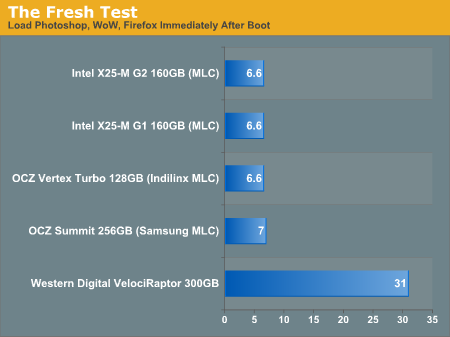

The Fresh Test, as I like to call it, involves booting up your PC and timing how long it takes to run a handful of applications. I always mix up the applications and this time I’m actually going with a lighter lineup: World of Warcraft, Adobe Photoshop CS4 and Firefox 3.5.1.

Other than those three applications, the system was a clean install - I didn’t even have any anti-virus running. This is easily the best case scenario for a hard drive and on the world’s fastest desktop hard drive, a Western Digital VelociRaptor, the whole process took 31 seconds.

And on Intel’s X25-M SSD? Just 6.6 seconds.

A difference of 24 seconds hardly seems like much, until you actually think about it in terms of PC response time. We expect our computers to react immediately to input; even waiting 6.6 seconds is an eternity. Waiting 31 seconds is agony in the PC world. Worst of all? This is on a Core i7 system. To have the world’s fastest CPU and to have to wait half a minute for a couple of apps to launch is just wrong.
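To put the Fresh Test numbers in proportion, the arithmetic can be sketched in a few lines of Python. The two timings are the ones quoted above; the constant and function names are illustrative only and not part of any benchmark tool.

```python
# Fresh Test timings quoted in the article, in seconds.
HDD_SECONDS = 31.0   # Western Digital VelociRaptor
SSD_SECONDS = 6.6    # Intel X25-M


def time_saved(hdd: float, ssd: float) -> float:
    """Absolute time saved by the faster drive, in seconds."""
    return hdd - ssd


def speedup(hdd: float, ssd: float) -> float:
    """How many times sooner the SSD run finished."""
    return hdd / ssd


if __name__ == "__main__":
    print(f"Time saved: {time_saved(HDD_SECONDS, SSD_SECONDS):.1f} s")
    print(f"Speedup:    {speedup(HDD_SECONDS, SSD_SECONDS):.1f}x")
```

On these figures the SSD finishes the launch sequence about 4.7 times sooner, a saving of roughly 24 seconds on every cold start.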

A Personal Anecdote on SSDs

I’m writing this page of the article on the 15-inch MacBook Pro I reviewed a couple of months ago. I’ve kept the machine stock but I’ve used it quite a bit since that review thanks to its awesome battery life. Of course, by “stock” I mean that I have yet to install an SSD.

Using the notebook is honestly disappointing. I always think something is wrong with the machine when I go to fire up Adium, Safari, Mail and Pages all at the same time to get to work. The applications take what feels like an eternity to start. While they are all launching, the individual apps are generally unresponsive, even if they’ve loaded completely and I’m waiting on others. It’s just an overall miserable experience by comparison.

It’s shocking to think that until last year, this is how all of my computer usage transpired. Everything took ages to launch and become useful, particularly the first time you boot up your PC. It was that more than anything else that drove me to put my PCs to sleep rather than shut them down. It was also the pain of starting applications from scratch and OS X’s ability to get in/out of sleep quickly that made me happier using OS X than XP and later Vista.

It’s particularly interesting when you think of the ramifications of this. It’s the poor random read/write performance of the hard disk that makes some aspects of PC usage so painful. It’s the multi-minute boot times that make users more frustrated with their PCs. While the hard disk helped the PC succeed, it’s the very device that’s killing the PC in today’s instant-on, consumer electronics driven world. I challenge OEMs to stop viewing SSDs as a luxury item and to bite the bullet. Absorb the cost, work with Intel and Indilinx vendors to lower prices, offer bundles, do whatever it takes but get these drives into your systems.

I don’t know how else to say this: a good SSD is an order of magnitude faster than a hard drive. It’s the difference between a hang glider and the space shuttle; both will fly, it’s just that one takes you to space. And I don’t care that you can buy a super fast or high flying hang glider either.

295 Comments


Wwhat - Sunday, September 6, 2009

If you read the first part of the article alone you would see how important a good controller is in an SSD, and you would probably not ask this question. Plus, SSDs use the flash in parallel where a bunch of USB drives would not; the parallel thing is also mentioned in the article.

And USB actually has a lot of overhead on the system, both in CPU cycles and in IO interrupts.

There are plug-in PCI(e) cards to stick SD cards in though, to get a similar setup, but it's a bit of a hack, and with the overhead, the management, the controllers used and the price of buying many SD cards it's not competitive in the end. You are better off with a real SSD, I'm told.

Transisto - Sunday, September 6, 2009

You are right, the controller is very important.

I think caching about 4-8 gig of the most often accessed program files has the best price/performance ratio for improving application load time. It is also very easily scalable.

One of the problems I see is integrating this SSD cache in the OS, or before booting, so it acts where it matters the most.

I think there could be a near X25-M speedup from optimized caching and a good controller no matter what SSD form factor it relies on: SD, CF, USB, PCI or onboard.

Why does it seem nobody talks about eBoostr-type caching? And, in other news, Intel's Braidwood flash memory module could kill the SSD market.

I am quite a performance seeker.

But I don't think I need 80 gig of SSD in my desktop, just some 8GB of good caching. Maybe a 60GB SSD on a laptop.

Well... I'm gonna pay for that controller once, not twice (160GB?)

Wwhat - Saturday, September 5, 2009

Not that it's not a good article, although it does seem like 2 articles in one, but what I miss is getting down to brass tacks regarding the filesystem used, why there isn't an SSD-specific filesystem, and what choices can be made during formatting with regard to block size. Obviously if you select large blocks at the filesystem level, that would impact the performance of the garbage collection, right? From reading this, it actually seems the author never delved very deeply into filesystems.

The thing is that even with large blocks at the filesystem level the system might still use small segments for the actual keeping track, and if it needs to write small bits to keep track of large blocks you'd still have issues. That's why I say a specific SSD filesystem might be good, but only if there isn't a new form of SSD in the near future that makes the effort pointless. And if a filesystem for SSDs was made, then the firmware should not try to compensate for existing filesystem issues with SSDs.

I read that the SD people selected exFAT as the filesystem for their next generation, and that also makes me wonder: is that just to do with licensing costs, or is NTFS bad for flash-based devices?

Point being that the filesystem needs to be highlighted more, I think.

Bolas - Friday, September 4, 2009

Would someone please hit Dell with the clue-board and convince them to offer the Intel SSDs in their Alienware systems? The Samsung SSDs are all that is stopping me from buying an Alienware laptop at the moment.

EatTheMeat - Friday, September 4, 2009

Congratulations on another fab masterclass. This is easily the best educational material on the internet regarding SSDs, and contrary to some comments, I think you've pitched your recommendations just right. I can also appreciate why you approached this article with some trepidation. Bravo.

I have a RAID question for Anand (or anyone else who feels qualified :-))

I'm thinking of setting up 2 160GB x25-m G2 drives in RAID-0 for Win 7. I'd simply use the ICH10R controller for it. It's not so much to increase performance but rather to increase capacity and make sure each drive wears equally. After considering it further I'm wondering if SSD RAID is wise. First there's the eternal question of stripe size and write amplification. It makes sense to me to set the stripe size to be the same as, or a fraction of, the block size of the SSD. If you choose the wrong stripe size does it influence write amplification?

I'm aware that performance should increase with larger stripes, but I'm more concerned about what's healthy for the SSD.

Do you think I should just let SSD RAID wait until RAID drivers are optimised for SSDs?

I know you're planning a RAID article for SSDs - I for one look forward to it greatly. I've read all your other SSD articles like four times!

Bolas - Friday, September 4, 2009

If SSDs in RAID lose the benefit of the TRIM command, then you're shooting yourself in the foot if you set them up in RAID. If you need more capacity, wait for the Intel 320GB SSD drives next year. Or better yet, use a 160GB for your boot drive, then set up some traditional hard disk drives in RAID for your storage requirements.

EatTheMeat - Friday, September 4, 2009

Thanks for the reply. I definitely hear you about the TRIM functionality, as I doubt RAID drivers will pass this through before 2010. Still though, it doesn't look like the G2s drop much in performance with use anyway, from Anand's graphs. With regard to waiting for 320GB drives - I can't. These things are just too enticing, and you could always say that technology will be better / faster / cheaper next year. I've decided to take the plunge now as I'm fed up with an i7 965 booting and loading apps / games like a snail even from a RAID drive.

I just don't want to bugger the SSDs up with loads of write amplification / fragmentation due to RAID-0. I.e., is RAID-0 bad for the health of SSDs like defragmentation / prefetch is? I wonder if anyone knows the answer to this question yet.

jagreenm - Saturday, September 5, 2009

What about just using Windows drive spanning for 2 160's?

EatTheMeat - Saturday, September 5, 2009

As far as I know drive spanning doesn't even out the wear between the discs. It just fills up first one and then the other. That's important with SSDs because RAID can really help reduce drive wear by spreading all reads and writes across 2 drives. In fact, it should more than halve drive wear as both drives will have large scratch portions. Not so with spanning as far as I know.

Does anyone know if I'm talking sh1t here? :-)

pepito - Monday, November 16, 2009

If you are not sure, then why do you assert such things?

I don't know about Windows, but at least in Linux when using LVM2 or RAID0 the writes spread evenly across all block devices.

That means you get twice the speed and better drive wear.

I would like to think that Microsoft's implementation works more or less the same way, as this is completely logical (but then again, it's Microsoft, so who can really know?).
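
The spanning-versus-striping question in this thread can be illustrated with a toy model. The sketch below is a simplified assumption of how a spanned (concatenated) volume and a RAID-0 striped volume map logical blocks to drives; it is not the actual Windows, LVM2 or ICH10R implementation, and the function names, drive size and stripe size are hypothetical. It also ignores each SSD's internal wear leveling and looks only at which drive receives a write.

```python
from collections import Counter


def spanned_drive(block: int, blocks_per_drive: int) -> int:
    """Spanning (concatenation): fill drive 0 first, then drive 1, and so on."""
    return block // blocks_per_drive


def striped_drive(block: int, stripe_blocks: int, n_drives: int) -> int:
    """RAID-0: consecutive stripes rotate across the drives."""
    return (block // stripe_blocks) % n_drives


def writes_per_drive(blocks, locate) -> Counter:
    """Count how many block writes land on each drive."""
    return Counter(locate(b) for b in blocks)


# Hypothetical layout: 2 drives of 1,000 blocks each, 32-block stripes.
BLOCKS_PER_DRIVE = 1000
STRIPE_BLOCKS = 32
N_DRIVES = 2

# Workload: write the first 640 logical blocks (a partly filled volume).
workload = range(640)

spanned = writes_per_drive(workload, lambda b: spanned_drive(b, BLOCKS_PER_DRIVE))
striped = writes_per_drive(
    workload, lambda b: striped_drive(b, STRIPE_BLOCKS, N_DRIVES)
)

print("spanned:", dict(spanned))  # every write lands on drive 0
print("striped:", dict(striped))  # writes are split between both drives
```

In this model a partly filled spanned volume sends every write to the first drive, while striping splits the same writes evenly. Whether a given volume manager stripes or concatenates depends on how the volume was created, so it is worth checking the configuration rather than assuming either behaviour.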