The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST - Posted in Storage

The Cleaning Lady and Write Amplification

Imagine you’re running a cafeteria. This is the real world, so your cafeteria has a finite number of plates: say 200 for the entire cafeteria. Your cafeteria is open for dinner, and over the course of the night you may serve a total of 1000 people. Guests outnumber plates 5-to-1; thankfully, they don’t all eat at once.

You’ve got a dishwasher who cleans the dirty dishes as the tables are bussed and then puts them in a pile of clean dishes for the servers to use as new diners arrive.

Pretty basic, right? That’s how an SSD works.

Remember the rules: you can read from and write to individual pages, but you can only erase entire blocks at a time, and a page that already holds data can’t be written again until its block has been erased. If a block is full of invalid pages (for example, pages holding files that have been overwritten at the file system level), it must be erased before it can be written to.
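The rules above can be captured in a few lines. This is a minimal Python sketch, assuming a toy block of four pages (a hypothetical size; real NAND blocks hold many more) and a made-up `NandBlock` class: a page can be programmed only while it is erased, and erasing resets the whole block at once.

```python
from enum import Enum


class PageState(Enum):
    FREE = "free"        # erased and ready to be programmed
    VALID = "valid"      # holds live data
    INVALID = "invalid"  # stale data, e.g. a file overwritten at the file system level


class NandBlock:
    """Hypothetical toy NAND block: program page by page, erase whole block only."""

    def __init__(self, pages_per_block: int = 4) -> None:
        self.pages = [PageState.FREE] * pages_per_block
        self.erase_count = 0

    def program(self, index: int) -> None:
        # A page can only be written if it has been erased since its last write.
        if self.pages[index] is not PageState.FREE:
            raise ValueError(f"page {index} must be erased before it can be written")
        self.pages[index] = PageState.VALID

    def invalidate(self, index: int) -> None:
        # The host wrote a newer copy elsewhere, so this one is now stale.
        if self.pages[index] is PageState.VALID:
            self.pages[index] = PageState.INVALID

    def erase(self) -> None:
        # Erasing works on the whole block: every page returns to FREE together.
        self.pages = [PageState.FREE] * len(self.pages)
        self.erase_count += 1


block = NandBlock()
block.program(0)
block.invalidate(0)
try:
    block.program(0)  # rejected: the stale page can't be rewritten in place
except ValueError as err:
    print(err)        # page 0 must be erased before it can be written
block.erase()
block.program(0)      # allowed again after the whole-block erase
```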

All SSDs have a dishwasher of sorts, except instead of cleaning dishes, its job is to clean NAND blocks and prep them for use. The cleaning algorithms don’t really kick in when the drive is new, but put a few days, weeks or months of use on the drive and cleaning will become a regular part of its routine.

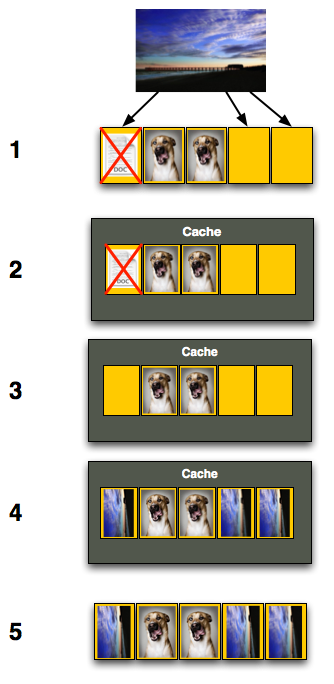

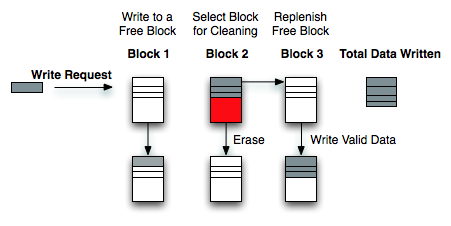

Remember this picture?

It (roughly) describes what happens when you go to write a page of data to a block that’s full of both valid and invalid pages.

In reality the write happens more like this: a new block is allocated, the valid data is copied to the new block (along with the data you wish to write), and the old block is sent for cleaning and emerges completely wiped. The old block is then added to the pool of empty blocks. As the controller needs them, blocks are pulled from this pool and used, and old blocks are recycled back into it.
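Here is a minimal Python sketch of that relocation, assuming a hypothetical four-page block represented as a list in which `None` marks an erased page and every other entry is valid data. The helper names `erased_block` and `rewrite_page` are invented for illustration.

```python
from collections import deque

PAGES_PER_BLOCK = 4  # hypothetical geometry for illustration


def erased_block() -> list:
    # None marks an erased page that can be programmed.
    return [None] * PAGES_PER_BLOCK


def rewrite_page(block: list, page: int, data: str, free_pool: deque) -> tuple[list, int]:
    """Update one page by relocating its whole block.

    Assumes every non-None entry in `block` is still valid. Returns the new
    block and how many pages were physically programmed to make the update.
    """
    new_block = free_pool.popleft()              # 1. allocate an empty block
    programmed = 0
    for i, old in enumerate(block):
        value = data if i == page else old       # 2. merge new data with valid data
        if value is not None:
            new_block[i] = value
            programmed += 1
    block[:] = [None] * len(block)               # 3. the old block is wiped...
    free_pool.append(block)                      # 4. ...and recycled into the pool
    return new_block, programmed


pool = deque(erased_block() for _ in range(2))
current = ["a", "b", "c", None]
current, cost = rewrite_page(current, 1, "B", pool)
print(current, cost)  # ['a', 'B', 'c', None] 3
```

Changing one page cost three page programs here, because the two untouched valid pages had to move along with it; that extra copying is where write amplification comes from.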

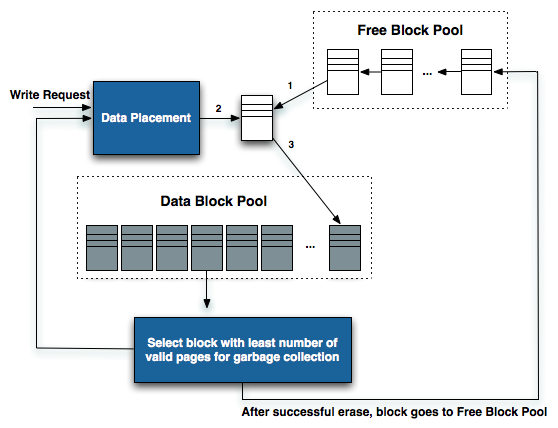

IBM's Zurich Research Laboratory actually made a wonderful diagram of how this works, but it's a bit more complicated than I need it to be for my example here today so I've remade the diagram and simplified it a bit:

The diagram explains what I just outlined above. A write request comes in, and a new block is allocated, used, and then added to the list of used blocks. The blocks with the least valid data (or the most invalid data) are scheduled for garbage collection, cleaned and added to the free block pool.
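The greedy policy in the diagram fits in a few lines. This is a minimal Python sketch, assuming hypothetical 64-page blocks that only track how many pages are valid or invalid; real controllers use their own, generally undisclosed, policies, and `Block`, `allocate_for_write` and `clean_one_block` are names made up for this example.

```python
from dataclasses import dataclass


@dataclass(eq=False)  # compare blocks by identity, not by field values
class Block:
    # Hypothetical toy block that only tracks counts of live and stale pages.
    name: str
    valid: int = 0
    invalid: int = 0


def allocate_for_write(pages: int, used: list, free_pool: list) -> Block:
    # A write request takes a block from the free pool; it then joins the used list.
    block = free_pool.pop()
    block.valid = pages
    used.append(block)
    return block


def clean_one_block(used: list, free_pool: list) -> tuple[Block, int]:
    """One greedy garbage-collection step.

    Returns the victim and how many valid pages had to be copied out of it
    before the erase (the copy itself is left out of this sketch).
    """
    victim = min(used, key=lambda b: b.valid)   # least valid == most invalid data
    used.remove(victim)
    to_copy = victim.valid
    victim.valid = victim.invalid = 0           # erase: the whole block is free again
    free_pool.append(victim)
    return victim, to_copy


used = [Block("A", 60, 4), Block("B", 5, 59), Block("C", 30, 34)]
free = []
victim, copies = clean_one_block(used, free)
print(victim.name, copies, [b.name for b in used])  # B 5 ['A', 'C']
reused = allocate_for_write(64, used, free)           # the cleaned block serves a new write
print(reused.name, [b.name for b in used])           # B ['A', 'C', 'B']
```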

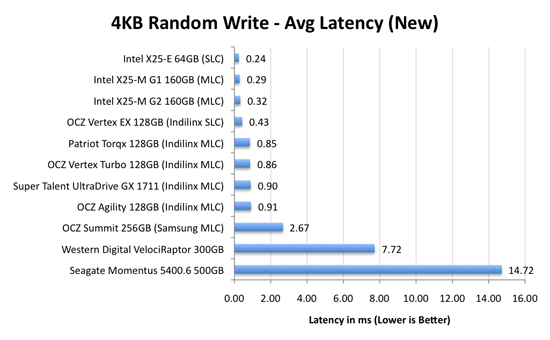

We can actually see this in action if we look at write latencies:

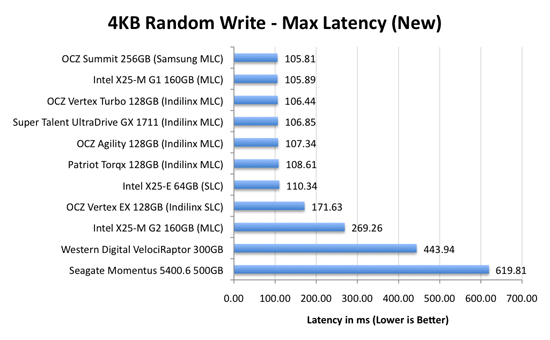

Average write latencies for writing to an SSD, even with random data, are extremely low. But take a look at the max latencies:

While average latencies are very low, the max latencies are around 350x higher. They are still low compared to a mechanical hard disk, but what's going on to make the max latency so high? All of the cleaning and reorganization I've been talking about. It rarely makes a noticeable impact on performance (hence the ultra low average latencies), but this is an example of it happening.
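Why can the average stay low while the maximum spikes? A toy calculation makes the point. This Python sketch assumes, purely hypothetically, that one write in every thousand has to wait behind cleaning; the latencies are made-up time units, not measurements from any drive.

```python
def latency_stats(n_writes: int, fast: int, slow: int, slow_every: int) -> tuple[float, int]:
    """Average and maximum latency when every `slow_every`-th write waits on cleaning.

    All inputs are hypothetical time units, not measurements from a real drive.
    """
    latencies = [slow if (i + 1) % slow_every == 0 else fast for i in range(n_writes)]
    return sum(latencies) / n_writes, max(latencies)


avg, worst = latency_stats(n_writes=10_000, fast=100, slow=20_000, slow_every=1_000)
print(avg, worst)  # 119.9 20000
```

Only 0.1% of the writes are slow, so the average barely moves off the 100-unit fast path, yet the maximum ends up about 167x the average.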

And this is where write amplification comes in.

In the diagram above we see another angle on what happens when a write comes in. A free block is used (when available) for the incoming write. That's not the only write that happens, however; eventually you have to perform some garbage collection so you don't run out of free blocks. The block with the most invalid data is selected for cleaning; its remaining valid data is copied to another block, after which the previous block is erased and added to the free block pool. In the diagram above you'll see the size of our write request on the left, but on the very right you'll see how much data was actually written once garbage collection is taken into account. This inequality is called write amplification.
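The accounting can be simulated. Below is a minimal Python sketch of a toy page-mapped flash translation layer with greedy garbage collection, a common textbook model rather than a description of any particular controller. The geometry (64 blocks of 32 pages), the uniformly random overwrites, the two-block reserve and the `utilization` knob are all hypothetical choices made to keep the example small; the function counts every page programmed to NAND, host writes plus garbage-collection copies, and divides by host writes.

```python
import random


def simulate_waf(blocks: int = 64, pages_per_block: int = 32, utilization: float = 0.8,
                 host_writes: int = 20_000, seed: int = 0) -> float:
    """Toy page-mapped FTL with greedy garbage collection (hypothetical parameters).

    Returns the write amplification factor: NAND page writes / host page writes.
    """
    rng = random.Random(seed)
    logical_pages = int(blocks * pages_per_block * utilization)
    live = [set() for _ in range(blocks)]   # logical pages whose current copy is in each block
    location = {}                            # logical page -> block holding its current copy
    free = list(range(1, blocks))            # erased blocks; block 0 starts as the active one
    active, filled = 0, 0
    nand_writes = 0

    def program(lpn: int) -> None:
        nonlocal active, filled, nand_writes
        if filled == pages_per_block:        # active block is full: open a fresh one
            active, filled = free.pop(), 0
        old = location.get(lpn)
        if old is not None:
            live[old].discard(lpn)           # the previous copy becomes invalid
        live[active].add(lpn)
        location[lpn] = active
        filled += 1
        nand_writes += 1

    for _ in range(host_writes):
        program(rng.randrange(logical_pages))        # the write the host asked for
        while len(free) < 2:                         # keep a small reserve of erased blocks
            free_set = set(free)
            used = [b for b in range(blocks) if b != active and b not in free_set]
            victim = min(used, key=lambda b: len(live[b]))  # most invalid data
            for lpn in list(live[victim]):
                program(lpn)                         # copy valid data forward: extra writes
            live[victim].clear()                     # erase the victim...
            free.append(victim)                      # ...and return it to the free pool
    return nand_writes / host_writes


print(f"WAF at 80% full: {simulate_waf():.2f}")
```

Raising `utilization` leaves the collector fewer invalid pages to reclaim, so each victim carries more valid data that must be copied and the factor climbs.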

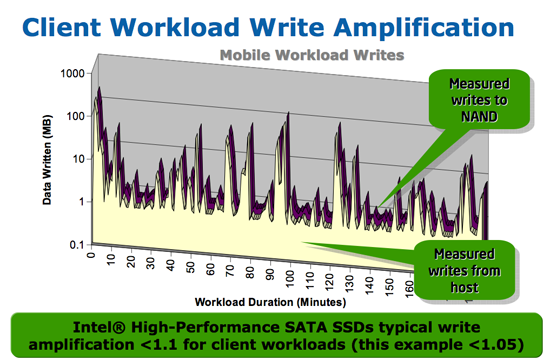

Intel claims very low write amplification on its drives, although a factor below 1.1 over the lifespan of your drive seems highly unlikely.

The write amplification factor is the amount of data the SSD controller has to write relative to the amount of data the host controller wants to write. A write amplification factor of 1 is perfect: it means you wanted to write 1MB and the SSD’s controller wrote 1MB. A write amplification factor greater than 1 isn't desirable, but it's an unfortunate fact of life. The higher your write amplification, the quicker your drive will die and the lower its performance will be. Write amplification, bad.
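The definition and its effect on lifespan reduce to simple arithmetic. This Python sketch uses a deliberately simplified endurance model, assuming perfect wear leveling and ignoring spare area; the 80GB capacity and 10,000 program/erase cycles are hypothetical figures, not specifications of any drive.

```python
def write_amplification(host_bytes: int, nand_bytes: int) -> float:
    # Data the SSD controller wrote to NAND divided by data the host asked to write.
    return nand_bytes / host_bytes


def host_writes_until_worn(capacity_gb: float, pe_cycles: int, waf: float) -> float:
    """Simplified endurance estimate in GB of host writes (hypothetical model).

    The flash can absorb roughly capacity * P/E cycles of NAND writes;
    write amplification divides what is left for the host.
    """
    return capacity_gb * pe_cycles / waf


MB = 1024 * 1024
print(write_amplification(1 * MB, 1 * MB))      # 1.0, the "perfect" case
print(host_writes_until_worn(80, 10_000, 1.0))  # 800000.0
print(host_writes_until_worn(80, 10_000, 2.0))  # 400000.0
```

Doubling the factor halves the host data the drive can take before its flash wears out.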

295 Comments


Anand Lal Shimpi - Monday, August 31, 2009

Intel insists it's not an artificial cap and I tend to believe the source that fed me that information. That being said, if it's not an artificial cap it's either:

1) Designed that way and can't be changed without a new controller

2) A bug and can be fixed with firmware

3) A bug and can't be fixed without a new controller

Or some combination of those items. We'll see :)

Take care,

Anand

Adul - Monday, August 31, 2009

Another fine article Anand :). Keep up the good work.

CurseTheSky - Monday, August 31, 2009

This is absolutely the best article I've read in a very long time - not just from Anandtech - from anywhere. I've been collecting information and comparing benchmarks / testimonials for over a month, trying to help myself decide between Intel, Indilinx, and Samsung-based drives. While it was easy to see that one of the three trails the pack, it was difficult to decide if the Intel G2 or Indilinx drives were the best bang for the buck.

This article made it all apparent: The Intel G2 drives have better random read / write performance, but worse sequential write performance. Regardless, both drives are perfectly acceptable for everyday use, and the real world difference would be hardly noticeable. Now if only the Intel drives would come back in stock, close to MSRP.

Thank you for taking the time to write the article.

deputc26 - Monday, August 31, 2009

been waiting months for this one.

therealnickdanger - Monday, August 31, 2009

Ditto! Thanks Anand! Now the big question... Intel G2 or Vertex Turbo? :) It's nice to have options!

Hank Scorpion - Monday, August 31, 2009

Anand, YOU ARE A LEGEND!!! go and get some good sleep, thanks for answering and allaying my fears... i appreciate all your hard work!!!!

256GB OCZ Vertex is on the top of my list as soon as a validated Windows 7 TRIM firmware that doesn't need any work by me is organized....

once a firmware is organised then my new machine is born.... MUHAHAHAHAHAHA

AbRASiON - Monday, August 31, 2009

Vertex Turbo is a complete rip-off; Anand clearly held back from saying so to avoid offending the guy at OCZ. Now, the other OCZ models could be a different story.

MikeZZZZ - Monday, August 31, 2009

I too love my Vertex. Running these things in RAID0 will blow your mind. I'm just waiting for some affordable enterprise-class drives for our servers.

Mike

http://solidstatedrivehome.com

JPS - Monday, August 31, 2009

I loved the first draft of the Anthology and this is a great follow-up. I have been running a Vertex in my workstation and laptop for months now and continue to be amazed at the difference when I boot up a comparable system still running standard HDDs.

gigahertz20 - Monday, August 31, 2009

Another great article from Anand, now where can I get my Intel X-25M G2 :)