The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST - Posted in Storage

The Cleaning Lady and Write Amplification

Imagine you’re running a cafeteria. This is the real world, so your cafeteria has a finite number of plates: say 200 for the entire cafeteria. Your cafeteria is open for dinner, and over the course of the night you may serve a total of 1000 people. The guests outnumber the plates 5-to-1; thankfully they don’t all eat at once.

You’ve got a dishwasher who cleans the dirty dishes as the tables are bussed and then puts them in a pile of clean dishes for the servers to use as new diners arrive.

Pretty basic, right? That’s how an SSD works.

Remember the rules: you can read from and write to pages, but you must erase entire blocks at a time. If a block is full of invalid pages (files that have been overwritten at the file system level for example), it must be erased before it can be written to.
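
Those rules can be captured in a toy model. This is a minimal Python sketch for illustration only, not how any controller firmware is written; the `Block` class, its method names and the four-page block size are hypothetical choices (real NAND blocks hold many more pages).

```python
from __future__ import annotations

from enum import Enum


class PageState(Enum):
    FREE = "free"        # erased, can be programmed
    VALID = "valid"      # holds live data
    INVALID = "invalid"  # holds stale data; only an erase can reclaim it


class Block:
    """Hypothetical toy NAND block: program/read per page, erase only the whole block."""

    def __init__(self, pages_per_block: int = 4) -> None:
        self.pages = [PageState.FREE] * pages_per_block
        self.data: list[bytes | None] = [None] * pages_per_block

    def write_page(self, index: int, payload: bytes) -> None:
        # A page can be programmed only once between erases.
        if self.pages[index] is not PageState.FREE:
            raise ValueError(f"page {index} must be erased before it can be rewritten")
        self.pages[index] = PageState.VALID
        self.data[index] = payload

    def read_page(self, index: int) -> bytes | None:
        return self.data[index] if self.pages[index] is PageState.VALID else None

    def invalidate_page(self, index: int) -> None:
        # e.g. the file system overwrote this data, so the copy here is stale
        if self.pages[index] is PageState.VALID:
            self.pages[index] = PageState.INVALID

    def erase(self) -> None:
        # Erase granularity is the entire block, never a single page.
        self.pages = [PageState.FREE] * len(self.pages)
        self.data = [None] * len(self.data)
```

Trying to call `write_page` twice on the same page raises an error until `erase()` wipes the whole block, which is exactly the constraint the cleaning process exists to work around.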

All SSDs have a dishwasher of sorts, except instead of cleaning dishes, its job is to clean NAND blocks and prep them for use. The cleaning algorithms don’t really kick in when the drive is new, but put a few days, weeks or months of use on the drive and cleaning will become a regular part of its routine.

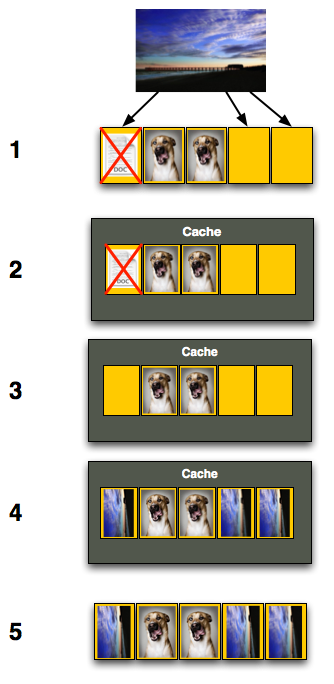

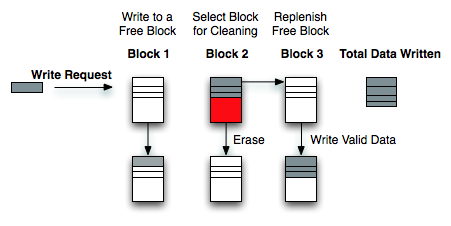

Remember this picture?

It (roughly) describes what happens when you go to write a page of data to a block that’s full of both valid and invalid pages.

In actuality, the write happens more like this: a new block is allocated, the valid data is copied to the new block (along with the data you wish to write), and the old block is sent for cleaning and emerges completely wiped. The old block is then added to the pool of empty blocks. As the controller needs them, blocks are pulled from this pool and used, and old blocks are recycled back into it.
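
Here is a rough Python sketch of that copy-and-erase sequence. The list-of-pages representation, the `INVALID` sentinel and the `rewrite_block` helper are hypothetical, and it assumes every block has the same number of pages; a real controller also keeps a logical-to-physical mapping table, which this sketch leaves out.

```python
from collections import deque

INVALID = object()  # hypothetical marker for a stale page; only an erase reclaims it


def rewrite_block(old_block, new_page, free_pool):
    """Toy copy-and-erase write (assumes all blocks are the same size).

    A block is a list of pages: None = erased, INVALID = stale, anything else = valid data.
    """
    # Gather the still-valid data plus the page being written.
    pages = [p for p in old_block if p is not None and p is not INVALID]
    pages.append(new_page)
    if len(pages) > len(old_block):
        raise ValueError("valid data plus the new page does not fit in one block")

    new_block = free_pool.popleft()        # allocate a clean block from the pool
    for i, page in enumerate(pages):       # copy valid data and the new write into it
        new_block[i] = page

    old_block[:] = [None] * len(old_block)  # the old block emerges completely wiped
    free_pool.append(old_block)             # and is recycled into the free pool
    return new_block
```

For example, writing `"D"` into `["A", INVALID, "C", INVALID]` yields a fresh block `["A", "C", "D", None]`, and the old block goes back into the pool fully erased.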

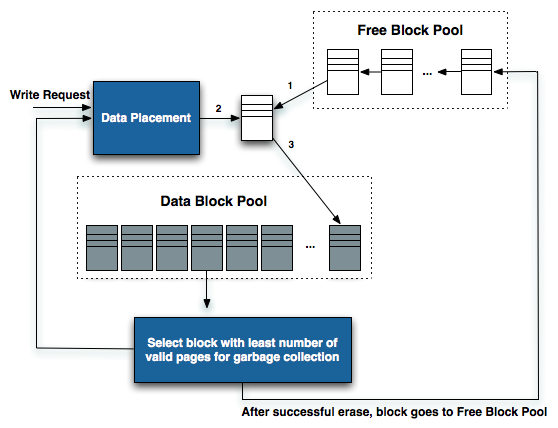

IBM's Zurich Research Laboratory actually made a wonderful diagram of how this works, but it's a bit more complicated than I need for my example here today, so I've remade the diagram and simplified it a bit:

The diagram explains what I just outlined above. A write request comes in, a new block is allocated and used, and it is then added to the list of used blocks. The blocks with the least amount of valid data (or the most invalid data) are scheduled for garbage collection, cleaned and added back to the free block pool.
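
The allocate/use/collect cycle in the diagram can be sketched as a toy flash translation layer. The `ToyFTL` and `Block` names, and the choice to track only a count of valid pages per block, are assumptions made for illustration; the greedy "least valid data first" rule mirrors the description above, but real drives may use more elaborate policies.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(eq=False)  # compare blocks by identity
class Block:
    block_id: int
    valid_pages: int = 0  # pages whose data the host still needs


@dataclass
class ToyFTL:
    """Hypothetical toy model of the free-pool / used-list cycle (not a real FTL)."""
    free: List[Block] = field(default_factory=list)
    used: List[Block] = field(default_factory=list)

    def handle_write(self, valid_pages: int) -> Block:
        # A write request comes in: allocate a free block, use it, list it as used.
        block = self.free.pop()
        block.valid_pages = valid_pages
        self.used.append(block)
        return block

    def invalidate(self, block: Block, pages: int) -> None:
        # Later overwrites make some of a block's pages stale.
        block.valid_pages = max(0, block.valid_pages - pages)

    def collect_garbage(self) -> Block:
        # Greedy choice: the used block with the least valid data is cleaned first.
        # (A real drive would relocate its remaining valid pages before erasing.)
        victim = min(self.used, key=lambda b: b.valid_pages)
        self.used.remove(victim)
        victim.valid_pages = 0    # erased
        self.free.append(victim)  # back into the free block pool
        return victim
```

Picking the block with the least valid data keeps the amount of copying per cleaned block as small as possible, which matters for the write amplification discussion that follows.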

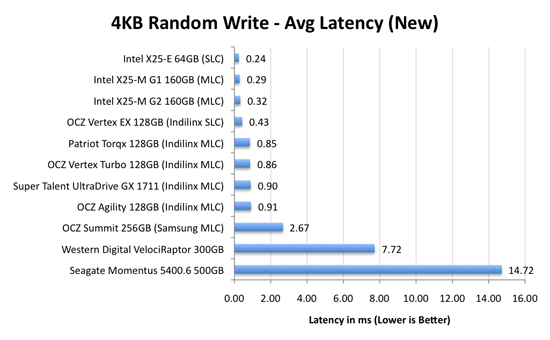

We can actually see this in action if we look at write latencies:

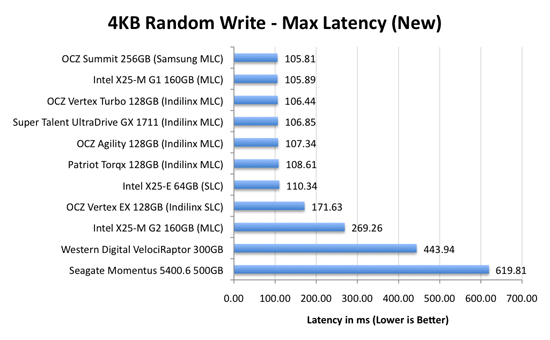

Average write latencies for writing to an SSD, even with random data, are extremely low. But take a look at the max latencies:

While average latencies are very low, the max latencies are around 350x higher. They are still low compared to a mechanical hard disk, but what's going on to make the max latency so high? All of the cleaning and reorganization I've been talking about. It rarely makes a noticeable impact on performance (hence the ultra-low average latencies), but this is an example of it happening.
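
Why can the max latency sit hundreds of times above the average without dragging the average up much? Because the slow writes are rare. The sketch below uses made-up numbers, not measurements: a hypothetical 1 µs fast path and a 350 µs stall on every 10,000th write, the 350x ratio chosen only to echo the figure above.

```python
import statistics


def simulate_latencies(n_writes, gc_every, fast_us, gc_us):
    """Return per-write latencies in microseconds (hypothetical values, not measurements).

    Every gc_every-th write is assumed to stall behind garbage collection;
    all other writes take the fast path.
    """
    return [gc_us if (i + 1) % gc_every == 0 else fast_us for i in range(n_writes)]


latencies = simulate_latencies(n_writes=100_000, gc_every=10_000, fast_us=1, gc_us=350)
print(f"average: {statistics.fmean(latencies):.2f} us, max: {max(latencies)} us")
# average: 1.03 us, max: 350 us
```

Ten slow writes out of 100,000 barely move the mean, yet they completely determine the maximum.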

And this is where write amplification comes in.

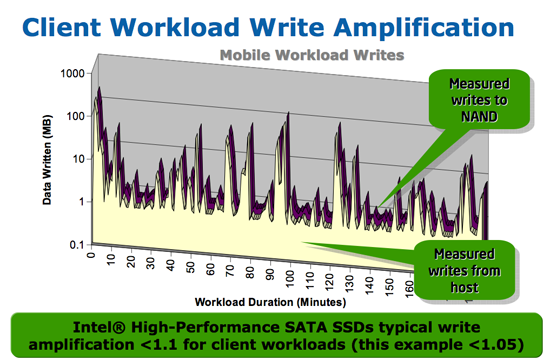

In the diagram above we see another angle on what happens when a write comes in. A free block is used (when available) for the incoming write. That's not the only write that happens, however; eventually you have to perform some garbage collection so you don't run out of free blocks. The block with the most invalid data is selected for cleaning; its valid data is copied to another block, after which the previous block is erased and added to the free block pool. In the diagram above you'll see the size of our write request on the left, but on the very right you'll see how much data was actually written once you take garbage collection into account. This disparity is called write amplification.
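
To see where the extra writes come from, here is a toy simulation with greedy garbage collection that counts every page programmed into NAND, relocation copies included, against the pages the host actually asked to write. All parameters (64 blocks of 32 pages, 1,536 logical pages so that 25% of the flash is held in reserve, uniformly random writes) are hypothetical, and the resulting ratio says nothing about any specific drive.

```python
import random


def simulate_waf(num_blocks=64, pages_per_block=32, logical_pages=1536,
                 host_writes=20_000, seed=0):
    """Toy flash model with greedy garbage collection (hypothetical parameters).

    Returns NAND page writes divided by host page writes.
    """
    rng = random.Random(seed)
    blocks = [[] for _ in range(num_blocks)]  # per block: logical page ids, None = invalid
    where = {}                                # logical page -> (block, slot) of live copy
    free = list(range(num_blocks))
    active = free.pop()                       # block currently being filled
    nand_writes = 0

    def program(lpn):
        nonlocal active, nand_writes
        if len(blocks[active]) == pages_per_block:
            active = free.pop()               # active block is full: take a free one
        if lpn in where:
            blk, slot = where[lpn]
            blocks[blk][slot] = None          # the previous copy becomes invalid
        blocks[active].append(lpn)
        where[lpn] = (active, len(blocks[active]) - 1)
        nand_writes += 1

    for _ in range(host_writes):
        # Keep at least two free blocks; otherwise clean the block with the least valid data.
        while len(free) < 2:
            candidates = [b for b in range(num_blocks) if b != active and b not in free]
            victim = min(candidates, key=lambda b: sum(p is not None for p in blocks[b]))
            for lpn in [p for p in blocks[victim] if p is not None]:
                program(lpn)                  # relocation: writes the host never asked for
            blocks[victim] = []               # erase the whole block
            free.append(victim)
        program(rng.randrange(logical_pages))  # the host's own write

    return nand_writes / host_writes
```

Lowering `logical_pages` (more spare area) should bring the ratio closer to 1, because the blocks chosen for cleaning then hold less valid data to copy. The sketch assumes `logical_pages` stays well below the physical page count so that garbage collection can always reclaim space.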

Intel claims very low write amplification on its drives, although a factor below 1.1 over the lifespan of your drive seems highly unlikely.

The write amplification factor is the amount of data the SSD controller has to write relative to the amount of data the host controller wants to write. A write amplification factor of 1 is perfect: it means you wanted to write 1MB and the SSD’s controller wrote 1MB. A write amplification factor greater than 1 isn't desirable, but it's an unfortunate fact of life. The higher your write amplification, the quicker your drive will die and the lower its performance will be. Write amplification, bad.
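
Expressed as a ratio ($W_{\text{NAND}}$ and $W_{\text{host}}$ are our own shorthand, not notation from Intel or any standard), with a purely hypothetical case in which garbage collection relocates an extra 0.5MB while servicing a 1MB host write:

$$
\mathrm{WAF} = \frac{W_{\text{NAND}}}{W_{\text{host}}}, \qquad
\frac{1\,\text{MB}}{1\,\text{MB}} = 1 \ (\text{ideal}), \qquad
\frac{1\,\text{MB} + 0.5\,\text{MB}}{1\,\text{MB}} = 1.5
$$

Since each NAND block survives only a limited number of program/erase cycles, a factor of 1.5 means the flash wears roughly 1.5 times faster than the host's own writes alone would wear it.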

295 Comments

Anand Lal Shimpi - Monday, August 31, 2009

I believe OCZ cut prices to distributors that day, but the retail prices will take time to fall. Once you see X25-M G2s in stock, I'd expect to see the Indilinx drives fall in price. Resellers won't give you a break unless they have to :)

Take care,

Anand

bobjones32 - Monday, August 31, 2009

Another great AnandTech article, thanks for the read.

Just a heads-up on the 80GB X25-M Gen2: a day before Newegg finally had them on sale, they bumped their price listing from $230 to $250. They sold at $250 for about 2 hours last Friday, went back out of stock until next week, and bumped the price again from $250 to $280.

So....plain supply vs. demand is driving the price of the G2 roughly $50 higher than it was listed at a week ago. I have a feeling that if you wait a week or two, or shop around a bit, you'll easily find them selling elsewhere for the $230 price they were originally going for.

AbRASiON - Monday, August 31, 2009

Correct, Newegg has gouged the 80GB from $229 to $279 and the 160GB from $449 to $499 :(

Stan Zaske - Monday, August 31, 2009

Absolutely first-rate article, Anand, and I thoroughly enjoyed reading it. Get some rest, dude! LOL

Jaramin - Monday, August 31, 2009

I'm wondering: if I were to use a low-capacity SSD to install my OS on, but install my programs to a HDD for space reasons, just how much would that spoil the SSD advantage? All OS reads and writes would still be on the SSD, and the paging file would also be there. I'm very curious about the amount of degradation one would see relative to different use routines and apps.

Anand Lal Shimpi - Monday, August 31, 2009

Putting all of your apps (especially frequently used ones) off of your SSD would defeat the purpose of an SSD. You'd be missing out on the ultra-fast app launch times.

Pick a good SSD and you won't have to worry too much about performance degradation. As long as you don't stick it into a database server :)

Take care,

Anand

swedishchef - Tuesday, September 1, 2009

What if you just put your Photoshop cache on a pair of VelociRaptors? Would it be the same loss of benefit?

I have the same question regarding uncompressed HD video work, where I need write speeds well over the Intel X25-M's (over 240Mb/s). My assumption would be that I could enjoy the fast IO and app launch of an SSD and increase CPU performance with the SSD while keeping the files on a fast external or internal RAID configuration.

Thank you again for a brilliant article, Anand.

I have been waiting for it for a long time. Yours are the only calm words out on the net.

Grateful Geek /Also professional image creator.

creathir - Monday, August 31, 2009

Great article, Anand. I've been waiting for it...

My only thoughts are, why can't Intel get their act together with the sequential business? Why can the others handle it, but they can't? To have such an awesome piece of hardware have such a nasty blemish is strange to me, especially on a Gen-2 product.

I suppose there is some technical reason as to why, but it needs to be addressed.

- Creathir

Anand Lal Shimpi - Monday, August 31, 2009

If Intel would only let me do a deep dive on their controller, I'd be able to tell you :) There's more I'd like to say, but unfortunately I can't yet.

Take care,

Anand

shotage - Monday, August 31, 2009

Awesome article!

I'm intrigued by the cap on the sequential reads that Intel has on the G2 drives as well. I always thought it was strange to see, even on their first-gen stuff.

I'm assuming that this cap might be in place to somehow ensure the excellent performance they are giving with random reads/writes. That is, until TRIM finally shows up and you'll have to write up another full-on review (which I eagerly await!).

I can't wait to see what 2010 brings to the table. What with the next version of SATA and TRIM just over the horizon, I could finally get the kind of performance out of my PC that I want!!