Digging Deeper: Galloping Horses Example

Rather than pull out a bunch of math and traditional timing diagrams, we've decided to put together a more straight forward presentation. The diagrams we will use show the frames of an actual animation that would be generated over time as well as what would be seen on the monitor for each method. Hopefully this will help illustrate the quantitative and qualitative differences between the approaches.

Our example consists of a fabricated example (based on an animation example courtesy of Wikipedia) of a "game" rendering a horse galloping across the screen. The basics of this timeline are that our game is capable of rendering at 5 times our refresh rate (it can render 5 different frames before a new one gets swapped to the front buffer). The consistency of the frame rate is not realistic either, as some frames will take longer than others. We cut down on these and other variables for simplicity sake. We'll talk about timing and lag in more detail based on a 60Hz refresh rate and 300 FPS performance, but we didn't want to clutter the diagram too much with times and labels. Obviously this is a theoretical example, but it does a good job of showing the idea of what is happening.

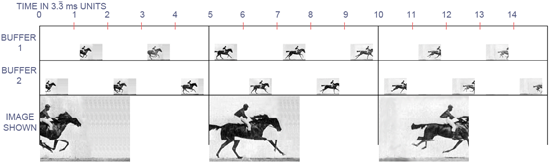

First up, we'll look at double buffering without vsync. In this case, the buffers are swapped as soon as the game is done drawing a frame. This immediately preempts what is being sent to the display at the time. Here's what it looks like in this case:

Good performance but with quality issues.

The timeline is labeled 0 to 15, and for those keeping count, each step is 3 and 1/3 milliseconds. The timeline for each buffer has a picture on it in the 3.3 ms interval during which the a frame is completed corresponding to the position of the horse and rider at that time in realtime. The large pictures at the bottom of the image represent the image displayed at each vertical refresh on the monitor. The only images we actually see are the frames that get sent to the display. The benefit of all the other frames are to minimize input lag in this case.

We can certainly see, in this extreme case, what bad tearing could look like. For this quick and dirty example, I chose only to composite three frames of animation, but it could be more or fewer tears in reality. The number of different frames drawn to the screen correspond to the length of time it takes for the graphics hardware to send the frame to the monitor. This will happen in less time than the entire interval between refreshes, but I'm not well versed enough in monitor technology to know how long that is. I sort of threw my dart at about half the interval being spent sending the frame for the purposes of this illustration (and thus parts of three completed frames are displayed). If I had to guess, I think I overestimated the time it takes to send a frame to the display.

For the above, FRAPS reported framerate would be 300 FPS, but the actual number of full images that get flashed up on the screen is always only a maximum of the refresh rate (in this example, 60 frames every second). The latency between when a frame is finished rendering and when it starts to appear on screen (this is input latency) is less than 3.3ms.

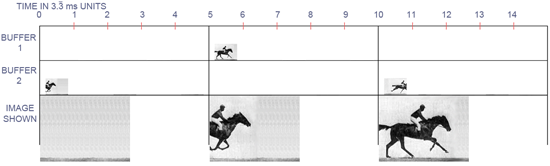

When we turn on vsync, the tearing goes away, but our real performance goes down and input latency goes up. Here's what we see.

Good quality, but bad performance and input lag.

If we consider each of these diagrams to be systems rendering the exact same thing starting at the exact same time, we can can see how far "behind" this rendering is. There is none of the tearing that was evident in our first example, but we pay for that with outdated information. In addition, the actual framerate in addition to the reported framerate is 60 FPS. The computer ends up doing a lot less work, of course, but it is at the expense of realized performance despite the fact that we cannot actually see more than the 60 images the monitor displays every second.

Here, the price we pay for eliminating tearing is an increase in latency from a maximum of 3.3ms to a maximum of 13.3ms. With vsync on a 60Hz monitor, the maximum latency that happens between when a rendering if finished and when it is displayed is a full 1/60 of a second (16.67ms), but the effective latency that can be incurred will be higher. Since no more drawing can happen after the next frame to be displayed is finished until it is swapped to the front buffer, the real effect of latency when using vsync will be more than a full vertical refresh when rendering takes longer than one refresh to complete.

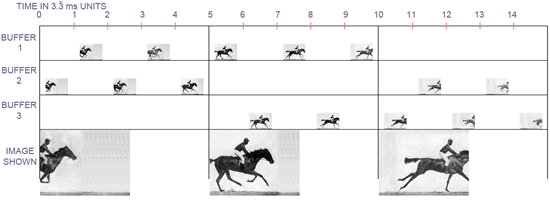

Moving on to triple buffering, we can see how it combines the best advantages of the two double buffering approaches.

The best of both worlds.

And here we are. We are back down to a maximum of 3.3ms of input latency, but with no tearing. Our actual performance is back up to 300 FPS, but this may not be reported correctly by a frame counter that only monitors front buffer flips. Again, only 60 frames actually get pasted up to the monitor every second, but in this case, those 60 frames are the most recent frames fully rendered before the next refresh.

While there may be parts of the frames in double buffering without vsync that are "newer" than corresponding parts of the triple buffered frame, the price that is paid for that is potential visual corruption. The real kicker is that, if you don't actually see tearing in the double buffered case, then those partial updates are not different enough than the previous frame(s) to have really mattered visually anyway. In other words, only when you see the tear are you really getting any useful new information. But how useful is that new information if it only comes with tearing?

184 Comments

View All Comments

oralpain - Saturday, June 27, 2009 - link

Even though I've been well aware of how triple buffering works, and how to enable it, I rarely use it.Even on my 60Hz LCDs, I usually have a better subjective experience with vsynch off. Not exactly sure why this is, but higher FSP, even if I'm not seeing the visual effects of it, is worth it over an elemination in the occasional tearing I notice.

In the handful of games where I do prefer vsych, I've always tried to use triple buffering.

billythefisherman - Saturday, June 27, 2009 - link

Ok triple buffering can undeniably offer benefits in certain situations but saying turn on triple buffering always gives you a better experience is nonsense.Take for example the case where you are running under 60hz in this case over an average amount of frames you'll experience exactly the same amount of lag as double buffering with vsync but now you have lost some video memory that could be used to hold that top level mip map your currently staring at and so see a lower quality picture at that point.

Another problem is that with tripple buffering your lag is unequally distributed because each frame takes a variable amount of time to create/render which could give a wierd feel to your play compared to what you otherwise maybe accustomed to - and it'll get worse with lower frame rates.

Another problem is that developers may take advantage of this lag on the CPU side of things if they've coded for vsync double buffered (which they invariably will do in most modern games) and know that on faster machine they may have more CPU resources so they may speed up the AI update or process more physics calculations with this left over CPU time.

Ok they may not but a game engine is not a straight forward simple system that runs everything on the GPU: it consists of many parts all working together to try to produce the lowest lag possible from input to output and a vsynced double buffered scenario provides the easiest environment to tune that system.

Its no where near as clear cut as this article makes out.

DerekWilson - Saturday, June 27, 2009 - link

This is definitely NOT true at all. you will, in fact, experience the same amount of lag as double buffering WITHOUT vsync. If you real performance is consistently 45 FPS every frame (each frame takes 22.2ms), in triple buffering and double buffering without vsync with both deliver 45 FPS with the same latency for the start of the displayed image. Average latency will be 1.5 frames. BUT double buffered WITH vsync will only give 30 FPS in this case average latency is 2 frames.

for triple buffering, lag is distributed the same as double buffering without vsync for the top of the displayed frame (above any tearing).

The CPU side of rendering for rendering's sake is no longer huge, especially with multicore CPUs. The way a developer handles work between frames won't be hampered on the CPU side by a high framerate unless they have done something wrong.

I intentionally kept this article simple in order to get the concept across and start talking about the subject. I could have included examples of things like 50 FPS, 45 FPS, and 20 FPS with all three page flipping techniques, but I felt it would just get in the way of itself by making the article unnecessarily longer and more complicated -- and all the examples deliver the same information: that triple buffering is equivalent in lag to double buffering without vsync for the top of the frame and the only time you see significant newer info in a double buffered no vsync situation is after a visible tear.

Developing /for/ the page flipping method is not the most desirable approach... Unless it's triple buffering :-)

billythefisherman - Thursday, July 9, 2009 - link

Example, your monitor is running at 60fps your graphics card is running at 45fps, as they are not in sync because of triple buffering for 2 out of 3 frames the monitor will be displaying the same frame, at best the user sees 40 new frames per second.Ok thats more frames but if your looking at what is arguably more important: the amount of lag between your input being sampled and the results being displayed then you see that your no better off.

For example lets assume your input on the game side is locked to the GPU which is typically the case in triple buffering or without vsync setup.

If the GPU is running at a constant 45fps you will see on the first frame 0 lag between the last frame being displayed. The last sample of your analogue input will be lets say for sake of simplicity ~16.667ms ago.

On the second frame the monitor will display the same frame becuase the GPU has finished rendering and so will be displaying input from ~33.334ms ago ie the frame will be now ~16.667ms old.

On the third frame the monitor will now display the first new frame rendered since the start which will now be 8.3335ms old (at constant 45fps) ie the input sampled is now ~25.00ms old.

With double buffered vsync on, your input on frame one will be 16.667ms old and on the second frame it will be 33.334ms old then on the third frame it repeats ie it will be 16.667ms old again etc.

Multiply this out over a 60fps ie 20*1667+20*33.334+20*25.002=~1500 and 30*16.667+30*33.334=~1500 and as you can see the lag between your input being sampled and it being displayed is on average the same.

All the game systems such as physics etc running on the CPU will have similar lag time characteristics - you won't see that much difference from frame to frame and now with triple buffering your sampling at uneven periods of time which could give undesirable effects.

billythefisherman - Thursday, July 9, 2009 - link

Sorry correction:...

On the second frame the monitor will display the same frame becuase the GPU *hasn't* finished rendering

...

Nighteye2 - Saturday, June 27, 2009 - link

It looks like Triple Buffering, while delivering good results, also involves a lot of excess rendering of frames that never get displayed.Unlike double buffering with vsync, where every rendered frame gets displayed.

It should be possible to get triple buffer performance with double buffering and vsync - by predicting how long it takes to render a frame (based on render time of the previous frame and a small margin), the computer could delay drawing the next frame instead of starting to draw it immediately. If the rendering of the frame gets finished just in time instead of shortly after the last refresh, it would eliminate the display lag.

DerekWilson - Saturday, June 27, 2009 - link

When framerate is less than 60 FPS, triple buffering doesn't spin off into oblivion doing work no one sees -- it maintains the same performance of double buffering without vsync but avoids tearing. predicting rendering time isn't a viable option at this point for games ...Nighteye2 - Saturday, June 27, 2009 - link

If it renders 300 frames and only 60 frames get shown, doesn't that mean 240 excess frames have been rendered?It would be better to conserve energy and have the GPU run less hot by rendering less frames, while still getting the exact same output on the screen...

Scalarscience - Saturday, June 27, 2009 - link

I'm late into this so I don't know if Derek (or anyone else) will get around to responding, but there's 2 things I thought I might bring up. I'll post the second as a separate post in case actual discussion ensues...First, the comments have established the differences between 'render ahead' & Double/Triple buffering in DirectX fairly well. But for the people who are actually trying this, the situation is imo potentially confusing. For instance, does forcing triple buffering+vsync via Rivatuner's utility (for games with no native implementation) still keep the default render-ahead setting (ie, 3 frames?) If so then this indeed is the source of a huge latency penalty.

Even with games that implement Triple Buffering themselves in DirectX, there seems to be some variance and it would be nice for devs to publish their implementation (Valve?) and how it interacts with the 'render ahead' control panel setting. I always find that for FPS (online or otherwise) setting the render ahead for DirectX to 2 instead of 3 helps the game's 'feel', though I do put it back to the default of 3 for single player games where the eye candy is making my machine struggle (and I'm willing to trade some performance for keeping the graphics cranked up.)

Now some games will have their OWN 'render ahead' implementation, like UT3 and other Unreal3 engine games. I've had to not only set 'render ahead' to 2 but also dig into UT3's ini and disable it's native 'one frame render queue' setting (or whatever it is.) The last major update did bring that into the GUI settings finally.

So the question there is how does the DirectX render queue & vsync + double/triple buffering interact? I'm guessing there's at least a few variations in that answer and I would love a discussion or article that begins with the early 3d games (Quake engine, Unreal engine then Source etc) and moves forward in time covering the mainstays in modern FPS games.

DerekWilson - Saturday, June 27, 2009 - link

Let me preface this with: I'm unsure what game developers actually do at this point. If there is enough interest for an article, I'll try and sit down with some game developers and ask them about this.But this is what they /should/ do when combining render ahead with triple buffering.

Start by rendering into the queue. Every vertical refresh, you send the oldest fully completed frame to the front buffer. If you fill up the queue before the next vertical refresh, drop the oldest frame and start rendering another newer one. Continue this until the next vertical refresh comes along.

The game always renders to whatever buffer is marked current, and front buffer is always swapped with the buffer marked oldest.

You still end up with a high potential latency of (16.67ms * queue_length) but depending on how the game handles it, this could potentially only happen when frametime >= (16.67ms * queue_lenght) anyway. The minimum latency in this case is longer than without the render ahead queue as well ...

but there could be some flexibility in maintaining a minimum number of frames in the queue or even keeping it full until frametime severely dips ... there might be some ways to use this to help SLI/CF play nicer with triple buffering as well. Not that multiGPU needs anything to add more potential lag or anything ...