The SSD Anthology: Understanding SSDs and New Drives from OCZ

by Anand Lal Shimpi on March 18, 2009 12:00 AM EST, posted in Storage

The Trim Command: Coming Soon to a Drive Near You

We run into these problems primarily because the drive doesn’t know when a file is deleted, only when one is overwritten. Thus we pay a performance penalty when writing a new file in exchange for lightning-quick deletions. The latter doesn’t really matter though, now does it?

There’s a command you may have heard of called TRIM. The command would require proper OS and drive support, but with it you could effectively let the OS tell the SSD to wipe invalid pages before they are overwritten.

The process works like this:

First, a TRIM-supporting OS (e.g. Windows 7 will support TRIM at some point) queries the drive for its rotational speed. If the drive reports that it doesn’t spin at all, the OS knows it’s an SSD and turns off features like defrag. It also enables the use of the TRIM command.
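The detection step can be sketched as a small policy function. This is a minimal sketch, not Windows 7’s actual logic: `DriveReport`, its fields, and `choose_features` are hypothetical names, and it assumes the drive’s self-report has already been reduced to two facts: whether the medium rotates, and whether the firmware accepts TRIM (which, as noted above, the drive must also support).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriveReport:
    """Hypothetical summary of what a drive reports about itself to the OS."""
    rotating_media: bool  # False when the drive reports it doesn't spin (an SSD)
    supports_trim: bool   # whether the drive's firmware accepts the TRIM command


def choose_features(report: DriveReport) -> dict:
    """Decide which storage features a TRIM-aware OS turns on (sketch only)."""
    is_ssd = not report.rotating_media
    return {
        # Once the OS knows the drive is an SSD, it turns defrag off.
        "defrag": not is_ssd,
        # TRIM needs both an SSD and firmware that understands the command.
        "trim": is_ssd and report.supports_trim,
    }


if __name__ == "__main__":
    hdd = DriveReport(rotating_media=True, supports_trim=False)
    ssd = DriveReport(rotating_media=False, supports_trim=True)
    print(choose_features(hdd))  # {'defrag': True, 'trim': False}
    print(choose_features(ssd))  # {'defrag': False, 'trim': True}
```

Keeping the TRIM decision separate from the SSD check reflects the point that both OS and drive support are required.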

When you delete a file, the OS sends a TRIM command to the SSD controller for the LBAs covered by the file. The controller then copies the block to cache, wipes the deleted pages, and writes the new block, with its freshly cleaned pages, back to the drive.

Now when you go to write a file to that block you’ve got empty pages to write to and your write performance will be closer to what it should be.
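The controller-side steps can be modeled with a toy flash block. This is a simplified sketch under stated assumptions, not any vendor’s firmware: `FlashBlock`, `write`, and `trim` are hypothetical names, a page is either clean (`None`) or holds one `(LBA, data)` pair, and still-valid pages are written back to their original positions.

```python
from typing import List, Optional, Set, Tuple

# A page is either clean/erased (None) or holds one (LBA, data) pair.
Page = Optional[Tuple[int, str]]


class FlashBlock:
    """Toy flash block: a page can only be programmed while clean, and the
    only way to clean pages is to erase the whole block."""

    def __init__(self, num_pages: int) -> None:
        self.pages: List[Page] = [None] * num_pages
        self.erases = 0  # number of whole-block erase operations performed

    def _erase(self) -> None:
        self.pages = [None] * len(self.pages)
        self.erases += 1

    def write(self, lba: int, data: str) -> None:
        """Program the first clean page; this toy refuses if none is left."""
        for i, page in enumerate(self.pages):
            if page is None:
                self.pages[i] = (lba, data)
                return
        raise RuntimeError("no clean page: a read-erase-rewrite cycle would be needed")

    def trim(self, lbas: Set[int]) -> None:
        """Handle a TRIM for `lbas` following the steps in the text."""
        cache = list(self.pages)          # 1. copy the block to cache
        self._erase()                     # 2. erase it, so every page is clean
        for i, page in enumerate(cache):  # 3. write back only pages still in use
            if page is not None and page[0] not in lbas:
                self.pages[i] = page


if __name__ == "__main__":
    block = FlashBlock(num_pages=5)
    block.write(0, "text file")
    block.write(1, "jpeg, first half")
    block.write(2, "jpeg, second half")
    block.trim({0})                      # the text file is deleted
    print(block.pages[0], block.erases)  # None 1
    block.write(3, "new file")           # reuses the freshly cleaned page
    print(block.erases)                  # 1: no erase during the write itself
```

The erase happens inside `trim`, right after the delete, so the later `write` needs none; that is the trade the text describes.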

In our example from earlier, here’s what would happen if our OS and drive supported TRIM:

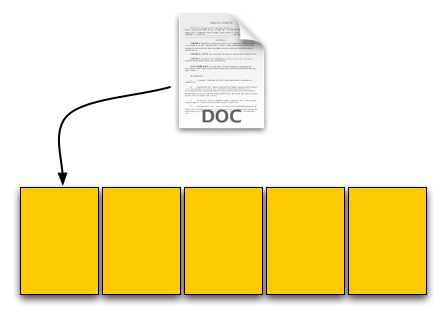

Our user saves his 4KB text file, which gets put in a new page on a fresh drive. No differences here.

Next was an 8KB JPEG. Two pages allocated; again, no differences.

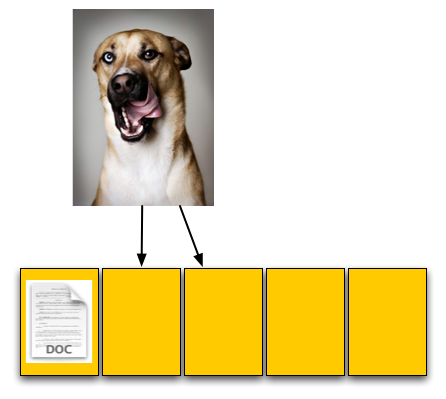

The third step was deleting the original 4KB text file. Since our drive now supports TRIM, when this deletion request comes down, the drive will actually read the entire block, remove the first LBA and write the new block back to the flash.

The TRIM command forces the block to be cleaned before our final write. There's additional overhead, but it happens after a delete and not during a critical write.

Our drive is now at 40% capacity, just like the OS thinks it is. When our user goes to save his 12KB JPEG, the write goes at full speed. Problem solved. Well, sorta.
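The arithmetic behind this walkthrough can be checked with a small page-counting sketch. The 4KB page size comes from the example; the five-page block is an assumption inferred from the 40% figure (8KB is 40% of 20KB, i.e. five 4KB pages), and `simulate` is a hypothetical helper that only counts the pages the OS and the drive each believe are in use.

```python
import math

PAGE_KB = 4      # page size used throughout the example
BLOCK_PAGES = 5  # assumption: 8KB being 40% of capacity implies 20KB = five pages


def pages_for(size_kb: int) -> int:
    """Number of 4KB pages a file of size_kb occupies."""
    return math.ceil(size_kb / PAGE_KB)


def simulate(trim_enabled: bool) -> dict:
    """Replay the four steps, counting pages the OS and the drive consider in use."""
    os_used = 0
    drive_used = 0

    # Steps 1 and 2: save the 4KB text file and the 8KB JPEG.
    for size_kb in (4, 8):
        os_used += pages_for(size_kb)
        drive_used += pages_for(size_kb)

    # Step 3: delete the text file. The OS frees the page either way;
    # the drive only learns the page is invalid if a TRIM is sent.
    os_used -= pages_for(4)
    if trim_enabled:
        drive_used -= pages_for(4)

    # Step 4: the 12KB JPEG runs at full speed only if enough clean pages exist.
    clean_pages = BLOCK_PAGES - drive_used
    return {
        "os_capacity_pct": 100 * os_used // BLOCK_PAGES,
        "drive_capacity_pct": 100 * drive_used // BLOCK_PAGES,
        "full_speed_write": pages_for(12) <= clean_pages,
    }


if __name__ == "__main__":
    print(simulate(trim_enabled=True))
    # {'os_capacity_pct': 40, 'drive_capacity_pct': 40, 'full_speed_write': True}
    print(simulate(trim_enabled=False))
    # {'os_capacity_pct': 40, 'drive_capacity_pct': 60, 'full_speed_write': False}
```

Without TRIM the drive still counts the deleted text file’s page, so it sees 60% usage and only two clean pages for a three-page write; with TRIM its view matches the OS at 40%.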

While the TRIM command will alleviate the problem, it won’t eliminate it. The TRIM command can’t be invoked when you’re simply overwriting a file, for example when you save changes to a document. In those situations you’ll still have to pay the performance penalty.

Every controller manufacturer I’ve talked to intends to support TRIM whenever there’s an OS that takes advantage of it. The big unknown is whether or not current drives will be firmware-upgradeable to support TRIM, as no manufacturer has a clear firmware upgrade strategy at this point.

I expect that whenever Windows 7 supports TRIM we’ll see a new generation of drives with support for the command. Whether or not existing drives will be upgraded remains to be seen, but I’d highly encourage it.

To the manufacturers making these drives: your customers buying them today at exorbitant prices deserve your utmost support. If it’s possible to enable TRIM on existing hardware, you owe it to them to offer the upgrade. Their gratitude would most likely be expressed by continuing to purchase SSDs and encouraging others to do so as well. Upset them, and you’ll simply be delaying the migration to solid state storage.

250 Comments

zdzichu - Sunday, March 22, 2009

Very nice and thorough article. The only thing I missed is the current status of TRIM support in today's operating systems. For example, Linux has supported it since last year: http://kernelnewbies.org/Linux_2_6_28#head-a1a9591...

Sinned - Sunday, March 22, 2009

Outstanding article that really helped me understand SSD drives. I wonder how much of an impact the new SATA III standard will have on SSD drives. I believe we are still at the beginning stage for SSD drives, and your article shows that much more work needs to be done. I respect OCZ, and the positive and productive way they responded should be a model for the rest of the SSD makers. Thank you again for such a concise article.

Respectfully,

Sinned

529th - Sunday, March 22, 2009

The first thing I thought of was Democracy. Don't know why. Maybe it was because a company listened to our common goal of performance. Thank you OCZ for listening, I'm sure it will pay off!!!

araczynski - Saturday, March 21, 2009

very nice read. the 4/512 issue seems a rather stupid design decision, or perhaps, more likely, a stupid decision to find this 4/512 solution 'acceptable'. although it's a great marketing choice: built-in automatic life expectancy reduction.

sounds like the manufacturers want the hard drives to become a disposable medium like styrofoam cups.

perhaps when they narrow the disparity down to 4/16, i might consider buying an ssd. that, or when they beat the 'old school' physical platters in price.

until then, get back to the drawing board and stop crapping out these half arsed 'should be good enough' solutions.

IntelUser2000 - Sunday, March 22, 2009

araczynski: The 4/512 isn't done by accident. It's done to lower prices. The flash technology used in SSDs is meant to replace platter HDDs in the future. There's no way of doing that without cost reductions like these. Even with that, SSDs still cost several times more per unit of storage.

araczynski - Tuesday, March 24, 2009

i understand that, but i don't remember original hard drives being released and being slower than the floppy drives they were replacing. this is part of the 'release beta products' mentality that makes the consumer pay for further development.

the 5.25" floppy was better than the huge floppy in all respects when it was released. the 3.5" floppy was better than the 5.25" floppy when it was released. the usb flash drives were better than the 3.5" floppies when they were released.

i just hate the way this is being played out at the consumer's expense.

hellcats - Saturday, March 21, 2009

Anand,

What a great article. I usually have to skip forward when things bog down, but they never did in this long but very informative article. Your focus on what matters to users is why I always check AnandTech first thing every morning.

juraj - Saturday, March 21, 2009

I'm curious what capacity the reviewed OCZ Vertex drive is. Is it a 120/250GB drive or one of the supposedly slower 30/60GB ones?

Symbolics - Friday, March 20, 2009

The method for generating "used" drives is flawed. For creating a true used drive, the spare blocks must be filled as well. Since this was not done, the results are biased towards the Intel drives with their generous amount of spare blocks that were *not* exhausted when producing the used state. An additional bias is introduced by the reduction of the IOmeter write test to 8 GB only. Perhaps there are enough spare blocks on the Intel drives so that these 8 GB can be written to "fresh" blocks without the need for (time-consuming) erase operations.

Apart from these concerns, I enjoyed reading the article.

unknownError - Saturday, March 21, 2009

I also just created an account to post: very nice article! Lots of good, well-thought-out information. I'm so tired of synthetic benchmarks; glad someone goes through the trouble to bench these things right (and appears to have the education to really understand them). What's with the grammar police though? geez...