The SSD Anthology: Understanding SSDs and New Drives from OCZ

by Anand Lal Shimpi on March 18, 2009 12:00 AM EST- Posted in

- Storage

Random Read/Write Performance

Arguably much more important to any PC user than sequential read/write performance is random access performance. It's not often that you're writing large files sequentially to your disk, but you do encounter tons of small file reads/writes as you use your PC.

To measure random read/write performance I created an iometer script that peppered the drive with random requests, with an IO queue depth of 3 (to add some multitasking spice to the test). The write test was performed over an 8GB range on the drive, while the read test was performed across the whole drive. I ran the test for 3 minutes.

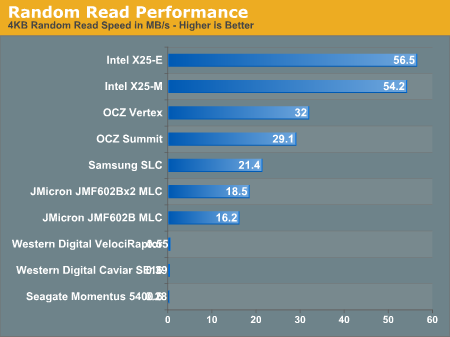

The three hard drives all posted scores below 1MB/s and thus aren't visible on our graph above. This is where SSDs shine and no hard drive, regardless of how many you RAID together, can come close.

The two Intel drives top the charts and maintain a huge lead. The OCZ Vertex actually beats out the more expensive (and unreleased) Summit drive with a respectable 32MB/s transfer rate here. Note that the Vertex is also faster than last year's Samsung SLC drive that everyone was selling for $1000. Even the JMicron drives do just fine here.

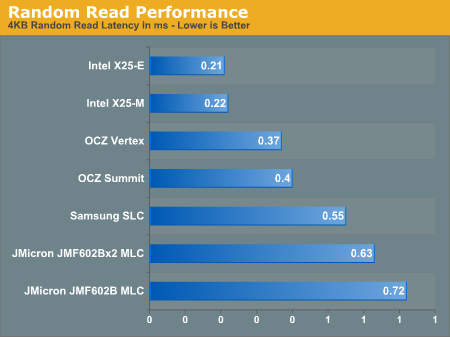

If we look at latency instead of transfer rate it helps put things in perspective:

Read latencies for hard drives have always been measured in several ms, but every single SSD here manages to complete random reads in less than 1ms under load.

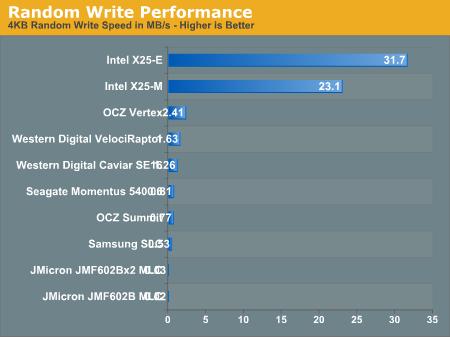

Random write speed is where we can thin the SSD flock:

Only the Intel drives and to an extent, the OCZ Vertex, post numbers visible on this scale. Let's go to a table to see everything in greater detail:

| 4KB Random Write Speed | |

| Intel X25-E | 31.7 MB/s |

| Intel X25-M | 23.1 MB/s |

| JMicron JMF602B MLC | 0.02 MB/s |

| JMicron JMF602Bx2 MLC | 0.03 MB/s |

| OCZ Summit | 0.77 MB/s |

| OCZ Vertex | 2.41 MB/s |

| Samsung SLC | 0.53 MB/s |

| Seagate Momentus 5400.6 | 0.81 MB/s |

| Western Digital Caviar SE16 | 1.26 MB/s |

| Western Digital VelociRaptor | 1.63 MB/s |

Every single drive other than the Intel X25-E, X25-M and OCZ's Vertex is slower than the 2.5" Seagate Momentus 5400.6 hard drive in this test. The Vertex, thanks to OCZ's tweaks, is now 48% faster than the VelociRaptor.

The Intel drives are of course architected for the type of performance needed on a desktop/notebook and thus they deliver very high random write performance.

Random write performance is merely one corner of the performance world. A drive needs good sequential read, sequential write, random read and random write performance. The fatal mistake is that most vendors ignore random write performance and simply try to post the best sequential read/write speeds; doing so simply produces a drive that's undesirable.

While the Vertex is slower than Intel's X25-M, it's also about half the price per GB. And note that the Vertex is still 48% faster than the VelociRaptor here, and multiple times faster in the other tests.

250 Comments

View All Comments

sbuckler - Wednesday, March 18, 2009 - link

Depends on how smart the controller is? Shuffling around the data now and again in the background would make sense.Frallan - Wednesday, March 18, 2009 - link

@AT

This is why i come to AT to read up on the developments.

@OCZ

Well played :0)

The ruler of the roost are the Intels however I will be able to afford one of those when there are cows enjoying themselfs by dancing on the moon. My next upgrade will be a Vertex - not only bc its Valu for money but equally much bc. OCZ obviously takes care of thier customers and listens to reason.

pmonti80 - Wednesday, March 18, 2009 - link

This is the kind of article that makes me come back here.nowayout99 - Wednesday, March 18, 2009 - link

OCZ, you should listen to Uncle Anand. ;) Hopefully Mr. Petersen understands that it's tough love.And the final product seems perfectly cool -- great performance at a better price than Intel. It's the first SSD I'd be able to reasonably consider.

SOLIDNecro - Wednesday, March 18, 2009 - link

Thx for this article Anand, I have been in a hotly contested debate over OCZ vs Samsung with my "Asperger Enhanced" nemisis/close friend...(In all fairness, I should mention I use the BiPolar SSE instruction set myself)

He was only looking at Samsung, I said he should look into what OCZ has now.

His reply was "I don't know them, and don't want to be disapointed"

(Long story behind that...He's from the Server/Workstain/HPC crowd, I am from the hardcore OC/Gamer/Desktop group, so he is not familiar with OCZ)

Looks like the Samsung (And alot of others) has "Issues" with performance degrading over time that are somewhat solved by Intel and OCZ (Plus maybe a few other companies that use the Rev B JMicron controller on there low cost SSD's)

I agree the OCZ Vertex offers the best bang for low buck SSD today, and I am tempted to grab one. But a year from now, anyone that bought a current gen MLC SSD will be saying "I coulda had a V-8" if that TRIM technology does what it promises!!!

James5mith - Wednesday, March 18, 2009 - link

As people continue to try and push the envelope of storage performance in a variety of ways, and as 6gbps SATA becomes available, the performance of SSD's will only go up.As always, I wanted to say thanks for the great article and keep them coming. It's the only way the rest of us can keep pace with what's happening out there in the world of performance storage.

vailr - Wednesday, March 18, 2009 - link

Is there any benefit in using 2 SSD's in a Raid 0 configuration?And: any differences between motherboard Intel Raid vs. a Raid controller card from Areca, for example. Also: can the "Trim" command work while in Raid mode? Probably not, I'm guessing...

7Enigma - Thursday, March 19, 2009 - link

Raid0 is really the holy grail for SSD's. The low risk of failure of SSD's which normally makes Raid0 with typical mechanical HD's more dangerous is very appealing. My personal storage-size goal is ~120-160gigs. Once they reach that size for under $300 I think I'm going to jump in. But I'm more likely to grab 2 60's or 2 80's and Raid0 them than get a single large SSD. The added performance will outweigh the higher power draw of 2 drives, and should make them extremely competitive with Intel's offerings (or whatever holds the crown at the time).I figure it will be about a year or so until the prices are in that range, as 2 60gig Vertex drives will currently run you about $400 after rebate.

I can't wait to jump on that upgrade and will then put my current 250gig mechanical drive as the storage drive (I don't use a ton of space in general as I have a 320gig external backup).

Rasterman - Thursday, March 19, 2009 - link

The problem with doing that is if you want to move your drives to another system they won't work, so upgrading is a pain. You could image them I guess, but plugging one drive in is much simpler. I had an older XP install that made it through 3-4 different systems.I would also question real world results, if you're going at 250MB/s or 500MB/s its not even going to be noticeable unless you are doing some massive video editing or some other huge file operations, and as Anand says, SSDs don't fill this role right now as they are super expensive per GB. So if you really are editing video a lot, you are going to need a hell of a lot more space than SSDs can offer you.

Gasaraki88 - Friday, March 20, 2009 - link

RAID is a universal standard so if you take two RAID0 drives out and move them to another computer with a RAID controller, it SHOULD just work if the original RAID was doing it correctly.