The SSD Anthology: Understanding SSDs and New Drives from OCZ

by Anand Lal Shimpi on March 18, 2009 12:00 AM EST. Posted in Storage.

The Trim Command: Coming Soon to a Drive Near You

We run into these problems primarily because the drive doesn’t know when a file is deleted, only when one is overwritten. We keep lightning quick deletion speeds, but we pay for them with lost performance when we go to write a new file. The former doesn’t really matter though, now does it?

There’s a command you may have heard of called TRIM. The command would require proper OS and drive support, but with it you could effectively let the OS tell the SSD to wipe invalid pages before they are overwritten.

The process works like this:

First, a TRIM-supporting OS (e.g. Windows 7 will support TRIM at some point) queries the drive for its rotational speed. If the drive reports that it has no rotating media (effectively 0 RPM), the OS knows it’s an SSD and turns off features like defrag. It also enables the use of the TRIM command.

When you delete a file, the OS sends a TRIM command for the LBAs covered by the file to the SSD controller. The controller will then copy the block to cache, wipe the deleted pages, and write the new block with freshly cleaned pages to the drive.

Now when you go to write a file to that block you’ve got empty pages to write to and your write performance will be closer to what it should be.
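The three controller steps above can be sketched in a few lines of Python. This is only a toy model, not how any real controller firmware works: the block is a list of pages, each page holds the LBA stored in it (or `None` if it is empty), and the function name `trim_block` and the LBA numbers are made up for illustration.

```python
from typing import List, Optional, Set

def trim_block(block: List[Optional[int]], trimmed_lbas: Set[int]) -> List[Optional[int]]:
    """Return the contents of a block after a TRIM for the given LBAs.

    Hypothetical model: each entry is the LBA stored in that page,
    or None when the page is empty.
    """
    # 1. Copy the whole block into the controller's cache.
    cache = list(block)
    # 2. Wipe the pages that belong to the deleted (trimmed) LBAs.
    cache = [None if lba in trimmed_lbas else lba for lba in cache]
    # 3. The flash block is erased and the cleaned copy is written back;
    #    here that is modeled by returning the cleaned copy.
    return cache

# A block holding LBAs 10, 11 and 12, with two pages still empty.
block = [10, 11, 12, None, None]
print(trim_block(block, {10}))  # [None, 11, 12, None, None]
```

The page that held the deleted LBA comes back empty, so a later write to this block finds three clean pages instead of two.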

In our example from earlier, here’s what would happen if our OS and drive supported TRIM:

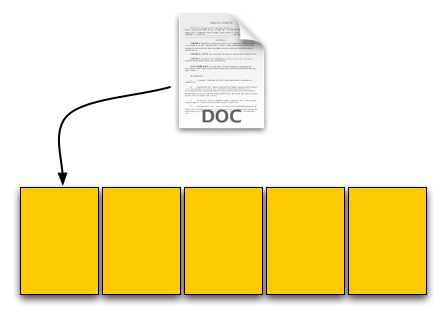

Our user saves his 4KB text file, which gets put in a new page on a fresh drive. No differences here.

Next was an 8KB JPEG. Two pages allocated; again, no differences.

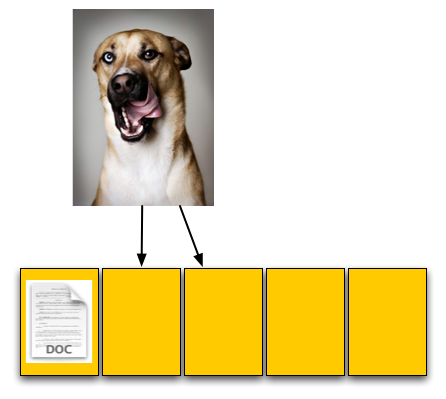

The third step was deleting the original 4KB text file. Since our drive now supports TRIM, when this deletion request comes down the drive will actually read the entire block, remove the first LBA and write the new block back to the flash.

The TRIM command forces the block to be cleaned before our final write. There's additional overhead but it happens after a delete and not during a critical write.

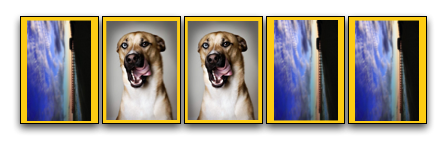

Our drive is now at 40% capacity, just like the OS thinks it is. When our user goes to save his 12KB JPEG, the write goes at full speed. Problem solved. Well, sorta.
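To put numbers on that last write, here is a rough Python sketch comparing the 12KB save with and without TRIM. It assumes 4KB pages and a drive made of a single 5-page (20KB) block, which is what the 40% figure above implies. The function `write_cost` and its "live" and "stale" page counts are illustrative names only; a stale page is one the OS has deleted but the drive still believes is in use.

```python
import math

PAGE_KB = 4          # assumed page size
PAGES_PER_BLOCK = 5  # assumed: one 20KB block, so 8KB of data is 40% full

def write_cost(file_kb: int, live_pages: int, stale_pages: int) -> dict:
    """Estimate the work needed to write file_kb into a single block."""
    needed = math.ceil(file_kb / PAGE_KB)
    free = PAGES_PER_BLOCK - live_pages - stale_pages
    if needed <= free:
        # Enough clean pages: write the new data and nothing else.
        return {"read_kb": 0, "erases": 0, "written_kb": needed * PAGE_KB}
    if needed > free + stale_pages:
        raise ValueError("not enough room in the block even after cleaning")
    # Read-modify-write: read every occupied page into cache, erase the
    # block, then write back the live pages plus the new data.
    occupied = live_pages + stale_pages
    return {
        "read_kb": occupied * PAGE_KB,
        "erases": 1,
        "written_kb": (live_pages + needed) * PAGE_KB,
    }

# After the delete: the 8KB JPEG is live; the 4KB text page is either
# already cleaned (TRIM) or still stale (no TRIM).
print(write_cost(12, live_pages=2, stale_pages=0))  # {'read_kb': 0, 'erases': 0, 'written_kb': 12}
print(write_cost(12, live_pages=2, stale_pages=1))  # {'read_kb': 12, 'erases': 1, 'written_kb': 20}
```

In this model the TRIM case writes exactly the 12KB that was asked for, while the no-TRIM case has to read 12KB, erase the block, and write 20KB during the save itself.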

While the TRIM command will alleviate the problem, it won’t eliminate it. The TRIM command can’t be invoked when you’re simply overwriting a file, for example when you save changes to a document. In those situations you’ll still have to pay the performance penalty.

Every controller manufacturer I’ve talked to intends to support TRIM whenever there’s an OS that takes advantage of it. The big unknown is whether or not current drives will be firmware-upgradeable to support TRIM, as no manufacturer has a clear firmware upgrade strategy at this point.

I expect that whenever Windows 7 supports TRIM we’ll see a new generation of drives with support for the command. Whether or not existing drives will be upgraded remains to be seen, but I’d highly encourage it.

To the manufacturers making these drives: your customers buying them today at exorbitant prices deserve your utmost support. If it’s possible to enable TRIM on existing hardware, you owe it to them to offer the upgrade. Their gratitude would most likely be expressed by continuing to purchase SSDs and encouraging others to do so as well. Upset them, and you’ll simply be delaying the migration to solid state storage.

250 Comments


SkullOne - Wednesday, March 18, 2009

Fantastic article. Definitely one of the best I've read in a long time. Incredibly informative. Everyone who reads this article is a little bit smarter afterwards.

All the great information about SSDs aside, I think the best part though is how OCZ is willing to take blame for failure earlier and fix the problems. Companies like that are the ones who will get my money in the future especially when it is time for me to move from HDD to SSD.

Apache2009 - Wednesday, March 18, 2009

I got one Vertex SSD. Why does suspend cause a system halt? My laptop has an nVidia chipset and it works fine with an HDD. Does anybody know?

MarcHFR - Wednesday, March 18, 2009

Hi, you wrote that there is spare area on the X25-M:

"Intel ships its X25-M with 80GB of MLC flash on it, but only 74.5GB is available to the user"

That's a mistake. 80GB of flash looks like 74.5GB to the user because 80,000,000,000 bytes of flash is 74.5GB from the user's point of view (with 1 KB = 1024 bytes).

You didn't point out the other problem of the X25-M: LBA "optimisation". After a lot of random write I/O, sequential write speed can drop to only 10 MB/s :/

Kary - Thursday, March 19, 2009

The extra space would be invisible to the end user (it is used internally).

Also, addressing is normally done in binary, so actual sizes are typically in binary in memory devices (flash, RAM...):

64gb

128gb

80 GB...not compatible with binary addressing

(though 48GB of a 128GB drive being used for this seems pretty high)

ssj4Gogeta - Wednesday, March 18, 2009

Did you bother reading the article? He pointed out that you can get any SSD (NOT just Intel's) stuck into a situation when only a secure erase will help you out. The problem is not specific to Intel's SSD, and it doesn't occur during normal usage.

MarcHFR - Wednesday, March 18, 2009

The problem I've pointed out has nothing to do with the performance degradation related to writes on a filled page; it's a performance degradation related to an LBA optimisation that is specific to Intel's SSD.

VaultDweller - Wednesday, March 18, 2009

So where would Corsair's SSD fit into this mix? It uses a Samsung MLC controller... so would it be comparable to the OCZ Summit? I would expect not since the rated sequential speeds on the Corsair are tremendously lower than the Summit, but the Summit is the closest match in terms of the internals.

kensiko - Wednesday, March 18, 2009

No, OCZ Summit = newest Samsung controller. The Corsair uses the previous controller, with lower performance.

VaultDweller - Wednesday, March 18, 2009

So what's the difference?

The Summit is optimized for sequential performance at the cost of random I/O, as per the article. That is clearly not the case with the Corsair drive, so how does the Corsair hold up in terms of random I/O? That's what I'm interested in, since the sequential on the Corsair is "fast enough" if the random write performance is good.

jatypc - Wednesday, March 18, 2009

A detailed description of how SSDs operate makes me wonder: imagine, hypothetically, that I have an SSD that is more than 90% full (e.g., 95%), and that 90% is read-only data (or almost read-only, such as exe and other application files). The remaining 10% is free or frequently written to (e.g., a page/swap file). Then using the drive results, from what I understood in the article, in very fast aging of that 10% of the SSD because the other 90% is occupied by read-only stuff. If the disk in question is, for instance, 32GB, that 10% is 3.2GB (e.g., the size of a typical swap file), and after writing it approx. 10,000 times, that part of the disk would be dead. Being occupied by a swap file, this number of reads/writes can be reached in one or two years... Am I right?