The SSD Anthology: Understanding SSDs and New Drives from OCZ

by Anand Lal Shimpi on March 18, 2009 12:00 AM EST. Posted in Storage

New vs Used SSD Performance

We begin our look at how the overhead of managing pages impacts SSD performance with iometer. The table below shows iometer random write performance; there are two columns for each drive, one for “new” performance after a secure erase and one for “used” performance after the drive has been well used.

| 4KB Random Write Speed | New | "Used" |
| --- | --- | --- |
| Intel X25-E | 31.7 MB/s | |
| Intel X25-M | 39.3 MB/s | 23.1 MB/s |
| JMicron JMF602B MLC | 0.02 MB/s | 0.02 MB/s |
| JMicron JMF602Bx2 MLC | 0.03 MB/s | 0.03 MB/s |
| OCZ Summit | 12.8 MB/s | 0.77 MB/s |
| OCZ Vertex | 8.2 MB/s | 2.41 MB/s |
| Samsung SLC | 2.61 MB/s | 0.53 MB/s |
| Seagate Momentus 5400.6 | 0.81 MB/s | - |
| Western Digital Caviar SE16 | 1.26 MB/s | - |
| Western Digital VelociRaptor | 1.63 MB/s | - |

Note that the “used” performance should be the slowest you’ll ever see the drive get. In theory, all of the pages are filled with some sort of data at this point.

All of the drives, with the exception of the JMicron based SSDs, went down in performance in the “used” state. And the only reason the JMicron drives didn’t get any slower is that they are already bottlenecked elsewhere; you can’t get much slower than 0.03MB/s in this test.

These are pretty serious performance drops; the OCZ Vertex runs at less than a third of its original speed after it’s been used, and Intel’s X25-M can only crunch through about 60% of the IOs per second that it did when brand new.
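To make those ratios concrete, here is a minimal sketch that computes how much of its new-state throughput each drive keeps once used. The figures are copied from the 4KB random write table above; the dictionary and function names are only illustrative.

```python
# 4KB random write throughput in MB/s as (new, used), copied from the table above.
RANDOM_WRITE_MBPS = {
    "Intel X25-M": (39.3, 23.1),
    "OCZ Summit": (12.8, 0.77),
    "OCZ Vertex": (8.2, 2.41),
    "Samsung SLC": (2.61, 0.53),
}


def retained_fraction(new_mbps, used_mbps):
    """Fraction of new-state throughput that survives in the used state."""
    return used_mbps / new_mbps


for drive, (new, used) in RANDOM_WRITE_MBPS.items():
    print(f"{drive}: {retained_fraction(new, used):.0%} of new performance")
```

Since every request in this test is the same 4KB size, the throughput ratio is also the ratio of IOs per second.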

So are SSDs doomed? Is performance going to tank over time and make these things worthless?

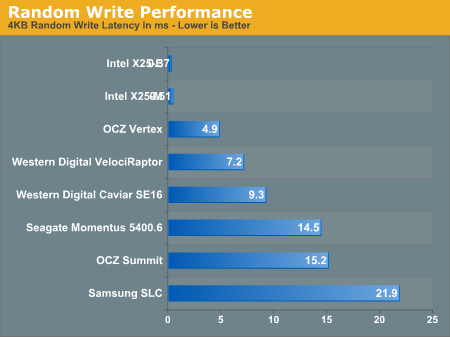

"Used" SSD performance vs. conventional hard drives.

Pay close attention to the average write latency in the graph above. While Intel’s X25-M normally pulls an extremely fast sub-0.3ms write latency, it levels off at 0.51ms in its used state. The OCZ Vertex manages 1.43ms new and 4.86ms used. There’s additional overhead for every write, but a well designed SSD will still manage extremely low write latencies. To put things in perspective, look at these drives at their worst compared to Western Digital’s VelociRaptor. Even with degraded performance, the X25-M still completes write requests in around 1/8 the time of the VelociRaptor. Transfer speeds are still 8x higher as well.

Note that not all SSDs see their performance drop gracefully. The two Samsung based drives perform more like hard drives here, but I'll explain that tradeoff much later in this article.

How does this all translate into real world performance? I ran PCMark Vantage on the drives in both their new and used states to see how performance changed.

| PCMark Overall Score | New | "Used" | % Drop |
| --- | --- | --- | --- |
| Intel X25-M | 11902 | 11536 | 3% |
| OCZ Summit | 10972 | 9916 | 9.6% |
| OCZ Vertex | 11253 | 9836 | 14.4% |
| Samsung SLC | 10143 | 9118 | 10.1% |
| Seagate Momentus 5400.6 | 6817 | - | - |
| Western Digital VelociRaptor | 7500 | - | - |

The real world performance hit varies from 3 - 14% depending on the drive. While the drives are still faster than a regular hard drive, performance does drop in the real world by a noticeable amount. The TRIM command would keep the drive’s performance closer to its peak for longer, but it would not have prevented this from happening.

| PCMark Vantage HDD Test | New | "Used" | % Drop |
| --- | --- | --- | --- |
| Intel X25-M | 29879 | 23252 | 22% |
| JMicron JMF602Bx2 MLC | 11613 | 11283 | 3% |
| OCZ Summit | 25754 | 16624 | 36% |
| OCZ Vertex | 20753 | 17854 | 14% |
| Samsung SLC | 17406 | 12392 | 29% |
| Seagate Momentus 5400.6 | 3525 | - | - |
| Western Digital VelociRaptor | 6313 | - | - |

HDD specific tests show much more severe drops, reaching 22 - 36% on the Intel, Samsung and OCZ Summit drives. Despite the performance drop, these drives are still much faster than even the fastest hard drives.

250 Comments


Kary - Thursday, March 19, 2009

Why use TRIM at all?!?!?

If you have extra blocks on the drive (NOT PAGES, FULL BLOCKS), then there is no need for the TRIM command.

1) Currently in use BLOCK is half full

2) More than half a block needs to be written

3) Extra BLOCK is mapped into the system

4) Original/half full block is mapped out of the system... can be erased during idle time.

You could even bind multiple contiguous blocks this way (I assume that it is possible to simultaneously erase any of the internal groupings of pages from blocks on up... they probably share address lines, e.g. erase 0000200 -> just erase block #200, erase 00002*0 -> erase blocks 200 to 290... BTW, I did the addressing in base ten instead of binary just to simplify for some :)
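The four steps in this comment can be sketched as a toy mapping table. This is only an illustration of the idea as described, not how any shipping controller works; the class and method names are hypothetical, and the actual copying of data is left out.

```python
class RemappingDrive:
    """Toy model: logical blocks map to physical blocks; spares absorb rewrites."""

    def __init__(self, logical_blocks, spare_blocks):
        # Logical block i starts out on physical block i.
        self.mapping = {i: i for i in range(logical_blocks)}
        # Pre-erased physical blocks that are not visible to the host.
        self.spares = list(range(logical_blocks, logical_blocks + spare_blocks))
        # Mapped-out blocks waiting to be erased during idle time.
        self.pending_erase = []

    def rewrite_block(self, logical):
        """Redirect a rewrite to a spare block instead of erasing in place."""
        if not self.spares:
            raise RuntimeError("no erased spare blocks left; must erase first")
        old_physical = self.mapping[logical]
        self.mapping[logical] = self.spares.pop(0)  # step 3: map the spare in
        self.pending_erase.append(old_physical)     # step 4: map the original out
        return self.mapping[logical]

    def idle_erase(self):
        """Erase mapped-out blocks and return them to the spare pool."""
        self.spares.extend(self.pending_erase)
        self.pending_erase.clear()


drive = RemappingDrive(logical_blocks=4, spare_blocks=2)
drive.rewrite_block(0)  # lands on spare block 4, block 0 awaits erase
drive.rewrite_block(0)  # lands on spare block 5, block 4 awaits erase
drive.idle_erase()      # blocks 0 and 4 become spares again
```

In this model the scheme only avoids the erase penalty while spares remain: a third rewrite before `idle_erase()` runs has nowhere to go.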

korbendallas - Wednesday, March 18, 2009

Actually I think that the Trim command is merely used for marking blocks as free. The OS doesn't know how the data is placed on the SSD, so it can't make an informed decision on when to forcefully erase pages. In the same way, the SSD doesn't know anything about what files are in which blocks, so you can't defrag files internally in the drive.

So while you can't defrag files, you CAN now defrag free space, and you can improve the wear leveling because deleted data can be ignored.

So let's say you have 10 pages where 50% of the blocks were marked deleted using the Trim command. That means you can move the data into 5 other pages, and erase the 10 pages. The more deleted blocks there are in a page, the better a candidate for this procedure. And there isn't really a problem with doing this while the drive is idle - since you're just doing something now, that you would have to do anyway when a write command comes.
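A rough sketch of that free-space defragmentation follows. It uses the article's terminology, where a block is the erase unit and holds many pages (the comment appears to use the two words the other way round). The function names and the list-of-states representation are assumptions made for illustration.

```python
def pick_victims(blocks, count):
    """Return indices of the `count` blocks with the most trimmed pages.

    `blocks` is a list of blocks; each block is a list of page states,
    where a state is "valid", "trimmed" or "erased".
    """
    ranked = sorted(
        range(len(blocks)),
        key=lambda i: blocks[i].count("trimmed"),
        reverse=True,
    )
    return ranked[:count]


def compact(blocks, victims, pages_per_block):
    """Count the cost of consolidating the victim blocks.

    Returns (blocks_needed_for_copies, blocks_erased).
    """
    valid_pages = sum(blocks[i].count("valid") for i in victims)
    blocks_needed = -(-valid_pages // pages_per_block)  # ceiling division
    return blocks_needed, len(victims)


# Ten blocks, each half valid and half trimmed, as in the comment's example.
blocks = [["valid", "trimmed", "valid", "trimmed"] for _ in range(10)]
victims = pick_victims(blocks, 10)
print(compact(blocks, victims, pages_per_block=4))  # (5, 10)
```

With half of every block trimmed, the valid data fits in 5 fresh blocks and all 10 originals can be erased, which matches the numbers in the comment.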

GourdFreeMan - Wednesday, March 18, 2009

This is basically what I am arguing both for and against in the fourth paragraph of my original post, though I assumed it would be the OS'es responsibility, not the drive's.

Do SSDs track dirty pages, or only dirty blocks? I don't think there is enough RAM on the controller to do the former...

korbendallas - Wednesday, March 18, 2009

Well, let's take a look at how much storage we actually need. A block can be erased, contain data, or be marked as trimmed or deallocated.

That's three different states, or two bits of information. Since each block is 4kB, a 64GB drive would have 16777216 blocks. So that's 4MB of information.

So yeah, saving the block information is totally feasible.

GourdFreeMan - Thursday, March 19, 2009

Actually the drive only needs to know if the page is in use or not, so you can cut that number in half. It can determine a partially full block that is a candidate for defragmentation by looking at whether neighboring pages are in use. By your calculation that would then be 2 MiB.

That assumes the controller only needs to support drives of up to 64 GiB capacity, that pages are 4 KiB in size, and that the controller doesn't need to use RAM for any other purpose.

Most consumer SSD lines go up to 256 GiB in capacity, which would bring the total RAM needed up to 8 MiB using your assumption of a 4 KiB page size.

However, both hard drives and SSDs use 512 byte sectors. This does not necessarily mean that internal pages are therefore 512 bytes in size, but lacking any other data about internal page sizes, let's run the numbers on that assumption. To support a 256 GiB drive with 512 byte pages, you would need 64 MiB of RAM -- which only the Intel line of SSDs has more than -- dedicated solely to this purpose.

As I said before there are ways of getting around this RAM limitation (e.g. storing page allocation data per block, keeping only part of the page allocation table in RAM, etc.), so I don't think the technical challenge here is insurmountable. There still remains the issue of wear, however...
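The RAM estimates traded in this exchange can be reproduced with a few lines of arithmetic. This is a minimal sketch that treats GB and GiB as the same binary unit, as the comments effectively do; the function name is hypothetical.

```python
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB


def tracking_ram_bytes(capacity_bytes, unit_bytes, bits_per_unit):
    """RAM needed to hold `bits_per_unit` bits of state per tracked unit."""
    units = capacity_bytes // unit_bytes
    return units * bits_per_unit // 8


cases = [
    ("64 GiB, 4 KiB units, 2 bits each", 64 * GIB, 4 * KIB, 2),
    ("64 GiB, 4 KiB units, 1 bit each", 64 * GIB, 4 * KIB, 1),
    ("256 GiB, 4 KiB units, 1 bit each", 256 * GIB, 4 * KIB, 1),
    ("256 GiB, 512 byte units, 1 bit each", 256 * GIB, 512, 1),
]

for label, capacity, unit, bits in cases:
    ram = tracking_ram_bytes(capacity, unit, bits)
    print(f"{label}: {ram / MIB:g} MiB")
```

The four cases give 4, 2, 8 and 64 MiB respectively, matching the figures quoted above.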

GourdFreeMan - Wednesday, March 18, 2009

Substitute "allocated" for "dirty" in my above post. I muddled the terminology, and there is no edit function to repair my mistake.

Also, I suppose the SSD could store some per block data about page allocation appended to the blocks themselves at a small latency penalty to get around the RAM issue, but I am not sure if existing SSDs do such a thing.

My concerns about added wear in my original post still stand, and doing periodic internal defragmentation is going to necessitate some unpredictable sporadic periods of poor response by the drive as well if this feature is to be offered by the drive and not the OS.

Basilisk - Wednesday, March 18, 2009

I think your concerns parallel mine, albeit we have different conclusions.

Parag. 1: I think you misunderstand the ERASE concept: as I read it, after an ERASE parts of the block are re-written and parts are left erased -- those latter parts NEED NOT be re-erased before they are written, later. If the TRIM function can be accomplished at an idle moment, access time will be "saved"; if the TRIM can erase (release) multiple clusters in one block [unlikely?], that will reduce both wear & time.

Parag. 2: This argument reverses the concept that OS's should largely be ignorant about device internals. As devices with different internal structures have proliferated over the years -- and will continue so with SSD's -- such OS differentiation is costly to support.

Parag. 3 and onwards: Herein lies the problem: we want to save wear by not re-writing files to make them contiguous, but we now have a situation where wear and erase times could be considerably reduced by having those files be contiguous. A 2MB file fragmented randomly in 4KB clusters will result in around 500 erase cycles when it's deleted; if stored contiguously, that would only require 4-5 erase cycles (of 512KB SSD-blocks)... a 100:1 reduction in erases/wear.
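The erase counts in that estimate can be checked with a short sketch. It assumes the worst case for the fragmented file (every 4KB cluster sits in a different 512KB erase block) and treats KB and MB as binary units; the function names are hypothetical.

```python
KIB = 1024
MIB = 1024 * KIB


def erases_fragmented(file_bytes, cluster_bytes):
    """Worst case: every cluster of the file sits in a different erase block."""
    return -(-file_bytes // cluster_bytes)  # ceiling division


def erases_contiguous(file_bytes, block_bytes, start_offset=0):
    """Erase blocks touched by a contiguous file.

    `start_offset` is how many bytes into an erase block the file begins.
    """
    first = start_offset // block_bytes
    last = (start_offset + file_bytes - 1) // block_bytes
    return last - first + 1


print(erases_fragmented(2 * MIB, 4 * KIB))             # 512
print(erases_contiguous(2 * MIB, 512 * KIB))           # 4 when block aligned
print(erases_contiguous(2 * MIB, 512 * KIB, 4 * KIB))  # 5 when misaligned
```

That gives 512 erases against 4 or 5, in line with the roughly 100:1 figure.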

It would be nice to get the SSD blocks down to 4KB in size, but I have to infer there are counter arguments or it would've been done already.

With current SSDs, I'd explore using larger cluster sizes -- and here we have a clash with MS [big surprise]. IIRC, NTFS clusters cannot exceed 4KB [for something to do with file compression!]. That makes it possible that FAT32 with 32KB clusters [IIRC clusters must be less than 64KB for all system tools to properly function] might be the best choice for systems actively rewriting large files. I'm unfamiliar with FAT32 issues that argue against this, but if the SSDs allocate clusters contiguously, wouldn't this reduce erases by a factor of 8 for large file deletions? 32KB clusters might hamstring caching efficiency and result in more disk accesses, but it might speed up linear reads and s/w loads.

The impact of very small file/directory usage and for small incremental file changes [like appending to logs] wouldn't be reduced -- it might be increased as data-transfer sizes would increase -- so the overall gain for having fewer clusters-per-SSD-block is hard to intuit, and it would vary in different environments.

GourdFreeMan - Wednesday, March 18, 2009

RE Parag. 1: As I understand it, the entire 512 KiB block must always be erased if there is even a single page of valid data written to it... hence my concerns. You may save time reading and writing data if the device could know a block were partially full, but you still suffer the 2ms erase penalty. Please correct me if I am mistaken in my assumption.

RE Parag. 2: The problem is the SSD itself only knows the physical map of empty and used space. It doesn't have any knowledge of the logical file system. NTFS, FAT32, ext3 -- it doesn't matter to the drive, that is the OS'es responsibility.

RE Parag. 3: I would hope that reducing the physical block size would also reduce the block erase time from 2ms, but I am not a flash engineer and so cannot comment. One thing I can state for certain, however, is that moving to smaller physical block sizes would not increase wear across the surface of the drive, except possibly for the necessity to keep track of a map of used blocks. Rewriting 128 blocks on a hypothetical SSD with 4 KiB blocks versus one 512 KiB block still erases 512 KiB of disk space (excepting the overhead in tracking which blocks are filled).

Regarding using large filesystem clusters: 4 KiB clusters offer a nice tradeoff between filesystem size, performance and slack (lost space due to cluster size). If you wanted to make an SSD look artificially good versus a hard drive, a 512 KiB cluster size would do so admirably, but no one would use such a large cluster size except for a data drive used to store extremely large files (e.g. video) exclusively. BTW, in case you are unaware, you can format a non-OS partition with NTFS to cluster sizes other than 4 KiB. You can also force the OS to use a different cluster size by first formatting the drive for the OS as a data drive with a different cluster size under Windows and then installing Windows on that partition. I have a 2 KiB cluster size on a drive that has many hundreds of thousands of small files. However, I should note that since virtual memory pages are by default 4 KiB (another compelling reason for the 4 KiB default cluster size), most people don't have a use for other cluster sizes if they intend to have a page file on the drive.
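The slack tradeoff mentioned here is easy to quantify. The sketch below is only an illustration; the file sizes are made up and the function name is hypothetical.

```python
def slack_bytes(file_sizes, cluster_bytes):
    """Total space lost to rounding each file up to a whole number of clusters."""
    total = 0
    for size in file_sizes:
        clusters = -(-size // cluster_bytes)  # ceiling division
        total += clusters * cluster_bytes - size
    return total


# Hypothetical workload: 100,000 small files of 1,500 bytes each.
small_files = [1500] * 100_000

for cluster in (2 * 1024, 4 * 1024, 512 * 1024):
    lost = slack_bytes(small_files, cluster)
    print(f"{cluster // 1024} KiB clusters: {lost / 2**20:.1f} MiB of slack")
```

For a drive full of small files the slack grows almost linearly with cluster size, which is why a very large cluster size only makes sense for drives holding large files.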

ssj4Gogeta - Wednesday, March 18, 2009

Thanks for the wonderful article. And yes, I read every single word. LOL

rudolphna - Wednesday, March 18, 2009

Hey Anand, page 3, the random read latency graph, they are mixed up. It is listed as the WD VelociRaptor having a .11ms latency, I think you might want to fix that. :)