NVIDIA GeForce GTS 250: A Rebadged 9800 GTX+

by Derek Wilson on March 3, 2009 3:00 AM EST. Posted in: GPUs

In the beginning there was the GeForce 8800 GT, and we were happy.

Then we got a faster version: the 8800 GTS 512MB. It was more expensive, but we were still happy.

And then it got complicated.

The original 8800 GT, well, it became the 9800 GT. Then they overclocked the 8800 GTS 512MB and it turned into the 9800 GTX. Now this made sense, but only if you ignored the whole "this was an 8800 GT to begin with" thing.

The trip gets a little more trippy when you look at what happened on the eve of the Radeon HD 4850 launch. NVIDIA introduced a slightly faster version of the 9800 GTX called the 9800 GTX+. Note that this was the smallest name change in the timeline up to this point, but it was the biggest design change; this mild overclock was enabled by a die shrink to 55nm.

All of that brings us to today, where NVIDIA is taking the 9800 GTX+ and calling it the GeForce GTS 250.

Enough about names, here's the card:

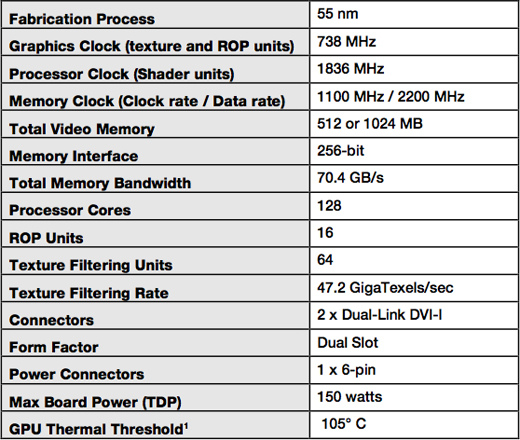

You can get it with either 512MB or 1GB of GDDR3 memory, both clocked at 2.2GHz. The core and shader clocks remain the same at 738MHz and 1.836GHz respectively. For all intents and purposes, this thing should perform like a 9800 GTX+.
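For a rough sense of what those clocks mean, here is a minimal back-of-the-envelope sketch. It assumes a 256-bit memory bus, which is what the 9800 GTX+ uses but is not stated above, and it treats the 2.2GHz figure as the effective (data rate) memory clock. The function and constant names are hypothetical, chosen only for illustration.

```python
# Rough arithmetic on the GTS 250 clocks quoted above.
# ASSUMPTION: a 256-bit memory bus (as on the 9800 GTX+); not stated in the article.
# ASSUMPTION: 2.2GHz is the effective data rate of the GDDR3, not the base clock.

ASSUMED_BUS_WIDTH_BITS = 256
MEMORY_EFFECTIVE_GHZ = 2.2
CORE_MHZ = 738
SHADER_MHZ = 1836


def memory_bandwidth_gb_s(effective_clock_ghz: float, bus_width_bits: int) -> float:
    """Peak theoretical bandwidth: transfers per second times bytes per transfer."""
    return effective_clock_ghz * bus_width_bits / 8


bandwidth = memory_bandwidth_gb_s(MEMORY_EFFECTIVE_GHZ, ASSUMED_BUS_WIDTH_BITS)
shader_to_core = SHADER_MHZ / CORE_MHZ

print(f"Peak memory bandwidth: {bandwidth:.1f} GB/s")
print(f"Shader-to-core clock ratio: {shader_to_core:.2f}")
```

Both numbers depend only on the clocks and the assumed bus width, so under these assumptions they are identical for the GTS 250 and the 9800 GTX+, which is the point: on paper, nothing here has changed.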

If you get the 1GB version, it's got a brand new board design that's an inch and a half shorter than the 9800 GTX+:

GeForce GTS 250 1GB (top) vs. GeForce 9800 GTX+ (bottom)

Unfortunately, the new board design isn't required for the 512MB cards, so chances are that those cards will just be rebranded 9800 GTX+s.

The 512MB cards will sell for $129 while the 1GB cards will sell for $149.

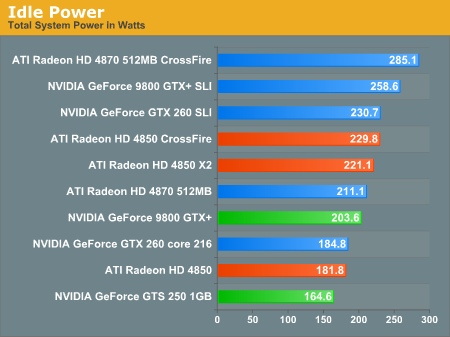

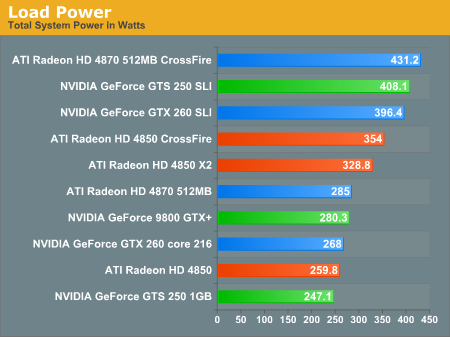

While the GPU is still a 55nm G92b, this is a much more mature, higher-yielding chip now than when the 9800 GTX+ first launched, and thus power consumption is lower. With GPU and GDDR3 yields higher, board costs can be driven down as well. The components on the board draw a little less power too, all culminating in a GPU that will somehow contribute to saving the planet a little better than the Radeon HD 4850.

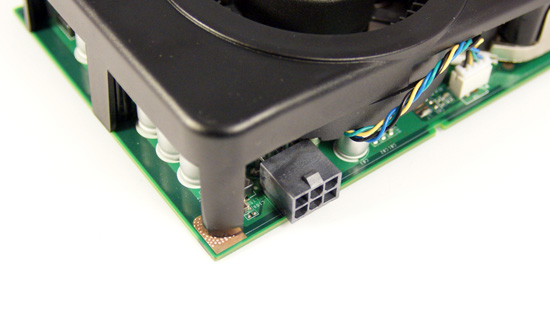

There's only one PCIe power connector on the new GTS 250 1GB boards

Note that you need to have the new board design to be guaranteed the power savings, so for now we can only say that the GTS 250 1GB will translate into power savings:

These are the biggest gains you'll see from this GPU today. It's still a 9800 GTX+.

103 Comments


Leyawiin - Tuesday, March 3, 2009

Good refinement of an already good card. New more compact PCB, lower power consumption, lower heat, better performance, 1GB. If Nvidia feels that's worthy of a rename, why should anyone get their drawers in a bunch?

But please, let the conspiracy theories fly if there was a rewrite of the conclusion. Could be it was just poorly done and wasn't edited, but that's not as fun as insinuating Nvidia must have put pressure on AT.

Gannon - Tuesday, March 3, 2009

Because it's lying. The core should always match the original naming scheme. Nvidia is just doing this to get rid of inventory and cause market confusion so that dimwits who don't do their research go for the 'newer' ones, when in fact they are the older.

I hate this practice. Creative did the same thing with some of their Sound Blaster cards. The Sound Blaster PCI, I believe it was, used some other chipset from a company they had bought out, and they merely renamed and rebadged the card "Sound Blaster".

Needless to say I hate the practice of deceiving customers, imagine you're in a restaurant and you ordered something but then they switched it on you to something else, you'd rightly get pissed off.

If people weren't so clueless about technology they wouldn't get away with this shit. This is where the market fails, when your customers are clueless, it's sheep to the slaughter.

SiliconDoc - Wednesday, March 18, 2009

Yeah, imagine, you ordered coke one day, and the next week you ordered coca cola off the same menu, and they even had the nerve to bring it in a different shaped glass. What nerve, huh!

They squirted a little more syrup in the latter mix, and a bit less ice, and you screamed you were deceived and they tricked you, and then you went off wailing away that it's the same thing anyway, but you want the coca cola not the coke because it tasted just a tiny bit better, and you had darn better see them coming up with some honest names.

Then ten thousand morons agreed with you - then the cops hauled you out in a straitjacket.

I see what you mean.

Coke is coca cola, and it should not be renamed like that - or heck people might buy it.

I guess that isn't fair ... because people might buy it. It might even be a different price at a different restaurant, or even be called something else and taste different out of a can vs a glass - and heck that ain't "fair".

You do know I think you're all pretty much whining lunatics, now, right ? Just my silly opinion, huh.

Coke, coca cola, soda, pop, golly - what will people do but listen to the endless whiners SCREAM it's all the same and stop fooling people....

I guess it was a slow news YEAR.

SunnyD - Tuesday, March 3, 2009

Since NVIDIA really wanted to push PhysX... I'm curious which, if any, of the tested titles have PhysX support and if it's enabled in those titles as tested. I'd be really interested to see what kind of performance hit the PhysX "holy grail" takes from this new/old card when trying to compare it to its competition.

SiliconDoc - Wednesday, March 18, 2009

I wonder why they haven't done a Mirror's Edge PhysX extravaganza test - they can use secondary PhysX cards and then use the primary for enabling, turn it on and off and compare - etc.

But not here - Derek would grind off all his tooth enamel, and Anand can't afford the insurance for him.

SiliconDoc - Wednesday, March 18, 2009

Derek CAN'T include PhysX, and NEVER SAYS whether or not he has it disabled in the nvidia driver panel - although this site USED to say that.

If they even dare bring up PhysX - ati looks BAD.

Hence, to keep as absolutely MUM as possible, is the best red fan rager course.

You see of course, Derek the red has to admit that yes, even NVIDIA ITSELF brought this SAD BIAS up to Derek...

Oh well, once a raging red rooster, always a red rooster - and NOTHING is going to change that. (or so it appears)

Is that 10 or 15 points of absolute glaring bias now ?

____________________________________________________________

" We're just trying to save the billions losing ati so we have competition and lower prices - so shut up SiliconDoc ! Do you want to pay more, ALL OVER AGAIN FOR NVIDIA CARDS !!?!"

____________________________________________________________

PS, thanks for lying so much red roosters, you've done a wonderful job of endless bs and fud, hopefully now Obama can bail out amd/ati, and my nvidia CUDA, Badaboom, low power game profiles, forced sli, PhysX cards will remain the best buy and continue to utterly dominate with only DDR3 memory in them.

PSS - Yes, I can hardly wait for nvidia DDR5 - oh will that ever be fun - be ready to rip your red badges off your puny chests fellers - I'm sure you'll suddenly find a way to reverse 180 degrees after a few weeks of "gating nvidia for stealing ati ddr5 intellectual property".

LOL

Oh it's gonna be a blast.

C'DaleRider - Tuesday, March 3, 2009

Very early this morning, I stumbled upon this article when it was originally put up....and went directly to the conclusions page. Interesting read....and I should have saved that page.

Subsequently, the entire review went down with this reasoning, "...ust we had some engine issues... missing images and such. I don't have the images or I'd put them on the server and set the article to "live" again. Anand and Derek have been notified; sorry for the delays."

Well, it's back up and what do you know.....the conclusions have now become somewhat softer, or as a few others on another forum put it who also saw the "original" review...circumcised, censored, and bullied by nVidia.

Shame that the original conclusion has been redone....would have liked others to actually see AT had some independence. Guess that's a lost ideal now...........

strikeback03 - Tuesday, March 3, 2009

Interesting, you mentioned in the comments in the other article that you didn't get to see any of the review, as when you clicked it went to the i7 system review.

JarredWalton - Wednesday, March 4, 2009

Thanks for the speculation, but I can 100% guarantee that the "pulling" of the article was me taking it down due to missing images. I did it, and I never even looked at the rest of the article, seeing that it was 3AM and I had just finished editing a different article.

Was the conclusion edited before it was put back up? Yes, but not by me. That's not really unusual, though, since we typically have someone else read over things before an article goes live, and with a bit more discussion the wording can be changed around. It would have changed regardless, and not because of anything NVIDIA said.

Is the 9800 GTX+ naming change stupid? I certainly think so. However, that doesn't make the current conclusion wrong. The card reworking does have benefits, and at the new price it's definitely worth a look as a midrange option.

RamarC - Tuesday, March 3, 2009

please consider styling the resolution links so they stand out a bit or look button-ish. it took me a minute to realize they were clickable.