Intel's 32nm Update: The Follow-on to Core i7 and More

by Anand Lal Shimpi on February 11, 2009 12:00 AM EST - Posted in CPUs

Enter the 32nm Lineup

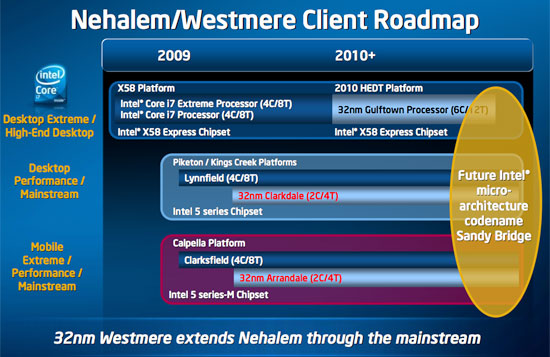

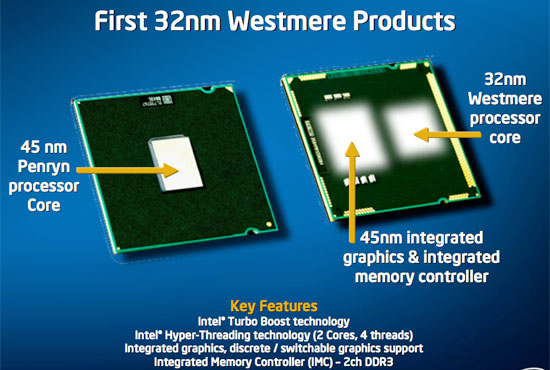

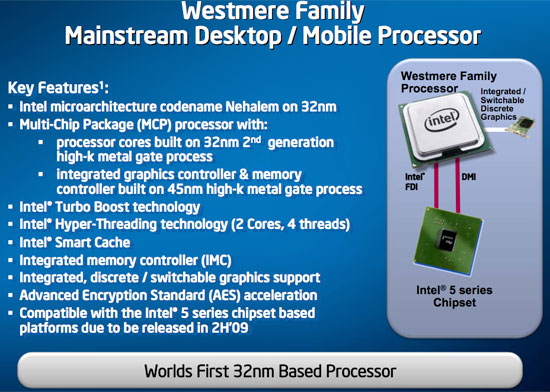

Instead of Havendale in Q4, we’ll get Clarkdale and Arrandale. These are both dual-core, quad-thread processors, and both have on-package graphics. The CPU cores will be built on Intel’s 32nm process and in fact, they will be the first Westmere CPUs shipping into the market.

Now note that the dual-core market is the largest slice of the processor pie. Intel must be incredibly confident in its 32nm process to start shipping it into these high-volume markets first. Remember that both 65nm and 45nm initially launched on the high-end desktop, but 32nm is making its debut in mainstream notebooks and desktops. The 32nm ramp is going to be a good one, folks.

| Segment | Manufacturing Process | Socket | Processor | Cores | Threads | Release Date |
|---|---|---|---|---|---|---|
| High End Desktop | 32nm | LGA-1366 | Gulftown | 6 | 12 | 1H 2010 |
| Mainstream Desktop | 32nm | LGA-1156 | Clarkdale | 2 | 4 | Q4 2009 |
| Mobile | 32nm | mPGA-989 | Arrandale | 2 | 4 | Q4 2009 |
| 4S Server | 32nm | LGA-1567 | ??? | ? | ? | 2010 |
| 2S Server | 32nm | LGA-1366 | ??? | ? | ? | 2010 |
| 1S Server | 32nm | LGA-1156 | Clarkdale | 2 | 4 | 2010 |

Clarkdale/Arrandale have 32nm CPUs but their on-package GPUs are still built on Intel’s 45nm process; these are the GPUs that were supposed to be used for Havendale! It won’t be until 2010 with Sandy Bridge that we see a 32nm CPU and 32nm GPU on the same package.

A side effect of the Clarkdale/Arrandale architecture is that the memory controller is now located on the GPU die and not the CPU die, although both are still on the same package, so memory latency should still be quite low.

Still following? There is no quad-core Westmere on the roadmap. If you want more than two 32nm cores, your only option will be Gulftown in the LGA-1366 socket: Core i7 will get replaced with a six-core, twelve-thread processor in early 2010. There won’t be a 32nm quad-core part on the desktop until the end of 2010 with Sandy Bridge.

64 Comments

Jovec - Wednesday, February 11, 2009 - link

Take a look at your Program Menu and tell me what apps today that are not multithreaded would receive serious benefit from being multithreaded? Besides gaming? Single-thread apps do receive benefits from multiple cores in typical usage scenarios because they can be run on a (semi) dedicated core and not interfere with other apps.

philosofool - Wednesday, February 11, 2009 - link

Interesting thought. I'm hoping that with the mainstreaming of the dual core, multi-threaded apps become more common and that the single to dual jump turns out to be the biggest leap. But it's really just a hope on my part, don't know if it will happen.

Isn't there a multitasking advantage with 4 core machines? Also, once we start ripping 720 and 1080p files, 6 cores is gonna be hot.

7Enigma - Thursday, February 12, 2009 - link

There are definite multitasking advantages with quadcore if you are heavily multitasking (i'd argue tri-core is probably used more effectively currently than that final 4th core). Single to dual, however, was a much greater difference for multitasking on the whole.

I just don't see the quad-hex jump being more beneficial than quad-juicedquad in this case.

strikeback03 - Wednesday, February 11, 2009 - link

Yeah, can't say I'm real happy about the lack of a 32nm quad-core for 1366. If my motherboard supported Penryn I'd probably just buy one of those cheap, getting an SSD, and waiting for Sandy Bridge. Since it doesn't, the decision is more difficult. Probably depends how much business I get this year.

Pakman333 - Wednesday, February 11, 2009 - link

DailyTech says Lynnfield will come in Q3? Hopefully it will have ECC support.

iwodo - Wednesday, February 11, 2009 - link

SSE 4.2 doesn't bring much useful performance to consumers.

There is no Dual Core Westmere or Nehalem. Not without Intel Sh*test Graphics On Earth.

No wonder why Unreal Dev and Valve are complaining that Intel GFX is basically Toxic....

And i cant understand why Anand is excited, Macbook with Intel Graphics all over again?

And Just before anyone who say Intel Gfx will improve. Please refer to history, from G965 to their X series are so full of Marketing BS.... And never did they delivery what they promised.

ssj4Gogeta - Wednesday, February 11, 2009 - link

noone's forcing you to use G45. you can still use discrete gfx cards.

Daemyion - Wednesday, February 11, 2009 - link

Actually, they fully delivered on the marketing. It's just that when Nvidia/ATI delivered products in the same space Intels product looked rubbish. There is nothing wrong with the G45 other than it not being an 9400 or a 790GX.

Spoelie - Wednesday, February 11, 2009 - link

wasn't yonah the first processor out at the 65nm node? if so intel did perform the same stunt earlier, only at 45nm did they not release a laptop version first.

IntelUser2000 - Wednesday, February 11, 2009 - link

No, the Pentium 955XE based on Pentium D was.