NVIDIA GeForce GTX 295 Coming in January

by Derek Wilson on December 18, 2008 9:00 AM EST

Posted in GPUs

A Quick Look Under The Hood

Our first concern, upon hearing about this hardware, was whether or not they could fit two GTX 260 GPUs on a single card without melting PSUs. A 6-pin + 8-pin PCIe power configuration doesn't seem like quite enough to push the hardware. But then we learned something interesting: the GeForce GTX 295 is the first 55nm part from NVIDIA. Of course, the logical conclusion is that single GPU 55nm hardware might not be far behind, but that's not what we're here to talk about today.
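As a rough check on the power concern, the in-spec ceiling for this connector layout can be added up. The sketch below assumes the usual PCI Express limits of 75 W from the x16 slot, 75 W from a 6-pin connector, and 150 W from an 8-pin connector; those figures come from the PCIe specification rather than from NVIDIA's announcement, and the function name is only illustrative.

```python
# Rough in-spec board power ceiling for a slot + auxiliary connector layout.
# Assumed limits (PCIe specification values, not from NVIDIA's announcement).
SLOT_W = 75        # x16 slot
SIX_PIN_W = 75     # each 6-pin auxiliary connector
EIGHT_PIN_W = 150  # each 8-pin auxiliary connector


def board_power_ceiling(six_pins: int, eight_pins: int) -> int:
    """Return the maximum in-spec board power in watts."""
    return SLOT_W + six_pins * SIX_PIN_W + eight_pins * EIGHT_PIN_W


if __name__ == "__main__":
    # One 6-pin plus one 8-pin connector, as on the GTX 295.
    print(board_power_ceiling(six_pins=1, eight_pins=1))  # 300
```

Under those assumptions the card has 300 W to work with, which is the budget two GPUs plus memory and power circuitry have to share.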

Image courtesy NVIDIA

55nm is only a half-node process, so we won't see huge changes in die size (we don't have one yet, so we can't measure it), but the part should get a little smaller and cheaper to build. It should also be a little easier to cool and draw less power at the same performance level (or NVIDIA could choose to push performance a little higher).
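To put "a little smaller and cheaper" into numbers, here is a back-of-the-envelope sketch. It assumes a perfectly linear optical shrink from 65nm to 55nm, a 300mm wafer, and a starting die area of 576 mm² (a commonly cited figure for the 65nm GT200, not something measured for this article). Real shrinks rarely scale ideally, and yield is ignored entirely, so treat the output as an upper bound on the benefit rather than a prediction.

```python
import math

# All inputs below are assumptions for illustration, not measured values.
OLD_NODE_NM = 65.0
NEW_NODE_NM = 55.0
OLD_DIE_MM2 = 576.0   # commonly cited 65nm GT200 area (assumed)
WAFER_MM = 300.0      # wafer diameter (assumed)


def ideal_shrink_area(area_mm2: float, old_nm: float, new_nm: float) -> float:
    """Scale die area by the square of the linear shrink factor."""
    return area_mm2 * (new_nm / old_nm) ** 2


def gross_dies_per_wafer(area_mm2: float, wafer_mm: float = WAFER_MM) -> int:
    """Common approximation: wafer area / die area minus an edge-loss term."""
    whole = math.pi * (wafer_mm / 2) ** 2 / area_mm2
    edge = math.pi * wafer_mm / math.sqrt(2 * area_mm2)
    return math.floor(whole - edge)


if __name__ == "__main__":
    new_area = ideal_shrink_area(OLD_DIE_MM2, OLD_NODE_NM, NEW_NODE_NM)
    print(f"ideal 55nm area: {new_area:.0f} mm^2")
    print("gross dies per wafer at 65nm:", gross_dies_per_wafer(OLD_DIE_MM2))
    print("gross dies per wafer at 55nm:", gross_dies_per_wafer(new_area))
```

The edge-loss term is there because rectangular dies cannot tile a round wafer all the way to the rim, so smaller dies gain slightly more than the raw area ratio suggests.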

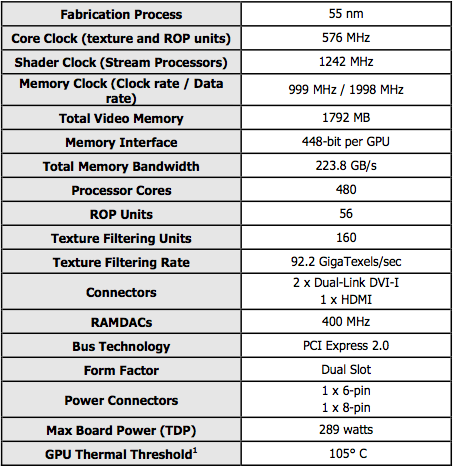

Image courtesy NVIDIA

As we briefly mentioned, the GPUs strapped on to this beast aren't your stock GTX 260 or GTX 280 parts. These chips are something like a GTX 280 with one memory channel disabled, running at GTX 260 clock speeds. I suppose you could also look at them as GTX 260 ICs with all 10 TPCs enabled. Either way, you end up with something that has higher shader performance than a GTX 260 and lower memory bandwidth and fillrate than a GTX 280 (remember that ROPs are tied to memory channels, so this new part only has 28 ROPs instead of 32). This is a hybrid part.
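The link between memory channels and ROPs can be sketched in a few lines. The 32 and 28 ROP counts come from the text above; the assumption that the full chip has 8 channels of 64 bits each is ours (it matches the GTX 280's 512-bit bus), and the transfer rate passed to the bandwidth helper is a placeholder, not a confirmed GTX 295 memory clock.

```python
from typing import Tuple

FULL_ROPS = 32       # from the article: full GTX 280 configuration
FULL_CHANNELS = 8    # assumed: 8 memory channels on the full chip
CHANNEL_BITS = 64    # assumed: 64-bit channels (512-bit bus when all enabled)


def rops_and_bus(active_channels: int) -> Tuple[int, int]:
    """Return (ROP count, bus width in bits) for a number of active channels."""
    rops_per_channel = FULL_ROPS // FULL_CHANNELS
    return active_channels * rops_per_channel, active_channels * CHANNEL_BITS


def bandwidth_gb_s(bus_bits: int, transfers_per_s: float) -> float:
    """Peak memory bandwidth in GB/s for a given bus width and transfer rate."""
    return bus_bits / 8 * transfers_per_s / 1e9


if __name__ == "__main__":
    rops, bus = rops_and_bus(active_channels=7)
    print(rops, bus)  # 28 448
    # 2.0e9 transfers/s is an illustrative placeholder rate.
    print(bandwidth_gb_s(bus, 2.0e9))
```

Dropping one channel therefore costs one eighth of both the ROPs and the bus width per GPU, which is why fillrate and bandwidth land below a GTX 280 even with all shader hardware enabled.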

Image courtesy NVIDIA

Our first thought was binning (or what AMD calls harvesting), but given that this is also a move to 55nm, we have to rethink that. It isn't clear whether this chip will make its way onto a single GPU board. If it did, it would likely be capable of higher clock speeds due to the die shrink and would fall between the GTX 260 core 216 and GTX 280 in performance. Of course, this part may not end up on single GPU boards. We'll just have to wait and see.

What is clear is that this is a solution gunning for the top. It is capable of quad SLI and sports not only two dual-link DVI outputs, but an HDMI out as well. It isn't clear whether all boards built will include the HDMI port found on the reference board, but more flexibility is always a good thing.

Image courtesy NVIDIA

69 Comments


derek85 - Thursday, December 18, 2008 - link

I highly doubt NVIDIA can go on a price war with AMD given their huge die size and poorer yield.

SiliconDoc - Sunday, December 21, 2008 - link

Well how about we all be bright about it, and hope both companies survive and prosper?

Golly, that would actually be the SANE THING to do.

No, no there aren't many sane people around.

I think I'll start a fanboy wars company, and we'll make two teams, and have people sign up for like 5 bucks each, and then they can have their fan wars cheering for their corporate master while they pray for the demise of the disfavored one and argue over it.

Maybe we can get some kind of additional betting company going, like how they bet on who's going to get the political nominations and get elected... and make it a deranged fanboy stock market.

We can even get carbon credit points for the low power user arguable leader of the quarter, and award a carbon credit bundle when one company is destroyed (therefore adding to saving the green earth).

I mean why not ? There's so many loons who want one company or the other to expire, or so their raging argument goes...

I'd like to ask a simple question though.

If your fanboyism company can "gut their price structure" in order to destroy the other company, as so many fanboys claim, doesn't it follow that you've been getting RIPPED OFF by your favorite corporate profiteer? Doesn't it also follow that the other guy has been getting much more for his or her money?

LOL

The answers are YES, and YES.

I bet the corporate pigs love this stuff... "my fan rage company can destroy yours by slashing prices because they have a huge profit margin to work with, while your company has been gouging everyone !" the fanboy shrieked.

Uhhhh... if your company, the very best one, has all the leeway in lowering prices, why then they have been the one raping wallets, not the other guy's company.

So while you're screaming like a 2 year old about how much more moola your bestes company has from every sale, turn around, and keep chasing your own tail, because your wallet has been unfairly emptied - by the very company you claim could wipe the other out with the huge margin they can lower the prices with.

Yeah, you're claiming you got robbed blind, and the sad thing is, if there is so much room, why then they COULD HAVE lowered prices a long time ago and wiped out the competition.

But they didn't, they took that extra money from your fanboy wallet, and you thanked them, then you screamed at the other guy how great it is - and made the empty threat of destruction... and called the enemy the gouger... (when your argument says your company is gouging, with all its extra profit margin...)

Oh well.

So much for being sane.

__________________________________________________

Sanity is : hoping both companies survive and prosper.

Razorbladehaze - Sunday, December 21, 2008 - link

This comment is senseless drivel. It is so ambiguous that it doesn't say anything useful. What can be discerned is a very odd hypothetical situation that turns into venting about something.

This comment should be stricken from the record.

SiliconDoc - Saturday, December 27, 2008 - link

Ahh, so you bought the ATI, and now realize that you got "robbed" and they made a big profit screwing you.

Sorry about that.

Just stay in denial, it's easier on you that way.

chizow - Thursday, December 18, 2008 - link

They've done a pretty good job of leading price cuts despite the Holiday Inn economics logic employed around various forums. After the initial price cuts in June, GTX 260 has consistently been priced lower than the 512MB 4870 and the GTX 260 c216 has been consistently priced lower than the 1GB 4870. Now that everyone is on a more expensive 55nm process, I'd say any price differences are easily negated by AMD's use of much more expensive GDDR5.

SuperGee - Saturday, December 20, 2008 - link

It's about profits.

The smaller the die size, the more chips you get out of a wafer. More chips per wafer also means better binning and yield possibilities, and less wafer waste: a wafer is round, while chips are rectangular.

With both on 55nm, NVIDIA still has the bigger chip: 1.4 billion transistors, while RV770 is 950 million. That's a factor of about 1.5.

RV770 is still cheaper to produce. GDDR5 is a tad expensive, but that is not AMD's cost; it is the IHV's problem.

SkullOne - Thursday, December 18, 2008 - link

[H]ardOCP has a brief preview of the GTX295 and I don't find it anything terribly exciting. It's nothing but a way to try to get a few more people to hold off buying cards until after the holidays and market Quad-SLI a little bit more.

I do agree with some of the previous comments about how AMD doesn't allow users to create their own AFR profiles. There is a rumor that it is in the works, so as an HD4870 Crossfire user I hope it is true; AMD has been very good at listening to their users. The manual fan control is a good example. Then again, Crossfire works on all the games I currently play, so I don't really have an issue.

magreen - Thursday, December 18, 2008 - link

Do we get nice mesquite bbq flavored char-grilled video?

SiliconDoc - Saturday, December 20, 2008 - link

Ok, now you rekeyed my record. What we might get is 2 videos side by side. The video on the left has a barbeque, but the coals aren't crackling and popping, the sauce isn't splashing about, and flame lickage is missing. That's no PhysX - SUPPOSEDLY.

On the right side of the screen is the "wonderful PhysX display" with flames licking about, sauce drips and coals crackling off PhysX sparks!

Yes, some things (planned deception) in fact are " not good for end users ".

strikeback03 - Thursday, December 18, 2008 - link

The image at the bottom of the page? I'd guess it is probably a reflection in the back plate, maybe the lens was close to the product when that was shot.

Though a video card that could double as a Foreman grill is possible with a 289W TDP.