The Dark Knight: Intel's Core i7

by Anand Lal Shimpi & Gary Key on November 3, 2008 12:00 AM EST - Posted in CPUs

Multiple Clock Domains

Functionally, there are some basic differences between Nehalem and previous Intel architectures. The Front Side Bus is gone, replaced by Intel's QuickPath Interconnect (QPI), which is similar to AMD's HyperTransport. The QPI implementation on the first Nehalem is a 25.6GB/s interface, which matches the 25.6GB/s of memory bandwidth Nehalem has.
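The two 25.6GB/s figures can be reproduced with simple arithmetic. The sketch below assumes a 6.4GT/s QPI link carrying 2 bytes per transfer in each direction, and three 64-bit DDR3-1066 channels running at an effective 1066.67MT/s. These link and memory parameters are our assumptions about the top-bin part, not figures stated above, and the function names are hypothetical.

```python
def qpi_bandwidth_gbs(transfer_rate_gts: float, bytes_per_transfer: int = 2,
                      directions: int = 2) -> float:
    """Aggregate QPI bandwidth in GB/s: transfer rate x payload width x link directions."""
    return transfer_rate_gts * bytes_per_transfer * directions


def ddr_bandwidth_gbs(data_rate_mts: float, channels: int,
                      bytes_per_channel: int = 8) -> float:
    """Peak DRAM bandwidth in GB/s: data rate x 64-bit (8-byte) channel width x channels."""
    return data_rate_mts * bytes_per_channel * channels / 1000


if __name__ == "__main__":
    qpi = qpi_bandwidth_gbs(6.4)                   # assumed 6.4GT/s link, both directions
    mem = ddr_bandwidth_gbs(3200 / 3, channels=3)  # "DDR3-1066" is really 1066.67MT/s
    print(f"QPI:    {qpi:.1f} GB/s")
    print(f"Memory: {mem:.1f} GB/s")
```

Both lines print 25.6 GB/s; each direction of the QPI link alone carries half of that, 12.8GB/s.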

The CPU operates on a multiplier of the QPI source clock, called the base clock (BCLK), which in this case is 133MHz. The top-bin Nehalem runs at 3.2GHz, or 133MHz x 24. The L3 cache and memory controller operate on a separate clock frequency called the un-core clock. This frequency is currently 20x the BCLK, or 2.66GHz.
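Because every clock domain is derived from the same base clock, the frequencies above are simple products. The sketch below assumes the nominal BCLK is exactly 133.33MHz (400/3). The multipliers 24 and 20 come from the text; the 160MHz BCLK at the end is a hypothetical value chosen only for illustration.

```python
BCLK_MHZ = 400 / 3  # nominal "133MHz" base clock, i.e. 133.33MHz (assumed exact)

CORE_MULTIPLIER = 24    # top-bin part: 24 x BCLK = 3.2GHz
UNCORE_MULTIPLIER = 20  # L3 cache + memory controller: 20 x BCLK


def domain_clock_ghz(multiplier: int, bclk_mhz: float = BCLK_MHZ) -> float:
    """Clock of a domain that runs at `multiplier` x BCLK, in GHz."""
    return multiplier * bclk_mhz / 1000


if __name__ == "__main__":
    print(f"core:   {domain_clock_ghz(CORE_MULTIPLIER):.2f} GHz")    # 3.20
    print(f"uncore: {domain_clock_ghz(UNCORE_MULTIPLIER):.2f} GHz")  # 2.67
    # Hypothetical 160MHz BCLK: both domains scale together with the base clock.
    print(f"core @ 160MHz BCLK: {domain_clock_ghz(CORE_MULTIPLIER, 160):.2f} GHz")  # 3.84
```

The exact un-core product is 2.667GHz, which the text above truncates to 2.66GHz. Since both domains hang off the same BCLK, changing BCLK moves the core and un-core clocks together.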

This is all very similar to AMD's Phenom; where the two differ is in how they handle power management. While AMD allows individual cores to request different clock speeds, Nehalem attempts to run all of its cores at the same frequency; if a core is idle, it is simply power gated and effectively turned off. I explain this in greater detail elsewhere, but the end result is that we don't see the strange performance issues that sometimes appear with AMD's Cool'n'Quiet (CnQ) enabled. While we have to turn off CnQ to get repeatable results in some of our benchmarks (in some cases we'll see a 50% performance hit with CnQ enabled), Intel's EIST seems to be fine when turned on and does not concern us.
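The difference between the two policies can be illustrated with a toy model. This is not Intel's or AMD's actual algorithm. We assume each core requests a frequency (0 meaning idle), that the per-core policy simply grants every request, and that the shared policy runs all busy cores at the highest requested frequency while power gating idle cores. The function names and sample frequencies are hypothetical.

```python
from typing import List

IDLE = 0.0  # a request of 0GHz marks an idle core


def per_core_policy(requests_ghz: List[float]) -> List[float]:
    """Phenom-style (toy model): each core runs at whatever it asked for."""
    return list(requests_ghz)


def shared_policy(requests_ghz: List[float]) -> List[float]:
    """Nehalem-style (toy model): busy cores share one frequency, idle cores are gated off."""
    busy = [r for r in requests_ghz if r > IDLE]
    shared = max(busy) if busy else IDLE  # assumption: the most demanding core sets the pace
    return [shared if r > IDLE else IDLE for r in requests_ghz]


if __name__ == "__main__":
    requests = [3.2, 1.6, 0.0, 0.0]  # one busy core, one lightly loaded, two idle
    print("per-core:", per_core_policy(requests))  # [3.2, 1.6, 0.0, 0.0]
    print("shared:  ", shared_policy(requests))    # [3.2, 3.2, 0.0, 0.0]
```

In the per-core model, a thread that gets moved onto the 1.6GHz core runs at half speed until that core ramps up, which is one plausible way per-core scaling can produce inconsistent benchmark results; in the shared model every busy core is already at the same speed.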

My Concern

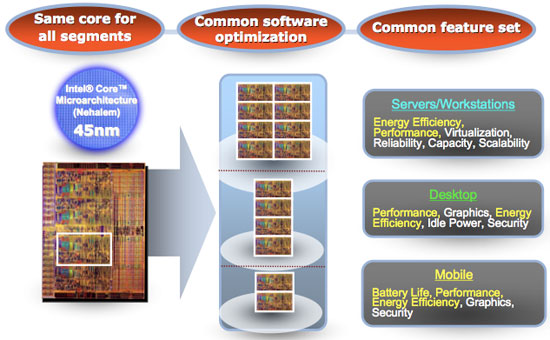

Looking at Nehalem's microarchitecture, one thing becomes very clear: this is a CPU designed to address Intel's shortcomings in the server space. There's nothing inherently wrong with that, but it's a different approach from what Intel did with Conroe. With Conroe, Intel took a mobile architecture and built its current microarchitecture on the philosophy that what was good for mobile, in terms of power efficiency and performance per watt, would also be good for the desktop.

This was in stark contrast to how microprocessor development used to work: chips would be designed for the server/workstation/high-end desktop market and trickle down to mainstream users and the mobile space. Conroe changed all of that, and that's a good part of why Intel's Core 2 architecture makes such a great desktop and mobile processor.

Power obviously also matters in servers, but not to the same extent as in notebooks. Conroe did well in the server market, but it lacked some key features, and their absence allowed AMD to hang onto market share.

Nehalem started out as an architecture that addressed these enterprise shortcomings head on. The on-die memory controller, Hyper-Threading, larger TLBs, improved virtualization performance, the restructured cache hierarchy, the new second-level branch predictor: all of these features will be very important to making Intel more competitive in the enterprise space, but at what cost to desktop power consumption and performance?

Intel promises better energy efficiency for the desktop; we'll be the judge of that...

I'm stating the concern up front because that's what I had in mind when I approached today's Nehalem review. Everyone has high expectations for Nehalem, but it hasn't been that long since Intel dropped Prescott on us. What I want to find out is whether Intel has stayed true to its mission of keeping power in check or if we've simply regressed with Nehalem.

The one hope I had for Nehalem was that it is the first high-performance desktop core to implement Intel's new 2:1 performance:power ratio rule. Under this rule, which was also used by Atom's design team, every feature that made its way into Nehalem had to increase performance by 2% for every 1% increase in power consumption; otherwise it wasn't allowed in the design. In the past Intel used a general 1:1 ratio between power and performance, but with Nehalem the standards were much higher. We'll find out if Intel was all talk in a moment, but let's take a look at Nehalem's biggest weakness first.
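The 2:1 rule amounts to a simple admission test for each proposed feature. Below is a minimal sketch, assuming gains and costs are expressed as percentages; the function name and the two example features are hypothetical.

```python
def admits_feature(perf_gain_pct: float, power_cost_pct: float,
                   ratio: float = 2.0) -> bool:
    """True if the feature buys at least `ratio`% performance per 1% of extra power."""
    return perf_gain_pct >= ratio * power_cost_pct


if __name__ == "__main__":
    # Hypothetical features: (performance gain %, power increase %)
    features = {"feature A": (3.0, 1.0), "feature B": (1.5, 1.0)}
    for name, (perf, power) in features.items():
        old = admits_feature(perf, power, ratio=1.0)  # the older 1:1 guideline
        new = admits_feature(perf, power)             # Nehalem's 2:1 rule
        print(f"{name}: 1:1 rule -> {old}, 2:1 rule -> {new}")
```

Feature B would have passed under the old 1:1 guideline but is rejected under 2:1, which is the tightening described above.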

73 Comments


Kaleid - Monday, November 3, 2008

http://www.guru3d.com/news/intel-core-i7-multigpu-...

bill3 - Monday, November 3, 2008

Umm, it seems the guru3d gains are probably explained by them using a dual-core Core 2 Duo versus a quad-core i7... Quad cores run multi-GPU quite a bit better, I believe.

tynopik - Monday, November 3, 2008

What about those multi-threading tests you used to run with 20 tabs open in Firefox while running an AV scan while compressing some files while converting something else, etc.? This might be more important for daily performance than the standard desktop benchmarks.

D3SI - Monday, November 3, 2008

So the low-end i7s are OC'able?

What the hell is Tom's Hardware talking about? lol

conquerist - Monday, November 3, 2008

Concerning x264, Nehalem-specific improvements are coming as soon as the developers are free from their NDA. See http://x264dev.multimedia.cx/?p=40.

Spectator - Monday, November 3, 2008

Can they do some CUDA optimizations? I'm guessing that video hardware has more processors than a quad-core Intel :P If all this i7 stuff is new news and does things xx faster with 4 cores, how does 100+ core video hardware compare?

Yes, I'm messing, but giant Intel wants $1k for the best i7 CPU, when the likes of nVidia make silicon with a bigger transistor count on an older process, and others manufacture the rest of the video card, for $400-500?

Where is the value for money in that? Chuckle.

gramboh - Monday, November 3, 2008

The x264 team has specifically said they will not be working on CUDA development, as it is too time-intensive to basically start over from scratch in a more complex development environment.

npp - Monday, November 3, 2008

CUDA optimizations? I bet you don't completely understand what you're talking about. You can't just optimize a piece of software for CUDA; you MUST write it from scratch for CUDA. That's the reason why you don't see much software for nVidia GPUs, even though the CUDA concept was introduced at least two years ago. You have the BadaBOOM stuff, but it's far from mature, and the reason is that writing a sensible application for CUDA isn't exactly an easy task. Take your time to look at how it works and you'll understand why.

You can't compare the 100+ cores of your typical GPU with a quad core directly; they are fundamentally different in nature, with your GPU "cores" being rather limited in functionality. GPGPU is a nice hype, but you simply can't offload everything onto a GPU.

As a side note, top-notch hardware always carries a price premium, and Intel has had this tradition with high-end CPUs for quite a while now. There are plenty of people who need absolutely the fastest hardware around and won't hesitate to pay for it.

Spectator - Monday, November 3, 2008

Some of us want more info.

A) How does the integrated thermal sensor work with temps of -50C and below?

B) Can you circumvent the 130W max load sensor?

C) What are all those connection points on the top of the processor for?

Lol. Where do I put the 2B pencil to join that sh*t up, so I don't have to worry about multiplier settings or temp sensors or wattage sensors?

Hey, don't shoot the messenger, but those topside chip contacts seem very curious and obviously must serve a purpose :P

Spectator - Monday, November 3, 2008

Wait, NO. I have thought about it. The contacts on the top side could be for programming the chip's default settings.

You know it makes sense. Perhaps it's adjustable, SRAM-style, rather than burning connections.

Yes, some technical peeps can look at that, but I still want the fame for suggesting it first. lmao.

Have fun. But it does seem logical to build in some scope for alteration. It's a lot easier to manufacture one solid item and then mod your stock to suit the market when you feel it's necessary.

Spectator.