Understanding 8-channel LPCM over HDMI: Why it Matters and Who Supports It

by Anand Lal Shimpi on September 17, 2008 2:00 PM EST, posted in GPUs

In several recent reviews I’ve talked about the importance of supporting 8-channel LPCM over HDMI. More specifically, you’ll see it listed as a feature of AMD’s Radeon HD 4800 series and, more recently, the 4600 series. Intel has quietly touted 8-channel LPCM support as a feature of its integrated graphics chipsets since the G965, yet I’ve never done a good job explaining what this feature is and why you should care.

Honestly, it took my recent endeavors into the home theater world for me to really understand what it is and why it’s important. So without further ado, here is a “quick” (in Anand terms) explanation of what 8-channel LPCM over HDMI is and why it matters.

Grab some popcorn.

The Necessity: Enabling 8-channel Audio on Blu-ray Discs

Movies ship with multi-channel audio tracks so that users with more than two speakers can enjoy what ultimately boils down to surround sound. Audio takes up a lot of space, and studios keep trying to pack more data onto discs, so most multi-channel movie audio is stored in a compressed format.

In the days of DVDs, studios used either Dolby Digital or DTS encoding for their audio tracks, but with Blu-ray (and HD DVD) the stakes went up. Just as video encoding got an overhaul with the use of H.264 as a compression codec, audio on Blu-ray discs got a facelift of its own. Dolby Digital and DTS were both still supported, but now there were three more options: Dolby TrueHD, DTS-HD Master Audio and uncompressed LPCM.

Dolby Digital and DTS, as implemented in the original DVD standard, had two flaws: 1) they were lossy codecs (what ended up on the disc was not a bit-for-bit duplicate of the audio the studio originally mastered for the movie), and 2) they only supported a maximum of six channels of audio (aka 5.1 surround sound: left, right, center, left surround, right surround and the LFE/subwoofer channel).

DVDs can store 4.7GB (single layer) or 8.5GB (dual layer) of data on a single disc, so using lossy audio codecs made sense. Blu-ray discs hold either 25GB or 50GB, which means we can store more data, and higher-quality data at that, for both audio and video.
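To put "audio takes up a lot of space" into numbers, here is a rough back-of-the-envelope sketch of how big an uncompressed LPCM track gets. The bitrate of linear PCM is simply channels × sample rate × bits per sample; the two-hour runtime, 24-bit depth and the 48 kHz/96 kHz sample rates are assumptions picked for illustration, not figures from any particular disc.

```python
# Rough storage estimate for uncompressed LPCM audio.
# Assumptions (illustrative only): a 2-hour film, 24-bit samples,
# 48 kHz or 96 kHz sample rates, 6 (5.1) or 8 (7.1) channels.

def lpcm_bitrate_bps(channels: int, sample_rate_hz: int, bits_per_sample: int) -> int:
    """Bits per second for linear PCM: each channel stores one sample per tick."""
    return channels * sample_rate_hz * bits_per_sample


def lpcm_size_gb(channels: int, sample_rate_hz: int, bits_per_sample: int,
                 seconds: int) -> float:
    """Track size in decimal gigabytes (10**9 bytes), the unit disc capacities use."""
    bits = lpcm_bitrate_bps(channels, sample_rate_hz, bits_per_sample) * seconds
    return bits / 8 / 1e9


RUNTIME_S = 2 * 60 * 60  # assumed 2-hour movie

for channels, rate in [(6, 48_000), (8, 48_000), (8, 96_000)]:
    mbps = lpcm_bitrate_bps(channels, rate, 24) / 1e6
    gb = lpcm_size_gb(channels, rate, 24, RUNTIME_S)
    print(f"{channels} ch, {rate // 1000} kHz, 24-bit: {mbps:.3f} Mbps, {gb:.2f} GB")
```

Under these assumptions the 8-channel, 96 kHz case works out to 18.432 Mbps, or roughly 16.6 GB for the audio alone: more than even a dual-layer DVD holds, and a big bite out of a 25GB Blu-ray disc.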

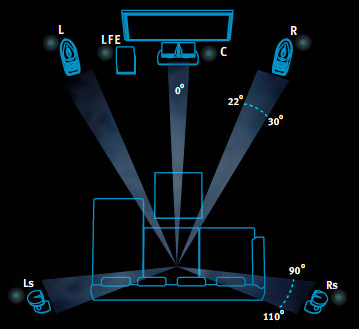

A standard 5.1 channel audio setup. Copyright Dolby Laboratories.

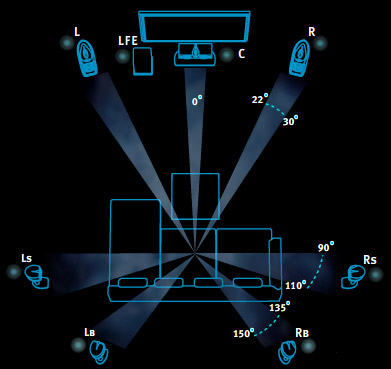

Both Dolby Digital TrueHD and DTS-HD MA improve upon their DVD counterparts by: 1) being lossless (when decoded properly, you get a bit for bit identical copy of the audio the studio originally mastered for the movie), and 2) currently supporting up to 8-channels of audio (aka 7.1 surround sound: right, left, center, left surround, left rear, right surround, right rear, and LFE/subwoofer channel; both specs actually support greater than 8-channels but current implementations are only limited to 8).

A standard 7.1 channel audio setup. Copyright Dolby Laboratories.
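LPCM (linear pulse-code modulation) is the plain, uncompressed form that any of these codecs decodes back to: for every sampling instant there is one sample per channel, and a multi-channel stream is those per-channel samples packed side by side, frame after frame. The sketch below shows that layout for the 5.1 and 7.1 configurations listed above. The channel order is an assumption taken from the article's own listing; real HDMI channel allocation defines its own ordering, and `interleave` and `frame_bytes` are hypothetical helpers, not part of any real API.

```python
# Channel layouts as listed in the article. The ORDER used here for
# interleaving is an illustrative assumption, not the HDMI channel order.
LAYOUTS = {
    "5.1": ["left", "right", "center", "LFE", "left surround", "right surround"],
    "7.1": ["left", "right", "center", "LFE", "left surround", "right surround",
            "left rear", "right rear"],
}


def interleave(channel_samples: list[list[int]]) -> list[int]:
    """Pack per-channel sample lists into one LPCM stream, frame by frame:
    frame n holds sample n of channel 0, then channel 1, and so on."""
    lengths = {len(ch) for ch in channel_samples}
    if len(lengths) != 1:
        raise ValueError("every channel needs the same, non-zero number of lists")
    return [sample for frame in zip(*channel_samples) for sample in frame]


def frame_bytes(layout: str, bits_per_sample: int = 24) -> int:
    """Bytes in one sample frame (one sample for every channel in the layout)."""
    return len(LAYOUTS[layout]) * bits_per_sample // 8


print(len(LAYOUTS["5.1"]), len(LAYOUTS["7.1"]))    # 6 8
print(frame_bytes("7.1"))                          # 24
print(interleave([[1, 2], [10, 20], [100, 200]]))  # [1, 10, 100, 2, 20, 200]
```

So "8-channel" simply means each 7.1 frame carries eight samples; at 24 bits per sample that is 24 bytes per frame, repeated tens of thousands of times per second.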

These standards are lossless, which is great. While we're not quite there on the video side, the fact that we can store and play back the original audio track from a movie is an incredible feat and a feather in the cap of technology in general.
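What "lossless" buys you can be shown with a toy round trip: compress a block of PCM samples, decompress it, and check that every bit matches the original. In this sketch Python's general-purpose `zlib` stands in for the real codecs (it is not the algorithm Dolby TrueHD or DTS-HD MA uses), and the "lossy" path is a deliberately crude stand-in that just drops the low 8 bits of each sample; real lossy codecs such as Dolby Digital use far more sophisticated perceptual coding. The 440 Hz test tone and 16-bit depth are arbitrary choices to keep the example short.

```python
import math
import struct
import zlib

# Toy "studio master": one second of a 440 Hz tone, 16-bit samples at 48 kHz.
RATE = 48_000
master = [int(20_000 * math.sin(2 * math.pi * 440 * n / RATE)) for n in range(RATE)]
master_bytes = struct.pack(f"<{len(master)}h", *master)

# Lossless path: compress, decompress, then compare bit for bit.
packed = zlib.compress(master_bytes, 9)
restored = zlib.decompress(packed)
lossless_ok = restored == master_bytes

# Crude lossy stand-in: discard the low 8 bits of every sample.
lossy = [(s >> 8) << 8 for s in master]
lossy_bytes = struct.pack(f"<{len(lossy)}h", *lossy)
lossy_ok = lossy_bytes == master_bytes

print(f"compressed to {len(packed) / len(master_bytes):.0%} of the original size")
print("lossless round trip identical:", lossless_ok)
print("lossy version identical:", lossy_ok)
```

The lossless path always reproduces the master exactly, whatever the compression ratio turns out to be; the lossy path never can, because the discarded bits are gone for good.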

The support for 8-channel speaker setups is also a boon, because today people with 8-channel setups get those extra two channels through some form of matrixed audio. Dolby Pro Logic IIx and DTS Neo:6 generate one or more additional channels from the existing surround sound channels; the downside is that the results never sound as good as the source. While they make audio come out of every speaker, the original 5.1 audio track generally sounds better: it is less muffled and more distinct.
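The core limitation of matrixed audio is structural: the extra channels are computed from channels that already exist, so no new information is created. The deliberately naive sketch below derives two rear channels by blending the left and right surround channels. It is NOT how Dolby Pro Logic IIx or DTS Neo:6 work (their steering logic is far more sophisticated); `naive_rear_upmix` and its 50/50 mixing weights are hypothetical, chosen only to make the point visible.

```python
# Deliberately naive "upmix": derive rear channels from existing surrounds.
# NOT Dolby Pro Logic IIx or DTS Neo:6; the weights below are arbitrary.

def naive_rear_upmix(left_surround: list[float],
                     right_surround: list[float]) -> tuple[list[float], list[float]]:
    """Return hypothetical (left_rear, right_rear) channels built only by
    mixing the two surround channels; no new information is created."""
    left_rear, right_rear = [], []
    for ls, rs in zip(left_surround, right_surround):
        mid = 0.5 * (ls + rs)               # content shared by both surrounds
        left_rear.append(0.5 * (ls + mid))  # mostly left surround, plus the shared part
        right_rear.append(0.5 * (rs + mid)) # mostly right surround, plus the shared part
    return left_rear, right_rear


ls = [1.0, 0.0, 0.5]
rs = [0.0, 1.0, 0.5]
lr, rr = naive_rear_upmix(ls, rs)
print(lr)  # [0.75, 0.25, 0.5]
print(rr)  # [0.25, 0.75, 0.5]
```

Even in this toy, a sound panned hard to the left surround leaks into the right rear speaker at a third of its level, which smears its position. That is one plausible way to picture why a discrete 7.1 track sounds more distinct than a 5.1 track stretched across eight speakers.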

Now we have these wonderful audio codecs to give us the benefits of fully uncompressed audio without the incredible space requirements, but there is indeed a problem: decoding them on a PC.

53 Comments


Anand Lal Shimpi - Thursday, September 18, 2008

Actually, I believe both TrueHD and DTS-HD MA include the lossy DD/DTS tracks as part of their spec. If you can't decode the lossless version, it should default to the lossy version. This is how it works on CE devices, but admittedly I haven't played with it enough on the PC side.

Sigh, there's so much work to be done here :)

-A

jnmfox - Thursday, September 18, 2008

True, on the PS3 you have to make sure you have LPCM selected as your output, but if the original poster has it hooked up properly, then he should be getting the lossless version.

That is also part of the problem: so many blasted formats. I understand what they are and why we have them, but to the home-theater-uneducated, i.e. my parents, it is just a bunch of mumbo jumbo.

This is a time when the movie industry should be trying to make things simpler; instead, it is just getting more and more complicated, and as we can see from the posts, it turns a lot of people off. A lot of people who may have been paying customers.

There is a lot of work to be done and it is sad we are so far away.

jnmfox - Wednesday, September 17, 2008

Obviously I don't know your setup, but if you have your PS3 set to transcode the audio to LPCM and have it hooked up to your AVR via HDMI, then you should be getting a lossless audio track, not a downsampled DD signal.

"but my HTPC has a much better quality picture due to GPU acceleration magic"

Are you talking about SD-DVD PQ or Blu-ray PQ?

The Blu-ray jukebox would be nice.

sprockkets - Wednesday, September 17, 2008

This is 2000 all over again: trying to find a sound card that actually passed a DD signal over S/PDIF, along with a DVD software program that properly talked to said sound card, was a PITA. Then VLC came out and ended all the BS with the a52 codec, and it was a free program. I remember buying a $20 sound card and finally getting the right WinDVD to work with DD, even if it was over the analog ports. What sucks is they wanted $60 for the same stupid program separately.

Of course, why bother using CyberLink and paying them $$$ for the program (the version bundled with Blu-ray drives is crippled) when you can buy...

fri2219 - Wednesday, September 17, 2008

I fail to see what problem 8-channel audio solves, aside from "how do audio vendors sell more equipment?".

Human brains are lousy sound locators; this just isn't needed. 6-channel audio is pushing it as it is.

When you factor in the fact that most people in the G8 under 40 have damaged their hearing, it's even nuttier.

sxr7171 - Monday, September 22, 2008

Seriously, just like the megapixel race, the number-of-channels race is simply moronic.

fuzz - Thursday, September 25, 2008

don't know if there's any racing going on. i don't think i've got a single movie (regardless of format) that does anything over 5.1ch.

nilepez - Sunday, September 21, 2008

I'm not sure about 7.1 (since virtually nothing is encoded in 7.1), but 6.1 provides a rear center, which can help with pans for people who aren't in the center of the room. 7.1 does the same thing, in theory, but I don't think there's much advantage unless the movie is encoded that way.

I'm actually a bit surprised that BD movies aren't encoded in 6.1 or 7.1.

fuzz - Thursday, September 18, 2008

true where audio is concerned, that's why nobody has made this a priority. the point though is not that 8ch 24/192 is so much better than 6ch 16/48, rather that these ineffective and costly practices are in place when they shouldn't be.

the fidelity argument is also largely true of HD video: a waste of time if you don't own an HD projector and view on a 100" screen. you won't see sh*t-all difference between your DVD and an HD movie on a 32" display if you're sitting further than a meter away.

well okay you might if you *really* pay attention but then you'd be missing the movie ;)

npp - Wednesday, September 17, 2008

You couldn't be more right.

But people like big numbers. Well, 7.1 can't be worse than 5.1, right? Just like megapixels, horsepower, cores, and everything else.

This aside, I find the "bit perfect" hype to be the next stupid thing. In my eyes, it's simply that most people don't want to admit that their ears and brain are imperfect and can be fooled (by means of frequency masking). The word "lossy" seems to be a bad one, but I've heard plenty of "lossy" sound that was better than studio-mastered CDs... And a lot, yes I mean A LOT, of people can't hear any difference between properly compressed and uncompressed tracks at all... And yes, human hearing degrades rapidly with time, to the point where even a 10 kHz sound can't be heard, but you can rest assured that you have all your frequencies up to 48 kHz untouched; it's lossless.

You just have to swallow your ego to admit this, and there are plenty of people who aren't prepared to do it. You have the guys at stereophile.com who can hear differences not only between cables but also between their wall sockets, ladies and gentlemen. A separate power line gave an amplifier a more vivid and punchy sound, for example.

I don't know who is crazy in this case, but I think that people got what they needed a long time ago, and anything beyond that (read: all the 24-bit/192 kHz, 7.1, etc. stuff) produces effects that are more imaginative than objective or quantitative... And of course you'll hear a difference if you've paid enough to feed a small African village just for equipment; you have to.