Intel X25-M SSD: Intel Delivers One of the World's Fastest Drives

by Anand Lal Shimpi on September 8, 2008 4:00 PM EST - Posted in Storage

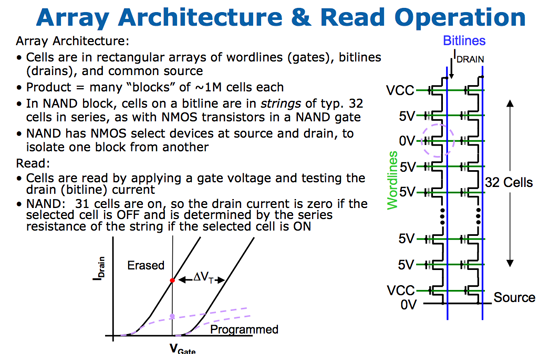

The Flash Hierarchy & Data Loss

We've already established that a flash cell can store either one or two bits depending on whether it's an SLC or MLC device. Group a bunch of cells together and you've got a page. A page is the smallest structure you can program (write to) in a NAND flash device. In the case of most MLC NAND flash, each page is 4KB. A block consists of a number of pages; in the Intel MLC SSD a block is 128 pages (128 pages x 4KB per page = 512KB per block = 0.5MB). A block is the smallest structure you can erase. So when you write to an SSD you can write 4KB at a time, but when you erase from an SSD you have to erase 512KB at a time. I'll explore that a bit further in a moment, but let's look at what happens when you erase data from an SSD.
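To make the page/block arithmetic concrete, here is a minimal sketch using the figures quoted above. The constant and function names are made up for illustration; they don't come from Intel or from any real tool.

```python
# Figures taken from the article; names are illustrative only.
PAGE_SIZE_KB = 4        # page size for most MLC NAND flash
PAGES_PER_BLOCK = 128   # block size of the Intel MLC SSD, in pages

# Smallest unit you can erase, in KB.
BLOCK_SIZE_KB = PAGE_SIZE_KB * PAGES_PER_BLOCK


def pages_needed(file_size_kb: int) -> int:
    """Whole pages needed to hold a file (ceiling division)."""
    return -(-file_size_kb // PAGE_SIZE_KB)


print(BLOCK_SIZE_KB)     # 512
print(pages_needed(8))   # 2
```

A file never occupies a fraction of a page, so a 5KB file would still take two pages in this model.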

Whenever you write data to flash we go through the same iterative programming process again. Create an electric field, electrons tunnel through the oxide and the charge is stored. Erasing the data causes the same thing to happen but in the reverse direction. The problem is that the more times you tunnel through that oxide, the weaker it becomes, eventually reaching a point where it will no longer prevent the electrons from doing whatever they want to do.

On MLC flash that point is reached after about 10,000 erase/program cycles. With SLC it's 100,000 thanks to the simplicity of the SLC design. With a finite lifespan, SSDs have to be very careful in how and when they choose to erase/program each cell. Note that you can read from a cell as many times as you want to; that doesn't reduce the cell's ability to store data. It's only the erase/program cycle that reduces life. I refer to it as a cycle because an SSD has no concept of just erasing a block; the only time it erases a block is to write new data. If you delete a file in Windows but don't create a new one, the SSD doesn't actually remove the data from flash until you're ready to write new data.

Now going back to the disparity between how you program and how you erase data on an SSD: you program in pages and you erase in blocks. Say you save an 8KB file and later decide that you want to delete it; it could just be a simple note you wrote for yourself that you no longer need. When you saved the file, it'd be saved as two pages in the flash memory. When you go to delete it, however, the SSD marks the pages as invalid but it won't actually erase the block. The SSD will wait until a certain percentage of pages within a block are marked as invalid before copying any valid data to new pages and erasing the block. The SSD does this to limit the number of times an individual block is erased, and thus prolong the life of your drive.
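The mark-invalid-then-reclaim behavior described above can be sketched roughly as follows. This is a toy model, not how any particular controller works: the 75% threshold, the `Block` class and the function names are all assumptions chosen for illustration, since the article doesn't say what percentage real drives use.

```python
from dataclasses import dataclass, field

PAGES_PER_BLOCK = 128
GC_THRESHOLD = 0.75  # hypothetical: fraction of invalid pages that triggers a reclaim


@dataclass
class Block:
    # Each page is "free", "valid" or "invalid".
    pages: list = field(default_factory=lambda: ["free"] * PAGES_PER_BLOCK)
    erase_count: int = 0

    def invalid_fraction(self) -> float:
        return self.pages.count("invalid") / len(self.pages)


def delete_pages(block: Block, indices) -> None:
    """Deleting only marks pages invalid; nothing is erased yet."""
    for i in indices:
        if block.pages[i] == "valid":
            block.pages[i] = "invalid"


def maybe_reclaim(block: Block, spare: Block) -> bool:
    """Copy valid pages to a spare block and erase, once enough pages are invalid."""
    if block.invalid_fraction() < GC_THRESHOLD:
        return False
    valid = block.pages.count("valid")
    free_slots = [i for i, state in enumerate(spare.pages) if state == "free"]
    if len(free_slots) < valid:
        return False  # not enough room in the spare block
    for i in free_slots[:valid]:
        spare.pages[i] = "valid"
    block.pages = ["free"] * PAGES_PER_BLOCK  # the whole block is erased at once
    block.erase_count += 1
    return True
```

In this model, deleting the 8KB note invalidates two pages and costs no erase cycle; the block is only erased once three quarters of its pages are stale.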

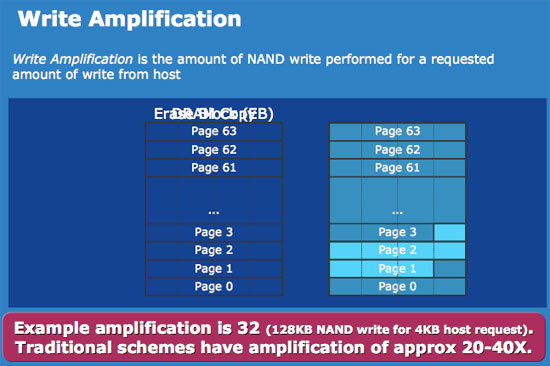

Not all SSDs handle deletion requests the same way; how and when you decide to erase a block with invalid pages determines the write amplification of your device. In the case of a poorly made SSD, if you simply wanted to change a 16KB file the controller could conceivably read the entire block into main memory, change the four pages, erase the block from the SSD and then write the new block with the four changed pages. Using the page/block sizes from the Intel SSD, this would mean that a 16KB write would actually result in 512KB of writes to the SSD: a write amplification factor of 32x.
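The 32x figure is just the ratio of data physically written to flash over data the host asked to write. A minimal sketch of that arithmetic, with function names invented for illustration:

```python
PAGE_SIZE_KB = 4
PAGES_PER_BLOCK = 128
BLOCK_SIZE_KB = PAGE_SIZE_KB * PAGES_PER_BLOCK  # 512KB


def write_amplification(host_kb: float, flash_kb: float) -> float:
    """Ratio of data physically written to flash vs. data the host requested."""
    return flash_kb / host_kb


# Worst case from the text: a 16KB change forces a full-block rewrite.
naive = write_amplification(host_kb=16, flash_kb=BLOCK_SIZE_KB)
print(naive)  # 32.0
```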

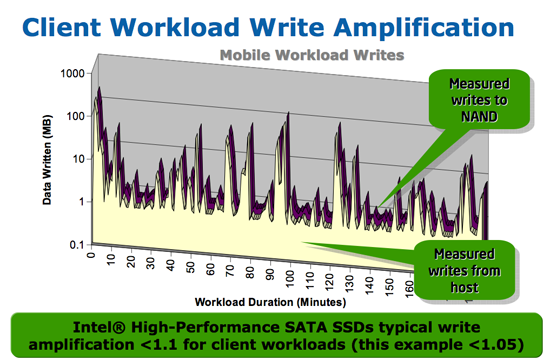

At this point we don't have any data from any of the other SSD controller makers on how they handle situations like this, but Intel states that traditional SSD controllers suffer from write amplification in the 20 - 40x range, which reduces the longevity of their drives. Intel states that on typical client workloads its write amplification factor is less than 1.1x; in other words, you're writing less than 10% more data than you need to. The write amplification factor itself doesn't mean much; what matters is the longevity of the drive, and there's one more factor that contributes there.
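To see why write amplification matters for longevity, here is a rough back-of-the-envelope estimate. It is only a sketch under loud assumptions that are not from this section: an 80GB drive, 100GB of host writes per day, and perfectly even wear across all cells. Only the 10,000-cycle MLC figure and the amplification factors come from the text.

```python
MLC_CYCLES = 10_000        # erase/program cycles per cell, from the article
CAPACITY_GB = 80           # assumption: drive capacity
HOST_WRITES_GB_DAY = 100   # assumption: daily host writes


def lifetime_days(write_amp: float) -> float:
    """Days until every cell hits its cycle limit, assuming perfectly even wear."""
    total_flash_writes_gb = CAPACITY_GB * MLC_CYCLES
    host_writes_possible_gb = total_flash_writes_gb / write_amp
    return host_writes_possible_gb / HOST_WRITES_GB_DAY


for wa in (1.1, 20, 32, 40):
    print(f"write amplification {wa}x: {lifetime_days(wa) / 365:.1f} years")
```

Under these assumptions the gap between a factor near 1.1x and one in the 20 - 40x range is the difference between a drive that lasts decades and one that lasts about a year or less.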

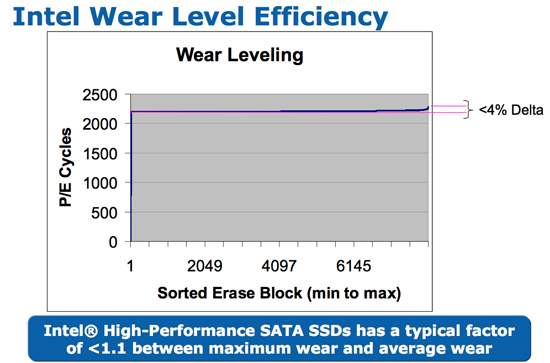

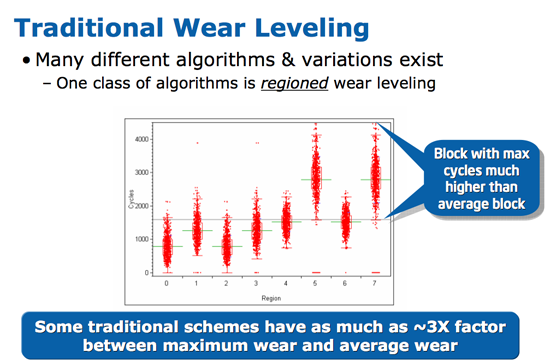

We've already established that with flash there are a finite number of times you can write to a block before it loses its ability to store data. SSDs are pretty intelligent and will use wear leveling algorithms to spread out block usage across the entirety of the drive. Remember that unlike mechanical disks, it doesn't matter where on an SSD you write to; the performance will always be the same. SSDs will thus attempt to write data to all blocks of the drive equally. For example, let's say you download a 2MB file to your brand new, never been used SSD, which gets saved to blocks 10, 11, 12 and 13. You realize you downloaded the wrong file and delete it, then go off to download the right file. Rather than write the new file to blocks 10, 11, 12 and 13, the flash controller will write to blocks 14, 15, 16 and 17. In fact, those four blocks won't get used again until every other block on the drive has been written to once. So while your MLC SSD may only have a lifespan of 10,000 cycles, it's going to last quite a while thanks to intelligent wear leveling algorithms.

Intel's wear leveling efficiency, all blocks get used nearly the same amount

Bad wear leveling, presumably on existing SSDs, some blocks get used more than others

Intel's SSDs carry about a 4% wear leveling inefficiency, meaning that 4% of the blocks on an Intel SSD will be worn at a rate higher than the rest.
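The download example above, where freed blocks go to the back of the line, can be sketched as a simple round-robin allocator. This is a toy model and not Intel's algorithm: the class and method names are invented, blocks are numbered from 0 rather than 10, and for simplicity the erase is counted when the file is deleted rather than when the block is next written.

```python
from collections import deque


class RoundRobinAllocator:
    """Toy wear leveler: freed blocks rejoin the back of the free queue."""

    def __init__(self, num_blocks: int):
        self.free = deque(range(num_blocks))
        self.erase_counts = [0] * num_blocks

    def write(self, n_blocks: int) -> list:
        """Take the next n free blocks from the front of the queue."""
        if n_blocks > len(self.free):
            raise RuntimeError("not enough free blocks")
        return [self.free.popleft() for _ in range(n_blocks)]

    def delete(self, blocks) -> None:
        """Simplification: count the erase at delete time and requeue the block."""
        for b in blocks:
            self.erase_counts[b] += 1
            self.free.append(b)


ssd = RoundRobinAllocator(num_blocks=16)
wrong_file = ssd.write(4)   # 2MB file = four 512KB blocks
ssd.delete(wrong_file)
right_file = ssd.write(4)   # lands on the next four blocks, not the same ones
print(wrong_file, right_file)
```

Because deleted blocks are appended to the end of the queue, they are not reused until every other free block has had a turn, which is the behavior the example describes.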

96 Comments


aeternitas - Thursday, September 11, 2008 - link

Converting all your DVDs to divx is a silly idea. Why would you want to lose dynamic range and overall quality (no matter the settings) for a smaller movie size when 1TB costs 130$?

SSD = Performance (when done right)

HDD = Storage.

johncl - Tuesday, September 9, 2008 - link

Noise isn't a big problem on a 3.5" in a media pc as the other poster states. But heat can be a problem, especially if you plan on passively cooling everything else in the computer. An SSD will solve both problems, but only if the SSD is the only disk in the system. From what I understand you want to have both in yours, which makes sense since movies/music occupy a lot of space. In that case you will not experience any improved performance since the media would have to be read off the mechanical drive anyway.

Your best bet would be to build yourself a small media server and put all noisy hot mechanical disks in that and use small SSDs on your media pc (and indeed any other pc). That way you get the best of both worlds: fast response on application startup/OS boot, silent and no heat, as well as a library of media. You would probably have to use a media frontend that caches information about all media on your server though so it doesn't have to wait on server harddisk spinup etc. every time you browse your media. Perhaps Vista Media Center already does this?

mindless1 - Thursday, September 11, 2008 - link

An SSD will not "solve" a heat problem. The hard drive adds only a small % of heat to a system and, being lower heat density, it has one of the less difficult requirements for cooling.

Speed of the HTPC shouldn't be an issue. Unlike a highly mixed use desktop scenario, all one needs is to use stable apps without memory leaks; then they can hibernate to get rid of the most significant boot-time waiting. Running the HTPC itself, the OS performance difference would be trivial and the bitrate for the videos is easily exceeded by either storage type or an uncongested LAN.

piroroadkill - Tuesday, September 9, 2008 - link

To be honest most decent HDDs don't make significant noise anyway, even further quelled by grommets or suspending the drive.

Also, the reads will occur on the drive you're reading the movie from - so if you plan to use an external HDD as the source, this will make no difference whatsoever.

dickeywang - Tuesday, September 9, 2008 - link

Imagine you have an 80GB SSD, with 75GB already occupied by some existing data (OS, installed software, etc.), so you only have 5GB of space left. Now let's say you write and then erase 100GB/day on this SSD; shouldn't the 100GB/day of data all be written to the 5GB of space? So each cell would be written 100GB/5GB = 20 cycles/day, so you will reach the 10,000 cycles/cell limit in less than 18 months.

Can someone tell me if the analysis above is correct? I guess when they say "100GB/day for 5 years", they should really take into account how much storage space is unoccupied on the SSD, right?

johncl - Tuesday, September 9, 2008 - link

A good wear leveling algorithm can move about "static" blocks so that their cells are also available for wear. I do not know if the current implementations use this though. Anyone know this?

Lux88 - Tuesday, September 9, 2008 - link

I remember reading a number of SSD reviews, but it's the first time I read about the pauses. Indeed, a quick search revealed 5 articles, starting from May 2007, but the conclusions only mentioned a high price and a small capacity as drawbacks. Nothing about freezing or pauses. Some of these 5 probably were SLC drives, some MLC.

It's funny how a simple multitasking test can reveal an Achilles' heel of a large group of products, just when a product appears that doesn't suffer from this particular drawback.

Overall good article and good info. So good that all the previous articles on the matter of SSDs on this site seem bad in comparison. Thanks for the info anyway, better late than never ;).

eva2000 - Tuesday, September 9, 2008 - link

If the OCZ Core controller does indeed have 16KB on chip cache for read/writes maybe that's the problem, as the OCZ Core pdf states for their SSD:

"each page contains 4 Kbytes of data, however, because of the parallelism at the back end of the controller, every access includes simultaneous opening of 16 pages for a total accessible data contingent of 64 Kbytes"

????

araczynski - Tuesday, September 9, 2008 - link

looks quite promising. maybe within about 2 years they'll get the bugs worked out, a more realistic price, and an extended life span, and i'll replace my regular drives.

yyrkoon - Tuesday, September 9, 2008 - link

"No one really paid much attention to Intel getting into the SSD (Solid State Disk) business. We all heard the announcements, we heard the claims of amazing performance, but I didn't really believe it. After all, it was just a matter of hooking up a bunch of flash chips to a controller and putting them in a drive enclosure, right? "

You mean you did not pay attention? I know I did, because Intel has always been serious with things of this nature. That and they are partnered with Crucial (Micron), right? . . . Now if this was some attempt at sarcasm, or a joke . . .

Seriously, and I mean VERY seriously, I was excited when Anandtech 'reported' that Intel/Micron were going to get into the SSD market. After all, affordable SSDs are very desirable, never mind affordable/very good performing SSDs. That, and I knew if Intel got into the market, that we would not have these half-fast implementations that we're seeing now from these so called 'SSD manufacturers'. Well, even Intel is not impervious to screw ups, but they usually learn from their mistakes quickly, and correct them. Micron (most notably Crucial) from my experience does not like to be anything but the best in what they do, so to me this seemed like a perfect team, in a perfect market. Does this mean I think Micron is the best? Not necessarily. Let me just say that after years of dealing with Crucial, I have a very high opinion of Crucial/Micron.

"What can we conclude here? SSDs can be good for gaming, but they aren't guaranteed to offer more performance than a good HDD; and Intel's X25-M continues to dominate the charts."

Are we reading the same charts? These words coming from the mouth of someone who sometimes mentions even the most minuscule performance difference as being a 'clear winner'? Regardless, I think it *is* clear to anyone willing to pay attention to the charts that the Intel SSD "dominates". Now whether the cost of admission is worth this performance gain is another story altogether. I was slightly surprised to see a performance gain in FPS just by changing HDDs, and to be honest I will remain skeptical. I suppose that some data that *could* affect FPS performance could be pulled down while the main game loop is running.

Either way, this is a good article, and there was more than enough information here for me (a technology junky). Now let's hope that Intel lowers the cost of these drives to a more reasonable price (sooner rather than later). The current price arrangement kind of reminds me of CD burner prices years ago.