Intel X25-M SSD: Intel Delivers One of the World's Fastest Drives

by Anand Lal Shimpi on September 8, 2008 4:00 PM EST - Posted in Storage

How Long Will Intel's SSDs Last?

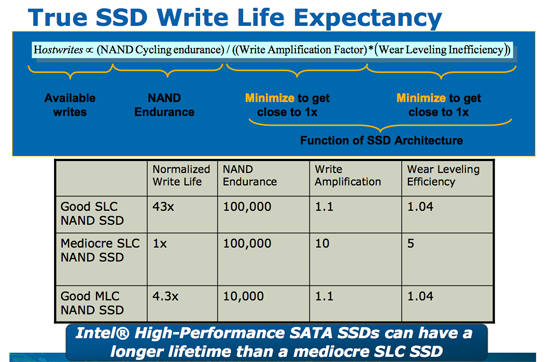

SSD lifespans are usually quantified in the number of erase/program cycles a block can go through before it is unusable. As I mentioned earlier, that's generally 10,000 cycles for MLC flash and 100,000 cycles for SLC. Neither of these numbers is particularly user friendly, since only the SSD itself is aware of how many times its blocks have been programmed. Intel wanted to represent its SSD lifespan as a function of the amount of data written per day, so it met with a number of OEMs and collectively they came up with a target figure: 20GB per day. OEMs wanted assurances that a user could write 20GB of data per day to these drives and still have them last, guaranteed, for five years. Intel had no problems with that.

Intel went one step further and delivered 5x what the OEMs requested. Thus Intel will guarantee that you can write 100GB of data to one of its MLC SSDs every day, for the next five years, and your data will remain intact. The drives only ship with a 3-year warranty, but I suspect that there'd be some recourse if you could prove that Intel's 100GB/day promise was false.
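To put those figures in perspective, here's a rough back-of-the-envelope sketch. It assumes an 80GB drive, decimal gigabytes, 365-day years and perfectly even wear leveling; the function names and the `write_amplification` parameter are my own illustration, not anything Intel has published.

```python
DAYS_PER_YEAR = 365  # ignoring leap days for a rough estimate


def total_host_writes_gb(gb_per_day, years):
    """Total data written by the host over the period, in GB."""
    return gb_per_day * DAYS_PER_YEAR * years


def average_cycles_used(gb_per_day, years, capacity_gb, write_amplification=1.0):
    """Average erase/program cycles per block, assuming ideal wear leveling.

    write_amplification is a hypothetical knob: GB written to flash per GB
    written by the host. 1.0 is the best case, real drives will be higher.
    """
    flash_writes_gb = total_host_writes_gb(gb_per_day, years) * write_amplification
    return flash_writes_gb / capacity_gb


if __name__ == "__main__":
    oem_target = total_host_writes_gb(20, 5)
    intel_promise = total_host_writes_gb(100, 5)
    print(f"OEM target:    {oem_target:,} GB over 5 years")
    print(f"Intel promise: {intel_promise:,} GB over 5 years")

    cycles = average_cycles_used(100, 5, capacity_gb=80)
    print(f"Average cycles on an 80GB drive: {cycles:,.0f} of 10,000 rated")
```

Under those assumptions, 100GB per day for five years works out to 182,500GB of host writes, or roughly 2,281 full-drive writes on an 80GB drive. That is well under the 10,000 cycle rating before any write amplification is factored in.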

Just like Intel's CPUs can run much higher than their rated clock speed, Intel's NAND should be able to last much longer than its rated lifespan.

It's also possible for a flash cell to lose its charge over time (albeit a very long time). Intel adheres to the JEDEC spec on how long your data is supposed to last on its SSDs. The spec states that if you've only used 10% of the lifespan of your device (cycles or GB written), then your data needs to remain intact for 10 years. If you've used 100% of available cycles, then your data needs to remain intact for 1 year. Intel certifies its drives in accordance with the JEDEC specs from 0 - 70C; at optimal temperatures your data will last even longer (these SSDs should operate at below 40C in normal conditions).

Intel and Micron have four joint fabs operated under the IMFT partnership, and these are the fabs that produce the flash going into Intel's SSDs. The 50nm flash used in the launch drives is rated at 10,000 erase/program cycles, but like many of Intel's products there's a lot of built-in margin. Apparently it shouldn't be unexpected to see 2, 3 or 4x the rated lifespan out of these things, depending on temperature and usage model, obviously.

Given the 100GB per day x 5 year lifespan of Intel's MLC SSDs, there's no cause for concern from a data reliability perspective for the desktop/notebook usage case. High load transactional database servers could easily exhaust the lifespan of MLC flash, and that's where SLC is really aimed. These days the MLC vs. SLC debate is more about performance, but as you'll soon see - Intel has redefined what to expect from an MLC drive.

Other Wear and Tear

With no moving parts in an SSD, the types of failures are pretty unique. While erasing/programming blocks is the most likely cause of failure with NAND flash, a secondary cause of data corruption is something known as program disturb. When programming a cell there's a chance that you could corrupt the data in an adjacent cell. This is mostly a function of the quality of your flash, and with Intel being an expert in semiconductor manufacturing, the implication here is that Intel's flash is pretty decent quality.

Intel actually includes additional space on the drive, on the order of 7.5 - 8% more (6 - 6.4GB on an 80GB drive), specifically for reliability purposes. If you start running out of good blocks to write to (nearing the end of your drive's lifespan), the SSD will write to this additional space. One interesting side note: you can actually increase the amount of reserved space on your drive to increase its lifespan. First secure erase the drive, then use the ATA SetMaxAddress command to shrink the user capacity, giving you more spare area.
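The spare area numbers are easy to sanity check. The sketch below is only arithmetic, not a tool that talks to the drive: the function names are hypothetical, the 70GB target is an arbitrary example, and it assumes the raw flash on the drive is simply user capacity plus spare area.

```python
def spare_area_gb(user_capacity_gb, spare_fraction):
    """Spare area expressed as a fraction of the user-visible capacity."""
    return user_capacity_gb * spare_fraction


def spare_after_shrink(user_capacity_gb, spare_gb, new_user_capacity_gb):
    """Spare area once the user capacity is shrunk; raw flash stays fixed."""
    if not 0 < new_user_capacity_gb <= user_capacity_gb:
        raise ValueError("new capacity must be positive and no larger than the original")
    raw_gb = user_capacity_gb + spare_gb  # assumed total flash on the drive
    return raw_gb - new_user_capacity_gb


if __name__ == "__main__":
    low = spare_area_gb(80, 0.075)
    high = spare_area_gb(80, 0.08)
    print(f"Stock spare area on an 80GB drive: {low:.1f} - {high:.1f} GB")

    # Shrinking the user capacity to 70GB is purely an example value.
    new_spare = spare_after_shrink(80, low, 70)
    print(f"Spare area after shrinking to 70GB: {new_spare:.1f} GB")
```

Every gigabyte you give up in user capacity becomes a gigabyte of spare area, so even a modest shrink more than doubles the reserve in this example.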

96 Comments


Mocib - Thursday, October 9, 2008 - link

Good stuff, but why isn't anyone talking about ioXtreme, the PCI-E SSD drive from Fusion-IO? It baffles me just how little talk there is about ioXtreme, and the ioDrive solution in general.

Shadowmaster625 - Thursday, October 9, 2008 - link

I think the Fusion-IO is great as a concept. But what we really need is for Intel and/or AMD to start thinking intelligently about SSDs. AMD and Intel need to agree on a standard for an integrated SSD controller. And then create a new open standard for a Flash SSD DIMM socket.

Then I could buy a 32 or 64 GB SSD DIMM and plug it into a socket next to my RAM, and have a SUPER-FAST hard drive. Imagine an SSD DIMM that costs $50 and puts out even better numbers than the Fusion-IO! With economy of scale, it would only cost a few dollars per CPU and a few dollars more for the motherboard. But the performance would shatter the current paradigm.

The cost of the DIMMs would be low because there would be no expensive controller on the module, like there is now with flash SSDs. And that is how it should be! There is NO need for a controller on a memory module! How we ended up taking this convoluted route baffles me. It is a fatally flawed design that is always going to be bottlenecked by the SATA interface, no matter how fast it is. The SSD MUST have a direct link to the CPU in order to unleash its true performance potential.

This would increase performance so much that if VIA did this with their Nano CPU, they would have an end product that outperforms even Nehalem in real-world everyday PC usage. If you don't believe me, you need to check out the Fusion-IO. With SSD controller integration, you can have Fusion-IO level performance for dirt cheap.

If you understand what I am talking about here, and can see that this is truly the way to go with SSDs, then you need to help get the word to AMD and Intel. Whoever does it first is going to make a killing. I'd prefer it to be AMD at this point but it just needs to get done.

ProDigit - Tuesday, October 7, 2008 - link

Concerning the Vista boot time, I think it'd make more sense to express that in seconds rather than MB/s. I'd rather read that Windows boots in 38 seconds than that Windows boots at 51MB/s. The latter is totally useless to me.

Also, I had hoped for entry-level SSD cards as replacements for mini notebooks, more in the category of sub-$150 drives.

On an XP machine, 32GB is more than enough for a mini notebook (8GB has been done before). Mini notebooks cost about $500, and cheap ones below $300. I, like many out there, am not willing to spend $500 on an SSD when the machine costs the same or less.

I had hoped maybe a slightly lower performance 40GB SSD could be sold for $149, which is the max price for SSD cards for mini notebooks.

For laptops and normal notebooks, drives priced up to the $200-250 range would be reasonable for 64-80GB. I don't agree on the '300-400' region being good for SSD drives. Prices are still waaay too high!

Of course we're paying for a lot of R&D right now; prices should drop a year from now. Notebooks with XP should do with drives starting from 64GB, mini notebooks with drives from 32-40GB, and for desktops 160GB is more than enough. In fact, desktops usually have multiple hard drives, and an SSD is only good for netbooks for its faster speeds and lower power consumption.

If you want to benefit from the speed on a desktop, a 60-80GB drive will more than do, since only Windows, office applications, anti-virus and personal programs like CorelDRAW, Photoshop, or games need to be on the SSD.

Downloaded things, movies, mp3 files, all those things that take up space might as well be saved on an external/internal second HD.

Besides, if you can handle the slightly higher game load times on conventional HDs, many older games already run fine (over 30fps) on full detail, 1900x??? resolution.

Installing older games on an SSD doesn't really benefit anyone, apart from the slightly lower load times.

Seeing that, I'd say for the server market the highest speed and largest disk space matter, and occasionally also the lowest power consumption.

=> highest priced SSDs: X > $1000, X > 164GB/SSD

For the desktop, high to highest speed matters, with less focus on disk space and power consumption.

=> normal priced SSDs: $250 < X < $599, X > 80GB/SSD

For notebooks, high speed and lowest power consumption matter, with smaller size as compensation for price.

=> normal priced SSDs: $175 < X < $399, X > 60GB/SSD

For the mini notebook, normal speed matters, with more focus on lower power consumption and lowest pricing!

=> low powered small SSDs: $75 < X < $199, X > 32GB/SSD

gemsurf - Sunday, October 5, 2008 - link

Just in case anyone hasn't noticed, these are showing up for sale all over the net in the $625 to $750 range. Using Live Search, I bought one from teckwave on eBay yesterday for $481.80 after the Live Search cashback from Microsoft.

BTW, does JMicron do anything right? Seems I had AHCI/RAID issues on the 965 series Intel chipsets a few years back with JMicron controllers too!

Shadowmaster625 - Wednesday, September 24, 2008 - link

Obviously Intel has greater resources than you guys. No doubt they threw a large number of bodies into write optimizations. But it isn't too hard to figure out what they did. I'm assuming that when the controller is free from reads or writes, that is when it takes the time to actually go and erase a block. The controller probably adds up all the pages that are flagged for erasure, and when it has enough to fill an entire block, then it goes and erases and writes that block.

Assuming 4KB pages and 512KB blocks (~150,000 blocks per 80GB device) what Intel must be doing is just writing each page wherever they could shove it. And erasing one block while writing to all those other blocks. (With that many blocks you could do a lot of writing without ever having to wait for one to erase.) And I would go ahead and have the controller acknowledge the data was written once it is all in the buffer. That would free up the drive as far as Windows is concerned.

If I was designing one of these devices, I would definitely demand as much SRAM as possible. I don't buy that line of bull about Intel not using the SRAM for temporary data storage. That makes no sense. You can take steps to ensure the data is protected, but making use of SRAM is key to greater performance in the future. That is what allows you to put off erasing and writing blocks until the drive is idle. Even an SRAM storage time limit of just one second would add a lot of performance, and the risk of data loss would be negligible.

Shadowmaster625 - Wednesday, September 24, 2008 - link

OCZ OCZSSD2-1S32G 32GB SLC, currently $395

The 64GB version is more expensive than the Intel right now, but with the money they've already raked in, who really thinks they won't be able to match Intel performance- or price-wise? Of course they will. So how can this possibly be that great of a thing? So it's a few extra GB. Gimme a break, I would rather take the 32GB and simply juggle around stuff onto my media drive every now and then. Did you know you can simply copy your entire folder from the Program Files directory over to your other drive and then put it back when you want to use it? I do that with games all the time. It takes all of 2 minutes... Why pay hundreds of dollars extra to avoid having to do that? It's just a background task anyway. That's how 32GB has been enough space for my system drive for a long time now. (Well, that and not using Vista.) At any rate this is hardly a game changer. The other MLC vendors will address the latency issue.

cfp - Tuesday, September 16, 2008 - link

Have you seen any UK/Euro shops with these available (for preorder even?) yet? There are many results on the US Froogle (though none of them seem to have stock or availability dates) but still none on the UK one.

Per Hansson - Friday, September 12, 2008 - link

What about the Mtron SSDs? You said they used a different controller vs Samsung in the beginning of the article, but you never benchmarked them.

7Enigma - Friday, September 19, 2008 - link

I would like to know the answer to this as well...

NeoZGeo - Thursday, September 11, 2008 - link

The whole review is based on Intel vs OCZ Core. We all know the OCZ Core had the issues that you have mentioned. However, what I would like to see is other drives benchmarked against the OCZ Core drive, or even the Core II drive. Supposedly the controller has different firmware on the Core II, according to some guys from OCZ, and I find it a bit biased if you are using a different spec item to represent all the other drives in the market.