Intel's Larrabee Architecture Disclosure: A Calculated First Move

by Anand Lal Shimpi & Derek Wilson on August 4, 2008 12:00 AM EST - Posted in GPUs

Drilling Deeper and Making the AMD/NVIDIA Comparison

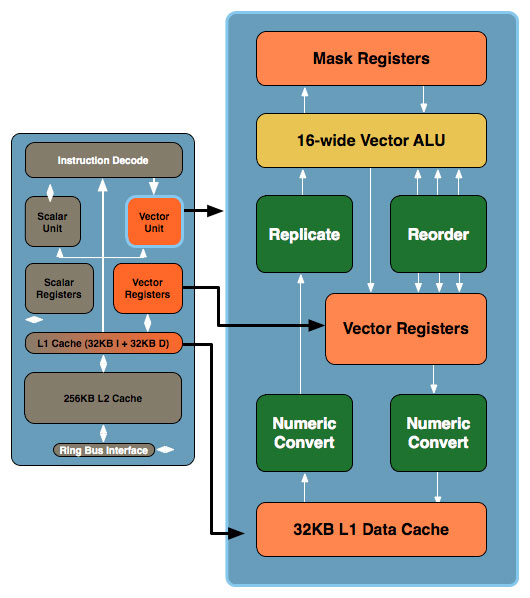

Don't be fooled by the initial diagram: this simple x86 core gets far more complex. In the image below, the block on the left is the Larrabee core we mentioned earlier; on the right we've blown up the vector unit and its associated parts:

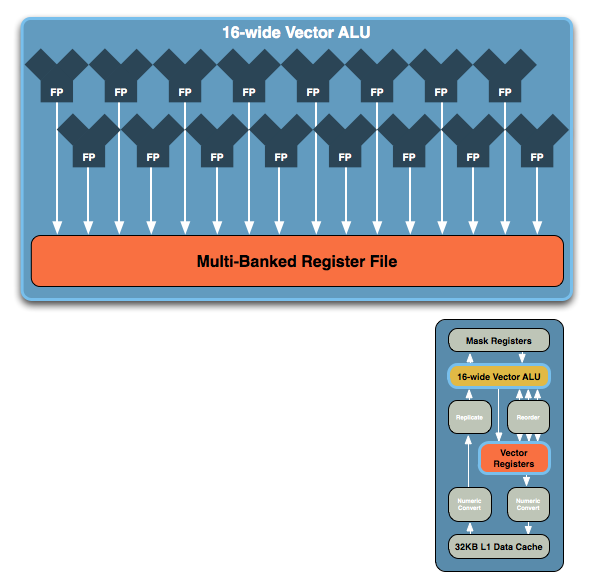

The vector unit is key. Within it you've got a ton of registers and a very wide vector ALU, which leads us to the fundamental building block of Larrabee. NVIDIA's GT200 is built out of Streaming Processors, AMD's RV770 out of Stream Processing Units, and Larrabee's performance comes from these 16-wide vector ALUs:

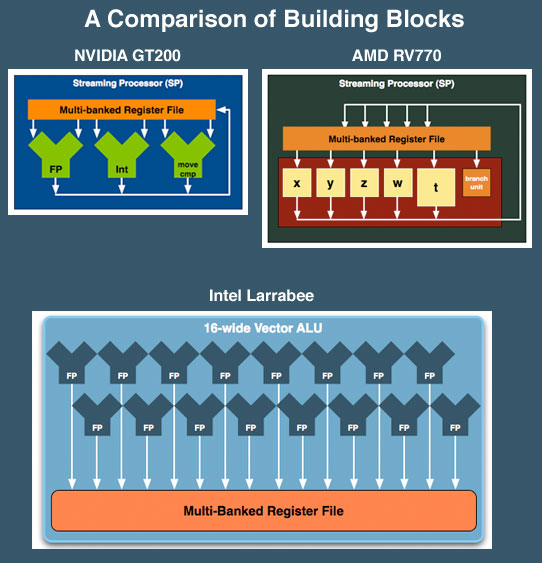

The vector ALU can behave as a 16-wide single precision ALU or an 8-wide double precision ALU, although that doesn't necessarily translate into equivalent throughput (which Intel would not clarify at this point). Compared to ATI and NVIDIA, here's how Larrabee looks at a basic execution unit level:

NVIDIA's SPs work on a single operation at a time, AMD's can work on five, and Larrabee's vector unit can work on sixteen. NVIDIA has a couple hundred of these SPs in its high end GPUs, AMD has 160 of its 5-wide SPs, and Intel is expected to have anywhere from 16 to 32 of these cores in Larrabee. If NVIDIA is on the tons-of-simple-hardware end of the spectrum, Intel is on the exact opposite end of the scale.
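To put rough numbers on that comparison, here's a back-of-the-envelope sketch in Python. It checks that 16 single precision values and 8 double precision values occupy the same number of bytes (which is why one register file can serve both modes), then multiplies unit counts by lanes per unit. The figure of 240 SPs for GT200 is our assumption based on NVIDIA's published GTX 280 spec, standing in for "a couple hundred"; the Larrabee core counts are the expected range, not confirmed products. Lane counts say nothing about clocks or real throughput.

```python
import struct

# One vector register viewed two ways, per the disclosure:
# 16 single precision lanes or 8 double precision lanes.
SP_LANES, DP_LANES = 16, 8
register_bytes_sp = struct.calcsize(f"<{SP_LANES}f")  # 16 x 4-byte floats
register_bytes_dp = struct.calcsize(f"<{DP_LANES}d")  # 8 x 8-byte doubles


def total_lanes(units, lanes_per_unit):
    """Raw count of scalar lanes: number of units times lanes per unit."""
    return units * lanes_per_unit


lane_counts = {
    "NVIDIA GT200": total_lanes(240, 1),         # assumption: 240 scalar SPs
    "AMD RV770": total_lanes(160, 5),            # 160 five-wide SPs
    "Larrabee (16 cores)": total_lanes(16, 16),  # low end of expected range
    "Larrabee (32 cores)": total_lanes(32, 16),  # high end of expected range
}

if __name__ == "__main__":
    print("register bytes (SP, DP):", register_bytes_sp, register_bytes_dp)
    for name, lanes in lane_counts.items():
        print(f"{name}: {lanes} lanes")
```

The takeaway is only about granularity: Larrabee reaches a comparable lane count with far fewer, far wider units.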

We've already shown that AMD's architecture requires a lot of help from the compiler to properly schedule and maximize the utilization of its execution resources within one of its 5-wide SPs. With Larrabee, which is more than three times as wide, the importance of the compiler is tremendous. Luckily for Larrabee, some of the best (if not the best) compilers are made by Intel. If anyone could get away with this sort of an architecture, it's Intel.
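Why the compiler matters so much for a wide unit can be shown with a toy scheduler. The Python sketch below greedily packs operations into fixed-width bundles, only issuing an operation once everything it depends on has completed in an earlier bundle. It is a simplified illustration of the packing problem, not AMD's or Intel's actual compiler algorithm, and `pack_bundles` and `utilization` are hypothetical names of our own.

```python
def pack_bundles(deps, width=5):
    """Greedily pack ops into bundles of at most `width` independent ops.

    deps maps each op name to the set of ops it depends on.
    An op may issue only when all its dependencies sit in earlier bundles.
    """
    done = set()
    remaining = list(deps)  # keeps program order
    bundles = []
    while remaining:
        bundle = [op for op in remaining if deps[op] <= done][:width]
        if not bundle:
            raise ValueError("dependency cycle or unknown dependency")
        bundles.append(bundle)
        done.update(bundle)
        remaining = [op for op in remaining if op not in bundle]
    return bundles


def utilization(bundles, width=5):
    """Fraction of issue slots that carried useful work."""
    issued = sum(len(b) for b in bundles)
    return issued / (len(bundles) * width)


if __name__ == "__main__":
    independent = {op: set() for op in "abcde"}
    chain = {"a": set(), "b": {"a"}, "c": {"b"}, "d": {"c"}, "e": {"d"}}
    print(utilization(pack_bundles(independent)))  # all five slots filled
    print(utilization(pack_bundles(chain)))        # one slot per bundle
```

Five independent operations fill a single 5-wide bundle, while a five-step dependency chain needs five bundles with four slots idle in each. The wider the unit, the more independent work the compiler has to find to keep it busy.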

At the same time, while we don't have a full understanding of the details yet, we get the idea that Larrabee's vector unit is sort of a chameleon. From the information we have, these vector units could execute atomic 16-wide ops for a single thread of a running program and can handle register swizzling across all 16 execution units. This implies something very AMD-like and wide. But it also looks like each of the 16 vector execution units, using the mask registers, can branch independently (looking much more like NVIDIA's solution).
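To make the chameleon idea concrete, here's a toy Python model of how a mask register lets one 16-wide instruction stream behave as if each lane had branched on its own. This is only a sketch of the general predication technique under our own assumptions: Intel hasn't disclosed the actual instruction semantics, and the names `compare_gt`, `masked_op` and `swizzle` are ours, not Larrabee instructions.

```python
LANES = 16


def compare_gt(a, b):
    """Vector compare: produce one mask bit (bool) per lane."""
    return [x > y for x, y in zip(a, b)]


def masked_op(op, dst, a, b, mask):
    """Apply op in lanes where the mask is set; keep dst in the others."""
    return [op(x, y) if m else d for d, x, y, m in zip(dst, a, b, mask)]


def swizzle(v, pattern):
    """Rearrange lanes: output lane i takes input lane pattern[i]."""
    return [v[i] for i in pattern]


def branchy_kernel(values, threshold):
    """Per-lane 'if v > threshold: v -= threshold, else: v *= 2'.

    Both sides of the branch run as full-width ops; the mask decides
    which lanes each one is allowed to write.
    """
    thresh = [threshold] * LANES
    two = [2] * LANES
    mask = compare_gt(values, thresh)
    out = list(values)
    out = masked_op(lambda x, y: x - y, out, values, thresh, mask)
    inverse = [not m for m in mask]
    out = masked_op(lambda x, y: x * y, out, values, two, inverse)
    return out


if __name__ == "__main__":
    print(branchy_kernel(list(range(LANES)), 7))
    print(swizzle(list(range(LANES)), list(reversed(range(LANES)))))
```

Note the cost this implies: when lanes disagree, both sides of the branch are issued, so divergent code pays for both paths even though each lane keeps only one result.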

We've already seen how AMD and NVIDIA architectural differences show distinct advantages and disadvantages against each other in different games. If Intel is able to adapt the way the vector unit is used to suit specific situations, they could have something huge on their hands. Again, we don't have enough detail to tell what's going to happen, but things do look very interesting.

101 Comments

iop3u2 - Monday, August 4, 2008

First of all it's called d3d not directx.

Secondly you seem to imply that direct3d/opengl will cease to exist at some point if larrabee succeeds. I think you don't quite get what they are. They are APIs. Larrabee won't make programming APIless. Are you serious anand or what?

The Preacher - Tuesday, August 5, 2008

It could make programming D3D/OpenGL-less for programs/PCs that exploit Larrabee. And if the share of such programs/PCs increases, the share of competing solutions logically decreases and might eventually vanish (although not anytime soon).

iop3u2 - Tuesday, August 5, 2008

Just because you can for example write a c program without the c lib it doesn't mean that people follow that road. It's all about what programmers will choose to do.

Also, even if they do vanish there will still be a need for an api. So there will either be a new api or they won't vanish. Both situations make no difference whatsoever to the fact that larrabee will always need api implementations.

ZootyGray - Tuesday, August 5, 2008

right - and I will put hotels on boardwalk and park place :)

I used to own an 815chipset - it was like version 14 or whatever so it didn't suk as bad as some of the earlier ones - but it did blow up - I think pixelated FarCry and Doom3 really killed it. But o sure, the software fixes and bubblegum patches made it good, for a while. I really do think I am going to wait for this just so I can watch the lineups of returns - or read the funny forums posts of sheep seeking help - baaaahaha :) The best part is that it doesn't exist - delay, postpone - kinda like the 64bit chip also. Maybe later, maybe. But the ads invade the livingroom.

Make sure you keep yer getouttajailfree card - receipt.

Ummm let's see: I think I will buy this one!

Reality is that 4870x2 is on deck. Not 'rumour and sigh'. I just know there will be a 16page article on that - not!

Pok3R - Monday, August 4, 2008

Larrabee means good news for consumers, and definitely bad news for nvidia. Maybe the worst in decades...with AMD and Ati having enough human resources now to face it, and Nvidia having nothing but bad policies and falling stocks despite good $elling numbers...The future, today, is definitely Intel vs AMD/Ati.

initialised - Monday, August 4, 2008

a miniature render farm (you know like they use to make films like Hulk and WALL-E) on a chip. Lets hope AMD and nVidia can keep up.

ZootyGray - Monday, August 4, 2008

Really? Guess again. There is NOT anything to keep up to.

I do not accept that the grafx loser in the industry is going to simply become numero uno overnight.

You really think that nvidia and ati have been sleeping for decades?

Supporting the destruction of ntel's only competitors leaves us at the mercy of a group that's already been busted for monop and antitrst.

Well written article? Of course, but I think it's like you are all fished in on many fronts. Nothing is really known except spin. This is beachfront property in the desert.

There's nothing to watch except what we usually watch - released hardware benchmarks.

I tell you AMD is going to be the cpu of choice in a few months when the truth about the bias in the benchies is revealed. And try - try real hard - to imagine ati+amd creating the ultimate cpu+gpu powerhouse. ntel needs this hype because I am not the only one with vision here. they are rich and scared, for now.

but such talk seems to be frowned upon - so let's all cheer for the best grafx manufacturer - ntel = kkaakk! sorry to offend, so many of you just might be lost in the paid mob. so just watch and you will see for yourself- no need to believe me. I really know almost nothing - but I am free to see for myself. sorry to offend - I just can't cosign bs. but that's just me and a very few other posters here who have also been criticized. watch and see for yourself. watch...

Mr Roboto - Monday, August 4, 2008

I'd have to agree with the skeptics here. While the article is well written and informative (What AnandTech articles aren't?) it's purely speculation that Intel can get all of the variables right. How does a company that hasn't made a competitive GPU since the days of the 486 suddenly jump to Nvidia and ATI GPU type levels on their first try, never mind surpassing them. It's absolutely absurd to think that these chips are going to replace GPU's in terms of performance. I believe Larrabee will kick the shit out of Intel's own IGP but then again that's not much of a feat.

Again I have to agree with previous posters that Intel just isn't that innovative. Even as I speak there are many lawsuits pending against Intel, most of them having to do with accusations of stolen IP that were used to design the Core2Duo. Antitrust suits aside, it's clear that Intel is similar to MS in that they just bully, bribe or outright steal to get ahead then pay whatever fines are levied because in the end they can never fine them enough to not make it worthwhile for Intel or MS to break the law.

The 65nm Core2Duo is amazing. The 45nm E8400 I just bought is even more so. However the more I think about Intel's past failures as well as how they operate as a company the more far fetched this whole thing becomes.

IMO they should have tried to compete in the dedicated GPU market before trying something like this. From a purely marketing standpoint Intel and graphics just don't go together. To come in to a new field in which they are unproven (I would bet Intel executives believe that building IGP's have somehow given them experience) and make outrageous claims such as the GPU is dead and Intel will now be the leader, is absurd.

JarredWalton - Tuesday, August 5, 2008

I think a lot of you are missing the point that we fully understand this is all on paper and what remains to be seen is how it actually pans out in practice. Without the necessary drivers to run DirectX and OpenGL at high performance, this will fail. How many times was that mentioned? At least two or three.

Now, the other thing to consider is that in terms of complexity, a modern Core 2 core is far more complex to design than any of the GPUs out there. You have all sorts of general functions that need to be coded. A GPU core these days consists of a relatively simple core that you then repeat 4, 8, 16, 32, etc. times. Intel is doing exactly that with Larrabee. They went back to a simple x86 core and tacked on some serious vector processing power. Sounds a lot like NVIDIA's SP or ATI's SPU really.

Fundamentally, they have what is necessary to make this work, and all that remains is to see if they can pull off the software side. That's a big IF, but then Intel is a big company. We have reached the point where GPUs and CPUs are merging - CUDA and GPGPU aim to do just that in some ways - so for Intel to start at the CPU side and move towards a GPU is no less valid an approach than NVIDIA/ATI starting at GPUs and moving towards general purpose CPUs.

Midwayman - Monday, August 4, 2008

I'm not interested in the graphics so much. It may or may not compete with the top end nvidia chips if released on time. What is more interesting is if this can easily be integrated as a general purpose cpu for non-graphics work? Imagine getting a benefit out of your gpu 100% of the time, not just when you're gaming. I know it's possible to use more modern GPU's this way if you code specifically for them, but with its x86 architecture, it might be able to do it without having apps specifically coded for it.