The Radeon HD 4850 & 4870: AMD Wins at $199 and $299

by Anand Lal Shimpi & Derek Wilson on June 25, 2008 12:00 AM EST - Posted in GPUs

Power Consumption

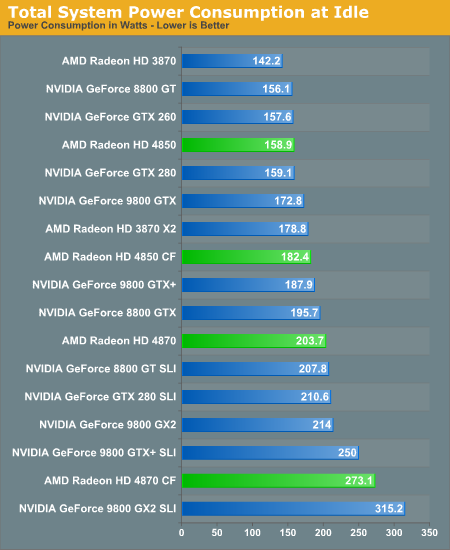

NVIDIA's idle power optimizations do a great job of keeping its very power-hungry parts frugal in 2D mode. Many people I know leave their computers on all day, and few of them game 24 hours a day (which wouldn't be great for their health anyway), so a graphics card spends much of its life idling. Idle power is important, especially as energy costs rise, and drawing less power when less power is needed is a great direction to move in. AMD's 4870 is less power-friendly at idle, but the 4850 is reasonably well balanced.
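As a rough illustration of why idle draw matters for machines that stay on all day, the Python sketch below converts a constant power gap into yearly energy and cost. The 30 W gap and the $0.10/kWh price are hypothetical placeholders chosen for easy arithmetic, not values read from the idle power chart.

```python
# Hypothetical inputs: these are NOT measured values from our charts.
IDLE_DELTA_WATTS = 30.0      # assumed extra idle draw of one card vs. another
PRICE_PER_KWH = 0.10         # assumed electricity price in dollars per kWh
HOURS_PER_YEAR = 24 * 365    # machine left on around the clock


def yearly_energy_kwh(watts: float, hours: float = HOURS_PER_YEAR) -> float:
    """Energy in kWh consumed by a constant load of `watts` over `hours`."""
    return watts * hours / 1000.0


def yearly_cost(watts: float, price_per_kwh: float = PRICE_PER_KWH,
                hours: float = HOURS_PER_YEAR) -> float:
    """Dollar cost of running a constant load of `watts` for `hours`."""
    return yearly_energy_kwh(watts, hours) * price_per_kwh


if __name__ == "__main__":
    kwh = yearly_energy_kwh(IDLE_DELTA_WATTS)
    print(f"{kwh:.1f} kWh per year, about ${yearly_cost(IDLE_DELTA_WATTS):.2f}")
```

With those placeholder inputs it prints "262.8 kWh per year, about $26.28"; substituting the idle figures from the chart and a local electricity rate gives a real estimate.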

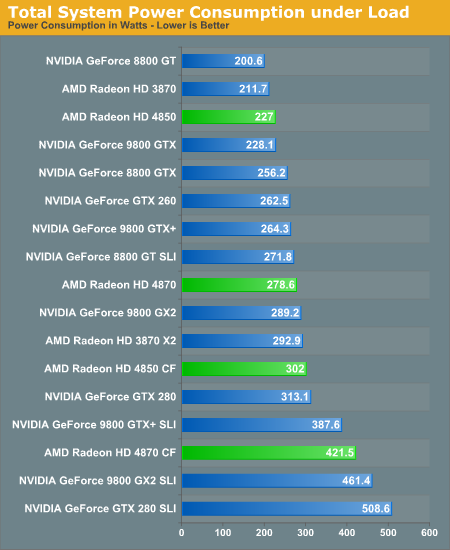

Moving on to load power.

These numbers are the peak power draw observed over multiple runs of 3DMark Vantage's third feature test (pixel shaders). This test heavily loads the GPU while being very light on the rest of the system, giving us as clear a picture of relative GPU power draw as possible. Playing games will incur much higher system-level power draw, as the CPU, memory, drives and other hardware may also hit their own peak power draw at the same time. Both 4850 CrossFire and 4870 CrossFire require large, stable PSUs in order to play actual games.

Clearly the 4870 is a power junkie, posting the second-highest peak power of any card (behind only NVIDIA's GTX 280). While a single 4870 draws more power than the 9800 GX2, quad SLI does peak higher than 4870 CrossFire. 4850 power draw is on par with its competitors, but 4850 CrossFire does seem to have an advantage in power draw over the 9800 GTX+.

Heat and Noise

These cards get way too hot. I keep burning my hands when I try to swap them out, and Anand seems to enjoy using recently tested 4800 series cards as space heaters. We didn't collect heat data for this article, but our 4850 tests show that things get toasty, and the 4870 gets extremely hot.

The fans are fairly quiet most of the time, but accepting some added noise in exchange for less system heat might be a good trade-off. Even if it only happens under load, making the rest of a system incredibly hot isn't the right way to go, as other fans will need to work harder and components might start to fail.

The noise level of the 4850 fan is alright, but when the 4870 spins up I tend to glance out the window to make sure a jet isn't about to fly into the building. It's extremely loud under load, but it doesn't get there fast and it doesn't stay there long. AMD seems to have favored cooling things down quickly and then returning to quiet running.

215 Comments


BusterGoode - Sunday, June 29, 2008

Thanks, great article. By the way, AnandTech is my first stop for reviews.

jay401 - Wednesday, June 25, 2008

Good, but I just wish AMD would give it a full 512-bit memory bus. I'm tired of 256-bit. It's so dated, and it shows in the overall bandwidth compared to NVIDIA's cards with 512-bit buses. All that fancy GDDR4/5 doesn't actually shoot them way ahead of NVIDIA's cards in memory bandwidth, because they halve the bus width by going with 256-bit instead of 512-bit. When they offer 512-bit, the cards will REALLY shine.

Spoelie - Thursday, June 26, 2008

Except that when R600 had a 512-bit bus, it didn't show any advantage over RV670 with a 256-bit bus. And that was GDDR3 vs. GDDR3, not GDDR5 as in the RV770's case.

JarredWalton - Thursday, June 26, 2008

R600 was a 512-bit ring bus with a 256-bit memory interface (four 64-bit interfaces). Read about it here for a refresh: http://www.anandtech.com/showdoc.aspx?i=2552&p... Besides being more costly to implement, it used a lot of power and didn't actually end up providing provably better performance. I think it was an interesting approach that turned out to be less than perfect... just like NetBurst was an interesting design that turned out to have serious power limitations.

Spoelie - Thursday, June 26, 2008

Except that it was not; that was R520 ;) R580 is the X19x0 series, and that second one proved to be the superior solution over time. R600 is the HD 2900 XT, and it had a 1024-bit ring bus with a 512-bit memory interface.

DerekWilson - Sunday, June 29, 2008

Yeah, R600 was 512-bit: http://www.anandtech.com/showdoc.aspx?i=2988&p...

Looking at external bus width is an interesting challenge, and GDDR5 makes things a little crazier in that clock speed and bus width can be so low with such high data rates.

But the 4870 does have 16 memory modules on it, so that's a bit of a barrier to wider buses.
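The bus-width versus data-rate trade-off argued over in these comments comes down to simple arithmetic. The Python sketch below is a simplified peak-bandwidth model, not a description of how either vendor's memory controller behaves; the transfers-per-clock values are stated assumptions, and every configuration other than 256-bit GDDR5 at 900 MHz is hypothetical.

```python
# Simplified model: peak bandwidth = bus width (bytes) * transfers per second.
# Assumption: GDDR3 moves 2 bits per pin per clock (double data rate) and
# GDDR5 moves 4 bits per pin per quoted memory clock. Real cards lose some
# of this theoretical peak to refresh, command overhead and access patterns.
TRANSFERS_PER_CLOCK = {"GDDR3": 2, "GDDR5": 4}


def peak_bandwidth_gbs(bus_width_bits: int, clock_mhz: float, mem_type: str) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    transfers_per_sec = clock_mhz * 1e6 * TRANSFERS_PER_CLOCK[mem_type]
    return bus_width_bits / 8 * transfers_per_sec / 1e9


configs = [
    ("256-bit GDDR5 @ 900 MHz", 256, 900, "GDDR5"),
    ("256-bit GDDR3 @ 1800 MHz (hypothetical)", 256, 1800, "GDDR3"),
    ("512-bit GDDR3 @ 900 MHz (hypothetical)", 512, 900, "GDDR3"),
    ("512-bit GDDR5 @ 900 MHz (hypothetical)", 512, 900, "GDDR5"),
]

if __name__ == "__main__":
    for name, width, clock, kind in configs:
        print(f"{name}: {peak_bandwidth_gbs(width, clock, kind):.1f} GB/s")
```

Under these assumptions the first three configurations all come out to 115.2 GB/s, which is the sense in which 900 MHz GDDR5 on a 256-bit bus matches either 1800 MHz GDDR3 on the same bus or a 512-bit GDDR3 bus at half the per-pin data rate; only a wider bus with GDDR5 (the last line, 230.4 GB/s) would double it.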

JarredWalton - Wednesday, June 25, 2008

I'd argue that the 512-bit memory interface on NVIDIA's cards is at least partly to blame for their high pricing. All things being equal, a 512-bit interface costs a lot more to implement than a 256-bit interface. GDDR5 at 900MHz is effectively the same as GDDR3 at 1800MHz... except no one is able to make 1800MHz GDDR3. Latencies might favor one solution or the other, but latencies are usually hidden by caching and other design decisions in the GPU world.

geok1ng - Wednesday, June 25, 2008

The tests showed what I feared: my 8800 GT is getting too old to drive my Apple display at 2560x1600, even without AA! But the tests also showed that the 512MB of GDDR5 on the 4870 justifies its higher price tag over the 4850, something the 3870/3850 pair failed to demonstrate. The question remains: will 1GB of GDDR5 dethrone NVIDIA and rule the 30-inch realm of single-GPU solutions?

IKeelU - Wednesday, June 25, 2008

"It is as if AMD and NVIDIA just started pulling out hardware and throwing it at each other"

This makes me crack up... I just imagine two bruised and sweaty middle-aged CEOs flinging PCBs at each other, like children in a snowball fight.

Thorsson - Wednesday, June 25, 2008

The heat is worrying. I'd like to see how aftermarket coolers work with a 4870.