NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST - Posted in GPUs

Lots More Compute, a Leetle More Texturing

NVIDIA's GT200 GPU brings a significant increase in computational power thanks to its 240 streaming processors (SPs), up from 128 in the previous-generation G80. Transistor count rises dramatically as well, from 681 million in G80 to 1.4 billion in GT200.

However, GT200's increase in compute power isn't matched by a similar increase in texture processing power. On the previous page we outlined how each Texture Processing Cluster (TPC) went from two Streaming Multiprocessors (SMs) to three, and how the chip now has ten TPCs, up from eight in the GeForce 8800 GTX.
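As a quick check on the numbers, the SP totals follow from multiplying TPCs by SMs per TPC by SPs per SM. The figure of 8 SPs per SM is inferred from the per-TPC counts in this article (16 SPs across two SMs, 24 across three); the function and constant names in this minimal Python sketch are illustrative.

```python
# SPs per SM, inferred from the article: 16 SPs / 2 SMs (G80) and 24 SPs / 3 SMs (GT200).
SPS_PER_SM = 8


def total_sps(tpcs: int, sms_per_tpc: int) -> int:
    """Total streaming processors on a chip built from identical TPCs."""
    return tpcs * sms_per_tpc * SPS_PER_SM


g80 = total_sps(tpcs=8, sms_per_tpc=2)     # GeForce 8800 GTX
gt200 = total_sps(tpcs=10, sms_per_tpc=3)  # GeForce GTX 280
print(g80, gt200)  # 128 240
```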

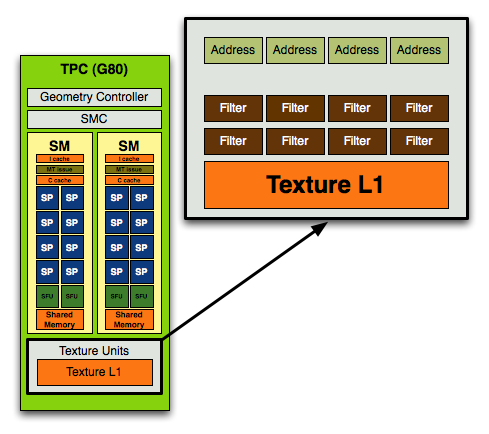

In the original G80 core, used in the GeForce 8800 GTX, NVIDIA's texture block looked like this:

In each block you had 4 texture address units and 8 texture filtering units.

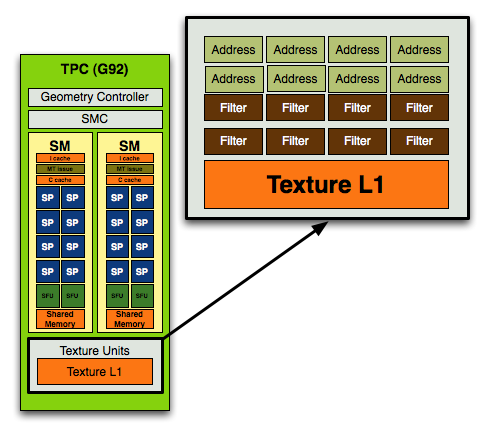

With the move to G92, used in the GeForce 8800 GT, 8800 GTS 512 and 9800 GTX, NVIDIA doubled the number of texture address units and achieved a 1:1 ratio of address to filtering units:

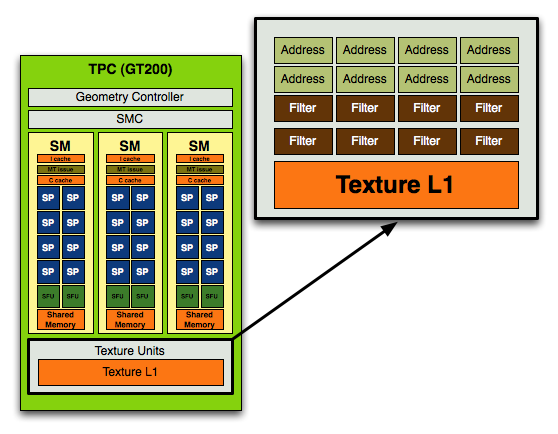

With GT200 in the GeForce GTX 280/260, NVIDIA kept the address-to-filtering ratio at 1:1 but increased the ratio of SPs to texture processors:

In G92, each TPC paired 8 address and 8 filtering units with 16 streaming processors; in GT200, each TPC keeps the same 8 address and 8 filtering units but feeds a larger cluster of 24 SPs.
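The shift in balance is easiest to see as a ratio. This minimal Python sketch takes the per-TPC counts from the article's comparison table and computes how many SPs share each texture filtering unit, plus the address-to-filtering ratio; the dictionary and function names are illustrative, not anything NVIDIA defines.

```python
# Per-TPC unit counts from the article's comparison table.
PER_TPC = {
    "G80":   {"sps": 16, "tex_addr": 4, "tex_filter": 8},
    "G92":   {"sps": 16, "tex_addr": 8, "tex_filter": 8},
    "GT200": {"sps": 24, "tex_addr": 8, "tex_filter": 8},
}


def sps_per_filter_unit(chip: str) -> float:
    """Streaming processors sharing each texture filtering unit within one TPC."""
    unit = PER_TPC[chip]
    return unit["sps"] / unit["tex_filter"]


def addr_to_filter_ratio(chip: str) -> float:
    """Texture address units per texture filtering unit within one TPC."""
    unit = PER_TPC[chip]
    return unit["tex_addr"] / unit["tex_filter"]


for chip in PER_TPC:
    print(f"{chip}: {sps_per_filter_unit(chip):.0f} SPs per filtering unit, "
          f"address:filter = {addr_to_filter_ratio(chip):.1f}")
```

It reports 2 SPs per filtering unit for G80 and G92 and 3 for GT200, while the address-to-filtering ratio goes from 0.5 on G80 to 1.0 on both G92 and GT200.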

Here's how the specs stack up across the generations:

| NVIDIA Architecture Comparison | G80 | G92 | GT200 |
|---|---|---|---|
| Streaming Processors per TPC | 16 | 16 | 24 |
| Texture Address Units per TPC | 4 | 8 | 8 |
| Texture Filtering Units per TPC | 8 | 8 | 8 |
| Total SPs | 128 | 128 | 240 |
| Total Texture Address Units | 32 | 64 | 80 |
| Total Texture Filtering Units | 64 | 64 | 80 |

For an 87.5% increase in compute, there's a mere 25% increase in texture processing power. This ratio echoes what NVIDIA has been preaching for years: games are running more complex shaders and are no longer as bound by texture processing as they once were. If that weren't true, we'd expect GT200 to be only about 25% faster than G80 at the same clock speed, rather than something much greater.
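The two percentages come straight from the table's totals at equal clock speeds. This short Python sketch rebuilds the totals from per-TPC counts (8 TPCs for G80, 10 for GT200, as stated above) and computes the per-clock increases; the helper names are hypothetical.

```python
# Per-clock unit counts from the article; clock speeds are deliberately ignored.
TPCS = {"G80": 8, "GT200": 10}
SPS_PER_TPC = {"G80": 16, "GT200": 24}
FILTER_PER_TPC = {"G80": 8, "GT200": 8}


def total(per_tpc: dict, chip: str) -> int:
    """Chip-wide unit count: units per TPC times number of TPCs."""
    return per_tpc[chip] * TPCS[chip]


def pct_increase(old: float, new: float) -> float:
    """Percentage increase going from old to new."""
    return (new / old - 1.0) * 100.0


sp_gain = pct_increase(total(SPS_PER_TPC, "G80"), total(SPS_PER_TPC, "GT200"))
tex_gain = pct_increase(total(FILTER_PER_TPC, "G80"), total(FILTER_PER_TPC, "GT200"))
print(f"SPs: +{sp_gain:.1f}%")               # SPs: +87.5%
print(f"Texture filtering: +{tex_gain:.1f}%")  # Texture filtering: +25.0%
```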

It also means that GT200's performance advantage over G80- or G92-based cards (e.g. the GeForce 9800 GTX) will depend largely on how compute-bound the games we're testing are.
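To make that dependence concrete, here is a deliberately simplified Python model. It is an assumption for illustration, not NVIDIA's or AnandTech's method: at equal clocks, a frame's time is split into a shader-bound share that shrinks with the 1.875x SP count and a texture-bound share that shrinks with the 1.25x filtering count, while ROPs, memory bandwidth and clock speeds are ignored. The function name `estimated_speedup` is hypothetical.

```python
COMPUTE_SCALE = 240 / 128  # GT200 vs G80 SPs per clock (1.875x)
TEXTURE_SCALE = 80 / 64    # GT200 vs G80 texture filtering units per clock (1.25x)


def estimated_speedup(compute_fraction: float) -> float:
    """Hypothetical same-clock speedup of GT200 over G80.

    Assumes frame time splits cleanly into a shader-bound part
    (compute_fraction) and a texture-bound part (the remainder),
    each shrinking in proportion to the extra hardware.
    """
    if not 0.0 <= compute_fraction <= 1.0:
        raise ValueError("compute_fraction must be between 0 and 1")
    new_time = (compute_fraction / COMPUTE_SCALE
                + (1.0 - compute_fraction) / TEXTURE_SCALE)
    return 1.0 / new_time


for f in (0.0, 0.5, 0.9, 1.0):
    print(f"{f:.0%} shader-bound -> {estimated_speedup(f):.2f}x")
```

Under this toy model a purely texture-bound workload gains only 1.25x, a half-and-half split lands at 1.5x, and only a fully shader-bound workload approaches the 1.875x SP ratio.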

This tilt toward compute over texturing isn't new with GT200; it has been evident in NVIDIA architectures for years, dating back to the ill-fated GeForce FX. NVIDIA sacrificed memory bandwidth on the GeForce FX, equipping it with a narrow 128-bit memory bus (compared to ATI's 256-bit interface on the Radeon 9700 Pro), and instead focused on building a much more powerful compute engine. Unfortunately, that was the wrong bet at the time, and the GeForce FX was hardly competitive (for more reasons than just a lack of memory bandwidth), but today we're dealing with a very different world. Complex shader programs run on every pixel on the screen, and there's a definite need for more compute power in today's GPUs.

An Increase in Rasterization Throughput

Beyond the 25% increase in texture processing capability, NVIDIA also gave GT200 two more ROP partitions. The GeForce 8800 GTX had six ROP partitions, each capable of outputting a maximum of 4 pixels per clock; GT200 has eight.

With eight ROP partitions, GT200 can output a maximum of 32 pixels per clock, up from 24 pixels per clock on the GeForce 8800 GTX. G92-based cards such as the 9800 GTX have only four ROP partitions, for 16 pixels per clock.

The pixel blend rate on G80/G92 was half-speed: the GeForce 8800 GTX, for example, could output 24 pixels per clock but blend only 12. Thanks to the 65nm shrink and redesign, GT200 can output and blend pixels at full speed, 32 pixels per clock for each.
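The ROP figures reduce to simple per-clock arithmetic. This Python sketch multiplies the number of ROP partitions by the 4 pixels per clock each can output and halves the result for the half-speed blending of G80/G92. The four-partition G92 figure comes from the correction in the comments below the article, clock speeds are ignored, and the names are illustrative.

```python
PIXELS_PER_PARTITION = 4  # max pixels output per ROP partition per clock

# card: (ROP partitions, blends at full speed?)
ROP_CONFIGS = {
    "GeForce 8800 GTX (G80)": (6, False),
    "GeForce 9800 GTX (G92)": (4, False),
    "GeForce GTX 280 (GT200)": (8, True),
}


def rop_throughput(partitions: int, full_speed_blend: bool) -> tuple[int, int]:
    """Return (pixels output per clock, pixels blended per clock)."""
    output = partitions * PIXELS_PER_PARTITION
    blend = output if full_speed_blend else output // 2
    return output, blend


for card, (partitions, full_speed) in ROP_CONFIGS.items():
    output, blend = rop_throughput(partitions, full_speed)
    print(f"{card}: {output} px/clk output, {blend} px/clk blend")

g80_blend = rop_throughput(6, False)[1]
gt200_blend = rop_throughput(8, True)[1]
print(f"Blend gain per clock over 8800 GTX: {gt200_blend / g80_blend:.2f}x")  # 2.67x
```

Adding two partitions alone is a one-third increase in output, but combined with full-speed blending the per-clock blend rate rises by a factor of about 2.67 over the 8800 GTX.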

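The end result on GT200 is a better-than-linear improvement in ROP-heavy work, everything from anti-aliasing and fire effects to shadows: ROP partitions grow by a third over the 8800 GTX, but per-clock blend throughput grows from 12 to 32 pixels. It's an evolutionary change, but that really does sum up many of the enhancements of GT200 over G80/G92.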

108 Comments


Anand Lal Shimpi - Monday, June 16, 2008

Thanks for the heads up, you're right about G92 only having 4 ROPs, I've corrected the image and references in the article. I also clarified the GeForce FX statement, it definitely fell behind for more reasons than just memory bandwidth, but the point was that NVIDIA has been trying to go down this path for a while now.

Take care,

Anand

mczak - Monday, June 16, 2008

Thanks for correcting. Still, the paragraph about the FX is a bit odd imho. Lack of bandwidth really was the least of its problems; it was a too complicated core with actually lots of texturing power, and it sacrificed raw compute power for more programmability in the compute core (which was its biggest problem).

Arbie - Monday, June 16, 2008

I appreciate the in-depth look at the architecture, but what really matters to me are graphics performance, heat, and noise. You addressed the card's idle power dissipation but only in full-system terms, which masks a lot. Will it really draw 25W in idle under WinXP?

And this highly detailed review does not even mention noise! That's very disappointing. I'm ready to buy this card, but Tom's finds their samples terribly noisy. I was hoping and expecting Anandtech to talk about this.

Arbie

Anand Lal Shimpi - Monday, June 16, 2008

I've updated the article with some thoughts on noise. It's definitely loud under load, not GeForce FX loud but the fan does move a lot of air. It's the loudest thing in my office by far once you get the GPU temps high enough.

From the updated article:

"Cooling NVIDIA's hottest card isn't easy and you can definitely hear the beast moving air. At idle, the GPU is as quiet as any other high-end NVIDIA GPU. Under load, as the GTX 280 heats up the fan spins faster and moves much more air, which quickly becomes audible. It's not GeForce FX annoying, but it's not as quiet as other high-end NVIDIA GPUs; then again, there are 1.4 billion transistors switching in there. If you have a silent PC, the GTX 280 will definitely un-silence it and put out enough heat to make the rest of your fans work harder. If you're used to a GeForce 8800 GTX, GTS or GT, the noise will bother you. The problem is that returning to idle from gaming for a couple of hours results in a fan that doesn't want to spin down as low as when you first turned your machine on.

While it's impressive that NVIDIA built this chip on a 65nm process, it desperately needs to move to 55nm."

Mr Roboto - Monday, June 16, 2008

I agree with what Darkryft said about wanting a card that absolutely without a doubt, stomps the 8800GTX. So far that hasn't happened as the GX2 and GT200 hardly do either. The only thing they proved with the G90 and G92 is that they know how to cut costs.

Well thanks for making me feel like such a smart consumer as it's going on 2 years with my 8800GTX and it still owns 90% of the games I play.

P.S. It looks like Nvidia has quietly discontinued the 8800GTX as it's no longer on major retail sites.

Rev1 - Monday, June 16, 2008

Ya the 640 8800 gts also. No Sli for me lol.

wiper - Monday, June 16, 2008

What about noise? Other reviews show mixed data. One says it's another dustblower, others say the noise level is ok.

Zak - Monday, June 16, 2008

First thing though, don't rely entirely on spell checker:)) Page 4 "Derek Gets Technical": "borrowing terminology from weaving was cleaver" I believe you meant "clever"?

As darkryft pointed out:

"In my opinion, for $650, I want to see some f-ing God-like performance."

Why would anyone pay $650 for this? Ugh? This is probably THE disappointment of the year:(((

Z.

js01 - Monday, June 16, 2008

On techpowerups review it seemed to pull much bigger numbers but they were using xp sp2.

http://www.techpowerup.com/reviews/Point_Of_View/G...

NickelPlate - Monday, June 16, 2008

Pfft, title says it all. Let's hope that driver updates widen the gap between previous high end products. Otherwise, I'll pass on this one.