NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST - Posted in GPUs

SLI Performance Throwdown: GTX 280 SLI vs. 9800 GX2 Quad SLI

We had two GeForce GTX 280s on hand and a plethora of SLI bridges, so of course we had to run them in SLI. Remember that a single GTX 280 uses more power than a GeForce 9800 GX2, so two of them are going to use a lot of power. So much power, in fact, that our OCZ EliteXStream 1000W power supply wasn't enough. While the SLI system would boot and get into Windows, we couldn't actually complete any benchmarks. All of the power supplies on the GTX 280 SLI certified list are at least 1200W units. We didn't have any on hand, so we had to rig up a second system with a separate power supply and use that second PSU to power the extra GTX 280 card. A 3-way SLI setup using GTX 280s may end up requiring a power supply that draws more power than most household circuits can provide.
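To put the household-circuit remark in perspective, here is a rough back-of-the-envelope sketch. It assumes a North American 15 A, 120 V branch circuit and an 80% efficient power supply; both figures are illustrative assumptions rather than numbers from our testing, and the function names are made up for this example.

```python
def circuit_capacity_watts(volts: float, amps: float) -> float:
    """Peak power a branch circuit can deliver: P = V * I."""
    return volts * amps


def wall_draw_watts(dc_load_watts: float, efficiency: float) -> float:
    """AC power pulled from the wall to deliver a given DC load."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return dc_load_watts / efficiency


if __name__ == "__main__":
    # Assumed values, not measurements from this review.
    capacity = circuit_capacity_watts(volts=120, amps=15)
    for dc_load in (1000, 1200, 1500):
        draw = wall_draw_watts(dc_load, efficiency=0.80)
        print(f"{dc_load} W DC load -> {draw:.0f} W at the wall "
              f"({draw / capacity:.0%} of a {capacity:.0f} W circuit)")
```

Under these assumptions, a fully loaded 1200W unit already pulls a large share of what a single circuit can supply, before counting a monitor or anything else plugged into the same circuit.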

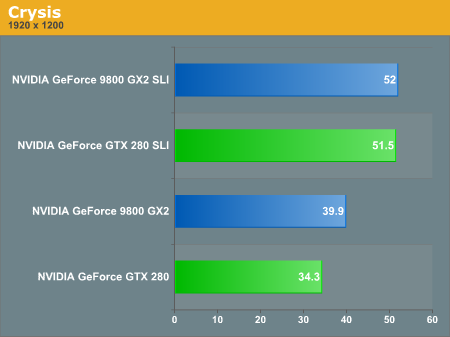

Although a single GeForce GTX 280 loses to a GeForce 9800 GX2 in most cases, scaling from two to four GPUs is never as good as scaling from one to two. That raises the question: is a pair of GTX 280s in SLI faster than a 9800 GX2 Quad SLI setup?

Let's look at the performance improvements from one to two cards across our games:

| GTX 280 SLI (Improvement from 1 to 2 cards) | 9800 GX2 SLI (Improvement from 1 to 2 cards) |

|

| Crysis | 50.1% | 30.3% |

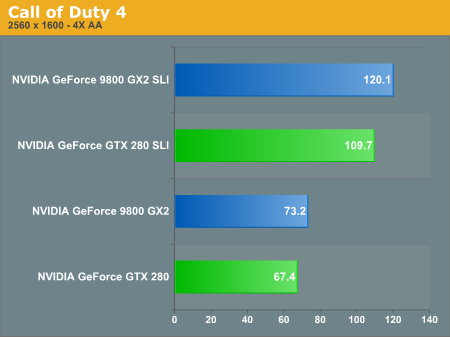

| Call of Duty 4 | 62.8% | 64.0% |

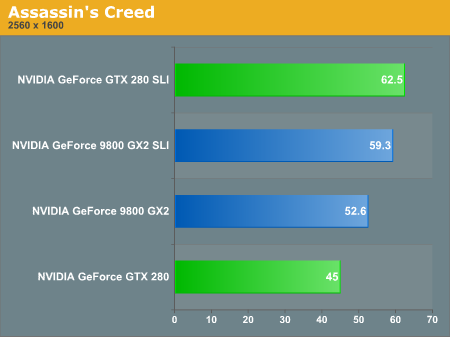

| Assassin's Creed | 38.9% | 12.7% |

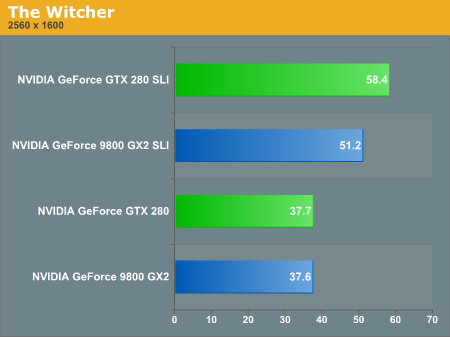

| The Witcher | 54.9% | 36.2% |

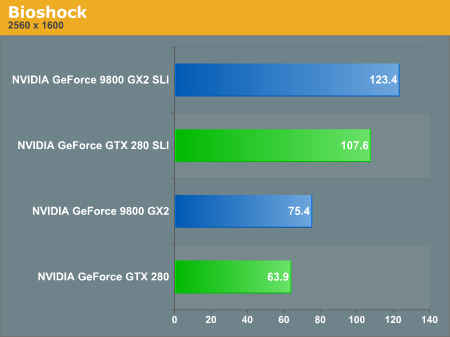

| Bioshock | 68.4% | 63.7% |

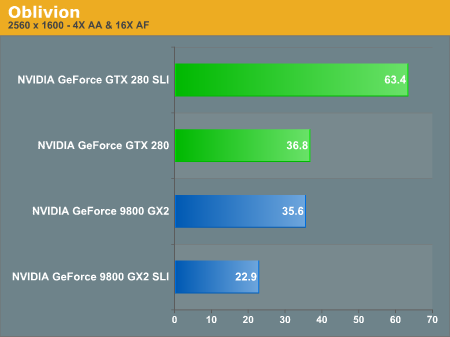

| Oblivion | 72.3% | -35.7% |

Crysis, Assassin's Creed, The Witcher and Oblivion are all cases where performance either scales less well or actually drops when going from one GX2 to two, giving NVIDIA a reason to offer two GTX 280s over a clumsy Quad SLI setup.
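The scaling figures can also be turned around to ask how far behind a single GTX 280 can be before the SLI pair stops winning. The sketch below is only an illustration: the percentages are copied from the table, while the function names and the "break-even ratio" framing are our own assumptions for this example. If one GTX 280 delivers `r` times the frame rate of one 9800 GX2, the GTX 280 pair comes out ahead whenever `r` exceeds `(1 + GX2 scaling) / (1 + GTX 280 scaling)`.

```python
# Scaling percentages from the table: (GTX 280 SLI, 9800 GX2 SLI).
SCALING = {
    "Crysis": (50.1, 30.3),
    "Call of Duty 4": (62.8, 64.0),
    "Assassin's Creed": (38.9, 12.7),
    "The Witcher": (54.9, 36.2),
    "Bioshock": (68.4, 63.7),
    "Oblivion": (72.3, -35.7),
}


def scaling_improvement(single_fps: float, dual_fps: float) -> float:
    """Percent improvement when going from one card to two."""
    return (dual_fps / single_fps - 1.0) * 100.0


def break_even_ratio(gtx280_pct: float, gx2_pct: float) -> float:
    """Single-card frame rate ratio (GTX 280 / 9800 GX2) at which
    GTX 280 SLI and 9800 GX2 Quad SLI would tie."""
    return (1.0 + gx2_pct / 100.0) / (1.0 + gtx280_pct / 100.0)


if __name__ == "__main__":
    for game, (gtx280_pct, gx2_pct) in SCALING.items():
        ratio = break_even_ratio(gtx280_pct, gx2_pct)
        print(f"{game}: GTX 280 SLI wins if a single GTX 280 reaches "
              f"at least {ratio:.2f}x the speed of a single 9800 GX2")
```

For Crysis this works out to roughly 0.87, so a single GTX 280 only needs about 87% of a single GX2's frame rate for the pair to pull ahead. In Call of Duty 4 the ratio is just above 1.0, so the GX2 pair keeps whatever single-card lead it has.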

Thanks to poor Quad SLI scaling, the GX2 SLI and the GTX 280 SLI perform the same, despite the GTX 280 being noticeably slower than the 9800 GX2 in single-card mode.

When it does scale well, however, the GX2 SLI outperforms the GTX 280 SLI setup, just as you'd expect.

Sometimes you run into serious issues with triple and quad SLI where performance is actually reduced; Oblivion at 2560 x 1600 is one of those situations, and the result is that GTX 280 SLI gives you a better overall experience.

While we'd have trouble recommending a single GTX 280 over a single 9800 GX2, a pair of GTX 280s will probably give you a more hassle-free and consistent experience than a pair of 9800 GX2s.

108 Comments


Anand Lal Shimpi - Monday, June 16, 2008 - link

Thanks for the heads up, you're right about G92 only having 4 ROPs, I've corrected the image and references in the article. I also clarified the GeForce FX statement, it definitely fell behind for more reasons than just memory bandwidth, but the point was that NVIDIA has been trying to go down this path for a while now.

Take care,

Anand

mczak - Monday, June 16, 2008 - link

Thanks for correcting. Still, the paragraph about the FX is a bit odd imho. Lack of bandwidth really was the least of its problem, it was a too complicated core with actually lots of texturing power, and sacrificed raw compute power for more programmability in the compute core (which was its biggest problem).

Arbie - Monday, June 16, 2008 - link

I appreciate the in-depth look at the architecture, but what really matters to me are graphics performance, heat, and noise. You addressed the card's idle power dissipation but only in full-system terms, which masks a lot. Will it really draw 25W in idle under WinXP?

And this highly detailed review does not even mention noise! That's very disappointing. I'm ready to buy this card, but Tom's finds their samples terribly noisy. I was hoping and expecting Anandtech to talk about this.

Arbie

Anand Lal Shimpi - Monday, June 16, 2008 - link

I've updated the article with some thoughts on noise. It's definitely loud under load, not GeForce FX loud but the fan does move a lot of air. It's the loudest thing in my office by far once you get the GPU temps high enough.

From the updated article:

"Cooling NVIDIA's hottest card isn't easy and you can definitely hear the beast moving air. At idle, the GPU is as quiet as any other high-end NVIDIA GPU. Under load, as the GTX 280 heats up the fan spins faster and moves much more air, which quickly becomes audible. It's not GeForce FX annoying, but it's not as quiet as other high-end NVIDIA GPUs; then again, there are 1.4 billion transistors switching in there. If you have a silent PC, the GTX 280 will definitely un-silence it and put out enough heat to make the rest of your fans work harder. If you're used to a GeForce 8800 GTX, GTS or GT, the noise will bother you. The problem is that returning to idle from gaming for a couple of hours results in a fan that doesn't want to spin down as low as when you first turned your machine on.

While it's impressive that NVIDIA built this chip on a 65nm process, it desperately needs to move to 55nm."

Mr Roboto - Monday, June 16, 2008 - link

I agree with what Darkryft said about wanting a card that absolutely without a doubt, stomps the 8800GTX. So far that hasn't happened as the GX2 and GT200 hardly do either. The only thing they proved with the G90 and G92 is that they know how to cut costs.

Well thanks for making me feel like such a smart consumer as it's going on 2 years with my 8800GTX and it still owns 90% of the games I play.

P.S. It looks like Nvidia has quietly discontinued the 8800GTX as it's no longer on major retail sites.

Rev1 - Monday, June 16, 2008 - link

Ya the 640 8800 gts also. No Sli for me lol.

wiper - Monday, June 16, 2008 - link

What about noise? Other reviews show mixed data. One says it's another dustblower, others says the noise level is ok.

Zak - Monday, June 16, 2008 - link

First thing though, don't rely entirely on spell checker:)) Page 4 "Derek Gets Technical": "borrowing terminology from weaving was cleaver" I believe you meant "clever"?

As darkryft pointed out:

"In my opinion, for $650, I want to see some f-ing God-like performance."

Why would anyone pay $650 for this? Ugh? This is probably THE disappointment of the year:(((

Z.

js01 - Monday, June 16, 2008 - link

On techpowerups review it seemed to pull much bigger numbers but they were using xp sp2.

http://www.techpowerup.com/reviews/Point_Of_View/G...

NickelPlate - Monday, June 16, 2008 - link

Pfft, title says it all. Let's hope that driver updates widen the gap between previous high end products. Otherwise, I'll pass on this one.