NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST

Derek Gets Technical: 15th Century Loom Technology Makes a Comeback

Because it's multithreaded...

Yes, I know it's horrible, but NVIDIA has gone a bit deeper in explaining their architecture to us, and they thought borrowing terminology from weaving was clever. But as much as that might make you want to roll your eyes, the explanation of how things work that it enables is worth it.

In cloth weaving, the warp is the vertical group of parallel threads held taut while the weft is the thread passed through them. I suppose it makes sense, then, that NVIDIA decided to call their grouping of parallel threads to be executed on an SM a warp.

See the group of threads that hang from the top of this loom? That's called a warp.

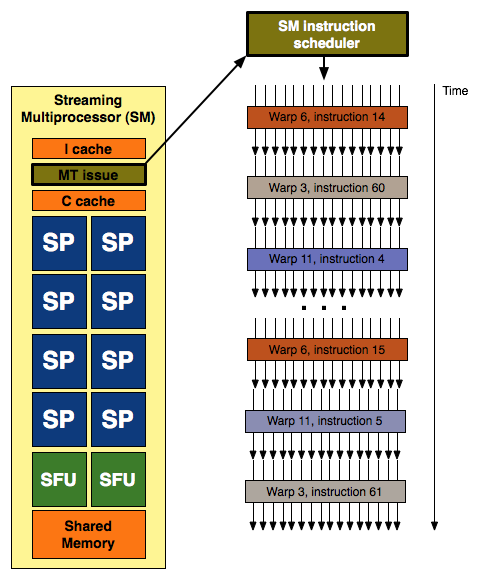

With each SM having 8 SPs and G80 having two SMs per TPC (for a total of 16 SPs per TPC), it looked like a natural fit to execute quads across these 16 scalar units. We learned that this was not the case, but the grouping of three SMs per TPC in GT200 still looked a little odd until we learned more about how things are scheduled on NVIDIA's unified architecture. Each SM's scheduler picks a new warp to work on every one of its clock cycles, and since the scheduler runs at half speed, this ends up being every other clock cycle from the perspective of the rest of the SM. Each warp is made up of 32 threads (in pixel shaders, a group of 8 quads) that share an instruction stream (a shader program, or a kernel if you're talking about GPU computing).
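To make the grouping concrete, here is a minimal Python sketch that packs thread indices into 32-wide warps and counts the 2x2 pixel quads in each. Packing consecutive thread indices into the same warp is an assumption made for clarity; the article does not say how NVIDIA actually maps threads or pixels to warps, and the function names are hypothetical.

```python
WARP_SIZE = 32  # threads per warp, per the article
QUAD_SIZE = 4   # a 2x2 pixel quad

def warp_of(thread_id: int) -> int:
    """Warp index for a thread, assuming consecutive threads share a warp."""
    return thread_id // WARP_SIZE

def quads_per_warp() -> int:
    """How many pixel quads fill one warp."""
    return WARP_SIZE // QUAD_SIZE

def warps_needed(n_threads: int) -> int:
    """Warps required to cover n_threads; the last warp may be partly empty."""
    return (n_threads + WARP_SIZE - 1) // WARP_SIZE
```

For example, 100 threads need 4 warps, and the last warp carries only 4 live threads, which is one reason work is best issued in multiples of 32.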

32 threads in a warp, issued in two groups of 16 threads - one such group is depicted above

Different warps built from threads executing the same shader program can follow completely independent paths through the code, but every thread within a warp must be executing the same instruction. This means that our branch granularity is 32 threads: every block of 32 threads (every warp) can branch independently of all others, but if one or more threads within a warp branch in a different direction than the rest, then every single thread in that warp must execute both code paths. Each thread retains only the result from the path it was supposed to follow, probably by using the branch result to dynamically predicate the appropriate path per thread. Each SM can have 32 warps in flight at the same time (up from 24 in G80), for a total of 1024 threads in flight per SM. With 10 TPCs each containing 3 SMs, that's 30 SMs and up to 30,720 threads in flight on a GTX 280.
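The branching behavior described above can be modeled in a few lines. This is a toy sketch, not NVIDIA's mechanism: it assumes, as the article speculates, that the branch result acts as a per-thread predicate, so a divergent warp walks both paths while a uniform warp skips the path nobody takes. The function names are hypothetical; the threads-in-flight helper just reproduces the GTX 280 arithmetic from the text.

```python
from typing import Callable

WARP_SIZE = 32  # threads per warp on G80/GT200, per the article

def run_warp_branch(values: list[float],
                    cond: Callable[[float], bool],
                    then_fn: Callable[[float], float],
                    else_fn: Callable[[float], float]) -> tuple[list[float], int]:
    """Toy model of one if/else executed by a single warp.

    Every thread walks each path that at least one thread needs; the
    per-thread predicate (the branch result) decides whose result is kept.
    Returns the per-thread results and how many paths the warp executed.
    """
    if len(values) != WARP_SIZE:
        raise ValueError("a warp holds exactly 32 threads")
    mask = [cond(v) for v in values]   # branch outcome for each thread
    results = list(values)
    paths = 0
    if any(mask):                      # at least one thread goes 'then'
        paths += 1
        for i, v in enumerate(values):
            r = then_fn(v)             # all 32 threads do the work...
            if mask[i]:
                results[i] = r         # ...only predicated-on threads keep it
    if not all(mask):                  # at least one thread goes 'else'
        paths += 1
        for i, v in enumerate(values):
            r = else_fn(v)
            if not mask[i]:
                results[i] = r
    return results, paths

def threads_in_flight(tpcs: int = 10, sms_per_tpc: int = 3,
                      warps_per_sm: int = 32) -> int:
    """Maximum resident threads; the GTX 280 defaults give 30 SMs x 1024 threads."""
    return tpcs * sms_per_tpc * warps_per_sm * WARP_SIZE
```

The per-thread results come out the same whether or not the warp diverges; what divergence costs is time, since a split warp reports two paths executed instead of one.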

Each SM, with its 8 SPs and 2 SFUs, can process up to 8 ops per clock in the SPs (a MAD, a floating point multiply-add, being the most complex) and 8 ops per clock in the SFUs (each of which is made up of 4 floating point MUL units and other logic). Warps are executed on SMs in 2 groups of 16 threads (probably four quads for pixels) over four clock cycles.
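The unit counts above fit together with simple arithmetic: 32 threads spread over 8 lanes takes 4 clocks, and that holds for both the 8 SPs and the 8 MUL units across the two SFUs. A quick check in Python (the constant and function names are illustrative only):

```python
WARP_SIZE = 32
SPS_PER_SM = 8
SFUS_PER_SM = 2
MULS_PER_SFU = 4  # floating point MUL units inside each SFU, per the article

SP_LANES = SPS_PER_SM                    # 8 MADs per clock
SFU_LANES = SFUS_PER_SM * MULS_PER_SFU   # 8 MULs per clock

def clocks_per_warp(lanes: int, threads: int = WARP_SIZE) -> int:
    """Clocks needed to push `threads` through `lanes` units (ceiling division)."""
    return -(-threads // lanes)
```

Each half-warp of 16 threads therefore occupies the SPs for 2 clocks, and the full warp for 4.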

This is where it gets interesting.

The SPs and SFUs are scheduled on alternating clock cycles from the perspective of the SM scheduler, and they execute independent warps: SPs are scheduled on one clock cycle and SFUs on the next. Each is able to complete the processing of an entire warp before the next time it is scheduled, which happens to be four clocks (relative to the SPs and SFUs) after it was scheduled with the last warp.
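The timing works out neatly if you lay it on a timeline. This sketch assumes exactly what the text describes, a scheduler ticking at half the SP/SFU clock and alternating its target, and shows that each unit receives a new warp every 4 of its own clocks, which is just long enough to finish the previous warp. The function names are hypothetical.

```python
def issue_timeline(n_unit_clocks: int) -> list[tuple[int, str]]:
    """(clock, unit) pairs for each warp issue, measured in SP/SFU clocks.

    Assumes a half-speed scheduler that alternates between SPs and SFUs.
    """
    events = []
    target = "SP"
    for clock in range(0, n_unit_clocks, 2):  # scheduler ticks every other unit clock
        events.append((clock, target))
        target = "SFU" if target == "SP" else "SP"
    return events

def issue_gaps(events: list[tuple[int, str]], unit: str) -> set[int]:
    """Distinct spacings, in unit clocks, between successive issues to `unit`."""
    times = [t for t, u in events if u == unit]
    return {b - a for a, b in zip(times, times[1:])}
```

Over 12 unit clocks the SPs are issued at clocks 0, 4 and 8 and the SFUs at 2, 6 and 10, so both units see a constant 4-clock gap.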

But wait... aren't SMs supposed to be able to support dual-issue MAD+MUL? Well, back when G80 launched, Beyond3D did an excellent job of exploring the reality of the situation. Because the SFU handles the "dual-issued" MUL and shares its time with other responsibilities like transcendental calculation and attribute interpolation, the hardware's ability to actually accelerate cases that could take advantage of dual-issue was significantly reduced. But this scheduling revelation makes it clear that the problem is much more complex.

In order for code that could benefit from dual-issue hardware to take advantage of G80 or GT200, a warp must be scheduled on an SP for the MAD and then it must be re-scheduled on the SFU before it hits the SPs again. If the SFU is too busy handling attribute interpolation or transcendental math, then our warp will be rescheduled on the SPs to calculate the MUL and we will have lost our potential dual-issue speed up.
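A deliberately simplified model shows what is lost when the SFU is busy. Assume (hypothetically) a stream of warps that each need one MAD and one independent MUL, and that each MUL gets exactly one chance at the SFU slot following its MAD; if that slot is taken by interpolation or transcendental work, the MUL falls back to a second SP issue slot.

```python
def run_mad_mul_pairs(sfu_busy: list[bool]) -> tuple[int, int]:
    """Toy model: one MAD+MUL pair per warp.

    sfu_busy[i] says whether the SFU slot after warp i's MAD is already
    taken by other work. Returns (muls_run_on_sfu, sp_issue_slots_used).
    """
    muls_on_sfu = 0
    sp_slots = 0
    for busy in sfu_busy:
        sp_slots += 1          # the MAD always occupies an SP issue slot
        if busy:
            sp_slots += 1      # SFU tied up: the MUL waits for another SP slot
        else:
            muls_on_sfu += 1   # SFU free: the MUL effectively "dual-issues"
    return muls_on_sfu, sp_slots
```

With four warps and one busy SFU slot, three MULs ride along on the SFU and the SPs spend 5 issue slots instead of the ideal 4; with every slot busy, the SPs do all 8.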

NVIDIA tells us that they "had some scheduling/issue problems with getting the MUL in the SFU to work consistently per clock. We fixed that in GT200." This makes perfect sense in light of what we now know about scheduling on G80/GT200. What they needed to do to improve the ability of their hardware to speed up cases where a MAD+MUL dual issue would help was to make sure that these cases were properly prioritized to execute on the SFU. We don't see MAD+MUL in every line of code, so giving these cases higher priority on the SFU than its other duties should help to optimize the utilization of the hardware. They do have to be careful not to start just running random MULs on the SFU because those special functions do still need to get done, but pushing a select subset of MULs onto the SFU (where they directly follow MADs that have just been executed on SPs) should definitely help in certain cases.

Really, the main focus for NVIDIA is proper utilization and prioritization. Everything for any given frame needs to get done at some point or other, so simulating "dual-issue" shouldn't take priority over other work that might be more important to completing the next frame. But organizing how to handle it is a big deal. Real-time compilers are a big part of that, but internal thread management and scheduling in an architecture this wide also cannot be ignored. NVIDIA says they can now get 93-94% efficiency from their "dual-issue" implementation in directed tests and that this is significantly higher than on G80. Real world results will be lower, but the thing to remember is that the goal is simply to make maximally efficient use of the available hardware. Just because the SFU isn't assisting in a MAD+MUL every clock doesn't mean it isn't doing something important.
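The distinction in that last point can be written down as two different ratios. NVIDIA did not say exactly how its 93-94% figure is measured; the first function is one plausible definition, assumed here, and the second captures the broader point that an SFU busy with interpolation is still doing useful work. Both names are hypothetical.

```python
def dual_issue_efficiency(mul_on_sfu: int, mul_opportunities: int) -> float:
    """Share of MAD+MUL opportunities in which the MUL actually ran on the SFU."""
    if mul_opportunities <= 0:
        raise ValueError("need at least one opportunity")
    return mul_on_sfu / mul_opportunities

def sfu_utilization(mul_slots: int, other_slots: int, total_slots: int) -> float:
    """Share of SFU issue slots doing any useful work (MULs or other duties)."""
    if total_slots <= 0:
        raise ValueError("need at least one slot")
    if mul_slots + other_slots > total_slots:
        raise ValueError("more busy slots than available slots")
    return (mul_slots + other_slots) / total_slots
```

With made-up counts, an SFU that ran only 30 MULs in 100 slots but spent 60 more on interpolation and transcendentals scores poorly on the first metric yet is 90% utilized by the second.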

This whole situation leaves us with mixed feelings. The hardware itself is not capable of "dual-issue" as it is understood in an architectural sense. The obfuscation of graphics hardware technology in a competitive industry has been the norm for the past decade, and we can accept this. We would prefer to know what the hardware is actually doing, but we are more than happy to accept something described as a hardware feature when the hardware merely simulates the effect of a specific architectural design. But when the hardware doesn't perform nearly the way it would if the feature had actually been implemented in hardware, we just can't help but be a little disappointed.

And we are conflicted about this because NVIDIA's design is actually very elegant. Attribute interpolation will always need to be done, and having hardware set aside for complex math is also very useful. But rather than building a dedicated fixed function interpolator or doing Taylor expansion of complex math on the SPs, NVIDIA built hardware that could serve both purposes and that had time left over to help offload some well placed MULs within the instruction stream of running programs.

If NVIDIA had been as open about their architecture as Intel is about their CPU designs, we could not have helped but be impressed by this. The "missing MUL" wouldn't have been seen as a problem with NVIDIA's dual-issue "hardware"; we would have been praising NVIDIA's ability to schedule and multitask the different units within their SMs in order to improve utilization.
