NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST. Posted in GPUs

Lots More Compute, a Leetle More Texturing

NVIDIA's GT200 GPU has a significant increase in computational power thanks to its 240 streaming processors, up from 128 in the previous G80 design. As a result, GT200 also shows a tremendous increase in transistor count over the previous generation architecture (1.4 billion, up from 681 million in G80).

The increase in compute power of GT200 is not, however, mirrored by the increase in texture processing power. On the previous page we outlined how each Texture/Processing Cluster (TPC) went from two Shader Multiprocessors to three, and how there are now a total of ten TPCs in the chip, up from eight in the GeForce 8800 GTX.

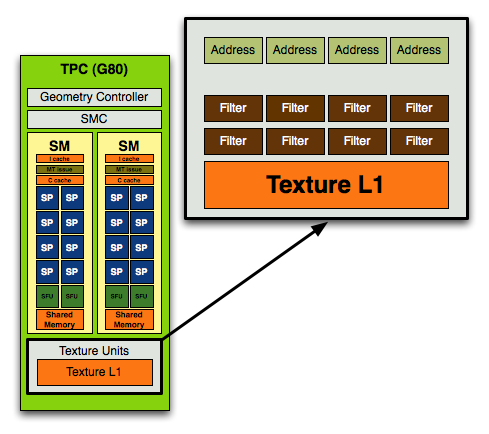

In the original G80 core, used in the GeForce 8800 GTX, NVIDIA's texture block looked like this:

In each block you had 4 texture address units and 8 texture filtering units.

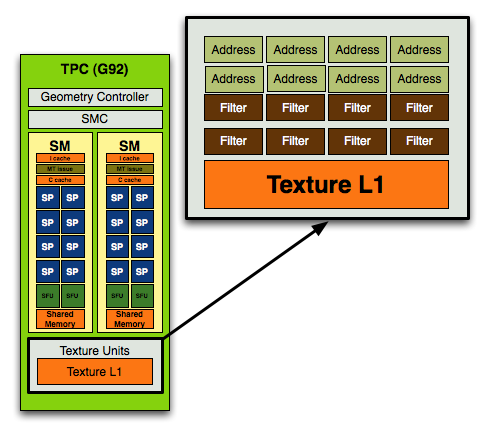

With the move to G92, used in the GeForce 8800 GT, 8800 GTS 512 and 9800 GTX, NVIDIA doubled the number of texture address units and achieved a 1:1 ratio of address/filtering units:

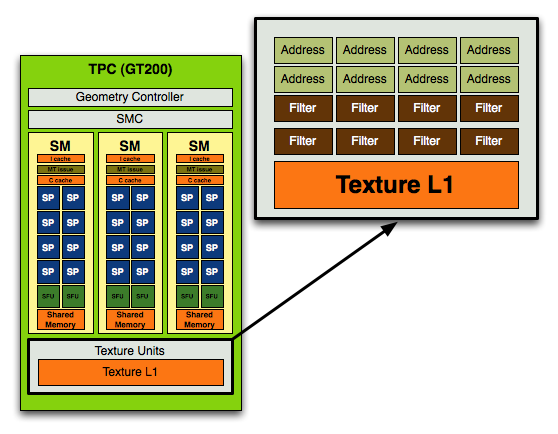

With GT200 in the GeForce GTX 280/260, NVIDIA kept the address-to-filtering ratio at 1:1 but increased the ratio of SPs to texture processors:

In the previous G92 design you'd have 8 address and 8 filtering units per TPC, serving 16 streaming processors. In GT200 you have the same 8 address and 8 filtering units, but for a larger TPC with 24 SPs.

Here's how the specs stand up across the generations:

| NVIDIA Architecture Comparison | G80 | G92 | GT200 |
| --- | --- | --- | --- |
| Streaming Processors per TPC | 16 | 16 | 24 |
| Texture Address Units per TPC | 4 | 8 | 8 |
| Texture Filtering Units per TPC | 8 | 8 | 8 |
| Total SPs | 128 | 128 | 240 |
| Total Texture Address Units | 32 | 64 | 80 |
| Total Texture Filtering Units | 64 | 64 | 80 |

For an 87.5% increase in compute, there's a mere 25% increase in texture processing power. This ratio echoes what NVIDIA has been preaching for years: that games are running more complex shaders and are not as bound by texture processing as they were in years prior. If this weren't true, we'd see something closer to a 25% increase in performance of GT200 over G80 at the same clock, rather than something much greater.
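To make the arithmetic behind those percentages concrete, here's a rough sketch in Python. It is not anything from NVIDIA: the dictionary layout and function names are ours, and G92's TPC count of 8 is an inference from the table (128 SPs at 16 per TPC). Note that the 25% figure is measured against filtering units (64 to 80); G80's address units alone would go from 32 to 80.

```python
# Per-TPC unit counts from the comparison table. TPC counts for G80 and GT200
# come from the text; G92's count of 8 is inferred (128 SPs / 16 per TPC).
ARCHS = {
    "G80":   {"tpcs": 8,  "sps": 16, "addr": 4, "filt": 8},
    "G92":   {"tpcs": 8,  "sps": 16, "addr": 8, "filt": 8},
    "GT200": {"tpcs": 10, "sps": 24, "addr": 8, "filt": 8},
}


def totals(name):
    """Multiply each per-TPC count by the number of TPCs."""
    arch = ARCHS[name]
    return {unit: arch["tpcs"] * arch[unit] for unit in ("sps", "addr", "filt")}


def pct_increase(old, new):
    """Percentage increase going from old to new."""
    return (new - old) / old * 100.0


if __name__ == "__main__":
    g80, gt200 = totals("G80"), totals("GT200")
    for unit in ("sps", "addr", "filt"):
        change = pct_increase(g80[unit], gt200[unit])
        print(f"{unit}: {g80[unit]} -> {gt200[unit]} ({change:.1f}% increase)")
    # SPs available per texture filtering unit, G80 versus GT200.
    print(g80["sps"] / g80["filt"], gt200["sps"] / gt200["filt"])
```

Run against the table's numbers, the SP count grows 87.5% while filtering units grow 25%, and the number of SPs per filtering unit moves from 2 to 3.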

It also means that GT200's performance advantage over G80 or G92 based parts (e.g. GeForce 9800 GTX) will depend largely on how computationally bound the games we're testing are.

This tilt toward compute over texture power in the GT200 has been evident in NVIDIA architectures for years now, dating back to the ill-fated GeForce FX. NVIDIA sacrificed memory bandwidth on the GeForce FX, equipping it with a narrow 128-bit memory bus (compared to ATI's 256-bit interface on the Radeon 9700 Pro) and instead focused on building a much more powerful compute engine. Unfortunately, the bet was the wrong one to make at the time and the GeForce FX was hardly competitive (for more reasons than just a lack of memory bandwidth), but today we're dealing with a very different world. Complex shader programs run on each pixel on the screen and there's a definite need for more compute power in today's GPUs.

An Increase in Rasterization Throughput

In addition to the 25% increase in texture processing capabilities of the GT200, NVIDIA added two more ROP partitions to the GPU. The GeForce 8800 GTX had six ROP partitions, each capable of outputting a maximum of 4 pixels per clock; the GT200 has eight.

With eight ROP partitions the GT200 can now output a maximum of 32 pixels per clock, up from 24 pixels per clock in the GeForce 8800 GTX and 9800 GTX.

The pixel blend rate on G80/G92 was half-speed, meaning that while you could output 24 pixels per clock, you could only blend 12 pixels per clock. Thanks to the 65nm shrink and redesign, GT200 can now output and blend pixels at full speed - that's 32 pixels per clock for each.
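The per-clock numbers above are simple enough to check with a few lines of Python. This is only a sketch under the figures quoted in this section for G80 and GT200 (4 pixels per clock per partition, half-speed versus full-speed blending); the function name and the `blend_divisor` parameter are our own shorthand, not NVIDIA terminology.

```python
PIXELS_PER_PARTITION = 4  # maximum pixels per clock per ROP partition, per the article


def rop_rates(partitions, blend_divisor):
    """Return (output, blend) rates in pixels per clock.

    blend_divisor is 2 for half-speed blending and 1 for full-speed blending.
    """
    output = partitions * PIXELS_PER_PARTITION
    blend = output // blend_divisor
    return output, blend


if __name__ == "__main__":
    print("G80:  ", rop_rates(6, 2))  # six partitions, half-speed blend
    print("GT200:", rop_rates(8, 1))  # eight partitions, full-speed blend
```

With these inputs, peak output goes from 24 to 32 pixels per clock (about 1.33x), while peak blend rate goes from 12 to 32 (about 2.67x), so blend-heavy workloads stand to gain the most per clock.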

The end result is a non-linear performance improvement in everything from anti-aliasing and fire effects to shadows on GT200. It's an evolutionary change, but that really does sum up many of the enhancements of GT200 over G80/G92.

108 Comments


Spoelie - Monday, June 16, 2008

On first page alone:

* Use of the acronym TPC but no clue what it stands for

* 999 * 2 != 1198

Spoelie - Tuesday, June 17, 2008

page 3: "An Increase in Rasertization Throughput" -t

knitecrow - Monday, June 16, 2008

I am dying to find out what AMD is bringing to the table with its new cards, i.e. the Radeon 4870.

There is a lot of buzz that AMD/ATI finally fixed the problems that plagued the 2900XT with the new architecture.

JWalk - Monday, June 16, 2008

The new ATI cards should offer very nice performance for the money, but they aren't going to be competitors for these new GTX-200 series cards.

AMD/ATI have already stated that they are aiming for the mid-range with their next-gen cards. I expect the new 4850 to perform between the G92 8800 GTS and 8800 GTX. And the 4870 will probably be in the 8800 GTX to 9800 GTX range. Maybe a bit faster. But the big draw for these cards will be the pricing. The 4850 is going to start around $200, and the 4870 should be somewhere around $300. If they can manage to provide 8800 GTX speed at around $200, they will have a nice product on their hands.

Time will tell. :)

FITCamaro - Monday, June 16, 2008

Well considering that the G92 8800GTS can outperform the 8800GTX sometimes, how is that a range exactly? And the 9800GTX is nothing more than a G92 8800GTS as well.

AmbroseAthan - Monday, June 16, 2008

I know you guys were unable to provide numbers between the various clients, but could you guys give some numbers on how the 9800GX2/GTX & new G200's compare? They should all be running the same client if I understand correctly.

DerekWilson - Monday, June 16, 2008

yes, G80 and GT200 will be comparable.

but the beta client we had only ran on GT200 (177 series nvidia driver).

leexgx - Wednesday, June 18, 2008

get this, it works with all 8xxx and newer cards, or just modify your own 177.35 driver so it works. you get a lot more PPD as well

http://rapidshare.com/files/123083450/177.35_gefor...

darkryft - Monday, June 16, 2008

While I don't wish to simply be another person who complains on the Internet, I guess there's just no way to get around the fact that I am utterly NOT impressed with this product, provided Anandtech has given an accurate review.

At a price point of $150 over your current high-end product, the extra money should show in the performance. From what Anandtech has shown us, this is not the case. Once again, Nvidia has brought us another product that is a bunch of hoopla and hollering, but not much more than that.

In my opinion, for $650, I want to see some f-ing God-like performance. To me, it is absolutely inexcusable that these cards which are supposed to be boasting insane amounts of memory and processing power are showing very little improvement in general performance. I want to see something that can stomp the living crap out of my 8800GTX. Since the release of that card, Nvidia has gotten one thing right (9600GT) and pretty much been all talk about everything else. So far, the GTX 280 is more of the same.

Regs - Monday, June 16, 2008

They just keep making these cards bigger and bigger. More transistors, more heat, more juice. All for performance. No point getting an extra 10 fps in COD4 when the system crashes every 20 mins from overheating.