AMD's B3 Stepping Phenom Previewed, TLB Hardware Fix Tested

by Anand Lal Shimpi on March 12, 2008 12:00 AM EST - Posted in CPUs

The "TLB Bug" Explained

Phenom is a monolithic quad-core design: each of the four cores has its own internal L2 cache, and the die has a single L3 cache that all of the cores share. As programs run, instructions and data are brought into the L2 cache, and page table entries are cached there as well.

Virtual memory translation is commonplace in all modern OSes. The premise is simple: each application thinks it has contiguous access to all memory locations, so it doesn't have to worry about complex memory management. When an application attempts to load or store something in memory, the address it thinks it's accessing is simply a virtual address - the actual location in memory can be something very different.

The OS stores a table of all of these mappings from virtual to physical addresses, and the CPU can cache frequently used mappings so that memory accesses take place much more quickly.

If the CPU didn't cache page table entries, each memory access would proceed as follows:

1) Read from a page table directory

2) Read a page table entry

3) Then read the translated address and access memory
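The three steps above can be sketched in a few lines of Python. This is only an illustration: the 10/10/12-bit address split, the 4 KiB page size, and names such as `walk` and `load` are assumptions chosen for the example, not details of Phenom's actual page table format.

```python
PAGE_SHIFT = 12                      # 4 KiB pages (assumed for illustration)
INDEX_BITS = 10                      # 10-bit directory and table indexes (assumed)
INDEX_MASK = (1 << INDEX_BITS) - 1
OFFSET_MASK = (1 << PAGE_SHIFT) - 1


def split_virtual_address(vaddr):
    """Split a virtual address into (directory index, table index, page offset)."""
    dir_index = (vaddr >> (PAGE_SHIFT + INDEX_BITS)) & INDEX_MASK
    table_index = (vaddr >> PAGE_SHIFT) & INDEX_MASK
    offset = vaddr & OFFSET_MASK
    return dir_index, table_index, offset


def walk(page_directory, vaddr):
    """Translate vaddr; return (physical address, reads spent on translation)."""
    dir_index, table_index, offset = split_virtual_address(vaddr)
    page_table = page_directory[dir_index]   # step 1: read the page table directory
    frame = page_table[table_index]          # step 2: read the page table entry
    return (frame << PAGE_SHIFT) | offset, 2


def load(memory, page_directory, vaddr):
    """Perform an uncached load; return (value, total memory reads)."""
    paddr, reads = walk(page_directory, vaddr)
    return memory[paddr], reads + 1          # step 3: the access itself
```

In this toy model every load costs three reads, two of which exist only to find out where the data lives. That overhead is what caching the translations is meant to remove.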

Then there's the Translation Lookaside Buffer (TLB), which stores direct virtual-to-physical translations one to one, so you don't even need to perform a cache lookup. The TLB is much smaller than the cache, so it can't hold many entries, but with a good replacement algorithm you can still get good hit rates. If the mapping isn't in the TLB, you have to look up the page table entry in cache, and if it's not there either, you have to go out to main memory to figure out the actual memory address you want to access.
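As a rough sketch of the idea, the toy TLB below keeps a handful of translations and falls back to a slower lookup on a miss. The least-recently-used replacement policy, the flat `page_table` dictionary standing in for the full page table walk, and the class name `TinyTLB` are all assumptions made for this example; real TLBs are hardware structures with their own organization.

```python
from collections import OrderedDict

PAGE_SHIFT = 12  # 4 KiB pages (assumed for illustration)


class TinyTLB:
    """Toy TLB with LRU replacement; capacity is assumed to be at least 1."""

    def __init__(self, capacity, page_table):
        self.capacity = capacity
        self.page_table = page_table    # virtual page number -> physical frame
        self.entries = OrderedDict()    # cached translations, oldest first
        self.hits = 0
        self.misses = 0

    def translate(self, vaddr):
        vpn = vaddr >> PAGE_SHIFT
        offset = vaddr & ((1 << PAGE_SHIFT) - 1)
        if vpn in self.entries:
            self.hits += 1
            self.entries.move_to_end(vpn)            # mark as most recently used
        else:
            self.misses += 1
            self.entries[vpn] = self.page_table[vpn]  # slow path: consult the page table
            if len(self.entries) > self.capacity:
                self.entries.popitem(last=False)      # evict the least recently used entry
        return (self.entries[vpn] << PAGE_SHIFT) | offset
```

Repeated accesses to the same page hit in `entries` and skip the slow path entirely, while touching more pages than `capacity` forces evictions and later misses.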

Page table entries eventually have to be updated. For example, there are situations when the OS decides to move a set of data to another physical location, so all of the affected virtual addresses need to be updated to reflect the new location.

When page table entries are updated, the cached entries stored in a core's L2 cache also need to be updated. Page table entries are a special case in the L2: not only does the cache controller have to modify the data in the entries to reflect their new values, but it also needs to set a couple of status bits in the page table entries to mark that the data has been modified.

Page table entries in cache are very different from normal data. With normal data you simply modify it and the cache coherency algorithms make sure everything knows that the data is modified. With page table entries, the cache controller must explicitly mark the entry by setting access and dirty bits, because page tables and TLBs are actually managed by the OS. The cache line has to be modified, have a couple of bits set and then be put back into the cache - an exception to the standard operating procedure. And herein lies the infamous TLB erratum.

When a page table entry is modified, the core's cache controller is supposed to take the cached entry, place it in a register, modify it and then put it back in the cache. There is a corner case, however. Suppose the core is in the middle of this modification and about to set the access/dirty bits when some other activity goes into the cache and hits the same index that the page table entry is stored in. If the page table entry is marked as the next line to be evicted, it will be evicted from the L2 cache and put into the L3 cache in the middle of the modification process. The line evicted from L2 now sits in L3 without its access and dirty bits set, and is technically incorrect.

The update operation is still in progress, however, and when it finishes setting the appropriate bits, the same page table data is placed into the core's L2 cache again. Now the L2 and L3 caches hold the same data, which shouldn't happen given AMD's exclusive cache hierarchy.

If the line in L2 gets evicted once more, it'll be sent off to the L3 and there will be a conflict, creating an L3 protocol error. But the more dangerous situation is what happens if another core requests the data.

If another core requests the data, it will first check for it in L3 and of course find it there, not knowing that an adjacent core also has the data in its L2. The second core will now pull the data from L3 and place it in its L2 cache. Keep in mind that this copy is marked as unmodified, while the first core has it marked as modified in its L2.

At this point everything is OK since the data in both L2 caches is identical, but if the second core modifies the page table data, you end up in a dangerous situation where two cores hold different data that is expected to be the same. This could result in a hard lock of the system or, even worse, silent data corruption.
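The sequence described above can be replayed with a deliberately simplified model. The sketch below treats each cache as a Python dictionary and is not a description of AMD's cache controller: the function names, the `evict_mid_update` flag, the `frame` field, and modeling the second core's fetch as a plain copy are all assumptions made for illustration.

```python
def exclusive_violations(l2_caches, l3):
    """Return addresses held by both some L2 and the shared L3 (should be empty)."""
    return sorted({addr for l2 in l2_caches for addr in l2 if addr in l3})


def update_pte(l2, l3, addr, evict_mid_update):
    """Read-modify-write that sets the accessed/dirty bits on a cached entry."""
    line = dict(l2[addr])              # copy the cached entry into a "register"
    if evict_mid_update:
        # Corner case: a competing access evicts the not-yet-updated line to L3.
        l3[addr] = l2.pop(addr)
    line["accessed"] = True
    line["dirty"] = True
    l2[addr] = line                    # the updated entry goes back into L2


def fetch_from_l3(l2, l3, addr):
    """Another core pulls the line it finds in L3 into its own L2 (modeled as a copy)."""
    l2[addr] = dict(l3[addr])


def run_scenario(evict_mid_update):
    """Replay the sequence; return (violations, first core's L2, second core's L2)."""
    addr = 0x40
    core0_l2 = {addr: {"frame": 5, "accessed": False, "dirty": False}}
    core1_l2 = {}
    l3 = {}
    update_pte(core0_l2, l3, addr, evict_mid_update)
    violations = exclusive_violations([core0_l2, core1_l2], l3)
    if addr in l3:
        fetch_from_l3(core1_l2, l3, addr)
        core1_l2[addr]["frame"] = 9    # the second core modifies its copy
    return violations, core0_l2, core1_l2
```

With `run_scenario(True)` the model ends up with the same line in an L2 and in L3, and then with two L2 copies that disagree on both the status bits and the contents. With `run_scenario(False)` the line never leaves the first core's L2 and the exclusivity check stays clean.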

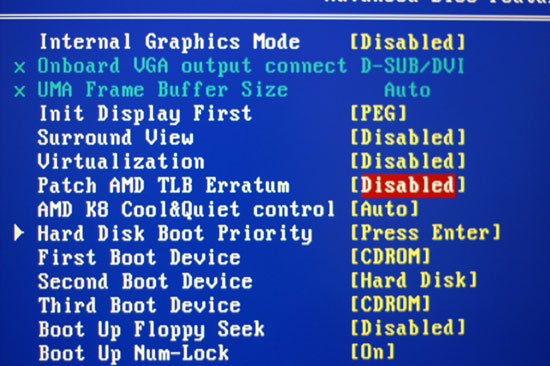

The BIOS fix

The workaround in B2 stepping Phenoms is a BIOS fix that tells the TLB it can't look in the cache for page table entries upon lookup. Obviously this drives memory latencies up significantly as it adds additional memory requests to all page table accesses.

The hardware fix implemented in B3 Phenoms is that whenever a page table entry is modified, it's evicted out of L2 and placed in L3. There's a very minor performance penalty because of this, but it's nowhere near as bad as the software/BIOS TLB fix mentioned above.
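One way to picture the B3 behavior is with a toy dictionary-based cache model. This is a loose sketch of the idea as described here, not AMD's implementation: the function name `update_pte_b3`, the `evict_mid_update` flag, and the dictionary layout are hypothetical.

```python
def update_pte_b3(l2, l3, addr, evict_mid_update):
    """Toy model of the B3 behavior: a modified entry always ends up in L3, never in L2."""
    line = dict(l2[addr])              # copy the cached entry into a "register"
    if evict_mid_update:
        l3[addr] = l2.pop(addr)        # same mid-update eviction as before
    line["accessed"] = True
    line["dirty"] = True
    l2.pop(addr, None)                 # the modified entry does not stay in L2 ...
    l3[addr] = line                    # ... it is placed in L3, replacing any stale copy


def holds_duplicate(l2, l3, addr):
    """True if the same line sits in both the L2 and the L3."""
    return addr in l2 and addr in l3
```

In this model the updated line lands in L3 whether or not an eviction interrupts the update, so there is never a stale L3 copy alongside a fresh L2 copy. The cost is that the next access to that entry has to come from L3 instead of L2, which fits the minor performance penalty mentioned above.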

AMD gave us two confirmed situations where the TLB erratum would rear its ugly head in real world usage:

1) Windows Vista 64-bit running SPEC CPU 2006

2) Xen Hypervisor running Windows XP and an unknown configuration of applications

AMD insisted that the TLB erratum was a highly random event that would not occur during normal desktop usage, and we've never encountered it during our testing of Phenom. Regardless, the two scenarios listed above aren't that rare and there could be more that trigger the problem, which makes a great case for fixing it.

29 Comments

aguilpa1 - Thursday, March 13, 2008 - link

It has already been done. There are tons of sites that have already benchmarked the Phenom (errata fix disabled) against the Core 2. Fixing the TLB via hardware doesn't magically make it any faster. There is only a slight increase, but it's not significant.

Redoing all the benchmarks just to prove a slight increase but still lagging behind overall is just beating a dead horse at this point.

crimson117 - Wednesday, March 12, 2008 - link

Clock for Clock is an irrelevant metric. So what if 2.0GHz on a C2D is faster than 2.0GHz on a Phenom?

Performance per Dollar or Performance per Watt are much more relevant metrics.

backtomac - Wednesday, March 12, 2008 - link

All those metrics are important. Each individual will place a different importance on each metric.

flipmode - Wednesday, March 12, 2008 - link

Says you. It's relevant to at least two people here.

JarredWalton - Thursday, March 13, 2008 - link

Actually, I'd say clock-for-clock is one of the worst comparisons to make, short of two things:

1) If available clock speeds are similar (they're not - Core 2 Quad tends to have about a 33% advantage in clock speed)

2) If you want to look purely at the architectural performance

While item two looks interesting at first, you have to remember that architecture and design ultimately have a large impact on clock speed. Which is better: more pipeline stages and higher clock speeds, or fewer pipeline stages with lower clock speeds? If you think you know the answer, go work for Intel or AMD. In truth, there is no correct answer - both approaches have merits, and so we end up with a balancing act.

Pentium 4 (NetBurst) is often regarded as going too far in the way of pipeline stages. While Prescott certainly had some problems due to the pipeline stage count, Northwood and the current Penryn are actually not that far off in terms of stages. The difference is that Penryn (and Core 2 in general) has made numerous changes to the underlying architecture that make the pipeline stage count less important now.

Clock for clock, I'd imagine an updated 486 core could compete very well in today's market. That is, IF you could actually make such a core. Just think about it: four pipeline stages, give it some more cache, add in SSE and x64 support, put two or four cores on a chip, and then run that sucker at 3.0GHz! But each stage in the old 486 requires so much work to be done that you could never actually get such a design to scale to 2.0GHz on current technology, let alone 3-4GHz.

So when someone says clock-for-clock comparisons are irrelevant, I largely tend to agree. Why don't we do a "clock-for-clock" comparison of a tractor-trailer diesel engine and a formula one engine? Or a "clock-for-clock" comparison of apples and oranges? The latter takes things to an extreme to illustrate a point, but in the case of the former all you really could end up determining is that large diesel engines and racing engines are vastly different.

K10 and Penryn might not be quite so different, but they are dissimilar in enough ways that the best way to compare them really ends up being a large selection of real world performance metrics. Sure, comparing a 2.4GHz Penryn and a 2.4GHz Phenom X4 gives us some idea of how the designs match up, but at the end of the day what really matters is price, performance, stability/reliability, and power requirements (the latter also impacting noise).

flipmode - Sunday, March 16, 2008 - link

Whether or not there is value in comparing IPC is pretty subjective. I happen to disagree with you - I find it valuable, at least for the time being while both AMD and Intel are offering CPUs at comparable clockspeeds (1.6GHz to 3.2GHz, generally). If AMD's were all less than 2.5GHz and Intel's were all more than 2.5GHz then it would be much less useful info to me to know how they performed at the same clockspeed since they didn't operate at the same clockspeed. But it's not the end of the world if Anandtech chooses not to look at such things.

mindless1 - Friday, March 14, 2008 - link

Clock for clock is quite relevant because prices change and people overclock. It doesn't mean someone only picks whichever has more performance per MHz or higher MHz or any such thing; rather, within a family it is quite relevant to know how a chip performs clock for clock, and then the user does the math to further evaluate other alternatives.

murphyslabrat - Wednesday, March 12, 2008 - link

However, it does give a foundation for comparing prices and clockspeeds not explicitly compared. It also helps to evaluate potential gain from overclocking.

You are right, there are better methods. This one (clock-for-clock performance), while not a very valuable metric in and of itself, does allow better extrapolation.

Cygni - Wednesday, March 12, 2008 - link

It's called a PREview for a reason. ;) I'm sure there will be an AT rundown of the chip later. This short blurb is only to tell us about the TLB fix.