NVIDIA Acquires AGEIA: Enlightenment and the Death of the PPU

by Derek Wilson on February 12, 2008 11:00 AM EST - Posted in GPUs

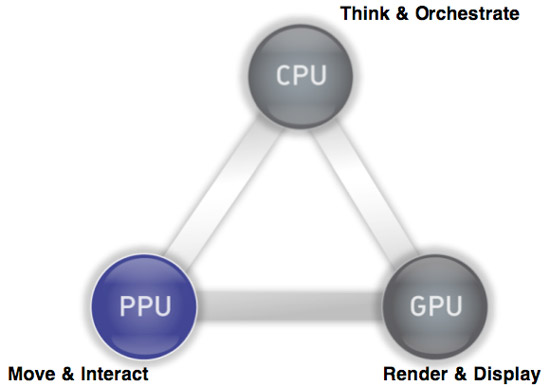

Last week, NVIDIA announced that they have agreed to acquire AGEIA. As most here probably know, AGEIA is the company that makes the PhysX physics engine and acceleration hardware. The PhysX PPU (physics processing unit) is designed to accelerate the processing of physics calculations in order to offer developers the potential to deliver more realistic and immersive worlds. The PhysX SDK lets developers write game engines that can take advantage of either a CPU or dedicated hardware.

While this has been a terrific idea in theory, the benefits of the hardware are currently a tough sell. The problem stems from the fact that game developers can't rely on gamers having a physics card, and thus they are unable to base fundamental aspects of gameplay on the assumption of physics hardware being present. It is similar to the issues we saw when hardware 3D graphics acceleration first came onto the scene, only the impact from hardware 3D was more readily apparent. The long-term benefit from physics hardware is less in what you see and more in the basic principles of how a game world works.

Currently, the way the developers make use of PhysX is based on the lowest common denominator performance: how fast can it run on a CPU. With added hardware, effects can scale (more particles, more objects, faster processing, etc.), but you can't get much beyond "eye candy" style enhancements; you can't yet expect game developers to implement worlds dependent on hardware accelerated physics.
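To make the "lowest common denominator" pattern concrete, here is a minimal sketch of how a game might scale effects physics with the hardware while keeping gameplay physics fixed. This is not the PhysX SDK API; `EffectBudget`, `effect_budget`, `gameplay_object_count`, and all of the numbers are hypothetical names and values chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EffectBudget:
    """How much purely cosmetic physics the game is allowed to simulate."""
    max_particles: int
    max_debris: int


# Hypothetical baseline tuned for a CPU-only machine (illustrative numbers).
BASELINE = EffectBudget(max_particles=500, max_debris=50)


def effect_budget(accelerated: bool, scale: float = 4.0) -> EffectBudget:
    """Return the cosmetic budget: baseline on CPU, scaled up with an accelerator."""
    if not accelerated:
        return BASELINE
    return EffectBudget(
        max_particles=int(BASELINE.max_particles * scale),
        max_debris=int(BASELINE.max_debris * scale),
    )


def gameplay_object_count() -> int:
    """Gameplay-critical bodies stay the same on every machine,
    because the developer cannot assume an accelerator is present."""
    return 20


if __name__ == "__main__":
    for accelerated in (False, True):
        print(accelerated, effect_budget(accelerated), gameplay_object_count())
```

Only the cosmetic budget changes with the hardware; the number of objects that actually affect gameplay is identical everywhere, which is why accelerated physics has so far stayed in "eye candy" territory.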

The NVIDIA acquisition of AGEIA would serve to change all that by bringing physics hardware to everyone via a software platform already tailored to scale physics capabilities and performance to the underlying hardware. How is NVIDIA going to be successful where AGEIA failed? After all, not everyone has or will have NVIDIA graphics hardware. That's the interesting bit.

PPU/GPU, What's the Difference?

Why Dedicated Hardware?

Ever since AGEIA hit the scene, GPU makers have been jumping up and down saying "we can do that too." Sure, physics can run on GPUs. Both graphics and physics lend themselves to a parallel architecture. There are differences though, and AGEIA claimed to be able to handle massively parallel and dependent computations much better than anything else out there. And their claim is probably true. They built hardware to do lots of physics really well.

The problem with that is the issue we mentioned above: developers aren't going to push this thing to the limits by creating games centered on dedicated physics hardware. The type of "effects" physics that developers are currently using the PhysX hardware for is also well suited to a GPU. Certainly complex systems with collisions between rigid and soft bodies happening everywhere would drown a GPU, but adding particles or more fragments from explosions or more gibs or debris is not a problem for either NVIDIA or AMD.

The Saga of Havok FX

Of course, that's why Havok FX came along. Attempting to make use of shaders to implement a physics engine, Havok FX would have enabled developers to start looking at using more horsepower for effects physics without regard for dedicated hardware. While the contemporary GPUs might not have been able to match up to the physics processing power of the PhysX hardware, that really didn't matter because developers were never going to push PhysX to its limits if they wanted to sell games.

But, now that Intel has acquired Havok, it seems that Havok FX is no longer a priority. Or even a thing at all from what we can find. Obviously Intel would prefer all the physics processing stay on the CPU at this point. We can't really blame them; it's good business sense. But it is certainly not the most beneficial thing for the industry as a whole or for gamers in particular.

And now, with no promise of a physics SDK to support graphics cards, and slow adoption of PhysX hardware, NVIDIA saw itself with an opportunity.

Seriously: Why Dedicated Hardware?

In light of the Intel / Havok situation, NVIDIA's acquisition of AGEIA makes sense. They get the PhysX physics engine that they can port over to their graphics hardware. The SDK is already used in many games across many platforms. Adding NVIDIA GPU acceleration to PhysX instantly provides all owners of games that make use of PhysX with hardware for physics acceleration when running on an NVIDIA GPU.

As we pointed out, compared to current GPUs, dedicated physics hardware has more potential physics power. But we also are not going to see a high level of relative physics complexity implemented until developers can be sure consumers have the hardware to handle it. The GPU is just as good as the PhysX card at this stage in hardware accelerated physics. At this point in time there is no benefit to all the power that sits dormant in a PhysX card, and the GPU offers a good solution to the kinds of effects developers are actually using PhysX to implement.

The PhysX software engine is capable of scaling complexity and performance if there is hardware present, and with NVIDIA GPUs essentially being that hardware there is certainly an instantaneously larger install base for PhysX. This totally tips the scales away from the need for dedicated hardware and towards the replacement of the PPU with the GPU at this point in time. We'll look at the future in a second.

32 Comments


kilkennycat - Tuesday, February 12, 2008

Larrabee...Marketing speak for Intel's GPU-killer wannabee. Now about 2 years out? ROTFL.....

recoiledsnake - Tuesday, February 12, 2008

Isn't DirectX 11 supposed to ship with physics support? AMD/ATI has said accelerated physics is dead till DirectX 11 comes out, and I tend to agree with them.

http://news.softpedia.com/news/GPU-Physics-Dead-an...

http://www.xbitlabs.com/news/multimedia/display/20...

I am shocked that the author didn't even mention DirectX 11 in this two page article.

PrinceGaz - Tuesday, February 12, 2008

OpenPL would be better than a MS dependent solution like DirectPhysics. AFAIK though, no work is being done towards developing an OpenPL standard.

It's a shame that CUDA is nVidia specific as otherwise it might be viable as a starting-point for OpenPL development.

mlambert890 - Thursday, February 14, 2008

Why? I mean really. Why would some theoretical "OpenPL" be "better"? Unless you have some transformational OSS agenda, it wouldnt be.

Come back to practical reality. Im sure its important to niche Linux fans or the small Mac installed base that everything be NOT based on DirectX, so go ahead and spark that up.

For (literally) 90+% of gamers, DirectX works out just fine and DirectPhysics *will* be the best solution.

A theoretical "OpenPL" would be the same as OpenGL. Marginally supported on the PC, loudly and often rudely evangelized by the OSS holy warriors and, ultimately, not all that much different from a proprietary API in practical application when put in context *on Windows*.

Griswold - Thursday, February 14, 2008

If I build the church, will you come and preach it?

MrKaz - Tuesday, February 12, 2008

"Who is NVIDIA's competition when it comes to their burgeoning physics business? It's certainly not AMD now that Havok is owned by Intel, and with the removal of AGEIA, we've got one option left: Intel itself."

I think you are wrong. Microsoft is the principal actor of this “useful technology”.

If Microsoft adds physics to the DX11 API (or even some add-on to the DX10) with the processing done at CPU and/or GPU level AMD will not lose nothing. In fact any Intel/Havok or Nvidia/Ageia implementation might not be even supported. So there goes the Intel and Nvidia investment down the drain.

Of course you are right by excluding Microsoft now because they didn’t show anything yet while Ageia and Havok have real products. But I think it is all up to DirectX.

Resuming I think it’s all up to Microsoft for physics to succeed because I don’t see different implementations to succeed or to get support from developers.

In the end AMD might even have the final word if get to use the Intel version or the Nvidia version ;) or wait for Microsoft…

Mr Alpha - Tuesday, February 12, 2008

Two things that weren't discussed that should have been:

1. We are already GPU limited in most games, so where exactly are we supposed to get this processing power for physics from? Will I in the future have to buy a second 8800GTX for $500, instead of a PhysX card for $99, like I can do now?

2. Hasn't there been some rumours about Direct Physics, where Microsoft does vendor neutral, GPU accelerated physics?

spidey81 - Tuesday, February 12, 2008

I could be mistaken on this, but hasn't Nvidia decided to start putting onboard graphics on ALL motherboards with their chipsets? Wouldn't that be a great way to have a second GPU to handle physics. Albeit, it may be an underpowered GPU, but it may work well enough to offload physics from the CPU. Like it was pointed out, beats having that horsepower sitting there going to waste.

7Enigma - Tuesday, February 12, 2008

I had the same question until I re-read the "official" position of Nvidia. They don't plan (for mainstream market) to integrate the chip into upcoming gfx cards, rather its a technology they can keep in-house until the time arrives where it could be beneficial.

At some point if/when physics becomes the norm instead of the feature, I could see the company offering a hybrid gfx chip as the higher-end part(s). Just imagine in 5 years the current AMD 3870X2 was a single high-end gpu, with an attached physics chip (using this as an example since IMO it had been the most effective dual chip solution in real-world performance to date, Nvidia's previous try not so much). That would be practical, again as long as the software is coded for it.

Here's hoping THAT is the future.....I don't want ANOTHER piece of equipment that requires upgrading at every new build.

wingless - Tuesday, February 12, 2008

Intel is going down in 2010! Thats all I'll say about that lol.

Seriously though, if AMD manages to get on board with this PhysX thing, their Fusion CPU's and CrossfireX will make a helluva lot of sense. Think about GPU physics on the processor itself, motherboard north bridge, and GPU all adding their powers together to run games with tons of physics. An AMD/ATI or AMD/Nvidia box would make more gaming sense than using Intel CPU/GPU's. This could be a big help to the ailing AMD right now...