ATI Radeon HD 3870 X2: 2 GPUs 1 Card, A Return to the High End

by Anand Lal Shimpi on January 28, 2008 12:00 AM EST - Posted in GPUs

Last May, AMD introduced its much-delayed Radeon HD 2900 XT at $399. In a highly unexpected move, AMD indicated that it would not be introducing any higher-end graphics cards. We discussed this in our original 2900 XT review:

"In another unique move, there is no high end part in AMD's R600 lineup. The Radeon HD 2900 XT is the highest end graphics card in the lineup and it's priced at $399. While we appreciate AMD's intent to keep prices in check, the justification is what we have an issue with. According to AMD, it loses money on high end parts which is why we won't see anything more expensive than the 2900 XT this time around. The real story is that AMD would lose money on a high end part if it wasn't competitive, which is why we feel that there's nothing more expensive than the 2900 XT. It's not a huge deal because the number of people buying > $399 graphics cards is limited, but before we've started the review AMD is already giving up ground to NVIDIA, which isn't a good sign."

AMD has since released more graphics cards, including the competitive Radeon HD 3870 and 3850, but it still lacks a high-end offering. The end of 2007 saw a slew of graphics cards that brought GeForce 8800 GTX-class performance to the masses at lower price points, but nothing faster. Considering we have yet to achieve visual perfection in PC games, there's still a need for even faster hardware.

At the end of last year, both AMD and NVIDIA hinted at bringing back multi-GPU cards to help round out the high end. The idea is simple: take two fast GPUs, put them together on a single card, and sell the result as one faster video card.

These dual-GPU designs are even more important today because of the SLI/CrossFire limitations that exist on various chipsets. With few exceptions, you can't run SLI on anything other than an NVIDIA chipset, and unless you're running an AMD or Intel chipset, you can't run CrossFire. These self-contained SLI/CrossFire graphics cards, however, will work with any chipset.
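To make the chipset rule concrete, here is a minimal Python sketch of the simplified compatibility matrix the paragraph describes. The function name `supported_multi_gpu_options` and the option labels are hypothetical, the sketch deliberately ignores the "few exceptions" mentioned above, and "VIA" simply stands in for any chipset vendor other than NVIDIA, AMD or Intel.

```python
# Hypothetical sketch of the simplified rule described above:
# SLI needs an NVIDIA chipset, CrossFire needs an AMD or Intel chipset,
# and a single dual-GPU card works regardless. Real-world exceptions are ignored.

def supported_multi_gpu_options(chipset_vendor: str) -> list[str]:
    vendor = chipset_vendor.strip().lower()
    options = []
    if vendor == "nvidia":
        options.append("SLI")
    if vendor in ("amd", "intel"):
        options.append("CrossFire")
    # A self-contained card like the Radeon HD 3870 X2 occupies one slot,
    # so it does not depend on the chipset's multi-GPU support.
    options.append("dual-GPU single card")
    return options


for vendor in ("NVIDIA", "AMD", "Intel", "VIA"):
    print(vendor, "->", supported_multi_gpu_options(vendor))
```

In this model, only the dual-GPU card appears in every row, which is the point the paragraph makes about boards like the 3870 X2.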

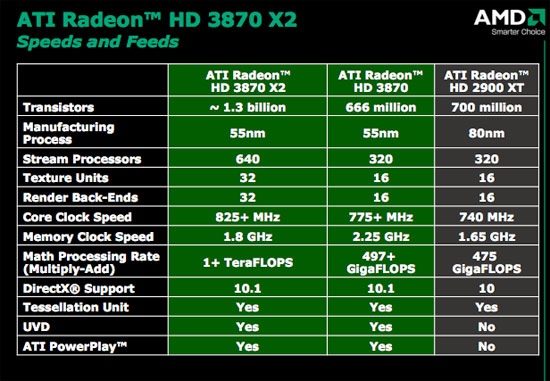

AMD is first out of the gate with the Radeon HD 3870 X2, based on what AMD is calling its R680 GPU. Despite the new codename, the product name tells the whole story: the Radeon HD 3870 X2 is made up of two 3870s on a single card.

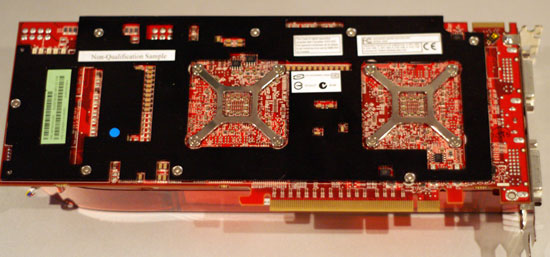

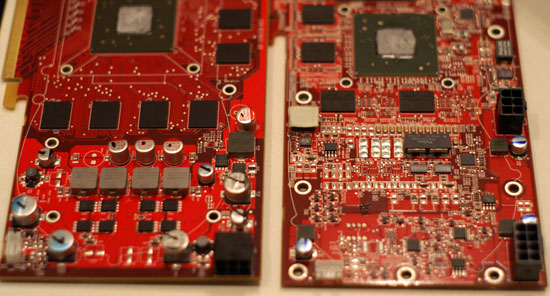

The card is long: at 10.5", it's the same length as a GeForce 8800 GTX or Ultra. AMD is particularly proud of its PCB design, which is admittedly quite compact despite carrying more than twice the silicon of a single Radeon HD 3870.

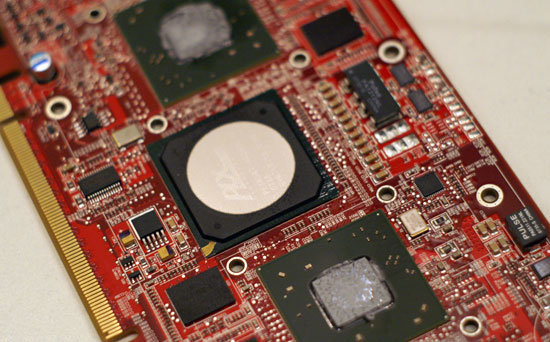

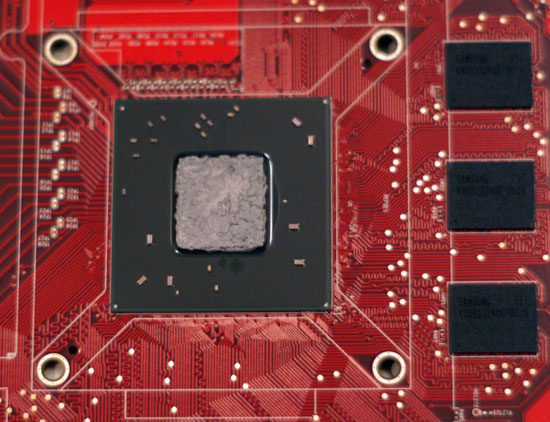

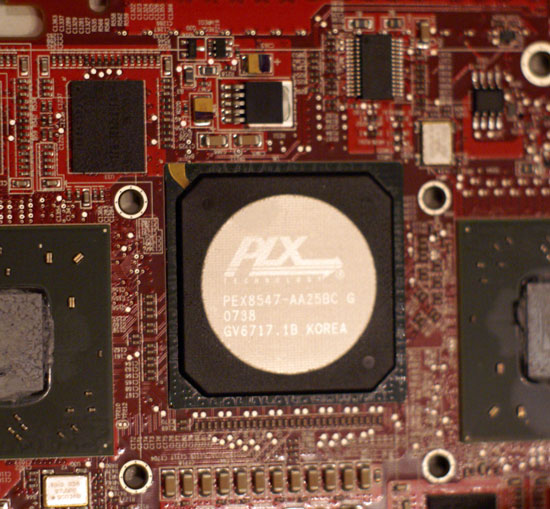

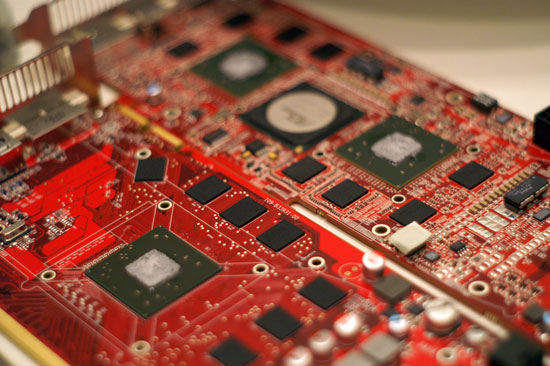

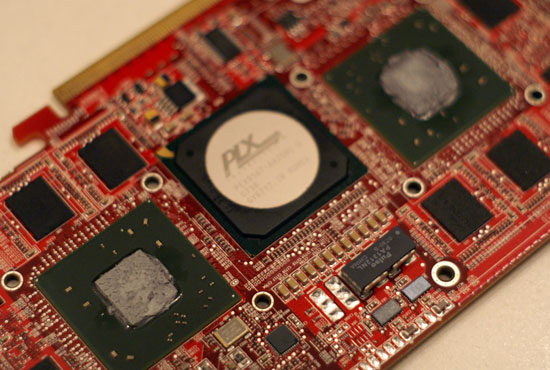

On the board we've got two 3870 (RV670) GPUs, separated by a 48-lane PCIe 1.1 bridge (no PCIe 2.0 support here, guys). Each GPU gets 16 lanes, and the remaining 16 lanes run directly to the PCIe connector and out to the motherboard's chipset.

Two RV670 GPUs surround the PCIe bridge chip

Thanks to the point-to-point nature of the PCI Express interface, that's all you need for this elegant design to work.
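The lane budget described above can be checked with a little arithmetic. This Python sketch assumes the standard PCIe 1.1 signaling figures (2.5 GT/s per lane with 8b/10b encoding), which the article does not spell out, and computes the theoretical one-direction bandwidth of each x16 link hanging off the bridge. The `LINKS` table and the function name are illustrative labels, not AMD terminology.

```python
# Sketch: lane budget of the X2's 48-lane PCIe 1.1 bridge and the theoretical
# per-direction bandwidth of each link. Signaling figures are standard PCIe 1.1
# values assumed here, not numbers taken from the article.

PCIE_1_1_GT_PER_S = 2.5       # raw transfers per second per lane, in GT/s
ENCODING_EFFICIENCY = 8 / 10  # 8b/10b line coding: 8 data bits per 10 bits sent

BRIDGE_LANES = 48
LINKS = {"GPU 0": 16, "GPU 1": 16, "upstream to motherboard": 16}


def link_bandwidth_gb_per_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth of a PCIe 1.1 link, in GB/s."""
    gbit_per_lane = PCIE_1_1_GT_PER_S * ENCODING_EFFICIENCY  # 2.0 Gb/s of data
    return lanes * gbit_per_lane / 8  # bits -> bytes


# Every one of the bridge's 48 lanes is assigned to exactly one x16 link.
assert sum(LINKS.values()) == BRIDGE_LANES

for name, lanes in LINKS.items():
    print(f"{name}: x{lanes}, {link_bandwidth_gb_per_s(lanes):.1f} GB/s per direction")
```

Because all three links are x16, each GPU has as much bandwidth to the bridge as the whole card has to the motherboard, and the two GPUs share that single upstream x16 link.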

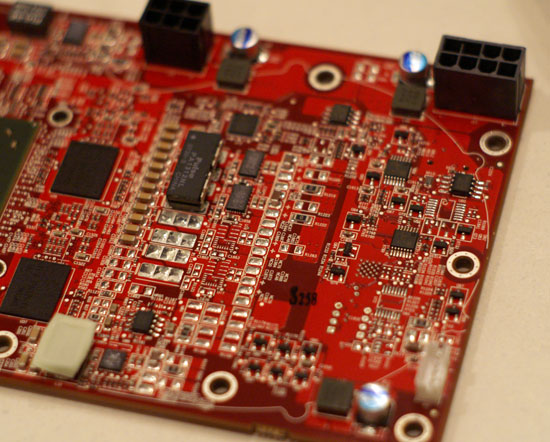

Each GPU has its own 512MB frame buffer, and the board's power delivery has been reworked to supply two 3870 GPUs.

The Radeon HD 3870 X2 is built on a 12-layer PCB, compared to the 8-layer design used by the standard 3870. The more layers a PCB has, the easier routing and ground/power isolation become; AMD says this is why it can run the GPUs on the X2 faster than the GPU on the single-GPU board. A standard 3870 runs its GPU at 775MHz, while both GPUs on the X2 run at 825MHz.
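To put the clock bump in perspective, this short sketch computes the per-GPU core clock increase from the two figures quoted above. It is plain arithmetic only; a higher core clock does not necessarily translate one-to-one into higher frame rates, so treat the result as a clock comparison, not a performance prediction.

```python
# Per-GPU core clock increase of the Radeon HD 3870 X2 over a standard 3870,
# using the clock speeds quoted in the article.

STANDARD_3870_CORE_MHZ = 775  # single-GPU Radeon HD 3870
X2_CORE_MHZ = 825             # each GPU on the Radeon HD 3870 X2


def percent_change(new: float, old: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100


uplift = percent_change(X2_CORE_MHZ, STANDARD_3870_CORE_MHZ)
print(f"Per-GPU core clock increase: {uplift:.1f}%")  # -> "Per-GPU core clock increase: 6.5%"
```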

Memory speed is reduced, however: the Radeon HD 3870 X2 uses slower, more readily available GDDR3 to keep board cost under control. While the standard 3870 uses GDDR4 at a 2.25GHz data rate, the X2 runs its GDDR3 at a 1.8GHz data rate.
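The data rates above become memory bandwidth once you multiply by the width of the memory bus. The article does not state the bus width, so this Python sketch assumes a 256-bit interface per GPU (the RV670's memory interface width); under that assumption it compares a standard 3870 with each GPU on the X2. The function name is illustrative.

```python
# Peak memory bandwidth per GPU: data rate x bus width.
# ASSUMPTION: a 256-bit memory bus per GPU; the article does not state it.

BUS_WIDTH_BITS = 256


def memory_bandwidth_gb_per_s(data_rate_gt_s: float,
                              bus_width_bits: int = BUS_WIDTH_BITS) -> float:
    """Peak bandwidth in GB/s: transfers per second times bytes per transfer."""
    return data_rate_gt_s * bus_width_bits / 8


standard_3870 = memory_bandwidth_gb_per_s(2.25)  # 2.25GHz data rate GDDR4
x2_per_gpu = memory_bandwidth_gb_per_s(1.8)      # 1.8GHz data rate GDDR3

print(f"Radeon HD 3870:            {standard_3870:.1f} GB/s")
print(f"Radeon HD 3870 X2 per GPU: {x2_per_gpu:.1f} GB/s")
print(f"Reduction per GPU:         {(1 - x2_per_gpu / standard_3870) * 100:.0f}%")
```

The roughly 20% reduction depends only on the two data rates (1.8 / 2.25 = 0.8), so it holds whatever the actual bus width is, as long as both boards use the same width per GPU.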

AMD expects the Radeon HD 3870 X2 to be priced at $449, which is actually cheaper than a pair of 3870s, making it something of a bargain high-end product. We reviewed so many sub-$300 cards at the end of last year that we were a bit put off by the nearly $500 price tag at first; then we remembered how things used to be, and it seems that the 3870 X2 will be the beginning of a return to normalcy in the graphics industry.


74 Comments


boe - Monday, January 28, 2008

I really appreciate this article. The thing I'd really like to see in the next one is FEAR benchmarks.

I'd also appreciate a couple of older cards added for comparison, like the 7900 or the X1900.

Butterbean - Monday, January 28, 2008

"And we all know how the 3870 vs. 8800 GT matchup turned out"

Yeah, it was pretty close except for Crysis, where Nvidia got busted not drawing scenes out so as to cheat out an fps gain.

Stas - Monday, January 28, 2008

Conveniently, the tests showed how *2* GTs are faster than the X2 in most cases. The power consumption test only shows a *single* GT on the same chart as the X2.

geogaddi - Wednesday, January 30, 2008

Conveniently, most of us can multiply by 2.

ryedizzel - Monday, January 28, 2008

in the 2nd paragraph under 'Final Words' you put:

Even more appealing is the fast that the 3870 X2 will work in all motherboards:

but i think you meant to say:

Even more appealing is the fact that the 3870 X2 will work in all motherboards:

you are welcome to delete this comment when fixed.

abhaxus - Monday, January 28, 2008

I would really, really like to see a Crysis benchmark that actually uses the last level of the game rather than the built-in GPU bench. My system (Q6600 @ 2.86GHz, 2x 8800GTS 320MB @ 620/930) gets around 40fps with 'high' defaults (actually some very high settings turned on per tweakguides) on both of the default Crysis benchies, but only got around 10fps on the last map. Even on all medium, the game only got about 15-20fps on the last level. Performance was even lower with the release version; the patch improved performance by about 10-15%.

Of course I'd be interested to see how 2 of these cards do in Crysis :)

Samus - Monday, January 28, 2008

Far Cry is still unplayable at 1900x1200 (<30FPS), but it's really close. My 8800GT only manages 18FPS on my PC using the same ISLAND_DEM benchmark AT did, so to scale, the 3870 X2 will do about 27FPS for me. Maybe with some overclocking it can hit 30FPS. $450 to find out is a bit hard to swallow though :\

customcoms - Monday, January 28, 2008

Well, if you're still getting <30 fps on Far Cry, I think your PC is a bit too outdated to benefit from an upgrade to an HD3870 X2.

I assume you meant Crysis. This game is honestly poorly coded, with graphical glitches in the final scenes. With my 8800GT (and a 2.6GHz Opteron 165, 2GB RAM), I pull 50 FPS in the Island_Dem benchmark at 1680x1050 with a mixture of medium-high settings, 15 fps more than the 8800GTS 320MB I replaced it with. However, when you get to some of the final levels, the frame rate drops to 30 or less, and I am forced to drop to medium or a combination of medium-low settings at this point (perhaps my 2.6GHz dual-core CPU isn't up to 3GHz C2D snuff).

Clearly, the game was rushed to market (not unlike Far Cry). Yes, the visuals are stunning, and the storyline is decent, but I much prefer Call of Duty 4, where the visuals are SLIGHTLY less appealing, but the storyline is better, the game is more realistic, the controls are better, and I can crank everything to max without worrying about slowdowns in later levels. It's the only game I have ever immediately started replaying on veteran.

The point is, no card (or cards) appears able to really play Crysis with everything maxed at 1900x1200 or higher, and in my findings, the built-in timedemos do not realistically simulate the game's full demands in later levels.

swaaye - Wednesday, January 30, 2008

Yeah, armchair game developer in action!

In what way is CoD4 realistic, exactly? I suppose it does portray actual real military assets. It doesn't portray them in a realistic way, however.

Did you notice that Crysis models bullet velocity? It's not just graphical glitz.

Griswold - Monday, January 28, 2008

Ahh, gotta love the armchair developers who can see the game code just by looking at the DVD.