NVIDIA GeForce 8800 GT: The Only Card That Matters

by Derek Wilson on October 29, 2007 9:00 AM EST - Posted in GPUs

Getting Cocky: 8800 GT vs. the GTX

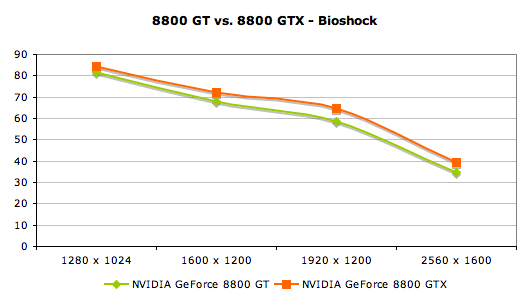

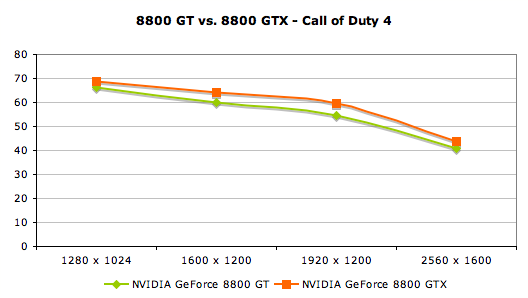

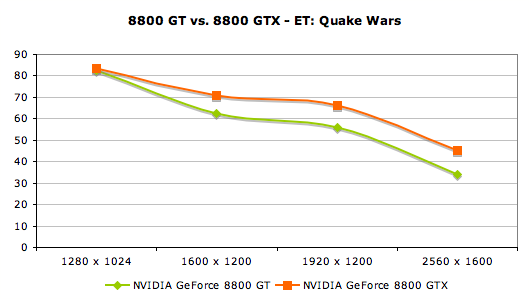

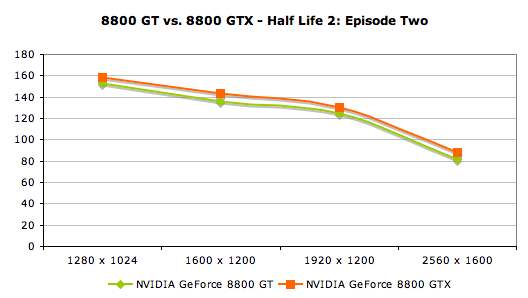

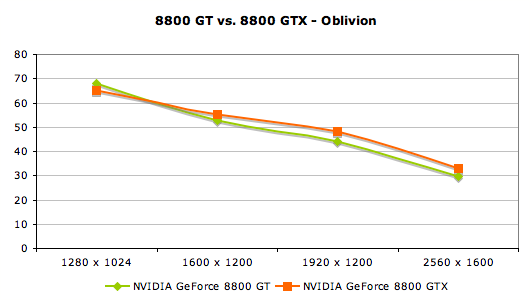

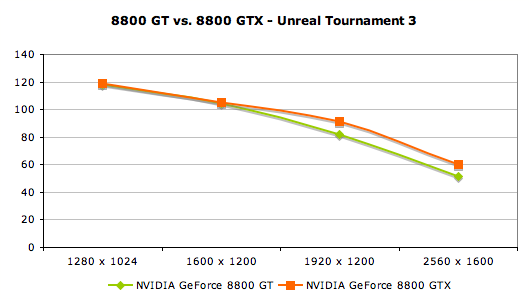

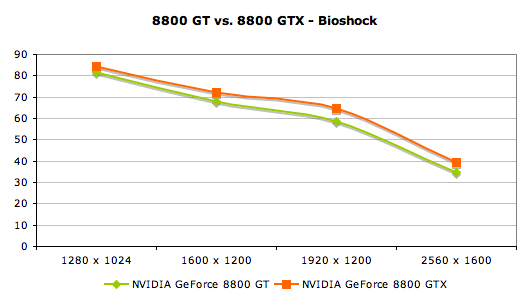

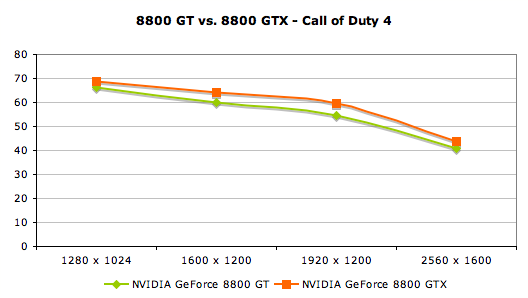

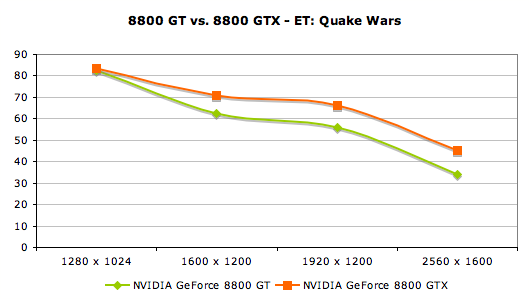

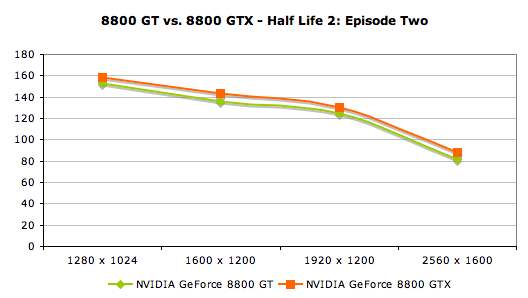

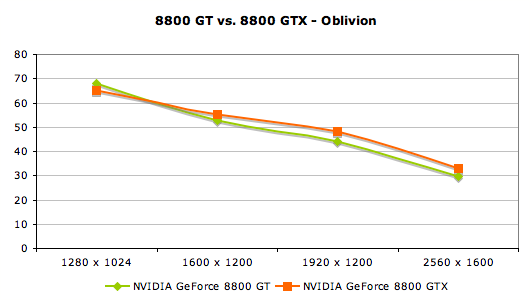

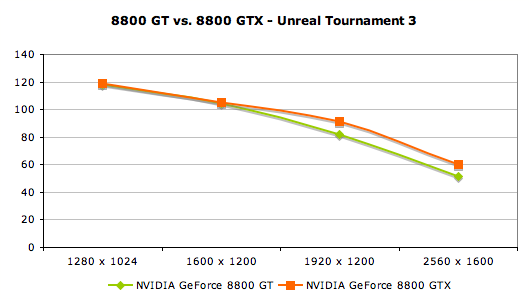

We've already established that NVIDIA's new 8800 GT is better than the 8800 GTS, and we've just proved that the 8800 GT is a much better value than the 8600 GTS, but how does it fare against the current king of the hill - the GeForce 8800 GTX?

We would be out of our minds to expect the 8800 GT to even remotely compete with the GTX, but the real question is this: how much more performance do you get for the extra money you spend on the GTX over the GT?

Because the 8800 GT does top the 8800 GTS, there is an even smaller gap between the 8800 GT and the GTX than between the existing $400 and $500 parts. With a smaller performance deficit than the GTS has, and a premium of about $250 to $300 for the 8800 GTX over the part that now falls one step down in performance, we really don't see much motivation to purchase the 8800 GTX.
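To make the value argument concrete, here is a minimal sketch of the arithmetic, assuming you plug in your own prices and frame rates. The function name and every number in the example call are hypothetical placeholders chosen for illustration; they are not measurements from this review.

```python
def premium_per_extra_percent(base_price, base_fps, top_price, top_fps):
    """Return the dollars paid per additional percent of performance
    when stepping up from the cheaper card to the faster one."""
    if top_fps <= base_fps:
        raise ValueError("the more expensive card must be faster")
    extra_cost = top_price - base_price
    # Performance gain expressed as a percentage of the cheaper card's result.
    extra_perf_pct = (top_fps - base_fps) / base_fps * 100.0
    return extra_cost / extra_perf_pct


# Hypothetical inputs only: substitute real street prices and the
# frame rates from whichever benchmark matters to you.
cost = premium_per_extra_percent(
    base_price=250.0, base_fps=50.0,   # placeholder "8800 GT" figures
    top_price=525.0, top_fps=60.0,     # placeholder "8800 GTX" figures
)
print(f"${cost:.2f} per extra percent of performance")
```

The smaller the performance gap for a given price premium, the higher this figure climbs, which is the reasoning behind our lukewarm view of the GTX.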

Of course, there are exceptions to this. People who own 30" displays and have deep pockets will still want the best of the best in their boxes, which means the 8800 GTX/Ultra SLI setup that will be necessary to get the most out of Crysis when the game finally makes its way onto shelves this fall. NVIDIA still owns the high-end market, and while it's not for everyone, it is there for those who need it.

But back to the real story: in spite of the fact that the 8800 GT doesn't touch the GTX, two of them will certainly beat it for either equal or less money.

90 Comments

Spacecomber - Monday, October 29, 2007 - link

It's hard to tell what you are getting when you compare the results from one article to those of another article. Ideally, you would like to be able to assume that the testing was done in an identical manner, but this isn't typically the case. As was already pointed out, look at the drivers being used. The earlier tests used nvidia's 163.75 drivers while the tests in this article used nvidia's 169.10 drivers.

Also, not enough was said about how Unreal 3 was being tested to know, but I wonder if they benchmarked the game in different manners for the different articles. For example, were they using the same map "demo"? Were they using the game's built-in fly-bys or were they using FRAPS? These kinds of differences could make direct comparisons between articles difficult.

spinportal - Monday, October 29, 2007 - link

Have you checked the driver versions? Over time drivers do improve performance, perhaps?

Parafan - Monday, October 29, 2007 - link

Well, the 'new' drivers made the GF 8600GTS perform a lot worse, but the higher ranked cards better. I don't know how likely that is.

Regs - Monday, October 29, 2007 - link

To blacken. I am a big AMD fan, but right now it's almost laughable how they're getting stepped on and kicked by the competition.

AMD's ideas are great for the long run, and their 65nm process was just a mistake since 45nm is right around the corner. They simply do not know how to compete when the heat is on. AMD is still traveling in 1st gear.

yacoub - Monday, October 29, 2007 - link

"NVIDIA Demolishes... NVIDIA? 8800 GT vs. 8600 GTS"

Well the 8600GTS was a mistake that never should have seen the light of day: over-priced, under-featured from the start. The 8800 GT is the card we were expecting back in the Spring when NVIDIA launched that 8600 GTS turd instead.

yacoub - Monday, October 29, 2007 - link

First vendor to put a quieter/larger cooling hsf on it gets my $250.

gamephile - Monday, October 29, 2007 - link

Dih. Toh.

CrystalBay - Monday, October 29, 2007 - link

Hi Derek, how are the temps under load? I've seen some results of the GPU pushing 88 degrees C plus with that anemic stock cooler.

Spacecomber - Monday, October 29, 2007 - link

I may be a bit misinformed on this, but I'm getting the impression that Crysis represents the first game that makes major use of DX10 features, and as a consequence, it takes a major bite out of the performance that existing PC hardware can provide. When the 8800GT is used in a heavy DX10 game context, does the resulting performance fall into the hardware class that we typically would expect from a $200 part? In other words, making use of the Ti-4200 comparison, is the playable performance only acceptable at moderate resolutions and medium settings?

We've seen something like this before. When DX8 hardware was available and people were still playing DX7 games on this new hardware, the performance was very good. Once true DX8 games started to show up, the hardware that first supported DX8 features (like the Ti-4200) struggled to actually run them.

Basically, I'm wondering whether Crysis (and other DX10 games that presumably will follow) places the 8800GT's $200 price point into a larger context that makes sense.

Zak - Monday, November 5, 2007 - link

I ran Vista for about a month before switching back to XP due to Quake Wars crashing a lot (no more crashes under XP). I ran a bunch of demos during that month, including Crysis and Bioshock, and I swear I didn't see a lot of visual difference between DX10 on Vista and DX9 on XP. Same for Time Shift (does it use DX10?). And all games run faster on XP. I really see no compelling reason to go back to Vista just because of DX10.

Zak