NVIDIA GeForce 8800 GT: The Only Card That Matters

by Derek Wilson on October 29, 2007 9:00 AM EST - Posted in GPUs

Power Consumption

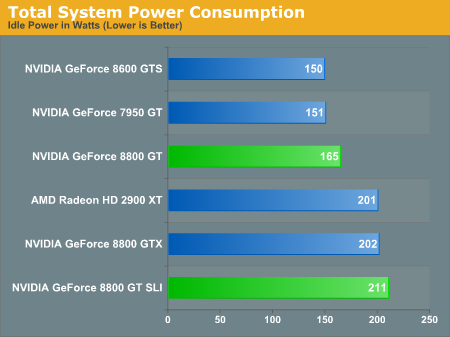

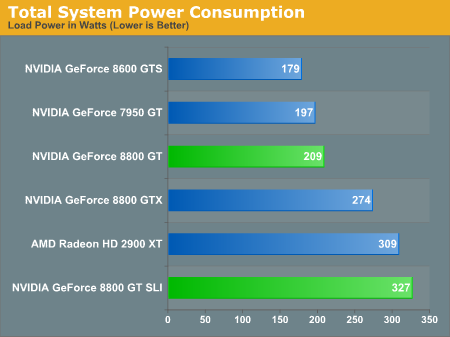

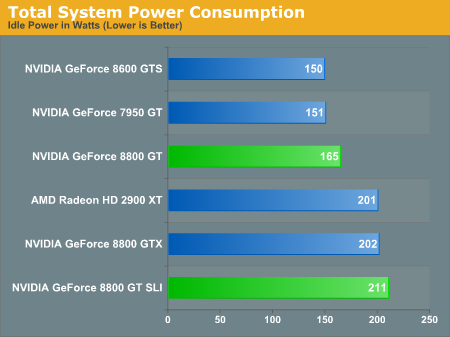

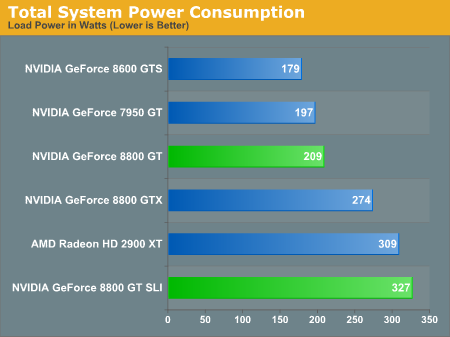

As this is NVIDIA's first 65nm part, it is certainly of interest to see how it stacks up to the current lineup in terms of power consumption. NVIDIA quotes the max power of the 8800 GT as 105W, but in the real world, we aren't just stressing the GPU. Let's take a look at system power draw under 3DMark06 (specifically the pixel shader test).

The 8800 GT draws less power than anything that competes with it in terms of performance. When G80 hit last year, we made a big deal out of how power related to performance. This card simply blows everything else away in terms of how little power is needed to attain incredible performance.

8800 GT SLI does draw more power than the 8800 GTX, but it also performs much better in cases where performance scales with SLI. For those who want high performance, power is generally less of an object, but it's good to know that 2x 8800 GT cards won't break the bank like a pair of 2900 XTs in CrossFire.
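The power-versus-performance comparison in this section can be made concrete as a simple frames-per-watt ratio. The sketch below is only an illustration: the `Measurement` type, the function names, and the `card_a`/`card_b` values are hypothetical placeholders, not figures from this review. Because the wattage is whole-system draw at the wall, the ratio also includes the CPU, drives, and power supply losses, so it is best used to compare cards tested in the same system.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    name: str
    avg_fps: float        # average frame rate in a given benchmark
    system_watts: float   # whole-system power draw at the wall under load


def fps_per_watt(m: Measurement) -> float:
    """Frames per second delivered per watt of total system power."""
    if m.system_watts <= 0:
        raise ValueError("system_watts must be positive")
    return m.avg_fps / m.system_watts


def rank_by_efficiency(results):
    """Return measurements sorted from most to least efficient."""
    return sorted(results, key=fps_per_watt, reverse=True)


# Placeholder values only: these are NOT measurements from this review.
samples = [
    Measurement("card_a", avg_fps=60.0, system_watts=240.0),
    Measurement("card_b", avg_fps=66.0, system_watts=330.0),
]

for m in rank_by_efficiency(samples):
    print(f"{m.name}: {fps_per_watt(m):.3f} fps/W")
```

With these placeholder numbers, the faster configuration ranks lower on efficiency, which is the kind of tradeoff described above for a dual-card setup against a single card.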

90 Comments

AggressorPrime - Monday, October 29, 2007 - link

I made a typo. Let us hope they are not on the same level.

ninjit - Monday, October 29, 2007 - link

This page has me very confused: http://www.anandtech.com/video/showdoc.aspx?i=3140...

The text of the article goes on as if the GT doesn't really compare to the GTX, except on price/performance:

Yet all the graphs show the GT performing pretty much on par with the GTX, with at most a 5-10fps difference at the highest resolution.

I didn't understand that last sentence I quoted above at all.

archcommus - Monday, October 29, 2007 - link

This is obviously an amazing card and I hope it sets a new trend for getting good gaming performance in the latest titles for around $200 like it used to be, unlike the recent trend of having to spend $350+ for high end (not even ultra high end). However, I don't get why a GT part is higher performing than a GTS, isn't that going against their normal naming scheme a bit? I thought it was typically: Ultra -> GTX -> GTS -> GT -> GS, or something like that.

mac2j - Monday, October 29, 2007 - link

I've been hearing rumors about an Nvidia 9800 card being released in the coming months .... is that the same card with an outdated/incorrect naming convention or a new architecture beyond G92?

I guess if Nvidia had a next-gen architecture coming it would explain why they don't mind wiping some of their old products off the board with the 8800 GT which seems as though it will be a dominant part for the remaining lifetime of this generation of parts.

MFK - Monday, October 29, 2007 - link

After lurking on Anandtech for two layout/design revisions, I have finally decided to post a comment. :D

First of all hi all!

Second of all, is it okay that nVidia decided not to introduce a proper next gen part in favour of this mid range offering? Okay so it's good and what not, but what I'm wondering is, something that the article does not talk about, is what the future value of this card is. Can I expect this to play some upcoming games (Alan Wake?) on 1600 x 1200? I know it's hard to predict, but industry analysts like you guys should have some idea. Also how long can I expect this card to continue playing games at acceptable framerates? Any idea, anyone?

Thanks.

DerekWilson - Monday, October 29, 2007 - link

that's a tough call ....

but really, it's up to the developers.

UT3 looks great in DX9, and Bioshock looks great in DX10. Crysis looks amazing, but it's a demo, not final code, and it does run very slowly.

The bottom line is that developers need to balance the amazing effects they show off with playability -- it's up to them. They know what hardware you've got and they choose to push the envelope or not.

I know that's not an answer, sorry :-( ... it is just nearly impossible to say what will happen.

crimson117 - Monday, October 29, 2007 - link

How much RAM was on the 8800 GT used in testing? Was it 256 or 512?

NoBull6 - Monday, October 29, 2007 - link

From context, I'm thinking 512. Since 512MB are the only cards available in the channel, and Derek was hypothesizing about the pricing of a 256MB version, I think you can be confident this was a 512MB test card.

DerekWilson - Monday, October 29, 2007 - link

correct.

256MB cards do not exist outside NVIDIA at this point.

ninjit - Monday, October 29, 2007 - link

I was just wondering about that too.

I thought I missed it in the article, but I didn't see it in another run through.

I see I'm not the only one who was curious