Low Power Server CPU Shoot-out

by Jason Clark & Ross Whitehead on July 17, 2007 12:15 AM EST. Posted in IT Computing

Benchmarking Low Voltage

In our last review we tested with Power Management features turned off, and also with them turned on. Since this article is focused on low power parts, and the industry is mostly focused on Performance/Watt, we decided we would only report results with all Power Management features enabled.

To configure our servers with all Power Management features on, we perform the following:

On Intel

In the BIOS ensure that Thermal Management is On/Enabled, C1 Enhanced Mode is On/Enabled, and EIST Support is On/Enabled.

On AMD

In the BIOS ensure that PowerNow is On/Enabled. Additionally, you must install AMD's Processor Driver in your OS.

For both platforms you must also set the Power Options in Control Panel to "Server Balanced Processor Power and Performance".

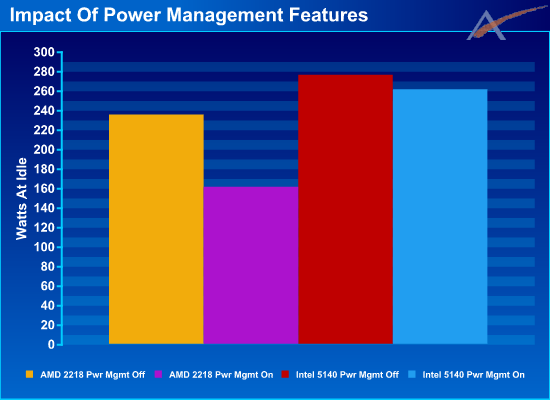

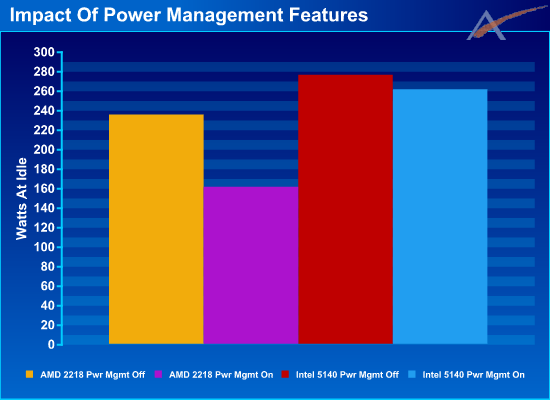

We wanted to measure the impact that the Power Management features had on a system at idle. The following graph shows the results.

With all Power Management features turned on, the AMD system uses 31% less power at idle than with them turned off. The Intel system, on the other hand, uses only 5% less with all Power Management features turned on, and that is still 62% more power than the AMD system.

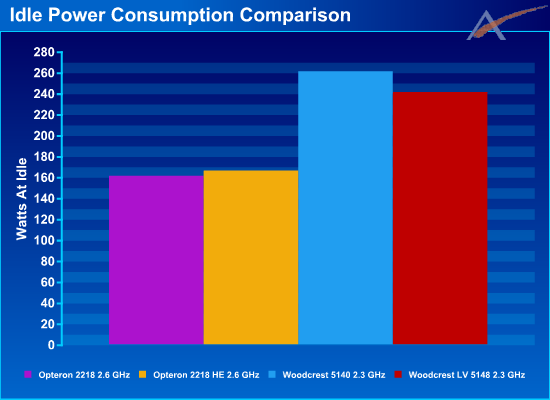

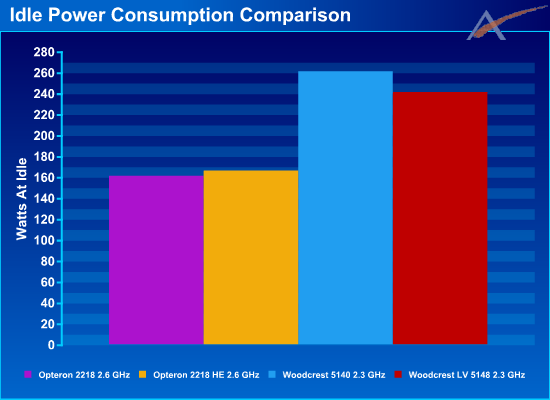

More on this later; first we want to explore the benefit that the low power parts have on system power. With all Power Management features turned on, we recorded idle power usage for the regular and low power parts at the same clock speed.

In the case of AMD, the low power parts actually consume 5 more watts, 2.5 watts/socket. We verified this a few times, and each time the results were consistent. We discussed this with AMD, and they were more concerned with the power consumption results under load, which we will get to in a few slides.

In the case of Intel, the low power parts use 20 watts less, 10 watts/socket. Again, this is at idle.

At the system level, the best savings at idle is only 8%. Is that consistent with what we will see under load? We will look at that shortly to find out.
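The per-socket figures above are simply the system-level delta divided across the two sockets in each server. A minimal Python sketch of that arithmetic follows; the function names are hypothetical, and the implied system idle power is only an inference from the quoted 8% and 20 watts (assuming the 8% refers to the Intel result), not a measured value.

```python
def per_socket_delta(system_delta_watts: float, sockets: int = 2) -> float:
    """Spread a system-level power delta evenly across the CPU sockets."""
    return system_delta_watts / sockets


def implied_baseline(savings_watts: float, savings_fraction: float) -> float:
    """Back out the baseline power implied by an absolute and a relative saving."""
    return savings_watts / savings_fraction


# Positive = low power parts draw more at idle, negative = they draw less.
amd_per_socket = per_socket_delta(+5.0)     # 5 W more across two sockets
intel_per_socket = per_socket_delta(-20.0)  # 20 W less across two sockets

# ASSUMPTION: the "best savings" of 8% is the Intel 20 W result.
# This yields an estimate of the regular-part Intel system's idle draw.
intel_idle_estimate = implied_baseline(20.0, 0.08)

print(f"AMD:   {amd_per_socket:+.1f} W/socket")
print(f"Intel: {intel_per_socket:+.1f} W/socket")
print(f"Implied Intel idle baseline: about {intel_idle_estimate:.0f} W")
```

Because the 8% and 20 watt figures are both rounded, the implied baseline should be read as a rough estimate only.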

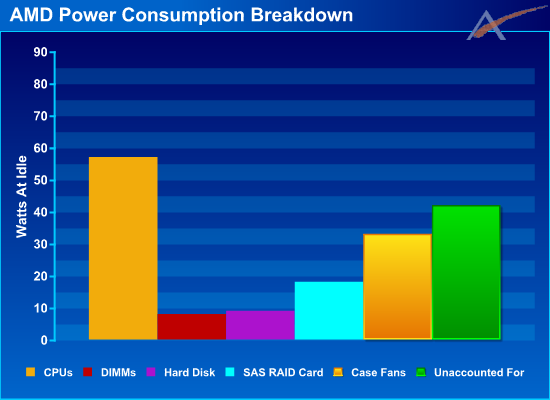

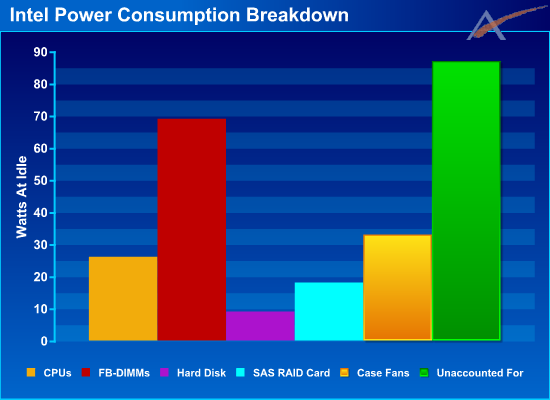

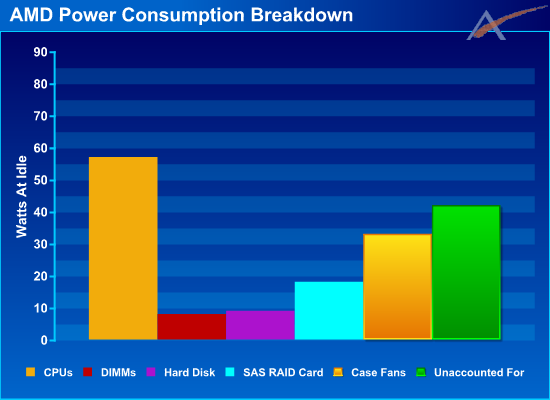

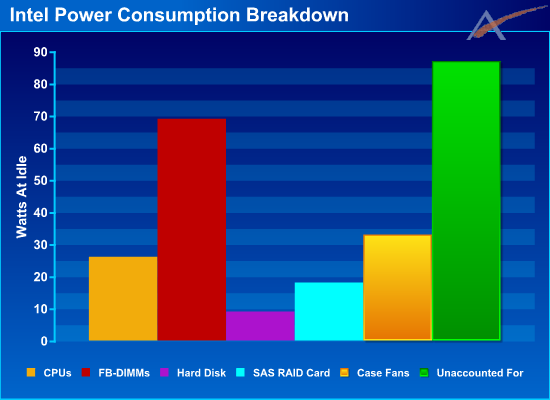

During testing, we often speculated about where all the power goes. We attempted to find out by measuring the power consumption of the entire system at idle, then removing a component and re-measuring. The difference in power can be attributed to the removed component. This is not a perfect way to determine component power requirements, but it does provide some general guidance as to where all of the power goes. The results are very interesting:

In the AMD system we see that the bulk of the power is consumed by the idle CPUs. Overlooking the "Unaccounted For", the next biggest consumer is the 5 case fans, followed by the SAS RAID Card. The "Unaccounted For" is everything which is not listed, including the inefficiency of the power supply and the motherboard and chipset.
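The remove-and-re-measure approach can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name is hypothetical, and the wattages in the example are placeholder values chosen for easy arithmetic, not the article's measurements.

```python
def attribute_power(baseline_watts, watts_with_component_removed):
    """Attribute idle power to components by subtraction.

    baseline_watts: whole-system idle power with everything installed.
    watts_with_component_removed: maps a component name to the whole-system
    idle power measured with only that component removed.
    """
    attributed = {
        name: baseline_watts - watts
        for name, watts in watts_with_component_removed.items()
    }
    # Whatever is left over (power supply losses, motherboard, chipset, ...)
    # is lumped together, as in the article's "Unaccounted For" bucket.
    attributed["Unaccounted For"] = baseline_watts - sum(attributed.values())
    return attributed


# PLACEHOLDER readings for illustration only, not measured data.
example = attribute_power(
    200.0,
    {"cpus": 140.0, "case_fans": 180.0, "sas_raid_card": 188.0},
)
for component, watts in example.items():
    print(f"{component}: {watts:.1f} W")
```

Each component is measured against the same full-system baseline, so only one attribute of the system changes between readings.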

In the Intel system we see that the CPUs require significantly less power than the AMD CPUs, 54% less to be exact. On the other hand the FB-DIMMs require 862% more power than the AMD DIMMs. (Yikes!) Also, the "Unaccounted For" is twice as much on the Intel system as the AMD system. Keep in mind both of these systems have identical power supplies, so the efficiency is roughly the same.
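Percentages such as "54% less" and "862% more" are easier to compare as multipliers. The Python sketch below converts them and then, as a rough cross-check, applies them to the two absolute figures the authors give in the comment thread (8 watts for all AMD DIMMs, 31 watts between the AMD and Intel CPUs). The function names are hypothetical, and the resulting wattages are estimates that assume those comment figures refer to the same idle measurements; they are not values reported in the article.

```python
def ratio_from_percent_more(percent: float) -> float:
    """'X% more' expressed as a multiplier of the reference value."""
    return 1 + percent / 100


def ratio_from_percent_less(percent: float) -> float:
    """'X% less' expressed as a multiplier of the reference value."""
    return 1 - percent / 100


fbdimm_ratio = ratio_from_percent_more(862)  # FB-DIMMs vs. AMD DIMMs
cpu_ratio = ratio_from_percent_less(54)      # Intel CPUs vs. AMD CPUs

# ASSUMPTION: 8 W for all AMD DIMMs, taken from the authors' comment reply.
fbdimm_watts_estimate = 8.0 * fbdimm_ratio

# ASSUMPTION: the 31 W CPU difference from the same reply is the idle delta,
# so amd - intel = 31 and intel = cpu_ratio * amd.
amd_cpu_watts_estimate = 31.0 / (1 - cpu_ratio)
intel_cpu_watts_estimate = amd_cpu_watts_estimate * cpu_ratio

print(f"FB-DIMMs draw about {fbdimm_ratio:.2f}x the AMD DIMM power")
print(f"Estimated FB-DIMM power: {fbdimm_watts_estimate:.0f} W")
print(f"Estimated AMD CPU power: {amd_cpu_watts_estimate:.0f} W")
print(f"Estimated Intel CPU power: {intel_cpu_watts_estimate:.0f} W")
```

Since every input is a rounded figure, the outputs are only good to within a few watts.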

Choosing the contenders

In previous articles, we've been asked to explain why we chose the parts we did. For this article we used the highest clocked low power parts from both Intel and AMD, and equally clocked regular power parts for comparison. Using equally clocked parts allows us to see that there is virtually no performance difference between low voltage parts and regular voltage parts. It also allows us to clearly determine the power savings of low power processors.

27 Comments

DeepThought86 - Tuesday, July 17, 2007

Based on these results, it looks like even though Barcelona will top out at 2.0 GHz but with the same TDP, it should be a killer in performance/watt and a great server processor.

LTG - Tuesday, July 17, 2007

Not for long - how hard would it be for Intel to come up with a non FB-DIMM solution? Then they would crush AMD because the CPUs actually have better power consumption.

Hans Maulwurf - Tuesday, July 17, 2007

The AMD numbers for power consumption of CPUs only seem far too high. I guess you didn't rearrange the memory modules when you took one CPU off the system. Thus you disabled half the memory modules as well.

Ross Whitehead - Tuesday, July 17, 2007

We did not rearrange the memory modules, as we only wanted to alter one attribute of the system between measurements so that we could attribute all difference in power to the one change.

If you consider that all of the AMD DIMMs only took 8 Watts total, and that the difference between AMD CPUs and Intel CPUs was 31 Watts total, I am fairly confident in the numbers.

TA152H - Tuesday, July 17, 2007

Are these articles really meant to mislead people, or are there actual performance differences between the low voltage parts and the normal ones? I was under the impression they were the same parts but were picked for their ability to perform at lower voltages, thus their IPC should be completely identical. But the charts do show some difference, which is kind of surprising. This makes no sense to me at all, considering what AMD has been saying, but it is possible. Do you guys know what's going on with this? Are they just cherry picked CPUs that run with lower voltage, or do they have differences that would alter IPC (most likely the L2 cache)? It might be that the test variances are just statistical scatter, but if this is so, it would make no sense at all to report performance data on both types of processors, so I don't get it.

Also, the reason you don't combine servers is fairly simple, and that last paragraph is mind-boggling it's so uninformed. If you run a server at 3% all day, except for say 30 minutes, and then your servers get pegged out, you might average 7% for the day, but for those moments when your two servers are getting hammered, you can't possibly merge them or you'd potentially suffer degraded performance. It's not the average that matters as much as the maximum, unless you can tolerate the degradation. Most people can not, and the cost of a server doesn't validate a loss of performance during peak times.

Jason Clark - Tuesday, July 17, 2007

Typically low power processors are picked based on yields, you are correct.

The assumption about combining servers is just not correct. If you look at an enterprise VM stack like VMware, they can move VMs around based on resource usage. If a VM is using most of the resources it can shuffle the other VMs around as required. Furthermore, VMware can allow for resource scheduling, whereby you can inform the stack that at 3:00AM this VM needs more resources... Just because you spike at 80-100% for 10 minutes by no means implies that you are now tied to being on one physical host...

Cheers.

TA152H - Tuesday, July 17, 2007

On the first part, you should remove one of the pairs of processors, either the lower power, and say what you just said. The fact you test both for performance strongly implies a difference where none exists, and in fact is just confusing. Why test both if they are the same? Wouldn't it be better to just say they have the same IPC, and clean up your charts some (except for the cost per watt type), and remove this source of confusion?

OK, with regards to VMWare, where do you get these extra resources from if you have gotten rid of the machine? Software is great, but if you don't have the hardware, how do you allocate these machines? I guess if you have a situation where one piece peaks at one time during the day, and another at another time, you could do something like this, so I'll grant you that it would work in some situations. In my experience, this is not typical though, and most of the time, you have "peak" hours where more people are just on and using all the servers more. And if you don't have the extra capacity sitting around on an underutilized server, there isn't much that will help it.

Alyx - Tuesday, July 17, 2007

In regards to saving money with servers, I'm sure that substantial amounts of cash that would warrant an upgrade of this type would only be called for in a case where there was some type of a server farm or at least enough servers to consolidate one or two. Saving $10-$20 a month on power would only be useful if you were saving it on 20+ servers.

Hell, if you are only swapping out one or two servers then the number of tech hours spent are going to eat up any monetary benefit for at least the first year's worth of power.

VooDooAddict - Tuesday, July 17, 2007

If you can consolidate with VMWare / Xen, etc., you'll get far better raw system power usage out of a couple of Dual Socket Quad Core systems running as hosts than running them all on physical with low voltage chips.

Cooling is another issue though, as once you get all those 8 cores working on VMs you'll have quite a lot of heat in a concentrated area.

duploxxx - Tuesday, July 17, 2007

Well, it will depend on your hardware. If you mean the currently available quadcore offers, for sure they do look interesting price-wise but are not interesting performance-wise in a virtual (hypervisor) environment. Even current Woodcrest systems have a major FSB limit with their dual 1333 FSB against current AMD K8 Opteron systems; in quad core CPU systems you just add raw CPU power on the same limit.

There are enough benchmarks providing this info, and if you have the chance to play with those systems, you will even notice it.

For real quadcore advantage you'll have to wait for the K10. Even if it is only at 2.0 GHz, with its updated dual mem controller, internal communication, shared cache and most important NPT feature it might even outperform 2.6-3.0 Clovers in virtualization.