A Messy Transition: Practical Problems With 32bit Addressing In Windows

by Ryan Smith on July 12, 2007 12:00 PM EST - Posted in Software

As we stated earlier, the inspiration behind this article is Supreme Commander, which was crashing during gameplay due to what we discovered was the game exhausting its user space. Without taking extreme and convoluted measures, most applications can do little besides (gracefully) crash when they've exhausted their user space. Server applications, which on the whole tend to be the kings of the memory hogs in the first place, tend to have some sort of compensation mechanism, but for more generalized applications like games this is not the case.

In this case, Supreme Commander was crashing late into big games on a system that was otherwise completely stable. No amount of testing could turn up a problem outside of Supreme Commander. Most telltale of all, the crashes only happened on large maps with large numbers of players and high quality settings; turning anything down made the crashing stop. Although this doesn't clearly point to a memory error, it narrows the list of possible problems to a few things. We suspect the pattern will be similar for everyone hitting the 2GB barrier: the affected application crashes under heavy load. Thankfully the problem is possible to debug, albeit with moderate difficulty.

With all of that said, I did not come up with the diagnosis or the solution myself. While the problem is quite obvious in retrospect, at the time I did not even take the 2GB barrier into consideration. As such, credit for the diagnosis and solution belongs to MadBoris of the Gas Powered Games forums, who identified Supreme Commander as hitting the 2GB barrier and published a solution for it, which we will cover here.

Getting to the root of the problem requires tools beyond what comes with Windows XP or Vista. The Task Manager, the logical tool for this job, doesn't track the information we need. At best, Task Manager can list how much data is in the various memory pools altogether, but this is not the metric we're looking for. What we actually need to know is how much of the application's share of the virtual address space has been allocated. The fundamental problem, after all, is running out of unused virtual address space to allocate, and for a multitude of reasons that figure can be much greater than the amount of memory the application is actually using. To find it we'll need Sysinternals' Process Explorer.
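Here is a toy model in Python of one of those reasons. On Windows, a program can reserve a range of address space without committing memory to back it, and the reservation uses up address space all the same. The class and function names below are hypothetical. This is a simplified sketch, not a binding to the real VirtualAlloc API, and it ignores other contributors such as loaded DLLs and address space fragmentation.

```python
# Toy model (not a Windows API binding): address space is used up when a
# region is *reserved*, but memory is only charged when pages are *committed*.

MB = 1024 ** 2
GB = 1024 ** 3


class ToyAddressSpace:
    """Hypothetical bookkeeping for one process's user-mode address space."""

    def __init__(self, user_space_bytes: int) -> None:
        self.limit = user_space_bytes
        self.reserved = 0   # loosely analogous to Process Explorer's Virtual Size
        self.committed = 0  # loosely analogous to Private Bytes

    def reserve(self, size: int, commit: int) -> None:
        """Reserve `size` bytes of address space and commit `commit` of them."""
        if size <= 0:
            raise ValueError("size must be positive")
        if commit > size:
            raise ValueError("cannot commit more than was reserved")
        if self.reserved + size > self.limit:
            # Fails on address space, no matter how little memory is committed.
            raise MemoryError("user-mode virtual address space exhausted")
        self.reserved += size
        self.committed += commit


def fill_until_exhausted(space: ToyAddressSpace, region: int, commit: int) -> int:
    """Reserve regions until the address space runs out; return how many fit."""
    count = 0
    while True:
        try:
            space.reserve(region, commit)
        except MemoryError:
            return count
        count += 1


space = ToyAddressSpace(2 * GB)
regions = fill_until_exhausted(space, region=64 * MB, commit=4 * MB)
print(f"{regions} regions, {space.reserved // MB} MB reserved, "
      f"{space.committed // MB} MB committed")
```

The model uses a 2GB user space and 64MB reservations that each commit only 4MB. It runs out of address space after 32 regions, by which point only 128MB has been committed. That is the same kind of failure as an application hitting the 2GB barrier while its memory usage still looks modest.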

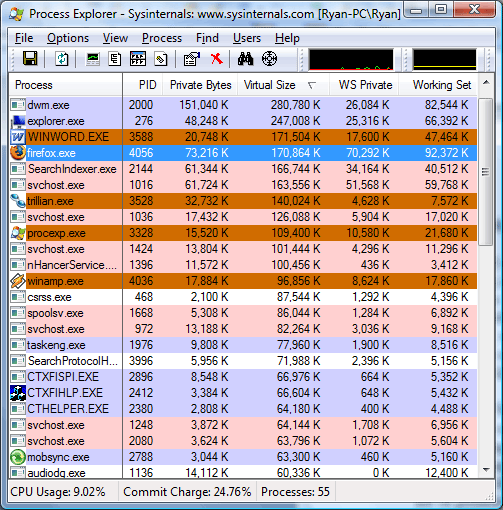

The above screenshot was taken as this paragraph was written, showing several different memory values for the running processes. The WS Private (Working Set Private) column lists how much physical memory the application is using, and this is the same value the Windows Task Manager reports as memory usage. The Private Bytes column is the total amount of memory the application is using. Last but not least is the Virtual Size column, which lists the amount of virtual address space allocated: the metric we're after.

We'll quickly focus on Microsoft Word as an example of the discrepancy between physical memory usage and virtual size. While Word is only using 17MB of physical memory, its virtual size is 170MB. That is 10 times the physical memory usage and 9 times the total memory usage. This is more or less a worst-case scenario, but it also shows just how misleading any memory usage figure, physical or total, is when trying to diagnose this kind of problem. Applications or games with high memory usage tend not to have nearly as severe a spread. Still, as we can see, it's possible for an application to hit the 2GB barrier well before it needs 2GB of physical memory.
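The arithmetic behind that comparison is simple enough to sketch. The helper below is a hypothetical illustration. It takes the two Process Explorer figures for Word from the screenshot, in MB, and reports the gap and the ratio between them.

```python
def address_space_spread(virtual_size: float, memory_in_use: float) -> tuple:
    """Return (gap, ratio) between allocated address space and memory in use."""
    if memory_in_use <= 0:
        raise ValueError("memory_in_use must be positive")
    return virtual_size - memory_in_use, virtual_size / memory_in_use


# Microsoft Word, as read off the Process Explorer screenshot (values in MB):
# WS Private (physical memory) = 17, Virtual Size = 170.
gap_mb, ratio = address_space_spread(170, 17)
print(f"gap = {gap_mb} MB, virtual size = {ratio:.0f}x physical usage")
```

Run as-is, it prints `gap = 153 MB, virtual size = 10x physical usage`.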

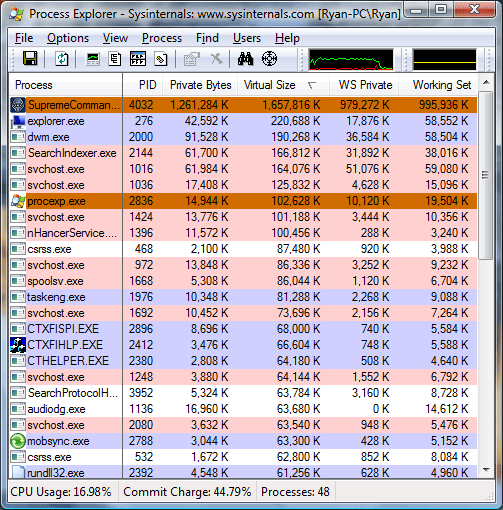

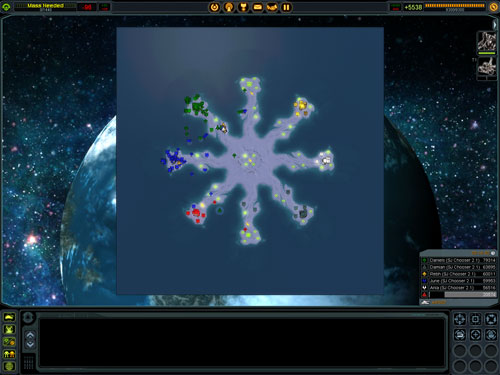

Getting to the meat of things, the process readout for Supreme Commander basically says everything that needs to be said. To prove an exaggerated point, we started a 6-player game with 5 AI players on a maximum-size map (81km x 81km) and set the game speed to maximum with maximum quality settings. Beforehand, we patched Supreme Commander and adjusted our system configuration to break the 2GB barrier. This actually isn't very playable for a variety of reasons: all the AI players quickly overtax the CPU, slowing the game down dramatically. However, it represents not only what can happen in bad situations but also what happens later into the game even in more manageable situations on smaller maps.
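The patch itself isn't spelled out on this page. Assume it is the usual one of setting the executable's LARGEADDRESSAWARE flag, as brink describes in the comments below. A minimal Python sketch of checking and setting that bit in a PE file might then look like the following. The function names are hypothetical, and the sketch works on an in-memory copy of the file rather than standing in for the tool the article used.

```python
import struct

# Bit in the COFF header's Characteristics field telling Windows that a
# 32-bit executable can handle user-mode addresses above 2GB.
IMAGE_FILE_LARGE_ADDRESS_AWARE = 0x0020


def _characteristics_offset(image: bytes) -> int:
    """Locate the 2-byte Characteristics field of a PE image's COFF header."""
    if image[:2] != b"MZ":
        raise ValueError("not an MZ/PE executable")
    # e_lfanew, at offset 0x3C of the DOS header, points at the "PE\0\0" signature.
    (pe_offset,) = struct.unpack_from("<I", image, 0x3C)
    if image[pe_offset:pe_offset + 4] != b"PE\0\0":
        raise ValueError("PE signature not found")
    # The COFF header follows the 4-byte signature. Characteristics comes after
    # Machine(2), NumberOfSections(2), TimeDateStamp(4), PointerToSymbolTable(4),
    # NumberOfSymbols(4) and SizeOfOptionalHeader(2): 18 bytes in.
    return pe_offset + 4 + 18


def is_large_address_aware(image: bytes) -> bool:
    """Report whether the LARGEADDRESSAWARE bit is set."""
    (flags,) = struct.unpack_from("<H", image, _characteristics_offset(image))
    return bool(flags & IMAGE_FILE_LARGE_ADDRESS_AWARE)


def set_large_address_aware(image: bytearray) -> None:
    """Set the bit in place; the PE checksum field is left untouched."""
    offset = _characteristics_offset(image)
    (flags,) = struct.unpack_from("<H", image, offset)
    struct.pack_into("<H", image, offset, flags | IMAGE_FILE_LARGE_ADDRESS_AWARE)
```

To use it on a real file:

1. Read the executable into a `bytearray`.
2. Call `set_large_address_aware` on it.
3. Write the result out, keeping the original as a backup.

The flag alone isn't enough on 32-bit Windows. The OS also has to be configured to give processes more than 2GB of user space, using the /3GB switch on XP or `bcdedit /set increaseuserva` on Vista.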

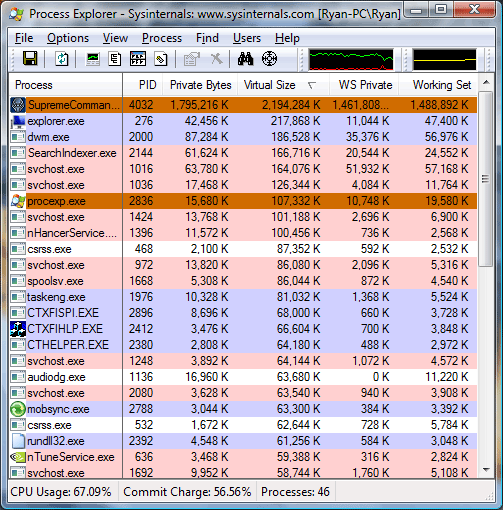

Just at the start of the game, our virtual size was already in excess of 1.6GB, dangerously close to the 2GB barrier. 16 minutes into the game, we had already hit a virtual size of 2.2GB, meaning that had we not broken the 2GB barrier, the game would already have crashed. At this point our total memory usage (Private Bytes) is only 1.7GB, with 1.4GB of that in physical memory, nearly maximizing the RAM usage of our 2GB system. The 0.5GB spread between memory usage and virtual size shows how deceptive memory usage is when identifying 2GB barrier issues.
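The numbers at the 16-minute mark can be checked with a few lines of arithmetic. This sketch hard-codes the readings quoted above, in GB. It treats the user-space limit as a simple threshold, which is a simplification. In practice the allocation that would push past the limit simply fails, and address space fragmentation can cause failures somewhat earlier. The names are hypothetical.

```python
DEFAULT_LIMIT_GB = 2.0       # default 32-bit Windows user space
RECONFIGURED_LIMIT_GB = 2.6  # the limit this test system was reconfigured for


def exceeds_limit(virtual_size_gb: float, limit_gb: float) -> bool:
    """True if this much allocated address space cannot fit under the limit,
    i.e. the allocation that got the process here would have failed."""
    return virtual_size_gb > limit_gb


# Readings from Process Explorer at the 16-minute mark (GB).
virtual_size_gb = 2.2
private_bytes_gb = 1.7

spread_gb = virtual_size_gb - private_bytes_gb
print(f"spread between Private Bytes and Virtual Size: {spread_gb:.1f} GB")
print("over the default 2GB limit:", exceeds_limit(virtual_size_gb, DEFAULT_LIMIT_GB))
print("over the 2.6GB limit:", exceeds_limit(virtual_size_gb, RECONFIGURED_LIMIT_GB))
print(f"headroom left at 2.6GB: {RECONFIGURED_LIMIT_GB - virtual_size_gb:.1f} GB")
```

The output shows:

- a 0.5GB spread between Private Bytes and Virtual Size;
- that 2.2GB would not fit under the default 2GB limit but does fit under the reconfigured 2.6GB one;
- roughly 0.4GB of headroom remaining, which the game went on to use up.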

Finally, 22 minutes into the game, the game crashes as the virtual size reaches the 2.6GB limit we reconfigured this system for. Perhaps the most troubling thing at this point is that Supreme Commander is not aware that it ran out of user space in its virtual address pool, as we are kicked out of the game with a generic error message. Unfortunately, Windows Vista reverted to a non-accelerated desktop at this point, preventing us from capturing a screenshot of the exact memory readouts or the error message.

69 Comments


brink - Thursday, July 12, 2007

It's a two-pronged solution for WinXP:

- You have to set the /3GB flag in your BOOT.INI file for the instance of Windows you're booting.
- Since Supcom doesn't have the LARGEADDRESSAWARE flag, you also have to patch the EXE using the tool mentioned in the article. Someone created a batch file that uses the tool to patch the Supcom EXE; it's easily found in the official Supcom forums.

I mention this since the article doesn't really say how they did it (and they used Vista, which is another box to contend with). Since HardOCP did a comparison a while back between Supcom performance in XP and Vista, I've really only installed and used Supcom in XP. With our fix for a 4GB machine, XP has remained stable, but we did have one crash in a 40KM map game on Gentleman's Reef. (The machine I regularly use still has 2GB; I just stay away from 81KM maps.)

I don't like the article's focus on FPS in Supcom, mainly because I don't look at Supcom as an FPS-centric game at all. If you've played, you know that the in-game timer will start to crawl when a slow computer enters the game, or when you have 7 computers each with 1,000 units on an 81KM map. 1 second of game time will take 2 seconds, or much, much worse. It would have been approx 100x cooler if the benchmark were "it took this much before the timer started to skew".

jay401 - Thursday, July 12, 2007

lol funny, now that I am paging through the article I see you mention this very issue. Good!

jay401 - Thursday, July 12, 2007

Well, you didn't address the 'WHY': why the game uses so much memory. Hopefully I shed a little light on that subject.

Also, on page 5 none of your graphs are labeled as to what they are measuring. Please note whether they're measuring FPS. That's my guess, but I'm not sure because they are unlabeled.

Thanks.

MadBoris - Thursday, July 12, 2007

You are right in part: the lead engineer did mention the units as one reason for the large memory consumption. (BTW, I had heard that they are all being/were re-rendered for November.) But there is another issue beyond that which becomes obvious. At the beginning of a game, the initial virtual address space differs by only about 150MB between a 20k map and an 81k map. Yet as the unit count climbs, the gap between the larger map and the smaller one grows somewhat exponentially. So something else is askew.

As Ryan mentioned, the whys and wherefores aren't really the point. This issue is a global one, and 2GB is now a real hard limit for games. We have the horsepower (CPU & GPU) for larger, more memory-hungry texture maps and higher resolutions, yet the 2GB memory limit for a game is a definitive roadblock to forward progress. So I am glad the issue is coming to the forefront.

As much confusion and fear as there is on this /3GB subject for laymen, this is still a great rabbit in the hat for those of us with 32-bit OSes, and if more driver writers get on the ball, the fears can subside. Hopefully devs like Crytek can continue to push demand for 64-bit with a nice 64-bit Crysis patch too. Then we can start making the transition, leaving 32-bit behind as drivers and apps make the transition too.

I think articles like these help the cause.

Ryan Smith - Thursday, July 12, 2007

To be honest, we didn't address why because it really isn't relevant. Even if Supreme Commander were done perfectly in every way, the result would have been the same once it reached the 2GB barrier.

gigahertz20 - Thursday, July 12, 2007

They should have made Vista 64-bit only to put pressure on the transition.

johnsonx - Friday, July 13, 2007

The problem with going 64-bit only this time around is that there are too many 32-bit programs around that simply won't run on 64-bit Windows. I have several myself that I depend on daily. They are slightly older programs that the developer doesn't intend to upgrade to 64-bit, but that doesn't change the fact that I need them. If these apps didn't work with Vista because it was released in 64-bit-only form, then I wouldn't be running Vista. Millions of others are (or would be) in this same situation, which would significantly harm Vista sales.

If the next version of Windows were made 64-bit only, around the 2010 time frame, I think that would be quite reasonable. By then most 32-bit-only programs will have been replaced or rendered obsolete.

I think Microsoft has handled the 32/64-bit issue correctly so far, for the most part. XP64 should have been ready sooner and should have been better supported though.

Related question for anyone who knows: I know retail Vistas include 32-bit and 64-bit on the same disc, and the user is free to install either. I also know that OEM Vistas include only the one version on the disc. What about the OEM keycodes though? Can you install a 64-bit Vista using the keycode that came with a 32-bit disc? Or has MS limited the keycode as well?

StygianAgenda - Thursday, June 5, 2008

To answer your question about the Windows Vista Retail package: I have 2 copies of Vista Ultimate retail, and it was packed with 2 DVDs. One is 32-bit; the other is the 64-bit build.

The set comes with a single key, and the key is bound to the 64-bit version. So if you opt to use the 32-bit version instead, you'll most likely have to call Microsoft's activation center and manually activate your copy. I've had to do this 4 times now due to hardware changes. Vista detects system changes, so if you remove 1 or 2 boards and boot into Vista, the system will automatically deactivate. Now, granted, the call to MS was fairly painless, but it's annoying all the same.

Out of the 4 Vista systems I own currently (3 of which are laptops), I've had great success with the OS itself. Unfortunately for me, the motherboard I've been using in my custom-built workstation is flaky... I've done my research though (tonight), and might have a fix in the works, if it works, that is. Otherwise, I'll be ordering a new motherboard and calling Microsoft yet again to transfer my license to the new configuration. By the way, they always ask "Is this copy running on more than one PC?".

In light of all the hoopla over the licensing scheme in Vista, I would hope that no one is stupid enough to try to use a Vista Retail license on multiple PCs, because it'll cause all of them to be blacklisted. Oh, and the new Vista Enterprise edition is only available in lots of 25 licenses or more, and requires a licensing server on the LAN with the deployed workstation licenses. It's either that, or expect a couple hundred extra MB or so of net traffic from all of the Vista Ent. workstations checking in with MS every time the systems are booted.

Makes me glad that I also work with Linux very heavily. All things considered, if Linux + WINE can run all of my critical Win32 apps, then these will be the last Vista licenses that I buy. I'll still keep Vista on my laptops, and I'll continue to run my XP workstations and 2K3 servers. But MS is going to have to do some really impressive work to convince me to migrate to their next platform... such as maybe... a *real* 3D desktop... which is already available, stable and totally badass on Linux (check out Kubuntu + compiz).

(btw: Sorry if I seem like I'm on a rant here... no offense intended toward the readers at all... it's just that when you work with OSes at the level that I do, after a while stupid mistakes made by OS vendors start to get beyond aggravating.)

instant - Saturday, July 21, 2007

And when we are talking about GAMES, how, if at all, is this relevant to the current discussion? x64 has been the way to go ever since it was released.

miahallen - Saturday, July 14, 2007

That is incorrect: Vista x64 will run x86 apps without problems (so will XP x64), and that's the nice thing about it. I ran x64 for quite a while and ran almost nothing but x86 apps on it.

The keycodes for x86 do not change for x64 installs; they use the same key. And the retail versions I bought did not have both versions on one disc. I had to order an x64 disc online (and pay $10 for S&H).