More Mainstream DX10: AMD's 2400 and 2600 Series

by Derek Wilson on June 28, 2007 8:35 AM EST

A Closer Look at RV610 and RV630

The RV6xx parts are similar to the R600 hardware we've already covered in detail. There are a few major differences between the two classes of hardware. First and foremost, the RV6xx GPUs include full video decode acceleration for MPEG-2, VC-1, and H.264 encoded content through AMD's UVD hardware. There was some confusion over this when R600 first launched, but AMD has since confirmed that UVD hardware is not at all present in their high end part.

We also have a difference in manufacturing process. R600 uses an 80nm TSMC process aimed at high speed transistors, while AMD's RV610- and RV630-based cards are fabbed on a 65nm TSMC process aimed at lower power consumption. The end result is that these GPUs will run much cooler and require much less power than their big brother, the R600.

Transistor speed between these two processes ends up being similar in spite of the focus on power over performance at 65nm. RV610 is built with 180M transistors, while RV630 contains 390M. This is certainly down from the huge transistor count of R600, but nearly 400M is nothing to sneeze at.

Aside from the obvious differences in transistor count and in the number of each type of unit (shaders, texture units, etc.), the only other major difference is in memory bus width. All RV610-based hardware will have a 64-bit memory bus, while RV630-based parts will feature a 128-bit connection to memory. Here's the layout of each GPU:

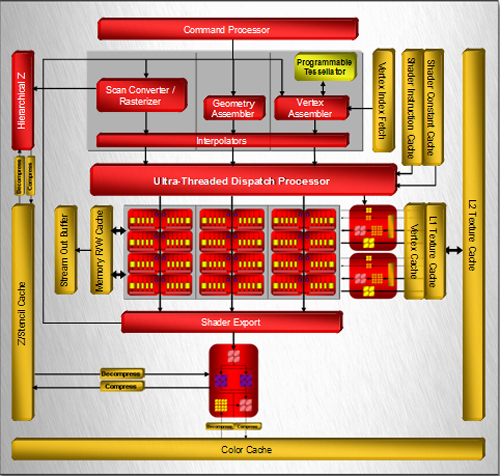

RV630 Block Diagram

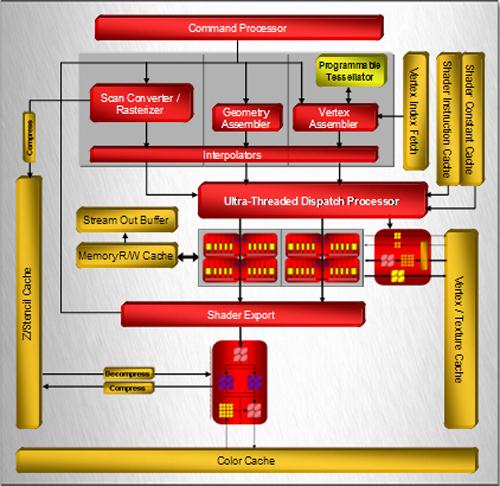

RV610 Block Diagram
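To put the bus widths above in perspective, here is a minimal sketch of how bus width translates into peak memory bandwidth. The memory data rate used here is a placeholder chosen purely for illustration, not a figure from AMD or from this article; only the 64-bit and 128-bit widths come from the text.

```python
def peak_memory_bandwidth_gbs(bus_width_bits: int, effective_rate_mtps: float) -> float:
    """Peak memory bandwidth in GB/s (1 GB = 1000 MB).

    bus_width_bits: width of the memory bus in bits.
    effective_rate_mtps: effective data rate in mega-transfers per second.
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * effective_rate_mtps / 1000.0


# Placeholder data rate (assumption for illustration only, not a spec).
ASSUMED_RATE_MTPS = 1600.0

rv610_bw = peak_memory_bandwidth_gbs(64, ASSUMED_RATE_MTPS)    # 64-bit bus
rv630_bw = peak_memory_bandwidth_gbs(128, ASSUMED_RATE_MTPS)   # 128-bit bus

print(f"RV610 (64-bit):  {rv610_bw:.1f} GB/s")
print(f"RV630 (128-bit): {rv630_bw:.1f} GB/s")
```

Whatever memory speed a given board ships with, the point stands: at equal memory clocks, the 128-bit RV630 has twice the peak bandwidth of the 64-bit RV610.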

One of the first things that jumps out is that both RV6xx based designs feature only one render back end block. This part of the chip is responsible for alpha (transparency) and fog, dealing with final z/stencil buffer operations, sending MSAA samples back up to the shader to be resolved, and ultimately blending fragments and writing out final pixel color. Maximum pixel fill rate is limited by the number of render back ends.

In the case of both current RV6xx GPUs, we can only draw out a maximum of 4 pixels per clock (or we can do 8 z/stencil-only ops per clock). While we don't expect extreme resolutions to be run on these parts (at least not in games), we could run into issues with effects that make heavy use of MRTs (multiple render targets), z/stencil buffers, and antialiasing. With the move to DX10, we expect developers to make use of the additional MRTs they have available, and lower resolutions benefit from AA more than high resolutions as well. We would really like to see higher pixel draw power here. Our performance tests will reflect the fact that AA is not kind to AMD's new parts, because of the lack of hardware resolve as well as the use of only one render back end.
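As a rough illustration of why a single render back end matters, the sketch below turns the per-clock figures above into a peak fill rate and a crude frame rate ceiling. The core clock, the overdraw factor, and the assumption that each extra render target costs one more pixel write are all simplifications made for this example; only the 4 pixels per clock and 8 z/stencil ops per clock come from the text.

```python
PIXELS_PER_CLOCK = 4   # from the article: color pixels written per clock
Z_ONLY_PER_CLOCK = 8   # from the article: z/stencil-only ops per clock

# Placeholder core clock in MHz (assumption for illustration only).
ASSUMED_CORE_CLOCK_MHZ = 700.0


def peak_rate_mops(ops_per_clock: int, core_clock_mhz: float) -> float:
    """Peak throughput in millions of operations per second."""
    return ops_per_clock * core_clock_mhz


def fps_ceiling(fill_rate_mpix_s: float, width: int, height: int,
                render_targets: int = 1, overdraw: float = 1.0) -> float:
    """Upper bound on frame rate if pixel output were the only limit.

    Simplification: every render target and every layer of overdraw
    costs one full pixel write per screen pixel.
    """
    pixels_written_per_frame = width * height * render_targets * overdraw
    return fill_rate_mpix_s * 1e6 / pixels_written_per_frame


color_fill = peak_rate_mops(PIXELS_PER_CLOCK, ASSUMED_CORE_CLOCK_MHZ)
z_fill = peak_rate_mops(Z_ONLY_PER_CLOCK, ASSUMED_CORE_CLOCK_MHZ)

# 1280x1024 with a single render target versus four MRTs, 3x overdraw.
single_rt = fps_ceiling(color_fill, 1280, 1024, render_targets=1, overdraw=3.0)
four_mrt = fps_ceiling(color_fill, 1280, 1024, render_targets=4, overdraw=3.0)

print(f"Color fill: {color_fill:.0f} Mpixels/s, z-only: {z_fill:.0f} Mops/s")
print(f"Fill-limited fps ceiling, 1 RT:   {single_rt:.0f}")
print(f"Fill-limited fps ceiling, 4 MRTs: {four_mrt:.0f}")
```

Real frame rates sit far below these ceilings because shading, texturing, and memory bandwidth also limit performance, but the scaling is the interesting part: under these assumptions, every additional render target divides the fill-limited ceiling accordingly.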

Among the notable features that we will see here are tessellation, which could have an even larger impact on low end hardware for enabling detailed and realistic geometry, and CFAA filtering options. Unfortunately, we might not see that much initial use made of the tessellation hardware, and with the reduced pixel draw and shading power of the RV6xx series, we are a little skeptical of the benefits of CFAA.

From here, let's move on and take a look at what we actually get in retail products.

96 Comments

TA152H - Thursday, June 28, 2007

While I see your points, I don't agree entirely. Does the 7600GT beat the 2400 in DX10? Well, no, it's not for that. So, I'd agree you wouldn't upgrade to the 2400 for DX9 performance, you might for DX10. But they didn't publish any results for DX10, so we don't know if it's even worthwhile for that.

You make a good point with timing on an upgrade, but I'll offer a few good reasons. Sometimes, you NEED something right now, either because your old card died, or because you're building a new machine, or whatever. So, it's not always about an upgrade. And, in these instances, I think most people will be very interested in DX10 performance since we're already seeing titles for them coming out, and obviously DX9 will cease to be relevant in the future as DX10 takes over. The advantages of DX10 are huge and meaningful, and DX9 can't die fast enough. But, it will take a while because XP and old computers represent a huge installed base, and software companies will have to keep making DX9 versions along with DX10. But who will want to run them degraded? Also, Vista itself will be pushing demand for DX10, and XP is pretty much dead now. I don't think you'll see too many new machines with this OS on it, but Vista of course will continue to grow. Would you want a DX9 card for Vista? I wouldn't, and most people wouldn't, so they make these DX10 cards that at least nominally support DX10. Maybe that's all this is, just products that are made to fill a need for the company to say they have inexpensive DX10 cards to run Vista on. I don't know, because they didn't put out DX10 results, instead wasted the review on DX9 which isn't why these cards were put out. And naturally, most of the idiots who post here eat it up without understanding DX9 wasn't the point of this product. I won't be surprised if DX10 sucks too, and these were just first attempts that fell short, but I'd like to see results before I condemn the cards for being poor at something that wasn't their focus.

leexgx - Thursday, June 28, 2007

?? How can XP be dead? Most offices will be using it probably for the next 5-8 years. For home users it's going to take 2-3 years before they upgrade, if they are even bothered. If it works, it works.

I'd love to use Vista, but drivers suck for it. M$ should demand fully working drivers before granting WHQL to a driver, not just "it works" and slap a WHQL cert on it (Nvidia's crappy chipset drivers and Creative's poor driver support {but that's M$'s fault for changing the driver model}..)

Most normal home users do not care what video card is in their PC, so the low end video parts would not bother them.

defter - Thursday, June 28, 2007

ROFL. If 2400 XT gets 9 fps in a "DX9" game at 1280x1024, do you expect that any real "DX10" game will be playable on it at any reasonable resolution?

It's quite clear by now that in order to really use those DX10 features (i.e. run games with settings where there is a noticeable image quality difference compared to the DX9 codepath), you need to have an 8800/2900 class card. DX10 performance of the 2600 series, let alone the 2400 series, is quite irrelevant.

Frumious1 - Thursday, June 28, 2007

Have you even tried any DX10 hardware? Because I have to say that it sounds like you're talking out of your ass! The reason I say that is because the current DX10 titles (which are really just DX9 titles with hacked in DX10 support) perform atrociously! Company of Heroes, for example, has performance cut in half or more when you enable DirectX 10 mode -- and that's running on a mighty 8800 GTX! (Yes, I own one.) It looks fractionally better (improved shadows for the most part) but the performance hit absolutely isn't worthwhile.

Company of Heroes isn't the only example of games that perform poorly under DirectX 10. Call of Juarez and Lost Planet also perform worse in DirectX 10 mode than they do in DirectX 9 on the same hardware (all this testing having been done on HD 2900 XT and 8800 series hardware by several reputable websites - see FiringSquad among others). You don't actually believe that crippled hardware like the 8500/8600 or 2400/2600 will somehow magically perform better in DirectX 10 mode than the more powerful high-end hardware, do you?

Extrapolate those performance losses over to hardware that is already about one third as powerful as the 8800/2900 GPUs, and this low-end DirectX 10 hardware is simply a marketing attempt. If it's slower in DX9 mode and the high-end stuff suffers in performance, why would having fewer ROPs, Shader Units, etc. help matters?

This is blatant marketing -- marketing that people like you apparently buy into? "The 2600 isn't intended to perform well in DirectX 9. That's why it has DirectX 10 support! Wait until you see how great it performs in DirectX 10 -- something cards like the 7600/7900 and X1950 type cards can't run at all because they lack the feature. Blah de blah blah...." Don't make me laugh! (Too late.)

Radeon 9700 was the first DirectX 9 hardware to hit the planet, followed by the (insert derogatory adjectives) GeForce FX cards several months later. I bring this up because we're seeing the exact same thing in reverse this time: NVIDIA's 8800 hardware appears to be pretty fast all told, and almost 9 months after the hardware became available ATI's response can't even keep up! Anyway, how long did it take for us to finally get software that required DirectX 9 support? I would say Half-Life 2 is about as early as you can go, and that came out in November 2004. That was more than two years after the first DirectX 9 hardware (as well as the DirectX 9 SDK) was released -- August 2002 for the Radeon 9700, I believe.

Sure, there were a few titles that tried to hack in rudimentary DirectX 9 support, but it wasn't until 2005 that we really started to see a significant number of games requiring DirectX 9 hardware for the higher-quality rendering modes. Given that DirectX 10 requires Windows Vista, I expect the transition to be even slower! I have a shiny little copy of Windows Vista sitting around my house. I installed it, played around with it, tried out a few DirectX 10 patched titles... and I promptly uninstalled. (Okay, truth be told I installed it on a spare 80GB hard drive, so technically I can still dual-boot if I want to.) Vista is a questionable investment at best, high-end DirectX 10 hardware is expensive as well, and the nail in the coffin right now is that we have yet to see any compelling DirectX 10 stuff. Thanks, but I'll pass on any claims of DX10 being useful for now.

Jedi2155 - Friday, June 29, 2007

Farcry was February 04, and that was definitely full DX9 support.

coldpower27 - Friday, June 29, 2007

That was DX8 largely with some DX9 shaders in. But the majority of the code in use was still in DX8 mode.

We're talking about native DX9 games, which would be something on the magnitude of Oblivion or Neverwinter Nights 2.

titan7 - Saturday, June 30, 2007

d3d9 is basically d3d8, but with SM2.0 instead of SM1. Just because Oblivion doesn't support SM1 doesn't mean it is any more or less of a d3d9 title than one that also supported sm1 or OpenGL.

TA152H - Thursday, June 28, 2007

With any technology, the initial software and drivers is going to be sub-optimal, and not everyone is going to be playing at the same resolutions or configurations. But, these cards aren't made for the ultimate gaming experience, they are low end cards to perform tasks that are not so demanding well enough for the mainstream user.

What you don't seem to understand is, not everyone is a dork that plays games all day. Some people actually have lives, and they don't need high end hardware to shoot aliens. For you, sure, get the high end stuff, but that's not what you compare these cards to. $60 card versus $800? Duh. Why would you even make the comparison? You make the comparison of how well they perform for their target, which is a low end, feature rich card. Will it work well with Vista and mainstream type of apps? Does the feature set and performance justify the cost?

Only an idiot would use this type of card for a high end gaming computer, and only the same type of person would even compare it to that.

LoneWolf15 - Thursday, June 28, 2007

"With any technology, the initial software and drivers is going to be sub-optimal, and not everyone is going to be playing at the same resolutions or configurations. But, these cards aren't made for the ultimate gaming experience, they are low end cards to perform tasks that are not so demanding well enough for the mainstream user."

Yes, and by the time the technology and drivers (in this case, DirectX 10) ARE optimal, these cards will be replaced by a completely new generation, making them a pretty poor purchase at this time. Remember the timetable for going from DirectX 8 to DirectX 9?

Derek is completely right at this point. If you're buying a card, you should buy based on what kind of DirectX 9 performance you're going to get, because by the time REAL DX10 games come out (and I'm going to make a bet that will be a minimum of 12 months from now, and I mean for good ones, not some DX-9 game with additional hacks) there will be product refreshes or new designs from both ATI and nVidia. Buying a card solely for DirectX 10 performance, especially a low-to-midrange one, is completely silly. If it's outclassed now, it will be far worse in a year. It really makes sense to either a) buy a minimum of a 320MB Geforce 8800GTS, or b) stick with upper-middle to high-end last-gen hardware (read: Radeon x19xx, Geforce 79xx) until there's a reason to upgrade.

defter - Thursday, June 28, 2007

Yes, it would be interesting to know the performance of the 2400 Pro. After all, the faster 2400 XT got a whopping 8.9 fps at 1280x1024 with no FSAA and no AF in Rainbow Six, and 9.3 fps in Stalker with the same settings. I wonder if the 2400 Pro gets more than 6 fps?

I actually don't see what is the point of 2400 XT. If somebody needs just multimedia functionality and doesn't care about gaming, then $50 2400 Pro/8400 GS is a cheaper choice. If somebody cares about gaming, then the 2400 XT is useless since 8600GT/2600 Pro are much faster and are only slightly more expensive.