ATI Radeon HD 2900 XT: Calling a Spade a Spade

by Derek Wilson on May 14, 2007 12:04 PM EST - Posted in GPUs

R600 Overview

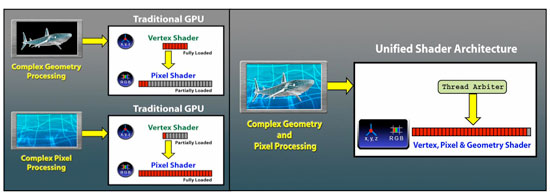

From a very high level, R600 has the same capabilities we saw in G80, where each step in the pipeline runs on the same hardware. Stepping way back, there are a lot of similarities, because the same goals need to be accomplished: data comes into the GPU, gets set up for processing, shader code runs on the data, and the result either heads back for another pass through the shaders or moves on to be rendered out to the framebuffer.

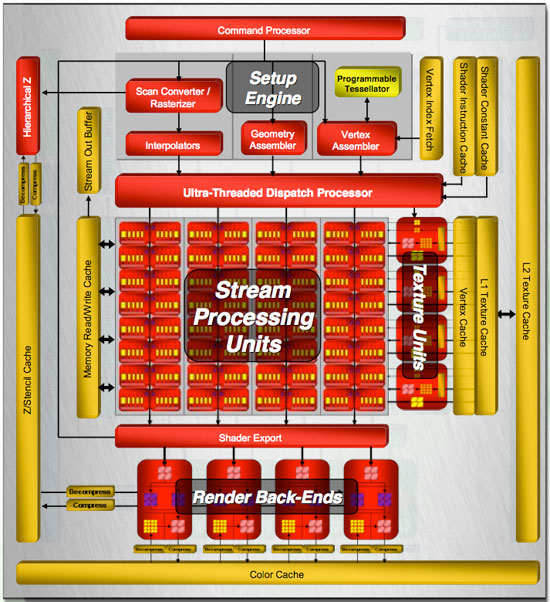

The obvious points are that R600 is a unified architecture and that it supports DX10. The set of requirements for DX10 is very firm this time around, so we won't see any variation in basic feature support. AMD and NVIDIA are free to go beyond the DX10 spec, but these extra features might not be exposed through the Microsoft API without a little tweaking. AMD includes one such feature, a tessellator unit, which we'll talk about more later. For now, let's take a look at the overall layout of R600.

Our first look shows a huge amount of stream processing power: 320 SPs all told. These are a little different from NVIDIA's SPs, and over the next few pages we'll explain why. Rather than a small number of SPs in each of eight groups, our block diagram shows that R600 has a large number of SPs in each of four groups. Each of these four groups is connected to its own texture unit, while all four share a connection to the shader export hardware and a local read/write cache.

All of this is built on TSMC's 80nm process and uses in the neighborhood of 720 million transistors. All other R6xx parts will be built on a 65nm process with far fewer transistors, making them much smaller and more power efficient. The R600 core clock is on the order of 740MHz, with memory running at 825MHz.
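As a rough sanity check on these figures, peak shader arithmetic throughput can be estimated from the SP count and the core clock. This is a back-of-envelope sketch, not an AMD specification: it assumes the 320 SPs divide evenly across the four groups, that every SP completes one multiply-add (counted as two floating-point operations) per clock, and it uses the approximate 740MHz figure quoted above. Real shaders rarely keep every SP busy, so the result is an upper bound.

```python
# Back-of-envelope peak shader throughput for R600.
# Assumptions (not from AMD documentation): SPs split evenly across groups,
# and every SP completes one multiply-add (MADD = 2 FLOPs) per clock.

SP_COUNT = 320               # stream processors, from the block diagram
SP_GROUPS = 4                # SPs are organized into four groups
CORE_CLOCK_HZ = 740e6        # "on the order of 740MHz"
FLOPS_PER_SP_PER_CLOCK = 2   # assumed: one multiply plus one add


def sps_per_group(total_sps: int, groups: int) -> int:
    """Number of SPs in each group, assuming an even split."""
    if total_sps % groups:
        raise ValueError("SPs do not divide evenly across groups")
    return total_sps // groups


def peak_gflops(sps: int, clock_hz: float, flops_per_clock: int) -> float:
    """Theoretical peak in GFLOPS; ignores scheduling and memory stalls."""
    return sps * clock_hz * flops_per_clock / 1e9


if __name__ == "__main__":
    print(f"SPs per group: {sps_per_group(SP_COUNT, SP_GROUPS)}")
    peak = peak_gflops(SP_COUNT, CORE_CLOCK_HZ, FLOPS_PER_SP_PER_CLOCK)
    print(f"Theoretical peak: {peak:.1f} GFLOPS")
```

Under these assumptions the sketch reports 80 SPs per group and a theoretical peak of about 473.6 GFLOPS; treat that as a ceiling rather than a measured number.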

The memory clock is lower this time around, but bandwidth is higher, because R600 implements a 512-bit memory bus. While we're on the subject of memory, AMD has revised its Ring Bus architecture for this generation, which we'll delve into later. Unfortunately, we won't be able to really compare it to NVIDIA's implementation, as NVIDIA won't go into any detail with us on its internal memory buses.
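The trade-off between a lower memory clock and a wider bus comes down to simple arithmetic: peak bandwidth is the bus width in bytes multiplied by the effective transfer rate. The sketch below assumes double data rate signaling (two transfers per clock) at the 825MHz quoted above; the memory type is an assumption here, so the result is an estimate. The 256-bit case is a hypothetical comparison, not a real product.

```python
# Peak memory bandwidth = bus width in bytes x effective transfers per second.
# Assumption: double data rate memory, i.e. 2 transfers per clock.


def peak_bandwidth_gbps(bus_width_bits: int, mem_clock_hz: float,
                        transfers_per_clock: int = 2) -> float:
    """Theoretical peak bandwidth in GB/s, where 1 GB = 1e9 bytes."""
    bytes_per_transfer = bus_width_bits / 8
    transfers_per_second = mem_clock_hz * transfers_per_clock
    return bytes_per_transfer * transfers_per_second / 1e9


if __name__ == "__main__":
    # R600's 512-bit bus at the quoted 825MHz memory clock.
    print(f"512-bit @ 825MHz: {peak_bandwidth_gbps(512, 825e6):.1f} GB/s")
    # Hypothetical comparison: the same memory clock on a 256-bit bus.
    print(f"256-bit @ 825MHz: {peak_bandwidth_gbps(256, 825e6):.1f} GB/s")
```

With these assumptions the 512-bit bus works out to about 105.6 GB/s, twice what the same clock would deliver over a 256-bit bus, which is how a slower memory clock can still yield more bandwidth.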

And speaking of things NVIDIA won't go into detail on, AMD was good enough to share very low-level details, including information on cache sizes and the shader hardware implementation. We are very happy to spend time talking about this, and hopefully AMD will inspire NVIDIA to open up a little more and go deeper into its own underlying architecture.

To hit the other high points, R600 has some rather interesting unique features. Aside from the tessellation unit, AMD has also included an audio processor on the hardware. It accepts audio streams and sends them out over the card's DVI port, where a special DVI-to-HDMI converter combines the audio with the video stream over HDMI. This is unique, as current DVI-to-HDMI converters carry only video. AMD also included a programmable AA resolve feature that allows its driver team to create new ways of filtering subsample data.
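To make "new ways of filtering subsample data" concrete: a conventional MSAA resolve averages a pixel's own subsamples with equal weight (a box filter), while a programmable resolve can apply a different weighting, for example one that also pulls in samples from neighboring pixels. The sketch below is a hypothetical illustration, not AMD's driver code; the tent weighting, the `radius` parameter, and the sample positions are all invented for the example.

```python
import math
from typing import List, Tuple

# A subsample: (dx, dy, value), where dx/dy are offsets from the pixel
# center in pixel units and value is a single color channel.
Sample = Tuple[float, float, float]


def box_resolve(samples: List[Sample]) -> float:
    """Conventional resolve: equal-weight average of a pixel's own subsamples."""
    return sum(value for _, _, value in samples) / len(samples)


def tent_resolve(samples: List[Sample], radius: float = 1.0) -> float:
    """Hypothetical custom resolve: weight each sample by a tent filter,
    falling off linearly with distance from the pixel center.
    Samples at or beyond `radius` contribute nothing."""
    total = 0.0
    weight_sum = 0.0
    for dx, dy, value in samples:
        weight = max(0.0, 1.0 - math.hypot(dx, dy) / radius)
        total += weight * value
        weight_sum += weight
    if weight_sum == 0.0:
        raise ValueError("no samples fall inside the filter radius")
    return total / weight_sum


if __name__ == "__main__":
    # Four hypothetical subsamples of an edge pixel: two covered, two not.
    own = [(-0.25, -0.25, 1.0), (0.25, -0.25, 1.0),
           (-0.25, 0.25, 0.0), (0.25, 0.25, 0.0)]
    # One hypothetical covered sample borrowed from the pixel to the right.
    neighbor = [(0.75, 0.0, 1.0)]
    print("box resolve:", box_resolve(own))
    print("tent resolve, own samples only:", round(tent_resolve(own), 6))
    print("tent resolve, with neighbor:", round(tent_resolve(own + neighbor), 6))
```

Because the pixel's own four samples sit at the same distance from its center, the tent filter weights them equally and matches the box result; adding the covered neighbor sample pulls the resolved value above 0.5.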

R600 also features an independent DMA engine that can handle moving and managing all memory to and from the GPU, whether over the PCIe bus or the local memory channels. Combined with huge amounts of memory bandwidth, this should really help applications that require large amounts of data. With DX10 supporting textures up to 8k x 8k, we are very interested in seeing these limits pushed in future games.
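To put the 8k x 8k limit in perspective, the sketch below computes the storage a single texture of that size needs. It assumes an uncompressed 4-byte-per-texel format (such as 8-bit RGBA) and, optionally, a full mipmap chain; compressed formats would be far smaller, so the numbers are illustrative only.

```python
# Storage for one 2D texture.
# Assumption: uncompressed 4 bytes per texel (e.g. 8-bit RGBA).

MIB = 1024 * 1024


def texture_bytes(width: int, height: int, bytes_per_texel: int = 4,
                  mipmaps: bool = False) -> int:
    """Bytes for the base level, or for every mip level down to 1x1."""
    total = 0
    w, h = width, height
    while True:
        total += w * h * bytes_per_texel
        if not mipmaps or (w == 1 and h == 1):
            break
        # Each mip level halves both dimensions, clamped at 1.
        w, h = max(1, w // 2), max(1, h // 2)
    return total


if __name__ == "__main__":
    base = texture_bytes(8192, 8192)
    full = texture_bytes(8192, 8192, mipmaps=True)
    print(f"8192 x 8192, base level only: {base / MIB:.0f} MiB")
    print(f"8192 x 8192, full mip chain: {full / MIB:.1f} MiB")
```

Under that assumption the base level alone is 256 MiB, and a full mip chain adds roughly a third more (about 341.3 MiB), which is why bandwidth and efficient data movement matter once games start using textures this large.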

That's enough of a general description to whet your appetite: let's dig down under the surface and find out what makes this thing tick.

86 Comments


TA152H - Monday, May 14, 2007

Fanboy? What a dork. I've had success with ATI, not with NVIDIA, and I know ATI stuff a lot better, so it's just easier for me to work with. It's not an irrational like or dislike. I bought one NVIDIA card and it was a nightmare. Plus, I'm not as sure they'll be around for very long as I am about ATI/AMD, although they had a good quarter, and AMD surely had a dreadful one.

Selling discrete video cards alone might get a lot more difficult with the integration of CPUs and GPUs.

yyrkoon - Monday, May 14, 2007

You are a fanboy, face it. 'I tried an nVidia card once . . .' How long ago was that? Who made the card? Did you have it configured properly? Once?! Details like this are important, and seemingly/conveniently left out. Anyhow, anyone claiming that nVidia cards are 'junk' has definite issues with assembling/configuring hardware. I say this because my current system uses an nVidia-based card, and it is 100% rock solid. 'Person between the chair and keyboard' rings a bell. Ask any Linux user why they refuse to use ATI cards in their system . . . I suppose you are also one of those people who claim ATI driver support is superior to nVidia's? If you have truly been using ATI products for 20 years, then you know ATI has one of the worst reputations on the planet for driver support (and while it may have improved, it is still not as good as nVidia's).

Yeah, anyhow, ATI and nVidia can both have problems with their hardware. It is not 100% down to their architecture; the OEMs releasing the products have a lot of effect here too. There are bad OEMs on both sides of the fence, and knowing who to stay away from is half the work when building a PC. That probably had a lot more to do with your alleged 'bad nVidia card', assuming you actually configured the card properly.

I also had a problem with an nVidia card once. I bought a brand new GF3 card about 7 years ago, and a few of my older games would not display properly with it. What did I do? I waited about a month for a new driver, and the problem was solved. I have also had issues with ATI cards, one of which drew too much power from the AGP slot and would cause the system to crash 1-2 times a day. This was a design issue/oversight on ATI's part (the card was made by Sapphire, who also makes ATI's own cards). What did I do? I replaced the card with an nVidia card, and the system has been stable since.

So you see, I too can skew things to make anyone look bad, and in the end it would only serve to make me look like the dork. But if you want to pay more for less, that is perfectly fine by me.

Pirks - Monday, May 14, 2007

I've had all my problems and crappy drivers (especially Linux ones) from ATI only, while nVidia software was always much better in my experience. Power-hungry noisy monsters made by whom? By ATI! As always :) Same shit as with their miserable, power-guzzling X1800/X1900 series.

Discrete video cards are not going away any time soon. Ever heard of integrated video used in games, besides ones from 2000, like old Quake 2? No? Then please continue your lovefest with ATI, but for me, it looks like I'll pass on them again this time. Since the Radeon 9800 Pro they have gone downhill, and they continue in that direction. They MAY become a decent vendor of budget-oriented integrated CPU/GPUs in the future, for all those office folks playing simple 2D office games, but real stuff? Nope, ATI is still out of the game for me. Let's see if they manage to come back with a reincarnation of R300 in the future.

Ironically, AMD CPUs, on the other hand, have the best price/performance ratio, so Intel won't see me as a customer. I wish ATI 3D chips were as good as AMD CPUs in that regard (and overclockers, please shut up: I'm not bothering to OC my rig because I don't enjoy benchmark numbers, I enjoy REAL stuff like games. Intel is out of the game for me as well, at least until their budget single-core Conroes are out.)

utube545 - Tuesday, May 22, 2007

Get a clue, you fucking cretin.

dragonsqrrl - Thursday, August 25, 2011

haha... lol, wow. facepalm.

dragonsqrrl - Thursday, August 25, 2011

Damn, you're a fail noob of an ATI fanboy. Time has not been kind to the HD2900XT, and now you sound more ridiculous than ever... lol.

yzkbug - Monday, May 14, 2007

Not a word about the new AVIVO HD and digital sound features?

DerekWilson - Wednesday, May 16, 2007

We mentioned this ... on the R600 overview page.

photoguy99 - Monday, May 14, 2007

First, to be clear: I do not condone the title of this article; there's no need to bring racism into this.

But my point is that NVidia can and will react by making the 8800GTS competitive with the R600 on performance per dollar.

Once the prices are comparable, why buy a more power hungry part (the ATI)?

This is one disadvantage they can't correct until the next respin.

DrMrLordX - Monday, May 14, 2007

Based on the benchmark results, the only reason I can see for getting 2900XTs is if a) you don't care about power consumption and b) you want to run a Crossfire rig at a lower cost of entry than dual 8800 GTXs or 8800 Ultras.

As others have said, some more benchmarks in mature DX10 titles might show who the real winner is performance-wise, and that holds true for multi-GPU scenarios as well.