NVIDIA GeForce 8600: Full H.264 Decode Acceleration

by Anand Lal Shimpi on April 27, 2007 4:34 PM EST - Posted in GPUs

NVIDIA has always been the underdog when it comes to video processing features on its GPUs. For years ATI had dominated the market, being the first of the two to really take video decode quality and performance into account on its GPUs. Although now defunct, ATI maintained a significant lead over NVIDIA when it came to bringing TV to your PC. ATI's All-in-Wonder series offered a much better time shifting/DVR experience than anything NVIDIA managed to muster, and NVIDIA's answers usually arrived too late on top of that. Obviously these days most third party DVR applications have been made obsolete by the advent of Microsoft's Media Center 10-ft UI, but when the competition was tough, ATI was truly on top.

While NVIDIA eventually focused on more than just 3D performance with its GPUs, NVIDIA always seemed to be one step behind ATI when it came to video processing and decoding features. More recently, ATI was first to offer H.264 decode acceleration on its GPUs at the end of 2005.

NVIDIA has remained mostly quiet throughout much of ATI's dominance of the video market, but for the first time in recent history, NVIDIA actually beat ATI to the punch on implementing a new video-related feature. With the launch of its GeForce 8600 and 8500 GPUs, NVIDIA became the first to offer 100% GPU-based decoding of H.264 content. While we can assume that ATI will offer the same in its next-generation graphics architecture, the fact of the matter is that NVIDIA was first and you can actually buy these cards today with full H.264 decode acceleration.

We've taken two looks at the 3D gaming performance of NVIDIA's GeForce 8600 series and come away relatively unimpressed, but for those interested in watching HD-DVD/Blu-ray content on their PCs, does NVIDIA's latest mid-range offering have any redeeming qualities?

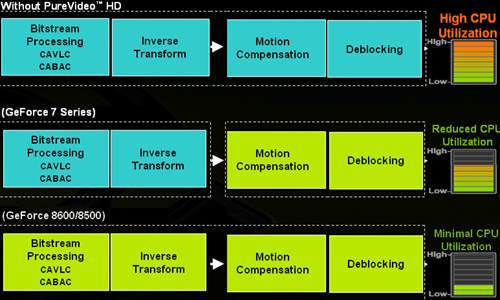

Before we get to the performance tests, it's important to have an understanding of what the 8600/8500 are capable of doing and what they aren't. You may remember this slide from our original 8600 article:

The blocks in green illustrate what stages in the H.264 decode pipeline are now handled completely by the GPU, and you'll note that this overly simplified decode pipeline indicates that the GeForce 8600 and 8500 do everything. Adding CAVLC/CABAC decode acceleration was the last major step in offloading H.264 processing from the host CPU, and it simply wasn't done in the past because of die constraints and transistor budgets. As you'll soon see, without CAVLC/CABAC decode acceleration, high bitrate H.264 streams can still eat up close to 100% of a Core 2 Duo E6320; with the offload, things get far more reasonable.

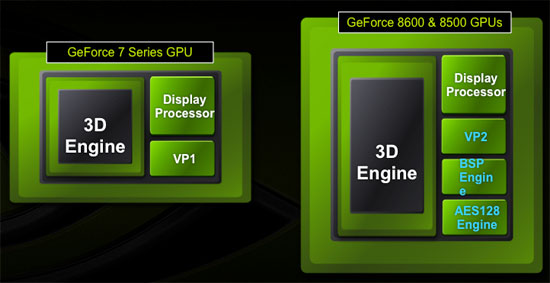

The GeForce 8600 and 8500 have a new video processor (that NVIDIA is simply calling VP2) that runs at a higher clock rate than its predecessor. Couple that with a new bitstream processor (BSP) to handle CAVLC/CABAC decoding, and these two GPUs can now handle the entire H.264 decode pipe. A third unit that wasn't present in previous GPUs also makes an appearance in the 8600/8500: an AES128 engine. The AES128 engine is simply used to decrypt the content sent from the CPU as per the AACS specification, which helps further reduce CPU overhead.

Note that the offload NVIDIA has built into the G84/G86 GPUs is hardwired for H.264 decoding only; you get none of the benefit for MPEG-2 or VC1 encoded content. Admittedly H.264 is the most strenuous of the three, but given that VC1 content is still quite prevalent among HD-DVD titles, acceleration for it would be nice to have. Also note that as long as your decoder supports NVIDIA's VP2/BSP, any H.264 content will be accelerated. For MPEG-2 and VC1 content, the 8600 and 8500 can only handle inverse transform, motion compensation and in-loop deblocking, and the rest of the pipe is handled by the host CPU; VP1 NVIDIA hardware only handles motion compensation and in-loop deblocking. ATI's current GPUs can handle inverse transform, motion compensation and in-loop deblocking, so they should in theory have lower CPU usage than the older NVIDIA GPUs on this type of content.
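To make the split between GPU and CPU work easier to follow, here is a minimal sketch that encodes only what the paragraph above states. The four stage names follow the simplified pipeline described in this article; the labels `bitstream_decode`, `VP2+BSP`, `VP1` and `ATI current`, and the helper `cpu_stages`, are hypothetical names chosen for illustration, not part of any NVIDIA or ATI API. Combinations the text does not describe (such as H.264 on VP1 hardware) are deliberately left out.

```python
# Simplified decode stages, in pipeline order, as named in the article.
# "bitstream_decode" is an illustrative label for the entropy decode step
# (CAVLC/CABAC in the H.264 case).
STAGES = (
    "bitstream_decode",
    "inverse_transform",
    "motion_compensation",
    "in_loop_deblocking",
)

# Stages handled on the GPU, per (hardware, codec), as stated in the text.
# Hardware labels are hypothetical shorthand, not official product names.
_NO_BITSTREAM = {"inverse_transform", "motion_compensation", "in_loop_deblocking"}
_MC_AND_DEBLOCK = {"motion_compensation", "in_loop_deblocking"}

GPU_STAGES = {
    ("VP2+BSP", "H.264"): set(STAGES),       # GeForce 8600/8500: full offload
    ("VP2+BSP", "MPEG-2"): _NO_BITSTREAM,
    ("VP2+BSP", "VC1"): _NO_BITSTREAM,
    ("VP1", "MPEG-2"): _MC_AND_DEBLOCK,      # older NVIDIA hardware
    ("VP1", "VC1"): _MC_AND_DEBLOCK,
    ("ATI current", "MPEG-2"): _NO_BITSTREAM,
    ("ATI current", "VC1"): _NO_BITSTREAM,
}


def cpu_stages(hardware, codec):
    """Return the stages left to the host CPU, in pipeline order."""
    gpu = GPU_STAGES[(hardware, codec)]
    return [stage for stage in STAGES if stage not in gpu]


if __name__ == "__main__":
    for (hardware, codec) in GPU_STAGES:
        remaining = cpu_stages(hardware, codec) or ["nothing"]
        print(f"{hardware:12s} {codec:7s} CPU handles: {', '.join(remaining)}")
```

Read this way, the only combination in which the CPU is left with none of the listed stages is H.264 on the 8600/8500; for MPEG-2 and VC1 the bitstream stage always stays on the CPU.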

It's also worth noting that the new VP2, BSP and AES128 engines are only present in NVIDIA's G84/G86 GPUs, which are currently only used on the GeForce 8600 and 8500 cards. GeForce 8800 owners are out of luck, but NVIDIA never promised this functionality to 8800 owners so there are no broken promises. The next time NVIDIA re-spins its high end silicon we'd expect to see similar functionality there, but we're guessing that it won't be for quite some time.

64 Comments


JarredWalton - Saturday, April 28, 2007

The peak numbers may not be truly meaningful other than indicating a potential for dropped frames. Average CPU utilization numbers are meaningful, however. Unlike SETI, there is a set amount of work that needs to be done in a specific amount of time in order to successfully decode a video. The video decoder can't just give up CPU time to lower CPU usage, because the content has to be handled or frames will be dropped.

The testing also illustrates the problem with ATI's decode acceleration on their slower cards, though: the X1600 XT is only slightly faster than doing all the work on the CPU in some instances, and in the case of VC1 it may actually add more overhead than acceleration. Whether that's due to ATI's drivers/hardware or the software application isn't clear, however. Looking at the WinDVD vs. PowerDVD figures, the impact of the application used is obviously not negligible at this point.

BigLan - Saturday, April 28, 2007

Does the 8600 also accelerate x264 content? It's looking like x264 will become the successor to xvid, so if these cards can, they'll be the obvious choice for HD-HTPCs.

I guess the main question would be if windvd or powerdvd can play x264. I suspect they can't, but nero showtime should be able to.

MrJim - Tuesday, May 8, 2007

Accelerating x264 content would be great but i dont know what the big media companies would think about that, maybe ATI or Nvidia will lead the way, hopefully.

Xajel - Saturday, April 28, 2007

I'm just asking why those enhancement are not in the higher 8800 GPU's ??

I know 8600 will be more used in HTPC than 8800, but it's just not a good reason to not include them !!

Axbattler - Saturday, April 28, 2007

Those cards came out 5 months after the 8800. Long enough for them to add the tech it seems. I'd expect them in the 8900 (or whatever nVidia name their refresh) though. Actually, it would be interesting to see if they add to the 8800 Ultra.

Xajel - Saturday, April 28, 2007

I don't expect Ultra to have them, AFAIK Ultra is just tweaked version of GTX with higher MHz for both Core and RAM...

I can expect it for my 7950GT successor

Spacecomber - Friday, April 27, 2007

I'm not sure I understand why Nvidia doesn't offer an upgraded version of their decoder software, instead of relying on other software companies to get something put together to work with their hardware.

thestain - Friday, April 27, 2007

http://www.newegg.com/product/product.asp?item=N82...

All this tech jock sniffing with the latest and greatest, but this old reliable is a better deal isn't it?

For watching movies.. for the ordinary non-owner of the still expensive hd dvd players and hd dvds... for standard definition content.. even without the nice improvements nvidia has made.. seems to me that the old tech still does a pretty good job.

What do you think of this ole 6600 compared to the 8600 in terms of price paid for the performance you are going to see and enjoy in reality?

DerekWilson - Saturday, April 28, 2007

the key line there is "if you have a decent cpu" ... which means c2d e6400.

for people with slower cpus, the 6600 will not cut it and the 8600gt/8500gt will be the way to go.

the aes-128 step still needed to be done on older hardware (as it needs to decrypt the data stream sent to it by the CPU), but using dedicated hardware rather than the shader hardware to do this should help save power or free up resources for other shader processing (post processing like noise redux, etc).

Treripica - Friday, April 27, 2007

I don't know if this is too far off-topic, but what PSU was used for testing?