GeForce 8800 Roundup: The Best of the Best

by Josh Venning on November 13, 2006 11:04 AM EST - Posted in GPUs

Power Consumption

Power consumption matters when evaluating a graphics card, and because these 8800s are such high performers, they draw a lot of power as well.

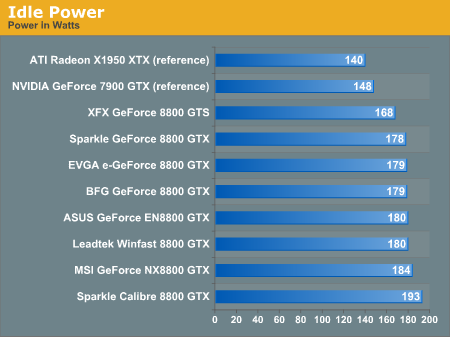

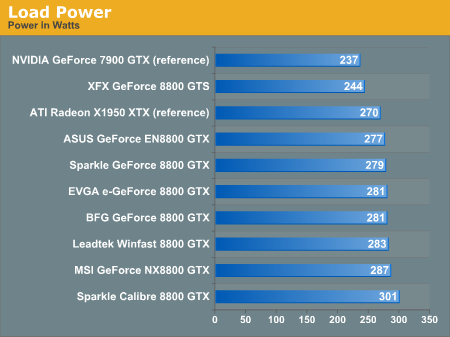

We tested power consumption for these parts the same way we usually do, by measuring the total power draw of the system with each card installed in two different states. The first state is with the system idle (no other programs running) and the second is with the GPU under stress testing. We use a few of the benchmarks from 3DMark06 to stress the GPUs and find their power consumption under load. Because we are measuring the wattage of the entire system and not just the cards, these numbers give only a general idea of how much power the cards themselves draw.
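Since the rest of the testbed stays the same, the useful quantity in whole-system readings is the difference between cards rather than the absolute wattage. The following is a minimal sketch of that comparison, not the tooling used for this review. The function name `power_deltas`, the card labels, and every wattage value are hypothetical placeholders chosen for illustration; they are not taken from the graphs.

```python
def power_deltas(readings, baseline):
    """Return per-card (idle, load) differences in watts versus a baseline card.

    readings maps a card label to (idle_watts, load_watts), both measured
    for the whole system, so only the differences are attributable to the card.
    """
    base_idle, base_load = readings[baseline]
    return {
        card: (idle - base_idle, load - base_load)
        for card, (idle, load) in readings.items()
    }


# Hypothetical whole-system readings in watts (idle, load).
# These are illustrative placeholders, NOT the article's measurements.
readings = {
    "card_a": (180, 280),
    "card_b": (184, 286),
    "card_c": (195, 304),
}

deltas = power_deltas(readings, baseline="card_a")
for card, (d_idle, d_load) in deltas.items():
    print(f"{card}: {d_idle:+d} W idle, {d_load:+d} W load vs. baseline")
```

The baseline card always comes out at zero, and every other entry reads directly as "how much more the system draws with this card installed".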

Looking at the data, we immediately see that the Sparkle Calibre 8800 GTX draws the most power in both graphs. This was expected because of its Peltier cooler. Most of these cards landed around 180 Watts with the system idle and 280 Watts with the system under load. The XFX GeForce 8800 GTS naturally drew the least of the bunch, which makes sense given that it's the lone GTS in a roundup of primarily GTX cards. Among the 8800 GTXs, though, the ASUS EN8800 GTX drew the least power under load, and the reference design Sparkle 8800 GTX pulled the least while idle.

34 Comments

JarredWalton - Monday, November 13, 2006 - link

Derek already addressed the major problem with measuring GPU power draw on its own. However, given similar performance we can say that the cards are the primary difference in the power testing, so you get GTX cards using 1-6W more power at idle, and the Calibre uses up to 15W more. At load, the power differences cover a 10W spread, with the Calibre using up to 24W more.

If we were to compare idle power with IGP and an 8800 card, we could reasonably estimate how much power the card requires at idle. However, doing so at full load is impossible without some customized hardware, and such a measurement isn't really all that meaningful if the card is going to make the rest of the system draw more power anyway. To that end, we feel the system power draw numbers are about the most useful representation of power requirements. If all other components are kept constant on a testbed, the power differences we show should stay consistent as well. How much less power would an E6400 with one of these cards require? Probably somewhere in the range of 10-15W at most.

IKeelU - Monday, November 13, 2006 - link

Nice roundup. One comment about the first page, last paragraph:

"HDMI outputs are still not very common on PC graphics cards and thus HDCP is supported on each card."

Maybe I'm misinterpreting, but it sounds like you are saying that HDCP is present *instead* of HDMI. The two are independent of each other. HDMI is the electrical/physical interface, whereas HDCP is the type of DRM with which the information will be encrypted.

Josh Venning - Monday, November 13, 2006 - link

The sentence has been reworked. We meant to say HDCP is supported through DVI on each card. Thanks.

TigerFlash - Monday, November 13, 2006 - link

Does anyone know if the EVGA WITH ACS3 is what is on retail right now? EVGA's website seems to be the only place that distinguishes the difference. Everyone else is just selling an "8800 GTX."

Thanks.

Josh Venning - Monday, November 13, 2006 - link

The ACS3 version of the EVGA 8800 GTX we had for this review is apparently not available yet anywhere, and we couldn't find any info on their website about it. Right now we are only seeing the reference design 8800 GTX for sale from EVGA, but the ACS3 should be out soon. The price for this part may be a bit higher, but our sample has the same clock speeds as the reference part.

SithSolo1 - Monday, November 13, 2006 - link

They have two different heat sinks so I assume one could tell by looking at the product picture. I know a lot of sites use the product picture from the manufacturer's site but I think they would use the one of the ACS3 if that's the one they had. I also assume they would charge a little more for it.

imaheadcase - Monday, November 13, 2006 - link

Would love to see how these cards perform in Vista; even RC2 would be great.

I know the graphics drivers for NVIDIA are terrible, I mean terrible, in Vista atm, but when they at least get a final out for the 8800, an RC2 or Vista final roundup vs. WinXP SP2 would be great :D

DerekWilson - Monday, November 13, 2006 - link

Vista won't be that interesting until we see a DX10 driver from NVIDIA, which we haven't yet and don't expect for a while. We'll certainly test it and see what happens though.

imaheadcase - Monday, November 13, 2006 - link

Oh, the current drivers for the 8800 beta do not support DX10? Is that what the new detX drivers I read about NVIDIA working on are for?

peternelson - Saturday, November 25, 2006 - link

I'd be interested to know if the 8800 drivers even support SLI yet? The initial ones I heard of did not.