NVIDIA's GeForce 8800 (G80): GPUs Re-architected for DirectX 10

by Anand Lal Shimpi & Derek Wilson on November 8, 2006 6:01 PM EST - Posted in GPUs

Shader Model 4.0 Enhancements

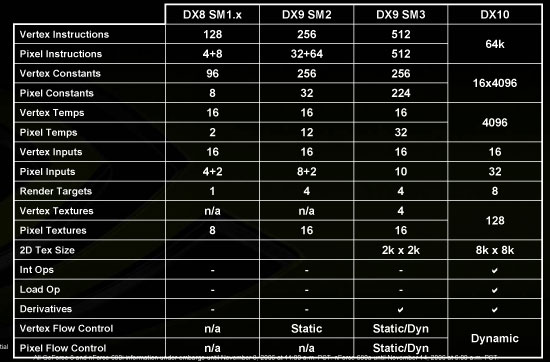

Aside from defining the capabilities and instructions that the different shaders must support, Microsoft also specifies attributes like precision, number of instructions that can make up a shader program, and the number of registers available to the programmer. Here's a table comparing DX9 and DX10 shader models.

Along with these changes, Microsoft has made some lower-level adjustments. Until now, shaders have been exclusively floating point. This means that operations like memory addressing and array indexing (which use integer values) must be done carefully if interpolation is to be avoided. With DX10, integer and bitwise operations have been added to the mix, so programmers can make use of traditional data structures and memory operations. Increasing the flexibility of the hardware and letting programmers employ methods commonly used on more general-purpose hardware should make for a better platform on which developers can create the effects they desire.
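To make the integer point a little more concrete, here is a minimal Python sketch (plain CPU code, not shader code, and not taken from the DX10 spec). It shows one way float-only indexing can go wrong, since a 32-bit float cannot represent every integer above 2^24, and how shifts and masks pack four 8-bit channels into a single word. The helper names `to_float32`, `pack_rgba8`, and `unpack_rgba8` are hypothetical and exist only for this illustration.

```python
import struct


def to_float32(x):
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def pack_rgba8(r, g, b, a):
    """Pack four 8-bit channels into one 32-bit word using shifts and masks."""
    return (r & 0xFF) | ((g & 0xFF) << 8) | ((b & 0xFF) << 16) | ((a & 0xFF) << 24)


def unpack_rgba8(word):
    """Recover the four 8-bit channels from a packed 32-bit word."""
    return tuple((word >> shift) & 0xFF for shift in (0, 8, 16, 24))


if __name__ == "__main__":
    # As a float32, index 2**24 + 1 collapses onto its neighbour 2**24.
    print(to_float32(16777217.0))
    # As integers, the same values stay distinct and can be packed exactly.
    word = pack_rgba8(255, 128, 0, 64)
    print(hex(word), unpack_rgba8(word))
```

With floats only, the packing step would have to be faked with multiplies, floors, and careful rounding; with integer and bitwise instructions it is a direct translation of what a CPU programmer would write.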

Floating point operations have also been enhanced, as Microsoft has placed tighter requirements on how the numbers are handled. IEEE 754 is the standard that defines floating point formats, rounding, and the handling of exceptional cases. Sticking to such a standard lets programmers count on operations being consistent across different types of hardware. Because Microsoft hasn't been as strict in the past, we've seen some issues where ATI and NVIDIA don't provide exactly the same result due to rounding and accuracy differences. This time around, DX10's requirements come very close to IEEE 754. Certain aspects of IEEE 754 are not desirable in graphics hardware; these have to do with overflow, underflow, and denorms. The special results that are usually returned in these cases under the IEEE specification aren't as useful as clamping the value of a calculation to either the smallest or the largest possible result. With DX10, we do see the addition of NaN and infinity as possible results, and along with a better specification of accuracy and precision, those interested in general purpose computing on graphics processors (GPGPU) should be very happy.
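The special cases mentioned above are easier to see with numbers. The following Python sketch emulates 32-bit floats on the CPU; it assumes, as one plausible reading of the clamping described above, that underflow is handled by flushing denormal results to zero while overflow still produces infinity. The names `to_float32` and `flush_denorm` are hypothetical helpers, not part of any DirectX API.

```python
import math
import struct

FLT_MIN_NORMAL = 2.0 ** -126  # smallest positive normal float32 value


def to_float32(x):
    """Round to float32; values too large for the format become infinity."""
    try:
        return struct.unpack("<f", struct.pack("<f", x))[0]
    except OverflowError:
        return math.copysign(math.inf, x)


def flush_denorm(x):
    """Assumed GPU-style behaviour: denormal results are replaced by signed zero."""
    y = to_float32(x)
    if y != 0.0 and abs(y) < FLT_MIN_NORMAL:
        return math.copysign(0.0, y)
    return y  # normals, infinities, and NaN pass through unchanged


if __name__ == "__main__":
    print(to_float32(1e-40))    # strict IEEE 754: a tiny nonzero denormal
    print(flush_denorm(1e-40))  # assumed graphics behaviour: flushed to zero
    print(to_float32(1e40))     # overflow produces infinity
    print(to_float32(math.inf) - to_float32(math.inf))  # infinity minus infinity is NaN
```

For GPGPU work the practical upshot is that NaN and infinity can now be tested for explicitly, rather than relying on whatever a given vendor's hardware happened to return.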

What are Geometry Shaders?

A whole new shader type has been added this time around as well: geometry shaders. These shaders are similar to vertex shaders in that they operate on geometry before it has been projected onto screen space, where pixel processing can take over. Rather than operating on single vertices, however, geometry shaders operate on larger blocks: meshes. These meshes (made up of vertices) can be manipulated in a myriad of ways. Working with an object containing several vertices gives programmers the ability to manipulate those vertices in relation to each other more easily. Vertices can even be added to or removed from a mesh. The ability to write out data from the geometry shaders (rather than simply sending it on for pixel processing) will also allow software to reprocess vertices that have been added or altered by the geometry shaders. As an extension to geometry instancing, we will have more flexibility in manipulating instanced geometry in order to avoid the cut and paste look. All of these new features mean we should see things like particle systems move completely off of the CPU and onto the GPU, and geometry may begin to play a larger role in graphics in the future.
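As a rough illustration of adding and removing vertices, here is a small Python sketch that mimics what a geometry shader stage might do for a particle system: each input point is expanded into a four-vertex quad, and points with no size are dropped. This is CPU-side emulation only; the function names `expand_particle` and `run_geometry_stage` and the (center, half_size) input layout are assumptions made for the example.

```python
def expand_particle(center, half_size):
    """Emulate one geometry-shader invocation: one point in, one quad strip out."""
    if half_size <= 0.0:
        return []  # emitting nothing removes the primitive from the pipeline
    x, y, z = center
    # Four vertices in triangle-strip order, forming a screen-aligned quad.
    return [
        (x - half_size, y - half_size, z),
        (x + half_size, y - half_size, z),
        (x - half_size, y + half_size, z),
        (x + half_size, y + half_size, z),
    ]


def run_geometry_stage(points):
    """Run the emulated stage over every input point and collect the output strips."""
    strips = []
    for center, half_size in points:
        strip = expand_particle(center, half_size)
        if strip:
            strips.append(strip)
    return strips


if __name__ == "__main__":
    particles = [((0.0, 0.0, 0.0), 1.0), ((5.0, 5.0, 5.0), 0.0)]
    for strip in run_geometry_stage(particles):
        print(strip)
```

The CPU only has to supply one point per particle; the extra vertices are generated, or discarded, further down the pipeline.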

In the beginning, increasing the number of triangles that could be rendered in a scene was a huge factor in performance. After a certain point, software, CPUs, buses, and overhead in general started to get in the way of how much difference adding more triangles made. Rather than having millions of really tiny triangles moving around, it became much faster to use textures to simulate geometry. Currently, per-pixel lighting combined with uncompressed normal maps does a great job of simulating a whole lot of geometry at the expense of a lot of pixel power. With the new 8k*8k texture sizes and other DX10 enhancements, there is a lot of potential for using pixel processing to simulate geometry even better. But the combination of unified shaders and geometry shaders in new hardware should start to give developers a whole lot more flexibility in how they approach the problem of fine detail in geometry.
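For readers who haven't seen how a texture can stand in for geometry, the Python sketch below lights a perfectly flat surface using a tiny normal map. It assumes the common convention of storing normals as 8-bit RGB values mapped from [0, 255] to [-1, 1] and uses a simple Lambert diffuse term; the function names are hypothetical and the example is not tied to any particular game or API.

```python
import math


def decode_normal(texel):
    """Map an 8-bit RGB texel to a unit-length normal (assumed [0,255] -> [-1,1])."""
    n = [c / 255.0 * 2.0 - 1.0 for c in texel]
    length = math.sqrt(sum(c * c for c in n))
    return [c / length for c in n]


def diffuse(normal, light_dir):
    """Lambert term: clamped dot product of the unit normal and unit light direction."""
    return max(0.0, sum(n * l for n, l in zip(normal, light_dir)))


def shade_flat_quad(normal_map, light_dir):
    """Light every pixel of a flat quad using the per-pixel normal from the map."""
    return [[diffuse(decode_normal(t), light_dir) for t in row] for row in normal_map]


if __name__ == "__main__":
    # One texel facing the viewer, one tilted almost fully sideways.
    normal_map = [[(128, 128, 255), (255, 128, 128)]]
    print(shade_flat_quad(normal_map, light_dir=(0.0, 0.0, 1.0)))
```

The two pixels sit on the same flat polygon yet receive very different lighting, which is how bumps and grooves appear without any extra triangles. The cost is one decode and one dot product per pixel, which is the "pixel power" trade-off described above.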

111 Comments


Sharky974 - Thursday, November 9, 2006 - link

The new DX10 features stuff was captivating at first, but quickly grew tiresome and needlessly complex. The IQ comparisons are the same thing; some simplicity is needed here. Tell us in a nutshell what looks better and why. The mouse over pictures are well nigh useless as well, and all look like crap. Whatever needs to be changed to get the IQ point across needs to be changed already; I'm guessing 200 zoom is a problem for starters.

Then whose bright idea was it to only test one resolution through the whole article?

Then whose bright idea was it to dedicate just as many graphs as performance, one per game, to not only power draw, but the even more useless performance per watt? Meaning 66% of your data graphs, in an article about a paradigm-changing, long-anticipated, brand new GPU, are related to the power usage of the card. Are you electric workers monthly.com now?

I am very surprised more of the comments weren't negative, this review was a total failure.

And yeah, what's with all the non-standard resolution testing? All the big sites like H, Anand, and FS go round and round talking about the incredible depths they go to in order to get to the bottom of real world performance as it relates to the real world, average user, and then you guys use stupid resolutions like 1280X960 (FS uses that particular one) that nobody on earth uses regularly! It's really, really stupid. Hell, for that matter, nobody uses 1600X1200 or any non-LCD native res anymore either, yet those are all staples of any review, and so these "real world" articles aren't very real world at all. But that's somewhat of a tangent issue, and I actually don't mind a lot of different resolutions tested, just as long as the big common ones are hit (which is not always the case).

DerekWilson - Friday, November 10, 2006 - link

I'm always working on bringing down the complexity of my explanations. It's one of my weak points as a writer. It's difficult for me to take something and present it at a high level that doesn't reflect exactly what the thing is. Analogies are great -- I like them -- but I have a hard time using them because I can't ever think of analogies that are accurate enough.

Any suggestions you have for helping me explain things completely, accurately, effectively, and (especially) in the most straightforward manner possible are very welcome.

As for the IQ comparisons -- these were much more simplified than I had intended (because Anand told me we couldn't do rollovers with 40 images on one page -- it would load too slow). This is our version of putting things in a nutshell. I could get to the point faster though --

IQ:

gamma correct aa is great for edges, but it causes problems with thin lines and transparency/adaptive AA making textures look mushy. transparency/adaptive aa are great but have a large performance hit -- except in 8800 which keeps these features playable and offers higher IQ. CSAA is great at bringing higher AA levels to edges, but the loss of Z data at the sub-pixel level makes it less effective at solving the thin line problem than equivalent MSAA modes. The roll overs illustrate all this.

That's as simple as I can make it -- I hope it helps.

We did not only test at one resolution -- in every game we tested at 1600x1200, 1920x1440, and 2560x1600. In Oblivion we tested at 1280x1024 as well.

All our resolution data was in the last graph on each page -- resolution scaling. There are two graphs per page on performance. As you can see, at resolutions below 2560x1600, the 8800 GTX is almost overkill.

1600x1200 is a standard LCD panel resolution and has been for quite some time. It's actually quite affordable now as well. 1280x1024 (while popular) is often too low to matter in a high end performance analysis piece (and where it did matter we tested it). 1920x1440 is a 4:3 resolution that will give 1920x1200 panel owners a very good idea of performance (difference is usually under 5% in many games). 2560x1600 is a standard resolution for 30" LCD panels.

I can understand being upset if you missed the performance data at other resolutions, but it seems like the rest of your complaints are that we put too much data in the article. I doubt this will change in the future, but is there anything else we could have done to make this article better? We are very willing to listen to feedback, especially on articles as big as this.

Thanks,

Derek Wilson

flexy - Friday, November 10, 2006 - link

>>>complaints are that we put too much data in the article. I doubt this will change in the future,

>>>

i doubt you can make it RIGHT for everyone...however i share the opinion w/ MOST that it is an excellent review. TOO much data is seldom bad, NOT on a site where you can expect geeks and nerds digging every bit of information :)

I remember times when reviews were FAR less detailed...and what can be better than going in-depth into AA/AF modes, showing their difference in detail ? I think this was right on and i value such in-depth coverage !

The DX10 coverage MAYBE was "too much info" for some...but then legitimate IMHO. We're talking about totally new h/w architecture, totally new and revamped DX API and the first hardware supporting it..so it was definitely a good place to cover this.

Also...you always have the option to skip parts of a review...and the MORE detailed it is...the more it is a helpful resource (also later) to come back and read up. You don't need to comprehend every bit of information at once, but it's good to know it's there.

my $0.2

jiulemoigt - Thursday, November 9, 2006 - link

The first really big issue is that a poly can have more than one color on it, due to textures, subsurface scattering, displacements, bump maps, normal maps, occlusion passes, specular highlights, transparency, and a few others I cannot think of off the top of my head; you could probably find out just by asking in any cg forum like cgtalk or any dev who has worked with a professional 3d package. That being said it may have confused people to try and explain how it really works.

The other issue is to deal with gamma correct AA, maybe my monitor is showing a way different image but I'm not really sure how you can even compare

http://images.anandtech.com/reviews/video/NVIDIA/G...

http://images.anandtech.com/reviews/video/NVIDIA/G...

as the light is highlighting the buildings from two different directions in the images: in the nvidia image it is coming from the left and behind the buildings, and in the ati image it is coming from the right and about midway down the image in front of the little building,

though a question that should be asked: what time of day is it supposed to be? The nvidia looks like dusk, and the ati looks blown out even for high noon, though in the one above, which seems to be the same time of day, the nvidia is blown out and the ati is shadowing correctly... really odd for the images, which suggests that some other filter is causing the issue on both cards, like hdr or something else.

DerekWilson - Thursday, November 9, 2006 - link

Yes a poly can have more than one color on it, and I agree our explanation could have been better ... but it is a difficult topic to talk about.

The whole basis of multisample AA relies on the assumption that the color of a poly *within one pixel* will not vary significantly. Of course, this is not always true. This is, in fact, the reason supersample AA does make a difference -- it takes into account the actual color of the pixel at the position of the sub-pixel. This is also why it's so much more expensive.

I didn't mean to imply that an entire poly must have only one color. But it's hard to talk about MSAA without pointing out the fact that the algorithm assumes one color per pixel per poly (calculated at the pixel center in most cases).
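Here is a minimal Python sketch of that difference for a single pixel. It assumes a hypothetical ordered-grid 4x sample pattern and a polygon that covers everything to the left of a vertical edge; real hardware uses other sample patterns and handles edge pixels more carefully, so treat it as an illustration of the one-color-per-pixel assumption rather than how any particular GPU works.

```python
# Hypothetical ordered-grid 4x sample positions inside a pixel.
SAMPLE_OFFSETS = [(0.25, 0.25), (0.75, 0.25), (0.25, 0.75), (0.75, 0.75)]


def covered(x, y, edge_x):
    """The polygon covers everything to the left of a vertical edge at x = edge_x."""
    return x < edge_x


def resolve_msaa(px, py, shade, edge_x, background=0.0):
    """MSAA: shade once at the pixel center, reuse that color for each covered sample."""
    center_color = shade(px + 0.5, py + 0.5)
    total = 0.0
    for ox, oy in SAMPLE_OFFSETS:
        total += center_color if covered(px + ox, py + oy, edge_x) else background
    return total / len(SAMPLE_OFFSETS)


def resolve_ssaa(px, py, shade, edge_x, background=0.0):
    """SSAA: run the shader separately at every covered sample position."""
    total = 0.0
    for ox, oy in SAMPLE_OFFSETS:
        x, y = px + ox, py + oy
        total += shade(x, y) if covered(x, y, edge_x) else background
    return total / len(SAMPLE_OFFSETS)


def flat(x, y):
    """A polygon whose color does not vary within the pixel."""
    return 1.0


def stripes(x, y):
    """A polygon whose color changes within the pixel (half-pixel-wide stripes)."""
    return 1.0 if (x % 1.0) < 0.5 else 0.0


if __name__ == "__main__":
    # Flat color, edge through the middle of the pixel: both methods agree.
    print(resolve_msaa(0, 0, flat, edge_x=0.5), resolve_ssaa(0, 0, flat, edge_x=0.5))
    # Varying color, pixel fully covered: only SSAA sees the variation.
    print(resolve_msaa(0, 0, stripes, edge_x=2.0), resolve_ssaa(0, 0, stripes, edge_x=2.0))
```

Note that the SSAA path calls the shader four times per pixel where the MSAA path calls it once, which is where the extra cost comes from.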

We did enable HDR, but we tried our hardest to take the screenshots at exactly the same amount of time after loading the scene (Valve's HDR uses dynamic exposure which does change saturation over time and with light level coming into the camera).

While this would impact general image comparison, it doesn't impact the effect of gamma correct AA on thin lines (which is what we were trying to show).

Thanks for the feedback -- if there's anything you can add to help us be more specific in our description, we would certainly appreciate it. We would like to avoid simply leaving details out -- we'd like to learn how to better impart knowledge.

Nimbo - Thursday, November 9, 2006 - link

This must be the first GPU article that does not derive in a flame war between ATI and Nvidia fanboys...

flexy - Thursday, November 9, 2006 - link

i actually don't care. I look at performance and comparisons, and then choose what card to get :) Although w/ ATI for years already.

If one card, however, has some substantial advantage over another, i'll gladly point that out and also gladly debate with others why i'd prefer card X over Y.

That's the difference between a fanboy and an enthusiast, i think. As long as i can back up statements w/ facts instead of just defending a "brand".

the other "problem" is really that same gen cards USUALLY are pretty much on par performance wise...so debating/defending brand X over Y does make as much sense as defending ferrari over lamborghini :)

But then..if we wouldn't do that and even discuss the "littlest" details and have lengthy conversations on forums eg. WHICH AA method is better and why...and why 5 FPS there are better...and/or why this AF method is better than the other...it would be pretty boring.

I mean we're hardware-enthusiasts, and gfx-cards are (IMHO) the most interesting component in a PC :)

DigitalFreak - Thursday, November 9, 2006 - link

I thought we were done with the days of >$499 single GPU cards after the 7900GTX launch. Guess not.

VooDooAddict - Thursday, November 9, 2006 - link

Great article.

Now I just need to figure out if a 8800GTX will fit in a mATX UltraFly Case.

Araemo - Thursday, November 9, 2006 - link

Everyone is repeating Microsoft's claim that dx10 will be Vista-only.

The Inq (I know, I know....) reported here (http://www.theinquirer.net/default.aspx?article=35...) that there will be a DirectX '9.0L' for XP that supports the new rendering features of DirectX10, but without the new virtualization/driver model improvements.