NVIDIA's GeForce 8800 (G80): GPUs Re-architected for DirectX 10

by Anand Lal Shimpi & Derek Wilson on November 8, 2006 6:01 PM EST - Posted in GPUs

G80: A Mile High Overview

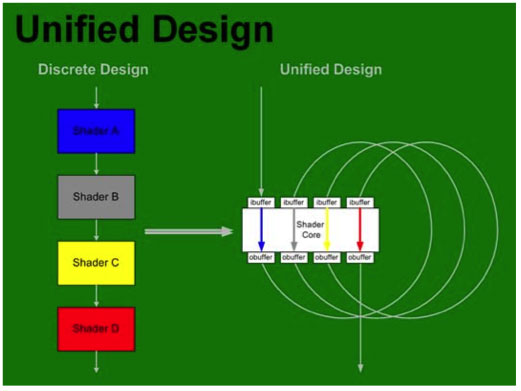

Now that we know a little more about the requirements and direction of DX10, we can take a deeper look at where NVIDIA has decided to go with the architecture of G80. We will be seeing a completely new design based around a unified shader architecture. While DX10 doesn't require a unified architecture, it certainly does make a lot of sense to move in that direction.

Inside G80, vertex, geometry, and pixel shaders, along with other workloads (more on this later), all run on the same set of execution resources. To make this happen, the shader core needed to become more general purpose and suitable for multiple usage scenarios. This is much like what we are used to seeing on a CPU, and as time moves on we expect these similarities to increase from both the CPU and GPU sides. The design NVIDIA has come up with, while very complex and powerful, is quite elegant. Here's a look at the block diagram for G80:

The architecture uses thread management hardware to dispatch different types of instructions onto the shader core. As vertices complete, their output can be used as input to geometry shaders back at the "top" of the shader core. Geometry shader output is then used as input to pixel shaders. Here's a quick conceptual representation of what we are talking about:
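To make that dataflow a little more concrete, here is a toy Python model of our own. It is a sketch under stated assumptions, not a description of NVIDIA's thread scheduler: the stage names, the single shared work queue, the one-output-per-input rule (a real geometry shader can emit zero or many primitives) and the processor counts are all hypothetical. The point is only that when every processor can run every stage, no unit sits idle waiting for work of "its" type.

```python
from collections import deque
from typing import Dict

# Hypothetical stage ordering: vertex output feeds geometry, geometry feeds pixel.
# Assumption: every work item produces exactly one item for the next stage.
NEXT_STAGE = {"vertex": "geometry", "geometry": "pixel", "pixel": None}


def run_unified_core(vertex_count: int, processors: int) -> int:
    """Cycles needed when any processor may run any stage (one shared queue)."""
    queue = deque(("vertex", i) for i in range(vertex_count))
    cycles = 0
    while queue:
        cycles += 1
        # Dispatch up to `processors` items this cycle, whatever their type.
        batch = [queue.popleft() for _ in range(min(processors, len(queue)))]
        for stage, item in batch:
            nxt = NEXT_STAGE[stage]
            if nxt is not None:
                # Results re-enter at the "top" of the core as next-stage input.
                queue.append((nxt, item))
    return cycles


def run_fixed_core(vertex_count: int, units: Dict[str, int]) -> int:
    """Cycles needed when each stage owns a fixed number of dedicated units."""
    queues = {stage: deque() for stage in NEXT_STAGE}
    queues["vertex"].extend(range(vertex_count))
    cycles = 0
    while any(queues.values()):
        cycles += 1
        produced = []
        for stage, q in queues.items():
            # A stage can only use its own units, even if other stages sit idle.
            for _ in range(min(units[stage], len(q))):
                item = q.popleft()
                if NEXT_STAGE[stage] is not None:
                    produced.append((NEXT_STAGE[stage], item))
        # Outputs become visible to the next stage on the following cycle.
        for stage, item in produced:
            queues[stage].append(item)
    return cycles


if __name__ == "__main__":
    # Same total of four processors in both configurations.
    print("unified:", run_unified_core(8, processors=4))
    print("fixed 1/1/2:", run_fixed_core(8, {"vertex": 1, "geometry": 1, "pixel": 2}))
```

With eight vertices and four processors in each configuration, the unified model finishes in 6 cycles and the fixed 1/1/2 split in 10, because the fixed design spends cycles filling its pipeline while some units wait. A different split or workload would give different numbers; the comparison only illustrates the load-balancing argument for a unified core.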

The sheer size of G80 is absolutely amazing. While NVIDIA wouldn't disclose exact die sizes, let's look at the facts. The G80 chip is made up of 681 million transistors, more than a single-core Itanium 2 or the recently launched Kentsfield, yet it is manufactured on an aging 90nm process. As a reference point, ATI's Radeon X1900 XTX, based on the R580 GPU, was also built on a 90nm process but featured only 384 million transistors. NVIDIA's previous high-end GPU, the G71-based GeForce 7900 GTX, was built on the same 90nm process and used only 278 million transistors. Any way you slice it, this is one huge chip. Architecting such a massive GPU has taken NVIDIA a great deal of time and money: four years and $475M, to be exact. The previous record was almost three years at a lower cost, but NVIDIA wouldn't tell us which GPU that was.

Intel's Quad Core Kentsfield on top, G80 on bottom
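For a sense of scale, the Python snippet below simply divides the transistor counts quoted above (in millions). The dictionary keys are our own labels; no other data is involved.

```python
# Transistor counts in millions, as quoted in the text.
TRANSISTORS_M = {
    "G80": 681,   # GeForce 8800
    "R580": 384,  # Radeon X1900 XTX
    "G71": 278,   # GeForce 7900 GTX
}


def ratio_vs_g80(chip: str) -> float:
    """How many times more transistors G80 has than `chip`."""
    return TRANSISTORS_M["G80"] / TRANSISTORS_M[chip]


for name in ("R580", "G71"):
    print(f"G80 vs {name}: {ratio_vs_g80(name):.2f}x")
```

It prints 1.77x for R580 and 2.45x for G71, so G80 carries nearly two and a half times the transistors of NVIDIA's previous flagship on the same 90nm process.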

Despite very high clock speeds on the die and a ridiculous 681 million transistor count, power consumption of NVIDIA's G80 is quite reasonable given its target; on average, a G80 system uses about 8% more power than one outfitted with ATI's Radeon X1950 XTX.

You really start to get a sense of how much of a departure G80 is from previous architectures when you look at the shader core. Composed of 128 simple processors, called Stream Processors (SPs), the G80 shader core runs at a very high 1.35GHz on the highest-end G80 SKU. We'll get into exactly what these stream processors are on the coming pages, but NVIDIA basically put together a wide array of very fast, specialized, but simple processors. In a sense, G80's shader core looks much like Cell's array of SPEs, but the SPs here are not nearly as independent as the SPEs in Cell.
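As a back-of-the-envelope illustration of what 128 SPs at 1.35GHz means, the sketch below multiplies the two figures. It assumes, purely for illustration, that each SP issues exactly one scalar instruction per clock; real throughput depends on the instruction mix and on details covered later in the article, so treat the result as a rough estimate under that assumption rather than an NVIDIA specification.

```python
def peak_scalar_issue_rate(num_sps: int = 128, clock_hz: int = 1_350_000_000) -> int:
    """Scalar instructions per second, ASSUMING each SP issues one per clock."""
    return num_sps * clock_hz


rate = peak_scalar_issue_rate()
print(f"{rate / 1e9:.1f} billion scalar instructions per second")
```

Under that one-per-clock assumption, this works out to 172.8 billion scalar instruction issues per second across the whole shader core.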

Running the shader core at up to 1.35GHz required NVIDIA to borrow a few pages from Intel's book. The SPs are fairly deeply pipelined and, as you'll soon see, can only operate on scalar values; by simplifying the processors and lengthening their pipelines, NVIDIA was able to hit G80's aggressive clock targets. NVIDIA employed one other CPU-like trick to make such a shader core possible: custom logic and layout.
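Because the SPs only operate on scalar values, a shader's vector math has to be broken into per-component operations. The Python sketch below is our own illustration of that idea, not NVIDIA's instruction set: the function names are hypothetical, and the utilization helper assumes an idealized 4-wide vector unit that spends a full issue slot on every operation with no co-issue, which simplifies how real vector designs behaved.

```python
from typing import List, Sequence, Tuple


def scalar_mad(x: float, y: float, z: float) -> float:
    """One scalar multiply-add: the only operand width a scalar SP handles here."""
    return x * y + z


def vector_mad_on_scalar_sp(a: Sequence[float], b: Sequence[float],
                            c: Sequence[float]) -> Tuple[List[float], int]:
    """Split an n-component MAD into n scalar MADs; return (result, ops issued)."""
    result = [scalar_mad(x, y, z) for x, y, z in zip(a, b, c)]
    return result, len(result)


def vec4_lane_utilization(op_widths: Sequence[int]) -> float:
    """Fraction of busy lanes if every op occupies a full 4-wide slot (no co-issue)."""
    if not op_widths:
        raise ValueError("need at least one operation")
    return sum(op_widths) / (4 * len(op_widths))


if __name__ == "__main__":
    # A 4-component MAD becomes four independent scalar MADs.
    print(vector_mad_on_scalar_sp([1, 2, 3, 4], [2, 2, 2, 2], [1, 1, 1, 1]))
    # Mix of 4-wide, 3-wide and two 1-wide ops on the idealized vec4 unit.
    print(vec4_lane_utilization([4, 3, 1, 1]))
```

For that mix of operations, the idealized vec4 unit keeps only 9 of 16 lanes busy (56.25%), while the scalar model simply issues nine scalar operations. That is one way to see how simple scalar processors can trade per-instruction width for clock speed without wasting lanes on narrow operations.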

The reason new CPU architectures take years to design while new GPU architectures can be cranked out in about 12 months comes down to how they're designed. GPUs are generally designed using a hardware description language (HDL), a sort of high-level programming language whose code is translated by automated tools into a transistor layout that you can use to build your chip. At the other end of the spectrum are CPU designs, which are largely done by hand, with design handled at the transistor level rather than at the higher level an HDL provides.

Elements of GPUs have been designed at the transistor level in the past; things like memory interfaces, analog circuits, memories, register files and TMDS drivers were done by hand using custom transistor-level design. But the shaders and the rest of the pipeline were designed by writing high-level HDL code and relying on automated layout.

You can probably guess where we're headed with this; the major difference between G80 and NVIDIA's previous GPUs is that NVIDIA designed the shader core at the transistor level. If you've heard the rumors of NVIDIA building more than just GPUs in the future, this is the first step, although NVIDIA was quick to point out that G80 won't be the norm. NVIDIA will continue to design using HDLs where it makes sense, and in critical areas where additional performance is needed or circuitry is power sensitive, we'll see transistor-level layout work done by NVIDIA's engineers. With its current engineering staff and product cycles, it simply isn't feasible for NVIDIA to design a GPU completely at the transistor level. That's not to say it won't happen in the future, and if NVIDIA does eventually get into the system-on-a-chip business with its own general purpose CPU core, it will have to happen; but it's not happening anytime soon.

The additional custom logic and layout in G80 helped stretch the design cycle to a full four years and pushed the chip's cost to $475M. Before G80, the longest design cycle was approximately 2.5 - 3 years. Although G80 did take four years to design, much of that was due to it being a radical re-architecting of the graphics pipeline, and future GPUs derived from G80 will naturally have shorter design cycles.

111 Comments


JarredWalton - Wednesday, November 8, 2006

They did the same thing with the original Halo, porting it (and slowing it down) to DX9. MS seems to think making Halo 2 Vista-only will get people to upgrade to the new OS. [:rolls eyes:]

stmok - Wednesday, November 8, 2006

How else are they gonna get gamers to upgrade to Vista? :) (by cornering them into adopting Vista, using DirectX 10.0)

It's sad and pathetic at the same time.

DirectX 10.0 should be a "transitional" solution...That is, it covers both XP and Vista. This allows people to gradually upgrade their hardware, and if they wish, to Vista. What MS is doing now, is throwing everyone (developers and consumers) into the deep end, and expecting them to pay for the changes. (I suspect some would be put off by this, while the majority will continue to accept it...Which is unfortunate).

Great article BTW. Interesting to see the high-end stuff...But I doubt I can afford it in this lifetime!

I have two questions!

(1) Any chance of looking at a triple video card setup?

(I saw a presentation slide which had 2 video cards in SLI, while a third showed something else on screen).

(2) Any idea when the GF8600-series comes?

(mainstream market solution).

yyrkoon - Thursday, November 9, 2006

Great, links aren't working? http://www.gamedev.net/reference/programming/featu...

yyrkoon - Thursday, November 9, 2006

This article (http://www.gamedev.net/reference/programming/featu...) was written by a friend of mine back in April after an interview with ATI. Perhaps this will clear some things up.

yyrkoon - Thursday, November 9, 2006

When you break all hardware/software ties to something that has been around for 4-5 years? It's not that easy making it "transitional". From a software perspective, D3D10 is not compatible with XP in the least. I, for one, think this is a step in the right direction.

JarredWalton - Thursday, November 9, 2006

Supposedly all of the changes to the WDDM make porting DX10 back to Windows XP "impossible", although I'm more inclined to think the correct term would be "difficult" and you also have to add in "it doesn't fit with MS marketing protocol". WDDM is quite different in Vista, however, so maybe there's some substance to the claims.

cosmotic - Wednesday, November 8, 2006

On page 9: --Briefly explain what a sub-pixel is in the sentence before--

JarredWalton - Wednesday, November 8, 2006

Due to the size of this article and the amount of time it took to get ready, let me preempt any comments about the spelling and grammar. I am in the process of editing the final document as I read through it, and there are spelling/grammar errors. If they bother you too much, check back in an hour. If you read this an hour from now and you still find errors, then you can respond, though it would be useful to keep all responses in a single thread like this one.

Thanks in advance,

Jarred Walton

Editor

AnandTech.com

xtknight - Thursday, November 16, 2006

On p 12 (gamma corrected AA): "This causes problems for thing like thin lines."

acejj26 - Wednesday, November 8, 2006

"If DirectX 10 sounds like a great boon to software developers, the fact that DX10 will only be supported in Windows XP is certain to curb enthusiasm. "I believe this should say "DX10 will only be supported in Windows Vista..."

Not to be rude, but shouldn't the article be edited BEFORE being published??