Valve Hardware Day 2006 - Multithreaded Edition

by Jarred Walton on November 7, 2006 6:00 AM EST. Posted in: Trade Shows

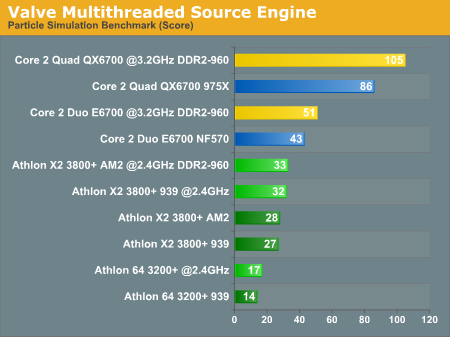

Particle Systems Benchmark

The more meaningful of the two benchmarks in terms of end users is going to be the particle simulation benchmark, as this has the potential to actually impact gameplay. The only problem is that the map is a contrived situation with four rooms each showing different particle system simulations. As proof that simulating particle systems can require a lot of CPU processing power, and that Valve can multithread the algorithms, the benchmark is meaningful. How it will actually impact future gaming performance is more difficult to determine. Also note that particle systems are only one aspect of game engine performance that can use more processing cores; artificial intelligence, physics, animation, and other tasks can benefit as well, and we look forward to the day when we have a full gaming benchmark that can simulate all of these areas rather than just particle systems. For now, here's a quick look at the particle system performance results.

There are several interesting things we get from the particle simulation benchmark. First, it scales almost linearly with the number of processor cores, so the Core 2 Quad system ends up being twice as fast as the Core 2 Duo system when running at the same clock speed. We will take a look at how CPU cache and memory bandwidth affect performance in the future, but at present it's pretty clear that Core 2 once again holds a commanding performance lead over AMD's Athlon 64/X2 processors. As for Pentium D, the benchmark repeatedly crashed when we tried to run it, even with several different graphics cards. There's no reason to assume it would be faster than Athlon X2, though, and we did get results with Pentium D on the other test.

Athlon X2 performed the same, more or less, whether running on 939 or AM2 - even with high-end DDR2-800 memory. Our E6700 test system generated inconsistent results when overclocked, likely due to limitations with the nForce 570 SLI chipset. For most of the platforms, the 20% overclock brought on average a 20% performance increase, showing again that we are almost entirely CPU limited. The lack of granularity makes the scores vary slightly from 20% but it's close enough for now. Finally, taking a look at Athlon 64 vs. X2 on socket 939, the second CPU core improves performance by ~90%.

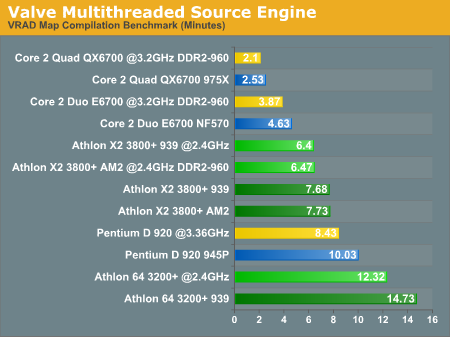

VRAD Map Compilation Benchmark

As more of a developer/content creation benchmark, the results of the VRAD benchmark are not likely to be as interesting to a lot of people. However, keep in mind that better performance in this area can lead to more productive employees, so hopefully that means better games sooner. (Or maybe it just means more stress for the content developers?)

The results we got on the map compilation benchmark support Valve's own research and help to explain why they would be very interested in getting more Core 2 Quad systems into their offices. We don't have a single core Pentium 4 processor represented, but even a Pentium D 920 still ends up taking more than twice as long as a Core 2 Duo E6700 system, and about four times as long as Core 2 Quad. Looking at the CPU speed scaling, a 20% higher clock speed with the Pentium D resulted in 19% higher performance. If Intel had tried to stick with the NetBurst architecture, they would need dual core Pentium D processors running at more than 6.0 GHz in order to match the performance offered by the E6700. We won't even get into discussions about how much power such a CPU would require.

Performance scales almost linearly with clock speed once again, improving by 20% with the overclocking. Moving from single to dual core Athlon chips improves performance by about 92%. Going from a Core 2 Duo to a Core 2 Quad on the other hand improves performance by "only" 84%. It is not too surprising to find that moving to four cores doesn't show scaling equal to that of the single to dual move, but an 84% increase is still very good, roughly equal to what we see in 3D rendering applications.
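One way to put the 92% and 84% figures on a common footing is to ask what parallel fraction they would imply under Amdahl's law. The sketch below is only a back-of-the-envelope calculation: it assumes the simple model T(n) = (1 - p) + p/n, and the helper names `parallel_fraction` and `parallel_efficiency` are our own. The two results also come from different CPU families, so they are not strictly comparable.

```python
def parallel_fraction(speedup, cores_before, cores_after):
    """Solve Amdahl's law for the parallel fraction p.

    Model (an assumption, not a measurement): T(n) = (1 - p) + p / n, and
    speedup = T(cores_before) / T(cores_after).
    """
    a, b = cores_before, cores_after
    return (speedup - 1.0) / (speedup * (1.0 - 1.0 / b) - (1.0 - 1.0 / a))


def parallel_efficiency(speedup, cores_before, cores_after):
    """Measured speedup divided by the ideal speedup for the added cores."""
    return speedup / (cores_after / cores_before)


if __name__ == "__main__":
    # Speedups taken from the VRAD results quoted in the text.
    cases = [
        ("Athlon 64 -> X2 (1 -> 2 cores)", 1.92, 1, 2),
        ("Core 2 Duo -> Quad (2 -> 4 cores)", 1.84, 2, 4),
    ]
    for label, s, a, b in cases:
        p = parallel_fraction(s, a, b)
        e = parallel_efficiency(s, a, b)
        print(f"{label}: implied parallel fraction {p:.3f}, efficiency {e:.0%}")
```

Under this model both steps imply a parallel fraction of roughly 95-96%, which would be consistent with the same small serial portion showing up as a larger relative cost once four cores are in use.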

55 Comments

JarredWalton - Tuesday, November 7, 2006 - link

What's with the octal posting? Too many CPU cores running? ;)

I deleted the other 7 identical posts for you. Careful with that Post Comment button!

saratoga - Tuesday, November 7, 2006 - link

Server kept timing out when I hit post, so I assumed it wasn't committing :)

exdeath - Tuesday, November 7, 2006 - link

You can see my recent comments on this topic here: http://www.dailytech.com/Article.aspx?newsid=4847&...

In my experience, relying on atomic CPU swap operations isn't enough, since each one only works on a single value (a 32-bit word, for example).

While you are locking and swapping a 32-bit Y value, someone else who has just finished reading the newly written X value can beat you to the lock and read the old Y value before you've updated it. Clearly whole data structures need to be coherent, not just small atomic values.

Also, it's unusual to modify an object's observable state mid-frame. Even if you avoided the above example so that the X,Y pair was always updated together, you'd still have different objects seeing that object's position, taken as a whole, in different places at different times. State data must be held constant for all observers throughout the context of a single frame.

exdeath - Tuesday, November 7, 2006 - link

Even if you avoided the above example so that the X,Y pair was always updated together, you'd still have different objects interpreting the position as a whole of that object in different places at different times in the same frame.

JarredWalton - Tuesday, November 7, 2006 - link

I'm assuming your comment is in regards to the PS3/Cell comments on the last page? It sort of sounds like you're arguing about the way Valve has chosen to go about doing things, or that you disagree with some of the opinions they've expressed concerning other hardware. We have only tried to provide a very high-level overview of what Valve is doing, and we hardly touched the low-level details -- Valve didn't spend a lot of time on specific implementation issues either. All they did was provide us with some information about what they are doing, and a bit of opinion on what they think of the rest of the hardware options.

Preventing anything else from doing write operations to the world state during an entire frame in order to keep things coherent is a big problem with multithreading. Apparently Valve has found a way around that, or at least found a way to do it more efficiently, using lock free and wait free algorithms. No, I can't honestly say I really understand what those algorithms do, but if they say it worked better for their code base I'm willing to trust them.

As far as the PS3/Cell processor goes, Valve did say that they have various thoughts on how to properly utilize the architecture. It is simply going to be more difficult to do relative to Xbox 360 and PC. It's not impossible, and companies are definitely going to tackle this problem. As far as how they tackle it, I'm more than a bit rusty on my coding background, and other than high-level details I'm not too concerned how they improve their multithreading code on any specific platform, just that they do it.

exdeath - Tuesday, November 7, 2006 - link

The other issue is OS support.

Compiler add-ons or third party APIs can only serve to hide the details or make things look cleaner. But no matter what, the final barrier between the application and the OS is the set of API calls provided by the OS threading model. Thus no third party implementation can be better than the OS thread model itself in terms of performance and overhead. All those can do is make it easier to use at the top by handling the OS details.

I imagine threading APIs on popular OSes will start to evolve, just like graphics APIs have, once everyone gets on the multi-core bandwagon and starts to get a feel for what's available in the OS APIs and what they'd rather have. So far, Vista's thread pool API looks good, but I still don't see an API to determine such basic things as checking if the work queue is empty and all threads are idle, etc.

Currently I find it's easier to implement my own thread pool manager, which does atomic increments and decrements on a 'task count' variable as tasks are entered or completed in the queue. Checking if all tasks are done involves testing that task count against 0 and signaling an event flag that wakes any management threads sleeping until all of their work tasks complete. It also allows for more flexibility in 'before and after' housekeeping as work threads move from task to task, and that kind of control isn't offered in XP's built-in thread pool API, nor Vista's as far as I can tell.
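As a rough illustration of the task-count scheme described in that comment, here is a minimal sketch in Python. It is not the commenter's code: the class name `CountingPool` and its methods are made up for the example, and because Python has no atomic increment, a small lock around the counter stands in for the atomic increments and decrements.

```python
import queue
import threading


class CountingPool:
    """Hypothetical thread pool that tracks outstanding tasks with a counter."""

    def __init__(self, num_workers):
        self._tasks = queue.Queue()
        self._count_lock = threading.Lock()   # stand-in for atomic inc/dec
        self._task_count = 0
        self._all_done = threading.Event()    # wakes sleeping manager threads
        self._all_done.set()                  # nothing outstanding at start
        for _ in range(num_workers):
            threading.Thread(target=self._run, daemon=True).start()

    def submit(self, fn, *args):
        with self._count_lock:
            self._task_count += 1             # task entered
            self._all_done.clear()
        self._tasks.put((fn, args))

    def _run(self):
        while True:
            fn, args = self._tasks.get()
            try:
                fn(*args)                     # per-task housekeeping could wrap this call
            finally:
                with self._count_lock:
                    self._task_count -= 1     # task completed
                    if self._task_count == 0:
                        self._all_done.set()  # signal "all work finished"

    def is_idle(self):
        with self._count_lock:
            return self._task_count == 0

    def wait_all(self, timeout=None):
        return self._all_done.wait(timeout)
```

A manager thread would call `submit` for each task in the frame and then block on `wait_all()`; `is_idle()` answers the "is the queue empty and everyone idle" question that the comment says the OS APIs don't expose.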

exdeath - Tuesday, November 7, 2006 - link

Not arguing their methods; a lot of things in this article are in line with my own opinions on multithreading, and pretty much the best way to go about it. I'm just pointing out that atomic lock/swap operations in hardware are very primitive and typically operate only on CPU word size values, not entire data structures. Thus it's possible that between two atomic operations on two variables on one core, another core can get an old version of one variable and a new version of another.

core1: compute X

core2: ...

core1: lock/write X

core2: read X, get newly written version

core1: compute Y

core2: read Y, get old Y before the update

core1: lock/write Y

core2: ...

The task on core2 is working with inconsistent data: the new X and the old Y. If the task on core2 only uses the data as input, e.g. an AI tracking another AI entity, it has the wrong position and won't know about it, since it has no need to perform its own lock/write (so it never gets the exception that says the value changed). Even if it did, it would have to throw out all of its work and redo it with the new Y, and then the value could possibly change again.
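The interleaving in that trace can be replayed deterministically. The sketch below is only a simulation under stated assumptions: it uses Python generators to stand in for the two cores and a fixed round-robin schedule instead of real threads, and the names `core1`, `core2` and `replay_trace` are invented for the example. Each individual write is treated as atomic, which is exactly why the mixed read still slips through.

```python
def core1(state):
    new_x = state["x"] + 10       # core1: compute X
    yield
    state["x"] = new_x            # core1: lock/write X (atomic for this one word)
    yield
    new_y = state["y"] + 10       # core1: compute Y
    yield
    state["y"] = new_y            # core1: lock/write Y
    yield


def core2(state, seen):
    yield                         # core2: ...
    seen["x"] = state["x"]        # core2: read X, gets the newly written value
    yield
    seen["y"] = state["y"]        # core2: read Y, gets the old value
    yield
    yield                         # core2: ...


def replay_trace():
    state = {"x": 0, "y": 0}      # shared position at the start of the frame
    seen = {}                     # what the observer on core2 ends up with
    writer, reader = core1(state), core2(state, seen)
    for _ in range(4):            # alternate one step per core, as in the trace
        next(writer)
        next(reader)
    return seen, state


if __name__ == "__main__":
    seen, final = replay_trace()
    print("core2 saw:", seen)
    print("final state:", final)
```

The observer ends up with a position that never existed in any frame: the new X paired with the old Y, even though no single write was torn.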

Looping and retrying seems wasteful. And I’m thinking the only way to catch such a hardware error on a failed lock/write update is via exceptions, and handling a thrown exception on an attempt to write a single 32 bit value is very wasteful of CPU cycles.

In my own research I have had excellent results with double buffering any modified data. Each threaded task only updates its hidden internal working state for frame n+1 while all reads to the object are read from its external current state for frame n. At the end of the frame when all parallel tasks have completed, the current/working states are swapped, and the work queue is filled again to start the next frame.

This ensures that throughout the entire computation of frame n+1, the current frame n state will be available to all threads, and guaranteed to not be modified through the duration of current frame. So basically all threads can read anything they want and modify their own data. On PC/360 the time to swap everything is basically nothing; you just swap a few pointers, or a single pointer to an array/structure of current/working data for the frame.
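A minimal sketch of that double-buffering idea follows. It is an illustration rather than the commenter's implementation: the `Entity` and `World` classes and the "move halfway toward your neighbor" update rule are assumptions made up for the example. Tasks read only from the frame n state and write only their own slot of the frame n+1 state, and the two are swapped once every task has finished.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Entity:
    x: float
    y: float


class World:
    def __init__(self, entities):
        self.current = list(entities)                         # frame n: read-only during update
        self.working = [Entity(e.x, e.y) for e in entities]   # frame n+1: hidden working state

    def _update_one(self, i):
        # Read anything from `current`; write only our own slot in `working`.
        me = self.current[i]
        target = self.current[(i + 1) % len(self.current)]
        out = self.working[i]
        out.x = me.x + 0.5 * (target.x - me.x)
        out.y = me.y + 0.5 * (target.y - me.y)

    def step(self, pool):
        # Consuming the map result waits until all parallel tasks have completed.
        list(pool.map(self._update_one, range(len(self.current))))
        # End of frame: swapping two references publishes frame n+1.
        self.current, self.working = self.working, self.current


if __name__ == "__main__":
    world = World([Entity(0.0, 0.0), Entity(10.0, 10.0)])
    with ThreadPoolExecutor(max_workers=2) as pool:
        world.step(pool)
    print(world.current)
```

With two entities chasing each other, both land on (5, 5) regardless of which task runs first, because each one reads the other's frame n position. An in-place update would give an order-dependent answer.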

On the PS3 some data copying and moving will be required, but this is mandatory due to design anyway and assisted by an extremely smart and powerful DMAC.

One place to be critical about is message passing between objects since it requires posting (writing) data to be picked up by another object. But the time to lock/post/unlock a queue is negligible compared to the time it takes to process the results leading up to the creation of the message. This is similar to the D3D notion of doing as much as you can before you lock and only do the minimal work needed inside the lock and unlock as quickly as possible.
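To make the lock/post/unlock point concrete, here is a small sketch of a message queue where the lock is held only for the append itself. The names `Mailbox`, `expensive_result` and `worker` are hypothetical, and the summation is just a placeholder for whatever work leads up to the message.

```python
import threading
from collections import deque


class Mailbox:
    """Hypothetical per-object message queue with a short critical section."""

    def __init__(self):
        self._lock = threading.Lock()
        self._messages = deque()

    def post(self, message):
        with self._lock:                      # lock, post, unlock: nothing else
            self._messages.append(message)

    def drain(self):
        with self._lock:                      # take the whole batch in one swap
            pending, self._messages = self._messages, deque()
        return list(pending)                  # process outside the lock


def expensive_result(n):
    # Placeholder for the costly work that produces the message.
    return sum(i * i for i in range(n))


def worker(mailbox, sender_id, n):
    message = (sender_id, expensive_result(n))  # all heavy work happens before locking
    mailbox.post(message)


def run_demo(num_senders=4, n=1000):
    mailbox = Mailbox()
    threads = [
        threading.Thread(target=worker, args=(mailbox, i, n))
        for i in range(num_senders)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sorted(mailbox.drain())
```

The receiver uses the same trick in `drain`: it swaps the queue out under the lock and handles the messages afterwards, so senders are never blocked while messages are being processed.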

GhandiInstinct - Tuesday, November 7, 2006 - link

Jarred Walton,

My question: Will Valve's games in 2007 be released with specifications such as "For minimum requirements you need a dual-core CPU, for maximum results you need a quad-core", or anything of that nature? I'm confused about what Valve is targeting (dual, quad, both, neither, or something different) and what I should get to best utilize their games and multi-core software in general.

Thanks.

JarredWalton - Tuesday, November 7, 2006 - link

Episode Two should come out sometime in 2007, and before that happens you will get the multithreading patch affecting previous Source engine titles. Right now, it doesn't sound like anything released in the next year or so from Valve is going to require dual cores. That's what I was trying to get across on the conclusion page where I mentioned that they are targeting an "equivalent experience" regardless of what sort of processor you are running.

So just like you could turn down the level of detail in Half-Life 2 and run it on DX8 or even DX7 hardware, Source engine should be able to accommodate single core processors all the way up through N-core processors. The engine will spawn as many threads as you have processor cores, with one main thread serving as the controller and N - 1 helper threads. Xbox 360 for example would have 5 helper threads plus the master thread, because it has three cores, each capable of executing two threads simultaneously.
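The thread-count rule described above is simple enough to write down. This is only a sketch of the arithmetic, not anything from the Source engine: `plan_threads` is a made-up helper, and it uses Python's `os.cpu_count()` (which reports logical processors) as a stand-in for however the engine actually detects cores.

```python
import os


def plan_threads(logical_cores=None):
    """One controlling main thread plus N - 1 helpers for N hardware threads."""
    if logical_cores is None:
        logical_cores = os.cpu_count() or 1   # cpu_count() may return None
    helpers = max(logical_cores - 1, 0)       # a single-core system gets no helpers
    return {"main": 1, "helpers": helpers}


if __name__ == "__main__":
    print("This machine:", plan_threads())
    # Xbox 360 case from the text: 3 cores x 2 hardware threads each = 6.
    print("Xbox 360:", plan_threads(3 * 2))
```

For the Xbox 360 case this gives one main thread and five helpers, matching the numbers in the comment; a single-core system simply runs everything on the main thread.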

Patrese - Tuesday, November 7, 2006 - link

Great article, good to see dual-quad cores being used for something in games. By the way, the kitchen examples made me hungry... :)