Intel's Core 2 Extreme & Core 2 Duo: The Empire Strikes Back

by Anand Lal Shimpi on July 14, 2006 12:00 AM EST - Posted in

- CPUs

Overclocking

You have already seen that the Core 2 Extreme X6800 outperforms AMD's fastest processor, the FX-62, by a wide margin. This is underscored by the fact that the $316 E6600, running at 2.4GHz, outperforms the FX-62 in almost every benchmark we ran. That certainly addresses the questions of raw performance and value.

For most enthusiasts, however, there is also the important question of how Core 2 processors overclock. As AMD has pushed closer to the limits of its 90nm process, recent AM2 processors have not had much headroom. A prime example is the FX-62, which is rated at 2.8GHz and reaches 3GHz with ease, but then has a difficult time reaching or passing 3.1GHz on air. So how does Core 2, built at 65nm, compare in overclocking?

The top X6800 is rated to run at an 11x multiplier on a 1066MHz FSB (a 266MHz base clock), and it is the only Core 2 that is completely unlocked, both up and down. On the Asus P5W-DH motherboard you can set the multiplier as high as 60 or as low as 6. This makes the X6800 an ideal CPU for overclocking, even though top-line processors are not normally known for being the best overclockers.

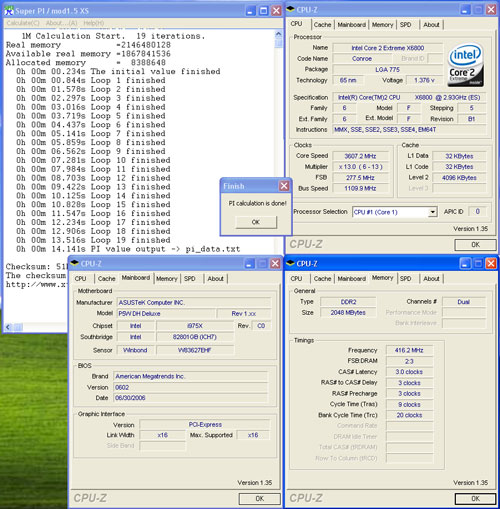

To gauge the overclocking headroom of the X6800 in the simplest terms, we raised the CPU speed while keeping the CPU voltage at its default setting of 1.20V.

At default voltage the X6800 reached a stable 3.6GHz (13 x 277MHz). This is a 23% overclock over the stock 2.93GHz speed, at stock voltage. It is also an important result, since it implies Intel could easily release a 3.46GHz or 3.6GHz Core 2 processor tomorrow if it chose to. There is clearly no need for these faster Core 2s yet, but the result does illustrate the range of speeds the current Core 2 Duo architecture can reach.
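The arithmetic behind these figures is simple: core clock equals the CPU multiplier times the FSB base clock, and the "1066MHz" FSB rating is a 266.67MHz base clock quad-pumped (x4). A minimal Python sketch (the helper names are ours, purely illustrative) reproduces the 3.6GHz and 23% figures:

```python
# Illustrative helpers; the names are hypothetical, not from any overclocking tool.
BASE_CLOCK_MHZ = 800 / 3  # 266.67MHz; the "1066MHz" FSB is this base clock quad-pumped (x4)


def core_clock_mhz(multiplier: float, base_clock_mhz: float) -> float:
    """Core clock = CPU multiplier x FSB base clock."""
    return multiplier * base_clock_mhz


def overclock_percent(new_mhz: float, stock_mhz: float) -> float:
    """How far new_mhz is above stock_mhz, in percent."""
    return (new_mhz / stock_mhz - 1.0) * 100.0


stock = core_clock_mhz(11, BASE_CLOCK_MHZ)  # X6800 stock: 11 x 266.67MHz, about 2933MHz
oc = core_clock_mhz(13, 277)                # stock-voltage result: 13 x 277MHz = 3601MHz
print(f"{stock:.0f}MHz -> {oc:.0f}MHz: +{overclock_percent(oc, stock):.0f}%")
```

Passing 4000MHz against the same stock clock gives the roughly 36% air-cooled figure.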

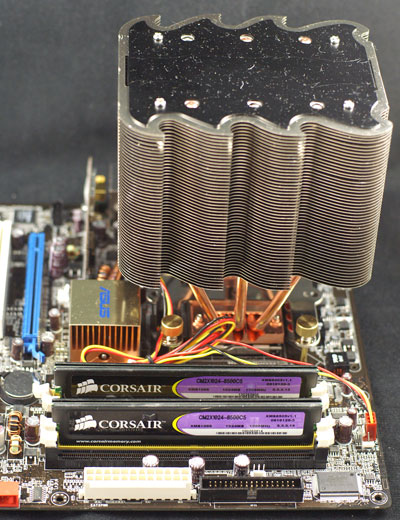

We then pushed the X6800 to the highest CPU speed we could achieve with air cooling, using a very popular and effective air cooler: the Tuniq Tower.

The goal was to reach the highest possible speed that was benchmark stable; we ran Super Pi, 3DMark, and several game benchmarks to test stability. The 2.93GHz chip reached 4.0GHz on air cooling in these tests. That represents a 36% overclock on air with what will likely be the least overclockable Core 2 processor: the top-line X6800.

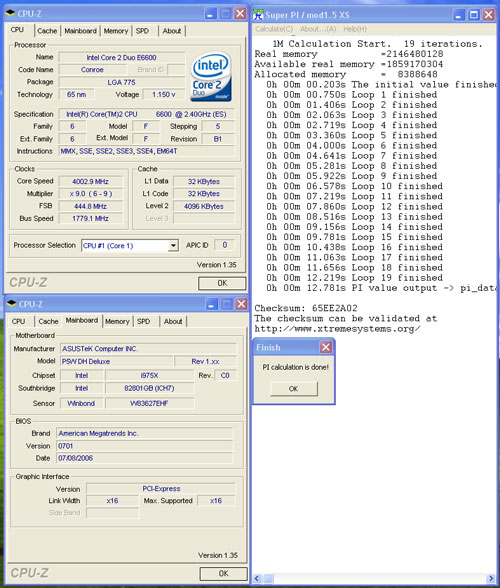

To provide some idea of the overclocking abilities of other Core 2 Duo processors, we ran quick tests with the E6700 (2.67GHz) and E6600 (2.4GHz). The test E6700 reached a stable 3.4GHz at default voltage and topped out at 3.9GHz with the Tuniq Tower. The 2.4GHz E6600 turned out to be quite an overclocker in our tests. Even though it is hard-locked at a 9x multiplier, it reached an amazing 4GHz, a 67% overclock.

We had another Core 2 Extreme X6800 that we tried overclocking with a stock Intel HSF. The results were not as impressive as with the Tuniq cooler; 3.4GHz was the highest speed we could get fully stable. We're going to be playing around with these processors more in the future, to hopefully get a better overall characterization of what you can expect.

Curious about our overclocking successes, we asked Intel why Core 2 CPUs are able to overclock to nearly the same levels as NetBurst processors, despite having pipelines less than half as long. Intel gave us the following explanation:

NetBurst microarchitecture is constrained by physical power / thermal limitations long before the constraint of pipeline stages comes into play. The microarchitecture itself would continue to scale upwards if not for the power constraints. (In fact, we have seen Presler overclocked to 6 GHz in liquid nitrogen environments. At that level, power delivery through the power supply & board itself begin to limit further scaling of the processor.)

Intel's explanation makes a great deal of sense, especially when you remember the original claims that NetBurst would be good for anywhere from 5GHz to 10GHz. NetBurst never got the chance to reach its true overclocking prime, as Intel hit thermal density walls well before the 5-10GHz range, and thus Intel's Core architecture was born. Intel's Core 2 processors once again give us an example of the good ol' days of Intel overclocking, where moving to a smaller manufacturing process meant we'd have some highly overclockable chips on our hands. With NetBurst dead and buried, the golden age of overclocking is back.

Enthusiasts have not seen overclocking like this since the Socket 478 days, and in fact Core 2 may be even better. The 2.4GHz E6600, which outperformed the FX-62 in most benchmarks at stock speed, costs $316 and overclocked to 4GHz with excellent air cooling. With that kind of performance, value, and overclocking headroom, the E6600 will likely become the preferred chip for serious overclockers, particularly those who are looking for champagne performance on a smaller budget.

It is important, however, not to sell the advantages of the X6800 short. AnandTech never recommends the fastest chip you can buy as a good value choice, but the X6800 does bring some advantages to the table. It is the only Conroe that is completely unlocked. This allows settings like 266MHz (stock FSB) x 15 for 4.0GHz, settings that keep other components in the system at stock speed. This can only be achieved with the X6800, since other Core 2 Duo chips are hard-locked, and for some that feature will justify buying an X6800 at $999. For the rest of us, the E6600 is shaping up to be the chip to buy for overclocking.
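To make the locked-versus-unlocked point concrete, here is a small Python sketch that works out the base clock each chip needs to reach 4.0GHz. It assumes each chip runs at its highest usable multiplier (15x chosen on the unlocked X6800, the locked 9x on the E6600), and the helper name is hypothetical:

```python
STOCK_BASE_MHZ = 800 / 3  # 266.67MHz base clock behind the 1066MHz (quad-pumped) FSB


def required_base_clock(target_mhz: float, multiplier: int) -> float:
    """Hypothetical helper: base clock needed to reach target_mhz at the given multiplier."""
    return target_mhz / multiplier


# Assumed highest usable multiplier for each chip
chips = {"X6800 (unlocked, 15x)": 15, "E6600 (locked, 9x)": 9}

for name, multiplier in chips.items():
    base = required_base_clock(4000, multiplier)
    fsb_over_stock = (base / STOCK_BASE_MHZ - 1.0) * 100.0
    print(f"{name}: {base:.1f}MHz base clock, {fsb_over_stock:+.0f}% vs. stock FSB")
```

The X6800 hits 4.0GHz with the FSB untouched, while the E6600 has to run its FSB about 67% past stock to get there.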

202 Comments


coldpower27 - Friday, July 14, 2006

Are they supposed to be there, as they aren't functioning in Firefox 1.5.0.4?

coldpower27 - Friday, July 14, 2006

You guys fixed it, awesome.

Orbs - Friday, July 14, 2006

On "The Test" page (I think page 2), you write: "please look back at the following articles:"

But then there are no links to the articles.

Anyway, Anand, great report! Very detailed, with tons of benchmarks using a very interesting gaming configuration, and this review was the second one I read (so it was up pretty quickly). Thanks for not sacrificing quality just to get it online first, and again, great article.

Makes me want a Conroe!

Calin - Friday, July 14, 2006

Great article, and thanks for a well done job. Conroe is everything the Intel marketing machine has shown it to be.

stepz - Friday, July 14, 2006

The Core 2 doesn't have lower memory latency than K8. You're seeing the new advanced prefetcher in action. But don't just believe me, check with the SM2.0 author.

Anand Lal Shimpi - Friday, July 14, 2006

That's what Intel's explanation implied as well: when they are working well, the prefetchers remove the need for an on-die memory controller, so long as you have an unsaturated FSB. Inevitably there will be cases where AMD is still faster (from a pure latency perspective), but it's tough to say how frequently that will happen.

Take care,

Anand

stepz - Friday, July 14, 2006

Excuse me. You state "Intel's Core 2 processors now offer even quicker memory access than AMD's Athlon 64 X2, without resorting to an on-die memory controller." That is COMPLETELY wrong and misleading (see: http://www.aceshardware.com/forums/read_post.jsp?i... ). It would be really nice, from a journalistic integrity point of view and all that, if you posted a correction or at least silently changed the article so it isn't spreading incorrect information.

Oh... and you really should have smelt something fishy when a memory controller suddenly halves its latency by changing the requestor.

stepz - Friday, July 14, 2006

To clarify: yes, the prefetching and especially the speculative memory op reordering do wonders for real-world performance. But then let the real-world performance results speak for themselves; please don't use broken synthetic tests. The advancements help to hide latency from applications that do real work. They don't reduce the actual latency of memory ops, which is what that test was supposed to measure. Given that the prefetcher figures out the access pattern of the latency test, the test is utterly meaningless in any context. The test doesn't do anything close to real-world work, so if its main purpose is broken, it is utterly useless.

JarredWalton - Friday, July 14, 2006

Modified comments from a similar thread further down:

"Given that the prefetcher figures out the access pattern of the latency test, the test is utterly meaningless in any context."

That's only true if the prefetcher can't figure out access patterns for all other applications as well, and from the results I'm pretty sure it can. You have to remember that even with a memory latency of approximately 35 ns, that delay means the CPU now has about 100 cycles to go and find other stuff to do. At an instruction fetch rate of 4 instructions per cycle, that's a lot of untapped power. So while it waits on the first main memory access, it can be scanning the next accesses that are likely to take place, queuing them up and priming the RAM. The net result is that you may never actually be able to measure latency higher than 35-40 ns or whatever.
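The cycle estimate is easy to check: at 1GHz one clock cycle lasts 1ns, so latency in cycles is latency in ns times clock speed in GHz. A small Python sketch, assuming the X6800's stock 2.93GHz clock and treating 4 instructions per cycle as an ideal peak rather than a sustained rate:

```python
def latency_in_cycles(latency_ns: float, clock_ghz: float) -> float:
    """At 1GHz one cycle lasts 1ns, so cycles = ns x GHz."""
    return latency_ns * clock_ghz


ISSUE_WIDTH = 4  # instructions per cycle; an ideal peak, real code sustains less

cycles = latency_in_cycles(35, 2.93)  # ~35ns memory latency on a 2.93GHz X6800
print(f"~{cycles:.0f} cycles waiting, up to ~{cycles * ISSUE_WIDTH:.0f} instruction slots")
```

That works out to roughly 103 cycles and around 410 instruction slots per miss, in line with the "about 100 cycles" estimate.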

The way I think of it is this: pipeline issues aside, a large portion of what allowed Athlon 64 to outperform NetBurst was reduced memory latency. Remember, Pentium 4 was easily able to outperform Athlon XP in the majority of benchmarks; it just did so at higher clock speeds. (Don't *even* try to tell me that the Athlon XP 3200+ was as fast as a Pentium 4 3.2 GHz! LOL. The Athlon 64 3200+ on the other hand....) AMD boosted performance by about 25% by adding an integrated memory controller. Now Intel is faster at similar clock speeds, and although the 4-wide architectural design helps, as does the 4MB shared L2, they almost certainly wouldn't be able to improve performance without improving memory latency, not just in theory but in actual practice. Looking at the benchmarks, I have to think that our memory latency scores are generally representative of what applications see.

If you have to engineer a synthetic application specifically to fool the advanced prefetcher and op reordering, what's the point? To demonstrate a "worst case" scenario that doesn't actually occur in practical use? In the end, memory latency is only one part of CPU/platform design. The Athlon FX-62 is 61.6% faster than the Pentium XE 965 in terms of latency, but that doesn't translate into a real world performance difference of anywhere near 60%. The X6800 is 19.3% faster in memory latency tests, and it comes out 10-35% faster in real world benchmarks, so again there's not an exact correlation. Latency is important to look at, but so is memory bandwidth and the rest of the architecture.
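For readers wondering what "fooling the prefetcher" looks like: latency tests typically walk a chain of pointers in which each load address depends on the previous load. The Python sketch below is an illustration only, not taken from ScienceMark or any other specific benchmark, and its helper names are hypothetical. It builds a constant-stride chain, the kind of pattern a stride prefetcher can learn, and a random single-cycle chain (Sattolo's algorithm) that has no fixed step to learn. Python is far too slow to measure memory latency itself; a real test would walk the same kind of chain in native code over a buffer much larger than the caches.

```python
import random


def strided_chain(n: int, stride: int) -> list[int]:
    """next_index[i] for a constant-stride walk that wraps around; easy to predict."""
    return [(i + stride) % n for i in range(n)]


def random_cycle_chain(n: int, seed: int = 0) -> list[int]:
    """next_index[i] forming a single random cycle over all n slots (Sattolo's algorithm)."""
    rng = random.Random(seed)
    chain = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # j < i (never j == i) guarantees one cycle, no short loops
        chain[i], chain[j] = chain[j], chain[i]
    return chain


def walk(chain: list[int], steps: int, start: int = 0) -> list[int]:
    """Follow the chain; in a real latency test each hop is a dependent load."""
    visited = []
    i = start
    for _ in range(steps):
        visited.append(i)
        i = chain[i]
    return visited


n = 16
print("strided:", walk(strided_chain(n, 3), n))  # constant +3 step (mod 16)
print("random: ", walk(random_cycle_chain(n), n))  # no fixed step to learn
```

Both chains visit every slot exactly once per lap; only the predictability of the next address differs.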

The proof is in the pudding, and right now the Core 2 pudding tastes very good. Nice design, Intel.

coldpower27 - Friday, July 14, 2006

But why are you posting the Manchester core's die size? What about the Socket AM2 Windsor 2x512KB model, which has a die size of 183mm²?