Computex 2006: 300W GPUs, Conroe, HDMI Video Cards and Lots of Motherboards

by Anand Lal Shimpi on June 5, 2006 10:24 PM EST. Posted in Trade Shows

It’s almost like we say this every year, but Computex hasn’t even officially started yet and we already have a lot to talk about. Everything from the power requirements of next year’s GPUs from ATI and NVIDIA to the excitement surrounding Intel’s Conroe launch is in today’s pre-show coverage, so we’ll skip the long-winded introduction and get right to business.

We will first address some of the overall trends we’ve seen while speaking to many of the Taiwanese manufacturers and then dive into product-specific items. We’ll start with the most shocking news we’ve run into thus far: the power consumption of the next-generation GPUs due out early next year.

DirectX 10 GPUs to Consume up to 300W

ATI and NVIDIA have been briefing power supply manufacturers in Taiwan recently about what to expect for next year’s R600 and G80 GPUs. Both GPUs will be introduced in late 2006 or early 2007, and while we don’t know the specifications of the new cores we do know that they will be extremely power hungry. The new GPUs will range in power consumption from 130W up to 300W per card. ATI and NVIDIA won't confirm or deny our findings, and we are receiving conflicting information as to the exact specifications of these new GPUs, but one thing is for sure: the power requirements are steep.

Power supply makers are being briefed now in order to make sure that the power supplies they are shipping by the end of this year are up to par with the high end GPU requirements for late 2006/early 2007. You will see both higher wattage PSUs (1000 - 1200W) as well as secondary units specifically for graphics cards. One configuration we’ve seen is a standard PSU mounted in your case for your motherboard, CPU and drives, running alongside a secondary PSU installed in a 5.25” drive bay. The secondary PSU would then be used to power your graphics cards.

OCZ had one such GPU power supply at the show for us to look at. As you can see above, the 300W power supply can fit into a 5.25" drive bay and receives power from a cable passed through to it on the inside of your PC's case.

OCZ is even working on a model that could interface with its Powerstream power supplies, so you would simply plug this PSU into your OCZ PSU without having to run any extra cables through your case.
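To put those numbers in perspective, here is a rough power-budget sketch in Python. Only the 130W to 300W per-card range, the 1000 to 1200W PSU sizes and the 300W bay-mounted unit come from what we've been told; the 250W figure for the rest of the system, the 500W main unit and the 20% headroom are purely illustrative assumptions, and the function names are our own.

```python
# Rough power-budget sketch. The per-card range and PSU sizes are from the
# article; REST_OF_SYSTEM_W, the 500W main unit and the headroom are assumed.

def required_capacity(loads_w, headroom=0.2):
    """Return the PSU rating (watts) needed to carry loads_w with spare headroom."""
    return sum(loads_w) * (1 + headroom)

def fits(psu_w, loads_w, headroom=0.2):
    """True if a PSU rated at psu_w can carry loads_w with the given headroom."""
    return required_capacity(loads_w, headroom) <= psu_w

REST_OF_SYSTEM_W = 250  # hypothetical: motherboard, CPU and drives
GPU_MAX_W = 300         # upper end of the quoted per-card range

# One large PSU feeding everything, with two worst-case cards installed.
single = fits(1000, [REST_OF_SYSTEM_W, GPU_MAX_W, GPU_MAX_W])

# Split setup: a main PSU for the system, a bay-mounted PSU for one card.
main_ok = fits(500, [REST_OF_SYSTEM_W])              # hypothetical 500W main unit
secondary_ok = fits(300, [GPU_MAX_W], headroom=0.0)  # secondary unit run at its full rating
```

Under these assumed figures, two 300W cards plus the rest of the system need about 1020W once headroom is included, so a 1000W unit falls just short while a 1200W unit passes. That is roughly why both the larger PSUs and the dedicated graphics units are showing up.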

To deal with the increased power consumption of this next generation of DirectX 10 GPUs, manufacturers are apparently considering water cooling to keep noise and heat to a minimum.

As depressing as this news is, there is a small light at the end of the tunnel. Our sources tell us that after this next generation of GPUs we won’t see an increase in power consumption, but rather a decrease for the following generation. It seems that, in their intense competition with one another, ATI and NVIDIA have let power consumption get out of hand and will begin reeling it back in starting in the second half of next year.

In the more immediate future, there are some GPUs from ATI that will be making their debut, including the R580+, RV570 and RV560. The R580+ is a faster version of the current R580, designed to outperform NVIDIA’s GeForce 7900 GTX. The RV570 is designed to be an upper mid-range competitor to the 7900 GT, possibly carrying the X1700 moniker. The only information we’ve received about RV570 is that it may be an 80nm GPU with 12 pipes. The RV560 may end up being the successor to the X1600 series, but we haven’t received any indication of its specifications.

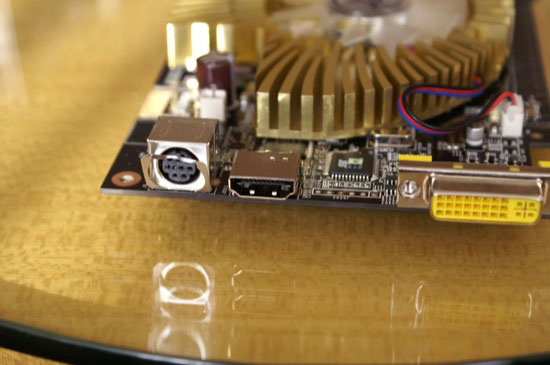

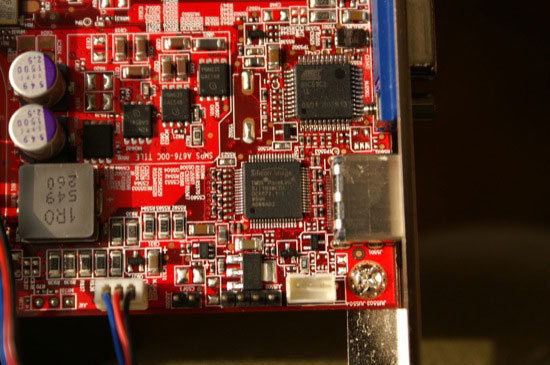

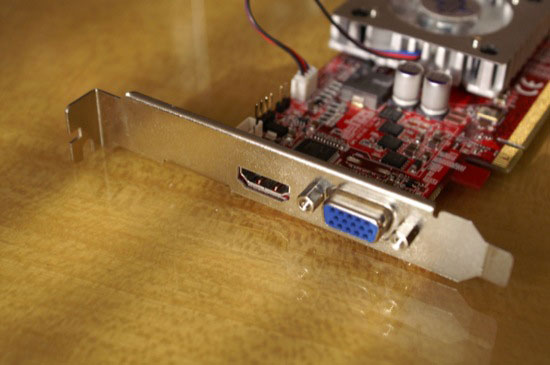

After the HDMI/HDCP fiasco that both ATI and NVIDIA faced earlier this year, we’re finally seeing video cards equipped with HDMI outputs and full HDCP support. The HDCP solution of choice appears to be a TMDS transmitter by Silicon Image that has found its way onto almost all of the HDMI equipped video cards we’ve seen.

While some of the HDMI equipped graphics cards simply use the HDMI output as a glorified DVI connector, other companies have outfitted their designs with an SPDIF header to allow for digital audio passthrough over HDMI as well. Remember that the HDMI connector can carry both audio and video data, and by outfitting cards with a header for internal audio passthrough (from your soundcard/motherboard to the graphics card) you take advantage of that feature of the HDMI specification.

Alongside HDMI support, passively cooled GPUs are “in” these days, as we’ve seen a number of fanless graphics cards since we’ve been here. The combination of HDMI output and a passive design is a definite winner for the HTPC community, who are most likely to be after a video card equipped with an HDMI output at this point.

61 Comments

shabby - Tuesday, June 6, 2006 - link

Yes, I have one. I just don't see the point of sending audio to the TV and then back out to the receiver when you can just send it directly from the HD DVD/Blu-ray player to the receiver, instead of from the HD DVD player to the TV and then back to the receiver. Sending audio to one more spot might degrade quality (I said might), so why not send it directly to the receiver?

ShapeGSX - Tuesday, June 6, 2006 - link

I have an HDTV, and I never use the TV's speakers. Why have HDTV but crap 2 channel audio? Instead, I connect the digital audio to my receiver for 5.1 surround.

epsilonparadox - Tuesday, June 6, 2006 - link

If you have an HDTV with more than one HDMI port and a SPDIF out, you can connect multiple HDMI sources to the TV and you can take an optical cable from the TV to your receiver since HDMI carries 5.1 channel audio.

TauRusIL - Tuesday, June 6, 2006 - link

Guys, the HDMI cable will go to your receiver carrying both video and audio, then the receiver will send the video out to your HDTV. That's the setup that makes sense to me. No point in sending audio to the HDTV directly. Most newer generation receivers include HDMI switching already.

namechamps - Tuesday, June 6, 2006 - link

Lots of people will want to run the HDMI to their HDTV. Just because you don't understand it doesn't mean there isn't a very good reason.

Here is my setup. I have a cable connection connected directly to the HDTV (cable card slot), and my xbox360 hooked to HDMI. My next project is to add a HD-DVD player, and when HDMI video cards become common I will have an HTPC hooked up by HDMI also. So I've got 4 inputs (cable internal, plus xbox360, HD-DVD player and HTPC on HDMI) hooked to the HDTV. With me so far? Now my TV can play the audio directly (yeah, it's 2.1), but there are times when I don't want/need the loudness of my receiver. Late at night or when listening to the news, Dolby Digital 5.1 is just overkill.

NOW here is the part you don't understand (and are therefore quick to bash others). Most HDTVs (mine included) have SPDIF OUTPUT. If I hit monitor mute on my remote then the TV speakers shut off and any digital audio goes directly to the SPDIF. So regardless of whether I am watching terrestrial HDTV, HDTV cable, regular cable, HD-DVD, xbox360 or eventually anything from my HTPC, the TV passes it directly to the SPDIF output.

So with 1 HDMI cable per source plus only 1 toslink optical cable to my receiver I have hooked up ALL my audio & video gear.

If I listened to "experts" like yourself then I would have three limitations

1) many more cables

2) can't use TV speakers when I just want quiet simple 2.1

3) no high quality way to handle digital cable, and OTA HDTV without 2 more set top boxes.

Now the largest advantage to a setup like this is simplicity. Remember you may be an audio/video expert but 90% of consumers are not. With HDMI they can connect EVERYTHING to their TV. If they don't have a home theater system, no problem; if they do, then they connect 1 cable from TV to receiver and they are done. Compare that to the rat's nest of cables behind most entertainment centers and you can see why the industry is pushing HDMI.

CKDragon - Tuesday, June 6, 2006 - link

OK, legitimate questions here; please don't feel the urge to own me. :P

1) Let me make sure I understand you, first: With the setup that you describe, it seems that you would have one less HDMI cable and only 1 total SPDIF cable, correct?

2) Are HDTVs with 4+ HDMI ports common/reasonably priced? I haven't made the HD plunge, but it seems as though most of the ones I browse at have 2.

3) The method you describe sounds very efficient and I believe I understand the benefits for HDMI components. How do older components that only have analog cables fit into the equation? I'm certain that you could route those through your receiver, but I'd imagine that takes away from the fluidity of your setup. Will the HDTV pass even analog audio signals out to the receiver? In your post, you mentioned digital audio specifically being passed, so I was hoping you could clarify.

4) The digital audio being passed through the HDTV, does it degrade sound quality at all? I remember years ago when I bought my receiver I had to look for a certain quality specification regarding component video cable switching to make sure that the receiver wouldn't degrade the video signal upon pass through. I was wondering if this was a similar situation.

Sorry for the length, but if you or anyone else could answer this I'd be appreciative.

Thanks,

CK

namechamps - Thursday, June 8, 2006 - link

Will try not to own anyone...

1) Not sure if I understand the question but the total # of HDMI cables = # of HDMI sources. They all connect to the HDTV. There is only 1 SPDIF (optical/toslink) and it runs from HDTV to receiver.

2) No, very expensive. However there are some with 2-3 HDMI that are more reasonably priced. Expect this to change. All future models of HDTV seem to be including more & more HDMI while eliminating DVI and other ports. I would expect soon most HDTVs made will have 3-4 HDMI plus 1 or 2 of each "legacy" port (composite, s-video, component).

3) My TV will digitize analog audio and route it over the SPDIF out, however I haven't ever used that feature. The digital cable from the cablecard slot does need to be converted and passed to SPDIF, I assume, however I haven't experienced any audio issues. Best way to find this out is to stay away from Best Buy and go to a real home theater store. Those AV experts can help you sort through all the options.

One side note: HDMI 1.2 (current version) only supports "single link" and up to 5.1 audio. The newer HDMI 1.3 (being developed) will support "dual link" and more advanced audio like Dolby TrueHD, and a couple others. I don't find this to be a limitation but some users may.

4) There is no degrading because the signal is digital and the HDTV simply allows it to pass through unchanged. Now if you have analog audio sources there may be more of an issue, but I don't know about that.

ChronoReverse - Monday, June 5, 2006 - link

If it turns out the low-end Conroes will overclock very well (I suspect they might), an Intel purchase might be on the horizon for me (my last Intel chip was a Tualatin).

I've just sold my Athlon64 mobo and CPU while I can still get a reasonable price for them. If I can't get Conroe for a good price, then I'll pick up the used X2's that should be flooding the For Sale forums =)
