ATI's New Leader in Graphics Performance: The Radeon X1900 Series

by Derek Wilson & Josh Venning on January 24, 2006 12:00 PM EST - Posted in GPUs

R580 Architecture

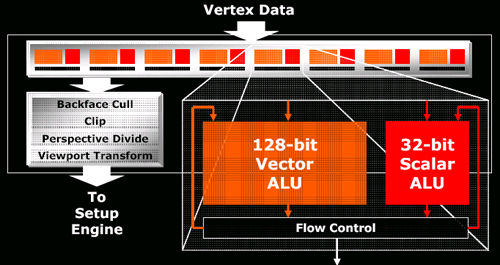

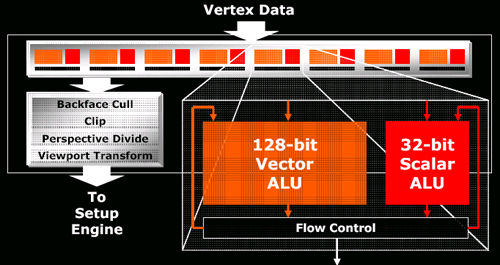

The architecture itself is not that different from the R520 series. A couple of tweaks found their way into the GPU, but these consist mainly of the same improvements made to the RV515 and RV530 over the R520, which their longer lead time allowed (the only reason all three parts arrived at nearly the same time was a bug that delayed the R520 by a few months). For a quick look at what's under the hood, here's the R520 and R580 vertex pipeline:

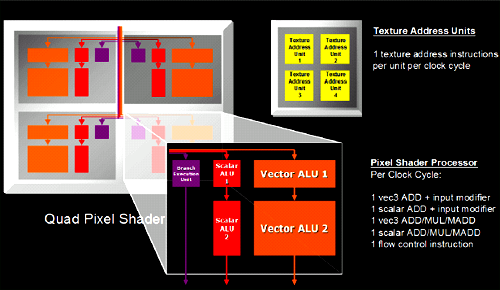

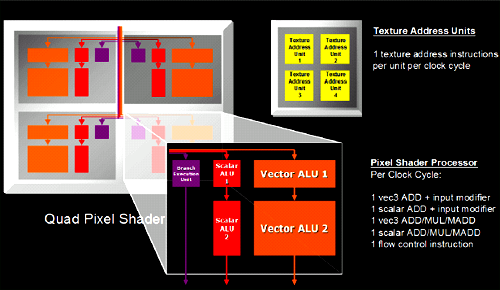

and the internals of each pixel quad:

The real feature of interest is the ability to load and filter 4 texture addresses from a single channel texture map. Textures which describe color generally have four components at every location in the texture, and normally the hardware will load an address from a texture map, split the 4 channels and filter them independently. In cases where single channel textures are used (ATI likes to use the example of a shadow map), the R520 will look up the appropriate address and filter the single channel, letting the hardware's ability to filter 3 other components go to waste. With what ATI calls its Fetch4 feature, the R580 is capable of loading 3 other adjacent single channel values from the texture and filtering these at the same time. This effectively loads and filters four times the texture data when working with single channel formats. Traditional color textures, or textures describing vector fields (which make use of more than one channel per position in the texture), will not see any performance improvement, but for some soft shadowing algorithms the performance increase could be significant.
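To make the idea concrete, here is a minimal software sketch of a Fetch4-style lookup on a single channel texture. It is only an illustrative model, not ATI's hardware or any driver API: the function names (`point_sample`, `fetch4`, `pcf_shadow`), the edge clamping, and the use of a percentage-closer comparison to combine the four values are all assumptions made for the example.

```python
# Illustrative software model only; not ATI's hardware or a real driver API.

def point_sample(tex, x, y):
    """Conventional lookup on a single channel texture: one value per fetch."""
    return tex[y][x]


def fetch4(tex, x, y):
    """Fetch4-style lookup: one request returns the 2x2 block of single
    channel texels whose top-left corner is (x, y).
    Coordinates are clamped at the texture edge (an assumption)."""
    height, width = len(tex), len(tex[0])
    x1 = min(x + 1, width - 1)
    y1 = min(y + 1, height - 1)
    return (tex[y][x], tex[y][x1], tex[y1][x], tex[y1][x1])


def pcf_shadow(shadow_map, x, y, fragment_depth):
    """Soft shadow term over a 2x2 footprint using a single fetch4 call
    instead of four point samples. Returns the lit fraction (0.0 to 1.0)."""
    depths = fetch4(shadow_map, x, y)
    lit = sum(1 for d in depths if fragment_depth <= d)
    return lit / 4.0


# Hypothetical 3x3 shadow map holding one depth value per texel.
shadow_map = [
    [0.2, 0.9, 0.9],
    [0.2, 0.9, 0.9],
    [0.2, 0.2, 0.9],
]

print(pcf_shadow(shadow_map, 0, 0, 0.5))
```

Without a Fetch4-style path, `pcf_shadow` would need four separate `point_sample` calls to gather the same footprint, which is where the potential gain for soft shadowing comes from.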

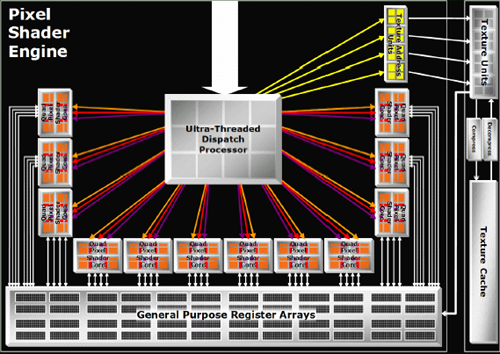

That's really the big news in feature changes for this part. The actual meat of the R580 comes in something Tim Allen could get behind with a nice series of manly grunts: More power. More power in the form of a 384 million transistor 90nm chip that can push 12 quads (48 pixels) worth of data around at a blisteringly fast 650MHz. Why build something different when you can just triple the hardware?
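As a rough back-of-the-envelope check on those figures, the short sketch below works out the pixel count and a peak instruction rate. The quad count and clock speed come from the text; the assumption that each pixel processor issues one shader instruction per clock is ours and is only meant to give a sense of scale.

```python
QUADS = 12             # from the article
PIXELS_PER_QUAD = 4    # a quad is a 2x2 block of pixels
CORE_CLOCK_MHZ = 650   # from the article

pixels_in_flight = QUADS * PIXELS_PER_QUAD

# Assumption: one shader instruction per pixel processor per clock.
peak_ginstr = pixels_in_flight * CORE_CLOCK_MHZ / 1000  # billions per second

print(pixels_in_flight, peak_ginstr)
```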

To be fair, it's not a straight tripling of everything, and it works out to look more like 4 X1600 parts than 3 X1800 parts. The proportions match what we see in the current midrange part: all you need for efficient processing of current games is a three to one ratio of pixel pipelines to render backends or texture units. When the X1000 series initially launched, we did look at the X1800 as a part that had as much crammed into it as possible, while the X1600 was a little more balanced. Focusing on pixel horsepower makes more efficient use of texture and render units when processing complex and interesting shader programs. If we see more math going on in a shader program than texture loads, we don't need enough hardware to load a texture every single clock cycle for every pixel when we can queue them up and aggregate requests in order to keep available resources busy more consistently. Since the hardware already has to hide texture load latency (even going to local video memory isn't instantaneous yet), the machinery for handling this situation is already in place.
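A toy throughput model helps show why the three to one ratio pays off only for math-heavy shaders. The sketch below assumes math and texture work overlap perfectly (latency fully hidden), so whichever unit type is busier sets the pace. The unit counts stand in for an X1800-like layout (16 pixel processors, 16 texture units) and an X1900-like layout (48 and 16); the per-pixel instruction mixes and the `cycles_for_batch` helper are hypothetical.

```python
def cycles_for_batch(pixels, alu_per_pixel, tex_per_pixel, alu_units, tex_units):
    """Toy steady-state model: ALU and texture work overlap perfectly,
    so the slower of the two unit types determines the total cycle count."""
    alu_cycles = pixels * alu_per_pixel / alu_units
    tex_cycles = pixels * tex_per_pixel / tex_units
    return max(alu_cycles, tex_cycles)


# (pixel processors, texture units); stand-ins for the two layouts.
ONE_TO_ONE = (16, 16)
THREE_TO_ONE = (48, 16)

# Hypothetical shaders: (math instructions, texture loads) per pixel.
MATH_HEAVY = (12, 4)
TEXTURE_HEAVY = (4, 4)

for name, (alu, tex) in (("math-heavy", MATH_HEAVY), ("texture-heavy", TEXTURE_HEAVY)):
    base = cycles_for_batch(48, alu, tex, *ONE_TO_ONE)
    wide = cycles_for_batch(48, alu, tex, *THREE_TO_ONE)
    print(name, base, wide)
```

In this model the math-heavy shader finishes three times faster on the wider layout with the texture units kept fully busy, while the texture-heavy shader sees no change because the 16 texture units are the bottleneck in both cases.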

Other than keeping the number of texture and render units the same as the X1800 (giving the X1900 the same ratio of math to texture/fill rate power as the X1600), there isn't much else to say about the new design. Yes, they increased the number of registers in proportion to the increase in pixel power. Yes, they increased the width of the dispatch unit to compensate for the added load. Unfortunately, ATI declined to let us post the HDL code for their shader pipeline, citing some ridiculous notion that their intellectual property has value. But we can forgive them for that.

This handy comparison page will have to do for now.

120 Comments

blahoink01 - Wednesday, January 25, 2006 - link

Considering the average framerate on a 6800 ultra at 1600x1200 is a little above 50 fps without AA, I'd say this is a perfectly relevant app to benchmark. I want to know what will run this game at 4 or 6 AA with 8 AF at 1600x1200 at 60+ fps. If you think WOW shouldn't be benchmarked, why use Far Cry, Quake 4 or Day of Defeat? At the very least WOW has a much wider impact as far as customers go. I doubt the total sales for all three games listed above can equal the current number of WOW subscribers.

And your $3000 monitor comment is completely ridiculous. It isn't hard to get a 24 inch wide screen for 800 to 900 bucks. Also, finding a good CRT that can display greater than 1600x1200 isn't hard and that will run you $400 or so.

DerekWilson - Tuesday, January 24, 2006 - link

we have looked at world of warcraft in the past, and it is possible we may explore it again in the future.

Phiro - Tuesday, January 24, 2006 - link

"The launch of the X1900 series no only puts ATI back on top, "

Should say:

"The launch of the X1900 series not only puts ATI back on top, "

GTMan - Tuesday, January 24, 2006 - link

That's how Scotty would say it. Beam me up...

DerekWilson - Tuesday, January 24, 2006 - link

thanks, fixed

DrDisconnect - Tuesday, January 24, 2006 - link

It's amusing how the years have changed everyone's perception as to how much is a reasonable price for a component. Hard drives, memory, monitors and even CPUs have become so cheap many have lost the perspective of what being on the leading edge costs. I paid 750$ for a 100 MB drive for my Amiga, 500$ for a 4x CD-ROM and remember spending 500$ on a 720 X 400 Epson colour inkjet. (Yeah I'm in my 50's) As long as games continue to challenge the capabilities of video cards and the drive to increase performance continues, the top end will be expensive. Unlike other hardware (printers, memory, hard drives) there are still performance improvements to be made that the user will perceive. If someday a card can render so fast that all games play like reality, then video cards will become like hard drives are now.

finbarqs - Tuesday, January 24, 2006 - link

Everyone gets this wrong! It uses 16 PIXEL-PIPELINES with 48 PIXEL SHADER PROCESSORS in it! the pipelines are STILL THE SAME as the X1800XT! 16!!!!!!!!!! oh yeah, if you're wondering, in 3DMark 2005, it reached 11,100 on just a Single X1900XTX...

DerekWilson - Tuesday, January 24, 2006 - link

semantics -- we are saying the same things with different words. fill rate as the main focus of graphics performance is long dead. doing as much as possible at a time to as many pixels as possible at a time is the most important thing moving forward. Sure, both the 1900xt and 1800xt will run glquake at the same speed, but the idea of the pixel (fragment) pipeline is tied more closely to lighting, texturing and coloring than to rasterization.

actually this would all be less ambiguous if opengl were more popular and we had always called pixel shaders fragment shaders ... but that's a whole other issue.

DragonReborn - Tuesday, January 24, 2006 - link

I'd love to see how the noise output compares to the 7800 series...

slatr - Tuesday, January 24, 2006 - link

How about some Lock On Modern Air Combat tests? I know not everyone plays it, but it would be nice to have you guys run your tests with it. Especially when we are shopping for $500-plus video cards.