SUN’s UltraSparc T1 - the Next Generation Server CPUs

by Johan De Gelas on December 29, 2005 10:03 AM EST- Posted in

- CPUs

Introduction

SUN's Ultrasparc T1, formerly known as Niagara, is much more than just a new UltraSparc. It is the harbinger of a new generation of CPUs, which focus almost solely on Thread Level Parallelism. No less than 32 independent parts of a different program (threads) can be "in flight" on the chip. It is SUN's first implementation of their Throughput computing philosophy, and compared to what we are used to in the AMD/Intel world, it is a pretty extreme architecture that focuses on network and server performance.

Stubborn Server applications

The basic idea behind the UltraSparc T1 is that most modern superscalar Out-Of-Order CPUs may be excellent for games, digital content creation and scientific calculations, but they are not a good match for commercial server loads.

These complex CPUs can decode up to 3 (Opteron) to 8 (Power 5) instructions in parallel, put in a buffer and try to issue them across 9 or more units. In theory, these CPUs can decode, issue, execute and retire up to 3 (Opteron) to 5 (IBM Power) instructions per clock cycle. They have huge buffers (up to 200 instructions) to keep many instructions in flight.

Server workloads, however, cannot make good use of all this parallelism for several reasons. The main reason is that commercial server loads move a lot of data around and perform relatively little calculation on that data. Moving a lot of data around means that you may need a lot of accesses to the memory, which results in many cycles wasted while the CPU has to wait for the data to arrive. As many different users query different parts of the database, caching cannot be as efficient (low locality of reference). In the past years, memory latency has become worse as memory speed increased a lot slower than the speed of the CPU. Memory latency is even worse on MP (Multi-Processor) systems, and has risen from a few tens of CPU cycles to 200-400 clock cycles. The second reason is that many of the calculations performed on that data involve data dependent (read: hard to predict) branches, which makes it even harder to do a lot in parallel.

You might counter these two problems by eliminating the branches through predication and incorporate very large caches. That is what the Itanium family does, but even the mighty Itanium is not capable of running those server loads at high speeds despite predication and gigantic caches. Below, you can see Intel's own numbers for CPU utilization on the 3 different workloads.

The applications that can be found inside Spec Integer benchmark are still rather compute-intensive compared to server applications. Compression, FPGA Circuit Placement and Routing, Compiling and interpreting, and computer visualization are representatives of very CPU intensive integer loads. On average, the best desktop CPUs such as the Athlon 64 or Intel Dothan are capable of sustaining 0.8 to 1 instructions per clock cycle in this benchmark, while the Pentium 4 is around 0.5-0.7 IPC. Itanium is capable of a 1.3-1.5 IPC. That may sound like very low numbers, but let us compare SpecInt with typical server loads. In the table below, you find how the 4-way superscalar USIIIi does on the various benchmarks.

Rather than focus on the absolute numbers, it is more important to note that web applications have 3 times less IPC than CPU intensive integer apps. OLTP databases (TPC-C) do even worse: the CPU sustains on average 0.2 instructions per clock pulse, or 4.5 less than SpecInt. These numbers are no different for the Opteron or Xeon. So despite Out of Order execution, nifty branch prediction schemes and big caches, commercial server loads utilize a very meagre 10 to 15% of the potential of modern CPUs.

One possible solution is to focus on clock speed instead of trying to process as many instructions in parallel (ILP, instruction level parallelism). The long pipelines of such CPUs make the branch prediction problem worse, and the power consumption goes up exponentially as we discussed in a previous article about dynamic power and power leakage.

SUN's Ultrasparc T1, formerly known as Niagara, is much more than just a new UltraSparc. It is the harbinger of a new generation of CPUs, which focus almost solely on Thread Level Parallelism. No less than 32 independent parts of a different program (threads) can be "in flight" on the chip. It is SUN's first implementation of their Throughput computing philosophy, and compared to what we are used to in the AMD/Intel world, it is a pretty extreme architecture that focuses on network and server performance.

Fig 1. The 2U SUN T2000

Stubborn Server applications

The basic idea behind the UltraSparc T1 is that most modern superscalar Out-Of-Order CPUs may be excellent for games, digital content creation and scientific calculations, but they are not a good match for commercial server loads.

These complex CPUs can decode up to 3 (Opteron) to 8 (Power 5) instructions in parallel, put in a buffer and try to issue them across 9 or more units. In theory, these CPUs can decode, issue, execute and retire up to 3 (Opteron) to 5 (IBM Power) instructions per clock cycle. They have huge buffers (up to 200 instructions) to keep many instructions in flight.

Server workloads, however, cannot make good use of all this parallelism for several reasons. The main reason is that commercial server loads move a lot of data around and perform relatively little calculation on that data. Moving a lot of data around means that you may need a lot of accesses to the memory, which results in many cycles wasted while the CPU has to wait for the data to arrive. As many different users query different parts of the database, caching cannot be as efficient (low locality of reference). In the past years, memory latency has become worse as memory speed increased a lot slower than the speed of the CPU. Memory latency is even worse on MP (Multi-Processor) systems, and has risen from a few tens of CPU cycles to 200-400 clock cycles. The second reason is that many of the calculations performed on that data involve data dependent (read: hard to predict) branches, which makes it even harder to do a lot in parallel.

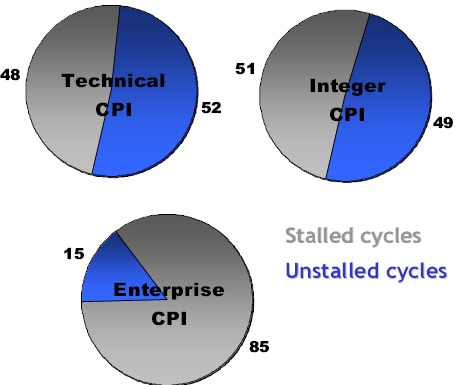

You might counter these two problems by eliminating the branches through predication and incorporate very large caches. That is what the Itanium family does, but even the mighty Itanium is not capable of running those server loads at high speeds despite predication and gigantic caches. Below, you can see Intel's own numbers for CPU utilization on the 3 different workloads.

Fig 2: Intel reporting the percentage of stalled cycles of different applications on the Itanium 2 family. Source:Intel.

The applications that can be found inside Spec Integer benchmark are still rather compute-intensive compared to server applications. Compression, FPGA Circuit Placement and Routing, Compiling and interpreting, and computer visualization are representatives of very CPU intensive integer loads. On average, the best desktop CPUs such as the Athlon 64 or Intel Dothan are capable of sustaining 0.8 to 1 instructions per clock cycle in this benchmark, while the Pentium 4 is around 0.5-0.7 IPC. Itanium is capable of a 1.3-1.5 IPC. That may sound like very low numbers, but let us compare SpecInt with typical server loads. In the table below, you find how the 4-way superscalar USIIIi does on the various benchmarks.

| Benchmark | IPC |

| SPECint | 0.9 |

| SPECjbb | 0.5 |

| SPECweb | 0.3 |

| TPC-C | 0.2 |

Rather than focus on the absolute numbers, it is more important to note that web applications have 3 times less IPC than CPU intensive integer apps. OLTP databases (TPC-C) do even worse: the CPU sustains on average 0.2 instructions per clock pulse, or 4.5 less than SpecInt. These numbers are no different for the Opteron or Xeon. So despite Out of Order execution, nifty branch prediction schemes and big caches, commercial server loads utilize a very meagre 10 to 15% of the potential of modern CPUs.

One possible solution is to focus on clock speed instead of trying to process as many instructions in parallel (ILP, instruction level parallelism). The long pipelines of such CPUs make the branch prediction problem worse, and the power consumption goes up exponentially as we discussed in a previous article about dynamic power and power leakage.

49 Comments

View All Comments

Betwon - Thursday, December 29, 2005 - link

Why? Really?It shows that the performance of FP apps is very very poor!!!

We can't believe it.

It is terrible for many FP apps.

Now, we know that the new CPU is only for the integer/32-thread-parallel-well apps.

JarredWalton - Friday, December 30, 2005 - link

How many FP instructions do you think a high-end web server runs? Try to think outside the box for a minute, rather than comparing it to HPC-oriented chips. Itanium wastes more than 80% of it's potential when running many database loads, and it does better than some of the other alternatives. Spending lots of die space on OOO logic and long pipelines isn't always the best solution, especially if you can guarantee that most code will have many threads. Quit thinking Half-Life and other games for a minute and try to shift to the big iron server world.Betwon - Friday, December 30, 2005 - link

Only one FP unit? not less than 40 cycles latency?If it is true:

The new CPU will be slower than P3@450MHz in the area of FP apps.

Brian23 - Friday, December 30, 2005 - link

who cares. That's not what it's designed to do. The only reason that it has the floating point core is for the rare occation when a FP op is needed.Betwon - Friday, December 30, 2005 - link

It means that this new CPU does not fit for the FP apps. Maybe a old CPU(10 years old) can beat it.Now, we know that the apps-area of this new CPU is very very spec...

It is too difficult to find apps(2-thread-paralle-well or more) for P4, how to find the apps(32-thread-paralle-well) easily?

thesix - Friday, December 30, 2005 - link

Betwon,You seem to be confused with the concept of multi-threading v.s. multi-tasking.

You do NOT need to find an app that runs 32 parallel threads in one process.

You can simple run 32 _instances_ of that app, for example,

or, run 32 different apps even if everyone of them is single-threaded.

A typical server environment is just like that.

When we talk about Chip Multi-Threading (CMT), it's the _hardware_ thread, which is a totally different concept than software thread. Once hardward thread represents the capability of running one computing task, it does care where this task comes from the same app/process or not.

A perfect example is the Apache webserver, IIRC, at least in version 1.x.y (which is still the most popular version), the apache http server process is single-threaded. A new process is forked for each (or a group of) new http request. The more hardware threads you have, the more requests you can handle in parallel. Of course, the faster each hardward thread (or core, or cpu) is, the more requests it can handle in a given amount of time, but not in parallel.

It is also true that _most_ database out there doesn't use _any_ floatpoint computation.

So, if you think about it, the market for T1 type of CPU/server is not a small one.

The bottom line is, T1 excels at througput/Watt and througput/chip.

It's a well kown fact that it sucks at single-task or floatpoint computation.

Betwon - Friday, December 30, 2005 - link

NO!You seem to be confused with the concept of SMT v.s. CMT.

It is very low efficient, if T1 only use CMT but not use SMT.

T1 have no branch prediction and one_inst_issue/core, very very poor FP performacne.

The only explain about how to improve the efficiency(very poor) is to use SMT to hide the latency(by branch miss/cache miss ect.)

But it has only 8KB L1(which will be used by 4 threads), the cache miss will increase. It is possible to become worst.

thesix - Friday, December 30, 2005 - link

Explain to me the conceptual difference between SMT and CMT?All you have said is the (component) _implementation_ difference between T1 and POWER in achieving hardware threading.

Since you appear to know this topic quite well, why the ignorant comment like this:

"It is too difficult to find apps(2-thread-paralle-well or more) for P4, how to find the apps(32-thread-paralle-well) easily?"

and kept screaming about the lack of floatpoint performance?

I simply don't understand why you're so upset.

Betwon - Friday, December 30, 2005 - link

My english has some problem.I think that T1 use both CMT and SMT.

SMT -- one core with four threads

CMT -- one CPU with eight cores

If without SMT, cores of T1 will be very poor efficient (because of the stall's latency caused by branch miss/cache miss).

The very very poor FP performance of T1 is the truth.

We have to remind ourselves that it is only a integer CPU. It's FP performance is too terrible.

fitten - Sunday, January 1, 2006 - link

There is no "reminding" anyone of the poor FPU performance. The thing was never designed to be strong in FPU (quite obviously). It has "enough" FPU so that it doesn't have to do software emulation and that's it. So... going on and on about FPU performance is a useless argument here. Sun (nor anyone talking about the T1s) has ever said that it would be good at FPU perforamnce because it wasn't designed to be.This CPU was designed for servers. Servers typically have high cache miss rates anyway because of a number of things (streaming any kind of I/O doesn't have much data locality advantages). Server processes also typically have lots of I/O stalls. When a context stalls, each core has multiple other contexts to chose from in order to keep running.

So, I think the points you are trying to stress are quite obvious from the design of the CPU and the types of loads it was designed to handle. Yes, poor FPU performance obvious from having very limited (and slow) FPU resources. Yes, if you aren't running lots of threads the machine is inefficient because the thing is designed to take advantage of server type threads where there will be lots of I/O stalling and if there is nothing else to run while waiting on the I/O requests to finish, it sits idle (much like any other machine). Yes, in-order execution and the lack of branch prediction will not mask any stalls the instruction stream will generate (which is OK because the design of the CPU actually counts on these stalls to happen so that lots of nice SMT can happen).

It sounds like you are in violent agreement with everyone :)