Gigabyte's i-RAM: Affordable Solid State Storage

by Anand Lal Shimpi on July 25, 2005 3:50 PM EST - Posted in Storage

We All Scream for i-RAM

Gigabyte sent us the first production version of their i-RAM card, marked as revision 1.0 on the PCB. There were some obvious changes between the i-RAM that we received and what we saw at Computex.

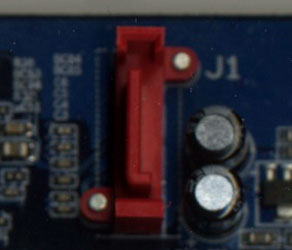

First, the battery pack is now mounted in a rigid holder on the PCB. The contacts are on the battery itself, so there's no external wire to deliver power to the card.

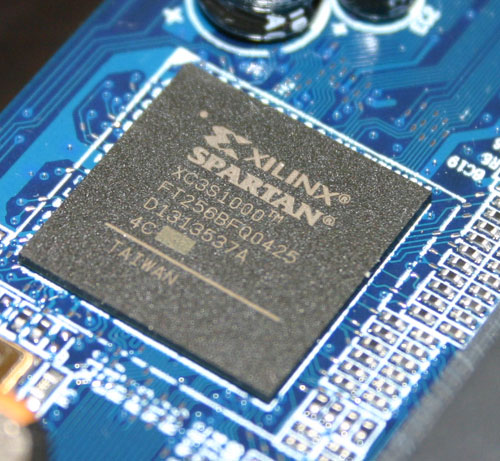

The Xilinx FPGA has three primary functions: it acts as a 64-bit DDR memory controller, a SATA controller and a bridge chip between the memory and SATA controllers. The chip takes requests over the SATA bus, translates them and then sends them off to its DDR controller to write/read the data to/from memory.
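To make the bridging role concrete, here is a minimal Python sketch of a RAM-backed block device: sector reads and writes addressed by logical block address (LBA) are translated into offsets in a flat memory array. It only illustrates the idea and is not Gigabyte's FPGA logic; the class name `RamBackedDisk`, its methods and the 512-byte sector size are our own assumptions.

```python
SECTOR_SIZE = 512  # bytes per logical block (assumed; the usual ATA sector size)


class RamBackedDisk:
    """Toy model of a disk whose sectors live in memory (illustration only)."""

    def __init__(self, capacity_bytes: int) -> None:
        if capacity_bytes <= 0 or capacity_bytes % SECTOR_SIZE:
            raise ValueError("capacity must be a positive multiple of the sector size")
        self._memory = bytearray(capacity_bytes)
        self.sector_count = capacity_bytes // SECTOR_SIZE

    def _span(self, lba: int, count: int) -> tuple[int, int]:
        # Translate an (LBA, sector count) request into a byte range in memory.
        if lba < 0 or count < 0 or lba + count > self.sector_count:
            raise IndexError("request falls outside the device")
        start = lba * SECTOR_SIZE
        return start, start + count * SECTOR_SIZE

    def write(self, lba: int, data: bytes) -> None:
        # Writes must cover whole sectors, as on a real block device.
        if len(data) % SECTOR_SIZE:
            raise ValueError("data must be a whole number of sectors")
        start, end = self._span(lba, len(data) // SECTOR_SIZE)
        self._memory[start:end] = data

    def read(self, lba: int, count: int) -> bytes:
        start, end = self._span(lba, count)
        return bytes(self._memory[start:end])


if __name__ == "__main__":
    disk = RamBackedDisk(8 * SECTOR_SIZE)
    disk.write(2, b"\x5a" * SECTOR_SIZE)
    print(disk.read(2, 1)[:4])
```

Note that there is no seek step anywhere in the model: every sector is reached with the same simple address calculation, which is the property that separates this kind of device from a mechanical disk.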

Gigabyte has told us that the initial production run of the i-RAM will only be a quantity of 1000 cards, available in the month of August, at a street price of around $150. We would expect that price to drop over time, and it's definitely a lot higher than what we were told at Computex ($50).

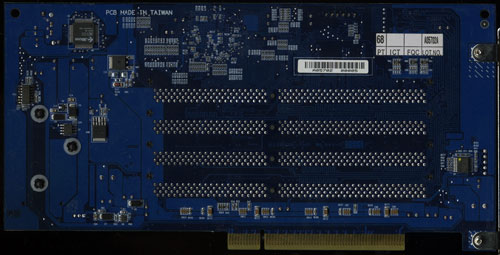

The i-RAM is outfitted with four 184-pin DIMM slots that will accept any DDR DIMM. The memory controller in the Xilinx FPGA operates at 100MHz (DDR200) and can actually support up to 8GB of memory. However, Gigabyte says that the i-RAM card itself only supports 4GB of DDR SDRAM. We didn't have any 2GB unbuffered DIMMs to try in the card to test its true limit, but Gigabyte tells us that it is 4GB.
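As a rough sanity check on those numbers, the sketch below works out the theoretical peak bandwidth of a 64-bit DDR interface clocked at 100MHz and sets it next to the payload rate of a SATA link. The 1.5Gb/s line rate is an assumption (first-generation SATA with 8b/10b encoding) rather than something stated above, the function names are ours, and real-world throughput will be lower than either theoretical figure.

```python
def ddr_peak_mb_per_s(clock_mhz: float, bus_width_bits: int) -> float:
    """Theoretical peak of a DDR interface in MB/s (1 MB = 10**6 bytes)."""
    mega_transfers_per_s = clock_mhz * 2  # DDR moves data on both clock edges
    return mega_transfers_per_s * bus_width_bits / 8


def sata_payload_mb_per_s(line_rate_gbps: float) -> float:
    """Payload rate of a SATA link in MB/s, assuming 8b/10b encoding."""
    # 8b/10b encoding spends 10 line bits on every byte of data.
    return line_rate_gbps * 1000 / 10


if __name__ == "__main__":
    memory_peak = ddr_peak_mb_per_s(clock_mhz=100, bus_width_bits=64)
    link_peak = sata_payload_mb_per_s(line_rate_gbps=1.5)  # assumed SATA generation
    print(f"DDR200, 64-bit: {memory_peak:.0f} MB/s")
    print(f"SATA link:      {link_peak:.0f} MB/s")
```

Under these assumptions the memory side has roughly an order of magnitude more bandwidth than the link it sits behind, so the DIMMs themselves are unlikely to be the limiting factor for sequential transfers.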

The Xilinx FPGA also won't support ECC memory, although we have mentioned to Gigabyte that a number of users have expressed interest in having ECC support in order to ensure greater data reliability.

Although the i-RAM plugs into a conventional 3.3V 32-bit PCI slot, it doesn't use the PCI connector for anything other than power. All data is transferred via the Xilinx chip and over the SATA connector directly to your motherboard's SATA controller, just like any regular SATA hard drive.

With SATA as the only data interface, Gigabyte made the i-RAM infinitely more useful than software-based RAM drives because, to the OS and the rest of your system, the i-RAM appears to be no different than a regular hard drive. You can install an OS, applications or games on it, you can boot from it and you can interact with it just like you would any other hard drive. The difference is that it is going to be a lot faster and also a lot smaller than a conventional hard drive.

The size limitations are pretty obvious, but the performance benefits really come from the nature of DRAM as a storage medium vs. magnetic hard disks. We have long known that modern day hard disks can attain fairly high sequential transfer rates of upwards of 60MB/s. However, as soon as the data stops being sequential and is more random in nature, performance can drop to as little as 1MB/s. The reason for the significant drop in performance is the simple fact that repositioning the read/write heads on a hard disk takes time as does searching for the correct location on a platter to position them. The mechanical elements of hard disks are what make them slow, and it is exactly those limitations that are removed with the i-RAM. Access time goes from milliseconds (1 x 10^-3) down to nanoseconds (1 x 10^-9), and transfer rate doesn't vary, so it should be more consistent.
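The arithmetic behind that drop can be sketched with a simple model: each random request pays a fixed access time and then transfers its data at the sequential rate. The 4KB request size, the 8ms hard disk access time and the 100ns DRAM access time below are illustrative assumptions, not measurements, and the model ignores bus and controller latency.

```python
def effective_mb_per_s(request_bytes: int,
                       access_time_s: float,
                       sequential_mb_per_s: float) -> float:
    """Throughput when every request pays a fixed access time first."""
    transfer_time_s = request_bytes / (sequential_mb_per_s * 1e6)
    return request_bytes / (access_time_s + transfer_time_s) / 1e6


if __name__ == "__main__":
    REQUEST_BYTES = 4096        # assumed size of each random request
    SEQUENTIAL_MB_PER_S = 60.0  # sequential rate quoted in the text

    hard_disk = effective_mb_per_s(REQUEST_BYTES, 8e-3, SEQUENTIAL_MB_PER_S)
    dram = effective_mb_per_s(REQUEST_BYTES, 100e-9, SEQUENTIAL_MB_PER_S)
    print(f"hard disk, 8 ms access: {hard_disk:.2f} MB/s")
    print(f"DRAM, 100 ns access:    {dram:.2f} MB/s")
```

With millisecond access times the fixed cost dominates and small random requests land well under 1MB/s in this model, while with nanosecond access times the result is practically indistinguishable from the sequential rate.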

Since it acts as a regular hard drive, theoretically, you can also arrange a couple of the i-RAM cards together in RAID if you have a SATA RAID controller. The biggest benefit to a pair of i-RAM cards in RAID 0 isn't necessarily performance, but now you can get 2x the capacity of a single card. We are working on getting another i-RAM card in house to perform some RAID 0 tests. However, Gigabyte has informed us that presently, there are stability issues with running two i-RAM cards in RAID 0, so we wouldn't recommend pursuing that avenue until we know for sure that all bugs are worked out.
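For readers unfamiliar with how RAID 0 doubles capacity, the following sketch shows the usual striping arithmetic: logical blocks are dealt out to the member drives in fixed-size stripes, so the array's capacity is the sum of its members. The function names and the stripe size are hypothetical, and this is a generic illustration rather than the behavior of any particular SATA RAID controller.

```python
def raid0_locate(logical_block: int, stripe_blocks: int, disk_count: int) -> tuple[int, int]:
    """Map a logical block to (member disk index, block on that disk)."""
    stripe_index, offset = divmod(logical_block, stripe_blocks)
    disk = stripe_index % disk_count                      # stripes rotate across disks
    disk_block = (stripe_index // disk_count) * stripe_blocks + offset
    return disk, disk_block


def raid0_capacity_gb(member_capacity_gb: float, disk_count: int) -> float:
    """Usable capacity of a RAID 0 array of equally sized members."""
    return member_capacity_gb * disk_count


if __name__ == "__main__":
    # Two hypothetical 4GB cards, 4 blocks per stripe.
    for block in range(10):
        print(block, raid0_locate(block, stripe_blocks=4, disk_count=2))
    print(raid0_capacity_gb(4, 2), "GB usable")
```

Because RAID 0 keeps no redundant copy, losing the contents of either member loses the whole array.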

133 Comments

jconan - Wednesday, July 27, 2005

Of all the disk-intensive apps I can think of, isn't BitTorrent fairly disk intensive? Would the i-RAM make a good match for BitTorrent?

robmueller - Tuesday, July 26, 2005

I agree with the people who mention server uses for this product. There are already quite a few products like this around in the server space, but they are all VERY expensive. There's a comprehensive list here: http://www.storagesearch.com/ssd-buyers-guide.html

The one thing to note, most of these are flash based drives, which means they retain their data, but are actually quite slow transfer speed wise. When it comes to pure performance solutions (which are usually DRAM with battery and/or HD backup), there's only a couple of companies:

http://www.umem.com/Umem_NVRAM_Cards.html

http://www.superssd.com/default.asp

http://www.curtisssd.com/products/

http://www.cenatek.com/product_rocketdrive.cfm

http://www.hyperossystems.co.uk/07042003/products....

http://www.taejin.co.kr/english/product_intro.html

We've been long time users of micro memory products, and in general they've been great. We place database journals, filesystem journals, and general server "hot" files on the device and get great performance out of it.

The biggest issue with most of these is price and support. Rocket Drive is Windows only (we have Linux servers). HyperDrive doesn't appear to be shipping yet (we ordered one and haven't heard anything). I've never even been able to get a sensible reply from Jetspeed. Curtis seems to be focussing on fibre channel (their SCSI interface drive is now quite old, only 80MB/s), which means you need to spend almost an extra $1000 on a controller alone. RamSan are incredibly expensive and FC only, but apparently have amazing performance as well. Umem does have a Linux driver, but Umem is no longer selling at retail; they only sell wholesale to big storage vendors that use them in their products.

So that basically left us really interested in iRAM as a potential long term replacement for Umem in new servers we buy. It's a pity that the apparent performance is a bit lacking. On the other hand, the biggest advantage of RAM based drives is the latency reduction. Basically you can write and have your data committed to "permanent" storage and move along with the next task straight away. This is the whole point of database/filesystem journals. It would be great to test the iRAM with real server scenarios that rely on this low latency ability. Rerunning the database tests with a combination of journal and full database on the drive would be really interesting.

http://www.anandtech.com/IT/showdoc.aspx?i=2447

Basically it seems that this is a really hard product to sell. There's definitely a market for it in the server space, but most of the people who realise that are big DB/file system users, and are usually willing to spend more to get an "enterprise" like product. It would be really nice if all those "middle" users with database/filesystem/email issues could be shown how to use one of these to significantly extend the life/performance of one of their servers...

Scarceas - Tuesday, July 26, 2005

I see this as a much easier way to run your OS in RAM (hell, I don't think there is a way to run XP on a RAM partition).

If you have 4GB of RAM, you can partition 3.5GB and run win9x in it. That leaves the max 512MB conventional RAM for 9x to work with. It takes a lot of work, but I think it is faster than this because you don't have the PCI bus constraint, and the RAM controller on a motherboard is probably flat-out superior.

It would be interesting to see a comparison...

Scarceas - Tuesday, July 26, 2005

Why did the 300MB file from the drive to itself take ~4 times as long as the 693MB file from the drive to itself? What am I missing?

Antiflash - Wednesday, July 27, 2005

It is a 300MB folder containing several files that could be located in different positions, which means more random access. The other is a single file; it is larger, but the data is read from adjacent positions on the disk. In the first case you also have to add the overhead of the OS's processing time when dealing with several files.

JarredWalton - Wednesday, July 27, 2005

Actually, you need to make it a bit more clear: it's the Firefox source code, which is likely thousands of small files. It's not just a few or many, but *TONS* of little files. Even though the access times of the i-RAM are much lower than that of a standard HDD, there is still latency associated with the SATA bus and other portions of the system, so it's not "instantaneous". Three times as fast is still good, and that's relative to the Raptor - something like a 7200 RPM drive would be even slower relative to the i-RAM. Still, best case scenario for heavy IO seems to suggest the current i-RAM is only about 3X faster than a good HDD setup. Good but not great.

- Tuesday, July 26, 2005

There's only one comment so far in this entire thread that really touches on where the i-Ram is truly going to succeed, and a few posters flirt with the notion in an offhanded manner.

The benefits of an i-Ram would really come out during I/O intensive operations, as in high volumes of reads and writes, without really being high data transfer volumes, which is the case for a lot of database operations. A lot of the tests performed in the article really had a focus of large volume data retrieval, and that's like using the haft of a katana to hammer in a nail.

Think about web bulletin boards like PHP-nuke, Slashcode, PHPBB, any active dynamic website that is constantly accessing a database to load user preferences, banner ads, static images. Forum posting, article retrieval, content searching, etc. An applicable consumer example would be putting your web browser's cache on the I-Ram, or your mail or news reader's data files, or dumping a copy of your entire documents folder to it, then using Windows' search function to dig through them all for all occurrences of "the". Throw a squid cache on it. Put your innoDB transaction log on it. Hell, for that matter, slot a handful of these and use them as innoDB raw partitions for your data.

The kinds of tests you need to perform to make an I-Ram shine would be high volumes of simultaneous searches across the entire volume, the kind of act that would make a regular disk drive grind to a screaming halt in a fit of schizophrenic head twitching. This isn't video editing, OS booting (with exceptions), game loading, or most of the scenarios commented on above. It's still a SATA drive. Your bulk data isn't going to transfer any faster, but you *can* find it quicker and open, update, and close your files faster. Leverage *those* strengths and stop thinking it's a RocketDrive.

Bensam123 - Tuesday, July 26, 2005

All my concerns on this product were pretty much addressed:

- SATA2
- 5.25" bay drive instead of PCI slot
- Using a 4-pin Molex connector or SATA power connector instead
- PCI-E instead of SATA (drivers are made every day)

A few comments I have on this product that weren't mentioned. Everyone talked about putting these into a RAID 0 array to improve size, but no one mentioned that it could very well double performance. I don't know what's causing the current bottlenecks with these cards besides the SATA interface, but that just doesn't seem right. Anand needs to run benchmarks like Sisoft File System Benchmark or HD Tach to narrow it down. Read/Write/Sequential and Random should all be almost instantaneous, only limited by the bandwidth of SATA and the bridge it is attached to. This card could very well be limited by the chipset they tried it on (southbridge/northbridge interconnect). It might be even faster on a chipset that lacks a southbridge and only has a northbridge, such as the nForce4.

Given the nature of this product I don't know why motherboard manufacturers don't just add this right onto a board or make a special adapter for it that you can buy (with a better interface). I could see a lot more use in something like this if the DIMMs were attached right to my board and straight to my northbridge.

What Gigabyte should've done (all companies with a bright idea should do this) is just give this to review sites such as Anand and others just to see what feedback emerges before they try to market something like this. I guess Gigabyte is sort of doing this by only producing 1,000, but that's still 1,000 more than they need to. If my guess is correct, the second revision of this product should follow quite shortly after this one hits the market.

As was mentioned the price is a killer (I would rather get a SCSI320 controller and a 15,000 RPM Cheetah).

nullpointerus - Tuesday, July 26, 2005

The bandwidth, which could have really blown SATA drives out of the water in certain tasks, is obviously crippled by its attachment to SATA. Yet if i-RAM was running at full PCI Express speed, then I should think opening the specs for the memory controller would quickly lead to open source drivers. The storage is, after all, cheap DDR sticks.

Sure, these drivers might be written for Linux or BSD instead of Windows, but surely porting GPL'd drivers to Windows would be easy for a company which can open the specs? nVidia and ATI have proprietary drivers because they claim it would be suicide for them to open up their proprietary chip interfaces. But i-RAM?

nullpointerus - Tuesday, July 26, 2005

I thought that compilation would make a good application for this. Source code, intermediate, and output files take up less than 4 GB. The large number of small text files involved should allow the i-RAM's random access performance advantage to really shine. Add to that the fact that long compiles can take several hours - or days if you are building Gentoo, for example - and the difference should be quite noticeable. Yet there don't seem to be any compiler tests in this article. Maybe they simply aren't I/O limited?