No more mysteries: Apple's G5 versus x86, Mac OS X versus Linux

by Johan De Gelas on June 3, 2005 7:48 AM EST- Posted in

- Mac

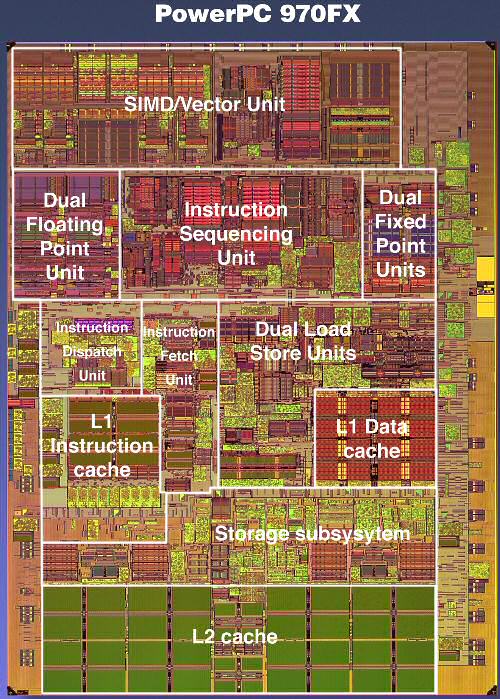

IBM PowerPC 970FX: Superscalar monster

Meet the G5 processor, which is in fact IBM's PowerPC 970FX processor. The RISC ISA, which is quite complex and can hardly be called "Reduced" (The R of RISC), provides 32 architectural registers. Architectural registers are the registers that are visible to the programmer, mostly the compiler programmer. These are the registers that can be used to program the calculations in the binary (and assembler) code.

The 970FX is deeply pipelined, quite a bit deeper than the Athlon 64 or Opteron. While the Opteron has a 12 stage pipeline for integer calculations, the 970FX goes deeper and ends up with 16 stages. Floating point is handled through 21 stages, and the Opteron only needs 17. 21 stages might make you think that the 970FX is close to a Pentium 4 Northwood, but you should remember that the Pentium 4 also had 8 stages in front of the trace cache. The 20 stages were counted from the trace cache. So, the Pentium 4 has to do less work in those 20 stages than what the 970FX performs in those 16 or 21 stages. When it comes to branch prediction penalties, the 970FX penalty will be closer to the Pentium 4 (Northwood). But when it comes to frequency headroom, the 970FX should do - in theory - better than the Opteron, but does not come close to the "old" Pentium 4.

The 970FX works out of order and up to 200 instructions can be kept in flight, compared to 126 in the Pentium 4. The rate at which instructions are fetched will not limit the issue rate either. The PowerPC 970 FX fetches up to 8 instructions per cycle from the L1 and can decode at the same rate of 8 instructions per cycle. So, is the 970FX the ultimate out-of-order CPU?

While 200 instructions in flight are impressive, there is a catch. If there was no limitation except die size, CPUs would probably keep thousands of instructions in flight. However, the scheduler has to be able to pick out independent instructions (instructions that do not rely on the outcome of a previous one) out of those buffers. And searching and analysing the buffers takes time, and time is very limited at clock speeds of 2.5 GHz and more. Although it is true that the bigger the buffers, the better, the number of instructions that can be tracked and analysed per clock cycle is very limited. The buffer in front of the execution units is about 100 instructions big, still respectable compared to the Athlon 64's reorder buffer of 72 instructions, divided into 24 groups of 3 instructions.

The same grouping also happens on the 970FX or G5. But the grouping is a little coarser here, with 5 instructions in one group. This grouping makes reordering and tracking a little easier than when the scheduler would have to deal with 100 separate instructions.

The grouping is, at the same time, one of the biggest disadvantages. Yes, the Itanium also works with groups, but there the compilers should help the CPU with getting the slots filled. In the 970FX, the group must be assembled with pretty strict limitations, such as at one branch per group. Many other restrictions apply, but that is outside the scope of this article. Suffice it to say that it happens quite a lot that a few of the operations in the group consist of NOOP, no-operation, or useless "do nothing" instructions. Or that a group cannot be issued because some of the resources that one member of the group needs is not available ( registers, execution slots). You could say that the whole grouping thing makes the Superscalar monster less flexible.

Branch prediction is done by two different methods each with a gigantic 16K entry history table. A third "selector" keeps another 16K history to see which of the two methods has done the best job so far. Branch prediction seems to be a prime concern for the IBM designers.

Memory Subsystem

The caches are relatively small compared to the x86 competition. A 64 KB I-cache and 32 KB D-cache is relatively "normal", while the 512 KB L2-cache is a little small by today's standards. But, no complaints here. A real complaint can be lodged against the latency to the memory. Apple's own webpage talks about 135 ns access time to the RAM. Now, compare this to the 60 ns access time that the Opteron needs to access the RAM, and about 100-115 ns in the case of the Pentium 4 (with 875 chipset).A quick test with LM bench 2.04 confirms this:

| Host | OS | Mem read (MB/s) | Mem write (MB/s) | L2-cache latency (ns) | RAM Random Access (ns) |

| Xeon 3.06 GHz | Linux 2.4 | 1937 | 990 | 59.940 | 152.7 |

| G5 2.7 GHz | Darwin 8.1 | 2799 | 1575 | 49.190 | 303.4 |

| Xeon 3.6 GHz | Linux 2.6 | 3881 | 1669 | 78.380 | 153.4 |

| Opteron 850 | Linux 2.6 | 1920 | 1468 | 50.530 | 133.2 |

Memory latency is definitely a problem on the G5.

On the flipside of the coin is the excellent FSB bandwidth. The G5/Power PC 970FX 2.7 GHz has a 1.35 GHz FSB (Full Duplex), capable of sending 10.8 GB/s in each direction. Of course, the (half duplex) dual channel DDR400 bus can only use 6.4 GB/s at most. Still, all this bandwidth can be put to good use with up to 8 data prefetch streams.

116 Comments

View All Comments

Icehawk - Friday, June 3, 2005 - link

Interesting stuff. I'd like to see more data too. Mmm Solaris.Unfortunately the diagrams weren't labeled for the most part (in terms of "higher is better") making it difficult to determine the results.

And the whole not displaying on FF properly... come on.

NetMavrik - Friday, June 3, 2005 - link

You can say that again! NT shares a whole lot more than just similarites to VMS. There are entire structures that are copied straight from VMS. I think most people have forgotten or never knew what "NT" stood for anyway. Take VMS, increment each letter by one, and you get WNT! New Technology my a$$.Guspaz - Friday, June 3, 2005 - link

Good article. But I'd like to see it re-done with the optimal compiler per-platform, and I'd like to see PowerPC Linux used to confirm that OSX is the cause of the slow MySQL performance.melgross - Friday, June 3, 2005 - link

I was just thinking back about this and remembered something I've seenComputerworld has had articles over the past two years or so about companies who have gone to XServes. They are using them with Apache, SYbase or Oracle. I don't remember any complaints about performance.

Also Oracle itself went to XServes for its own datacenter. Do you think they would have done that if performance was bad? They even stated that the performance was very good.

Something here seems screwed up.

brownba - Friday, June 3, 2005 - link

johan, i always appreciate your articles.you've been /.'d !!!!

and anandtech is holding up well.

good job

bostrov - Friday, June 3, 2005 - link

Since so much effort went in to vector facilities and instruction sets ever since the P54 days, shouldn't "best effort" on each CPU be used (use the IBM compiler on G5 and the Intel compiler on x86) - by using gcc you're using an almost artifically bad compiler and there is no guarantee that gcc will provide equivilant optimizations for each platform anyway.I think it'd be very interesting to see an article with the very best available compilers on each platform running the benchmarks.

Incidently, intel C with the vector instruction sets disabled still does better.

JohanAnandtech - Friday, June 3, 2005 - link

bostrov: because the Intel compiler is superb at vectorizing code. I am testing x87 FPU and gcc, you are testing SSE-2 performance with the Intel compiler.JohanAnandtech - Friday, June 3, 2005 - link

minsctdp: A typo which happened during final proofread. All my original tables say 990 MB/s. Fixed now.bostrov - Friday, June 3, 2005 - link

My own results for flops 2.0: (compiled with Intel C 8.1, 3.2 Ghz Prescott with 160 Mhz - 5:4 ratio - FSB)flops20-c_prescott.exe

FLOPS C Program (Double Precision), V2.0 18 Dec 1992

Module Error RunTime MFLOPS

(usec)

1 1.7764e-013 0.0109 1288.7451

2 -1.4166e-013 0.0082 852.7242

3 8.1046e-015 0.0067 2531.7045

4 9.0483e-014 0.0052 2858.2062

5 -6.2061e-014 0.0140 2065.6650

6 3.3640e-014 0.0100 2906.2439

7 -5.7980e-012 0.0327 366.4559

8 3.7692e-014 0.0111 2700.8968

Iterations = 512000000

NullTime (usec) = 0.0000

MFLOPS(1) = 1088.7826

MFLOPS(2) = 854.7579

MFLOPS(3) = 1609.7508

MFLOPS(4) = 2753.5016

Why are the anandtech results so poor?

melgross - Friday, June 3, 2005 - link

I thought that GCC comes with Tiger. I have read Apple's own info, and it definitely mentions GCC 4. Perhaps that would help the vectorization process.Altivec is such an important part of the processor and the performance of the machine that I would like to see properly written code used to compare these machines.