ATI's Radeon X800 XL 512MB - A toe in the 512MB pool

by Anand Lal Shimpi on May 4, 2005 9:27 AM EST - Posted in GPUs

At the end of last year, ATI announced five new GPUs, three of which were supposed to be available within a week of the announcement; none of them actually were. One of those GPUs, the X800 XL, was of particular interest: it was ATI's first real answer to NVIDIA's GeForce 6800GT, and ATI said that it would be priced a full $100 less than NVIDIA's offering. Of course, that GPU didn't arrive on time either, and when it did, you had to pay a lot more than $299 to get it.

Fast forward to the present day and pretty much everything is fine. You can buy any of the GPUs that ATI announced at the end of last year at or below their suggested retail prices. But we have another GPU release on our hands, and given ATI's recent track record, we have no idea whether we're talking about a GPU that will be out later this month as promised or one that won't see the light of day for much longer.

Today, ATI is announcing their first 512MB graphics card - the Radeon X800 XL 512MB. Priced at $449, ATI's Radeon X800 XL 512MB is identical in every respect to the X800 XL, with the obvious exception of its on-board memory size. The X800 XL 512MB is outfitted with twice as many memory devices as the 256MB version, but ATI indicates that there's no drop in performance despite the increase in memory devices. The clock speeds of the X800 XL 512MB remain identical to the 256MB version, at 400MHz core and 980MHz memory. The 512MB version is also built on the same 0.11-micron R430 GPU as the 256MB version; in other words, the GPUs are identical - one is just connected to twice as much memory. Right now, the X800 XL 512MB is PCI Express only.

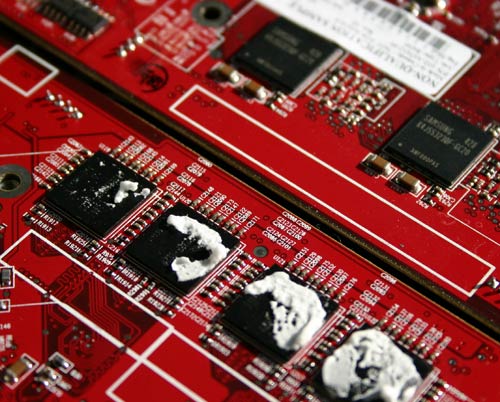

The board layout and design haven't changed with the move to 512MB. The power delivery circuitry is all the same. The difference is that now there are twice as many memory devices on the PCB.

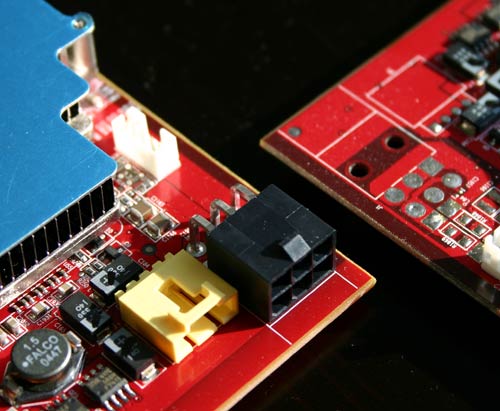

The two X800 XLs: 512MB (lower), 256MB (upper).

The X800 XL 512MB also now requires the 6-pin PCI Express power connector, something that the original 256MB board didn't need.

The X800 XL 512MB achieves its memory capacity by using twice the number of chips as the 256MB version.

This is where ATI's strategy doesn't seem to make much sense. They are taking a mid-range performance part, the X800 XL, and giving it more memory than any of their other GPUs, thus pricing it nearly on par with their highest-end X850 XT. More than anything, ATI is confusing their own users with this product. Those who are uninformed and have a nice stack of cash to blow on a new GPU are now faced with a dilemma: $449 for a 512MB X800 XL or $499 for a 256MB X850 XT? That is, if this part actually ships on time and is actually priced at $449 - both assumptions that we honestly can't make anymore given what we've seen with the past several ATI releases.

What's also particularly interesting about today's 512MB launch is that ATI won't be producing any 512MB X800 XL cards under the "built by ATI" name. You will only be able to get these cards through ATI's partners. According to ATI, the following manufacturers will bring the X800 XL 512MB to market sometime this month:

- Gigabyte

- TUL

- Sapphire

- HIS

- MSI

- ABIT

And as confirmation, here's a slide from ATI's presentation saying the exact same thing:

It is worth noting that NVIDIA has been shipping a 512MB card, the 512MB GeForce 6800 Ultra, for quite some time now. However, the card itself only seems to be available to system builders such as Alienware and Falcon Northwest. The 512MB 6800 Ultra is extremely expensive and appears to add around $800 to the cost of any system built by those who carry it; obviously, that is not in the same price range as ATI's X800 XL 512MB. Despite our curiosity, we could not get a card from NVIDIA in time for this review, although we'd be willing to bet that our findings here with the X800 XL 512MB would apply equally to NVIDIA's 512MB GeForce 6800 Ultra.

70 Comments


aliasfox - Wednesday, May 4, 2005

The x800xl is not the best card on the Mac side... the 6800 Ultra DDL and x800XT have been available for some time, both with DDL support for the 30" display.

Of course, I'm just waiting for someone to hook a massive SLI system up to a 30" and actually *play* a modern game at 2560x1600... that would surely be a sight to see...

Cuser - Wednesday, May 4, 2005

GED: I agree with you on the OpenGL thing. I thought nvidia was in the lead with OpenGL support. My old ATI would not play in OpenGL at all!

However, I disagree with your second post. If it were that simple, why do rendered games need so much RAM? I am pretty sure there are other things stored in video RAM when rendering the OS in 3D, including textures. I think the days of the VRAM being used as JUST a frame buffer in the OS are numbered.

Ged - Wednesday, May 4, 2005

"2) On a MACINTOSH with Tiger, extra VRAM is a very good thing for the future, considering how the Quartz 2D Extreme will work (utilizing the GPU for OS rendering, caching it in VRAM)"

You shouldn't need close to 512MB of VRAM for displaying OSX's 'Quartz 2D Extreme'.

Consider that Apple's largest display is 2560x1600 pixels (4096000 pixels total) x 24 bpp (I'm assuming 24bpp) = 98,304,000 bits for a frame (12,288,000 bytes for a frame).

Even if you had 20 full screen frames which you composited together for the full effect it would only take 245,760,000 bytes which is still within the 256MB on current generations of cards (using 32bpp it's still under 256MB at 19 complete frames).

I seriously doubt that anything Quartz would do would need that much VRAM. If Quartz does need that much VRAM, I think something is really, really wrong.

Someone please correct my math if I'm off, but something's wrong if you need 512MB to display 2D OS graphics.
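The frame buffer arithmetic above can be checked with a short sketch. It assumes uncompressed full-screen frames and reads "256MB" as 256 MiB (268,435,456 bytes); the function names are illustrative only and not from any graphics API. Under those assumptions the 24bpp figures hold (12,288,000 bytes per frame, 245,760,000 bytes for 20 frames), while at 32bpp the number of full frames that fit under 256 MiB comes out to 16, fewer than the 19 quoted.

```python
# Back-of-the-envelope frame buffer sizes for a 2560x1600 display.
WIDTH, HEIGHT = 2560, 1600
VRAM_256_MIB = 256 * 1024 * 1024  # assumption: "256MB" means 256 MiB


def frame_bytes(bits_per_pixel: int) -> int:
    """Bytes needed for one uncompressed full-screen frame."""
    return WIDTH * HEIGHT * bits_per_pixel // 8


def frames_that_fit(bits_per_pixel: int, vram_bytes: int = VRAM_256_MIB) -> int:
    """Whole full-screen frames that fit in the given amount of VRAM."""
    return vram_bytes // frame_bytes(bits_per_pixel)


if __name__ == "__main__":
    for bpp in (24, 32):
        print(f"{bpp}bpp: {frame_bytes(bpp):,} bytes per frame, "
              f"{frames_that_fit(bpp)} full frames fit in 256 MiB")
```

This only counts flat frame buffers; textures, window backing stores and other cached surfaces would come on top, which is the point raised elsewhere in the thread.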

Ged - Wednesday, May 4, 2005

"1) ATI is better at OpenGL than Nvidia"

Everything I have read and all the benchmarks I have seen are the opposite of this claim.

What am I missing?

racolvin - Wednesday, May 4, 2005

Anand:

Two things to remember about these cards:

1) ATI is better at OpenGL than Nvidia

2) On a MACINTOSH with Tiger, extra VRAM is a very good thing for the future, considering how the Quartz 2D Extreme will work (utilizing the GPU for OS rendering, caching it in VRAM)

Everything on the Mac is OpenGL, so ATI testing the waters with this part is not surprising when I think of it in the Mac-future context. The X800XL would still be the best card available for the Mac folks should it make it to that side of the fence ;)

R

StrangerGuy - Wednesday, May 4, 2005

Yet another pointless release from ATI, or Nvidia for that matter, with their respective 512MB cards.

BTW I saw an advertisement for a 512MB X300SE by ECS via a link from AT forums. Anyone have a 486 with 512MB yet?

Cuser - Wednesday, May 4, 2005

Bersl2: I sympathize with where you are coming from. However, there are 2 things that you are not considering.

First, Microsoft controls the operating system, so it would stand to reason they control (if not have a large influence on) the ways and methods the developers, programmers, and hardware alike interact with that system (and vice versa).

Secondly, the DirectX API is developed in collaboration with the graphics industry (or at least so I am led to believe).

I think the real reason to get upset by this is if Microsoft abuses this power (do they?).

Furthermore, I myself would like to see more titles using OpenGL, if only to promote diversity and not be dependent on only one API.

I am not a developer, but it seems that more and more games are only using the DirectX API because Microsoft pushes it (i.e., it's easier to get information, training, and SDKs, and it is advertised everywhere).

OpenGL is an open standard and does not seem to have that type of support. But, this is just my observation so far. Maybe someone in the industry could shed some light on this...

bersl2 - Wednesday, May 4, 2005

Cuser: Your assertion I agree with, if not your reasoning.

Think about this: *Why* should Microsoft be the one controlling the API? The games they've "made" have (almost?) all been bought from somebody else. They don't make hardware either. Why is everybody being led around on a leash by the middleman?

It's frustrating when there's very little reason (and if there is a halfway decent reason, I'd like to hear about it---and don't give me that "ease of use" crap; any competent programmer can use either API rather well) not to use OpenGL over Direct3D, other than the "Nobody ever got fired for choosing Microsoft" mentality.

aliasfox - Wednesday, May 4, 2005

I'm sure Aero Glass in all its glory will want more than 64 MB VRAM. Given that a) it's Microsoft (not known for its sleek software) and b) that it's still a year away.

My reasoning? Mac OSX (don't shoot me). OSX's Aqua interface is also 3D in the absolute barest sense of the word, and the rendering engine uses the GPU for textures and such (my understanding, at least). Features such as Exposé on Panther (which has been out for ~18 months) are much, much happier in 64 MB RAM than in 32.

In addition, some of Apple's new features in Tiger need a GPU with DX9 features. I have a feeling Microsoft will do the same thing with Longhorn. But perhaps no more than 128 MB VRAM, I would hope.

fishbits - Wednesday, May 4, 2005

LOL, guess we'll have to wait and see what the real reasoning for designing the card was, and how good a buy it will be (and for whom and when). Anand nailed it when he said it was a raw deal for most of us as things stand now.