NVIDIA nForce Professional Brings Huge I/O to Opteron

by Derek Wilson on January 24, 2005 9:00 AM EST - Posted in CPUs

The Kicker(s)

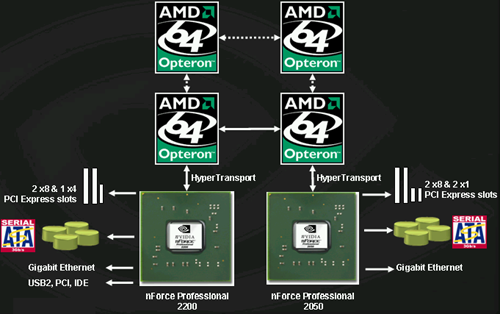

On server setups with available HyperTransport lanes to processors, up to 3 nForce Pro 2050 MCPs can be added alongside a 2200 MCP.

Because each MCP makes its own HT connection to a processor, a dual or quad processor setup is required for a server to take advantage of three 2050 MCPs (in addition to the 2200); where that much I/O is needed, the processing power is an obvious match. A single Opteron can connect to one 2200 and two 2050 MCPs for slightly less than the maximum I/O, as any available HyperTransport link can be used to connect to one of NVIDIA's MCPs. Certainly, the flexibility that AMD and NVIDIA now offer third party vendors has increased considerably.

So, what does a maximum of 4 MCPs in one system get us? Here's the list:

- 80 PCI Express lanes across 16 physical connections

- 16 SATA 3Gb/s channels

- 4 GbE interfaces
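The totals in this list scale linearly with the number of MCPs. As a rough sketch, and assuming (our inference from the totals, not a published per-chip spec) that the 2200 and 2050 each contribute the same 20 lanes, 4 physical PCIe connections, 4 SATA channels, and 1 GbE interface, the arithmetic looks like this. The names `McpResources` and `platform_totals` are ours, purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class McpResources:
    """Per-MCP I/O, inferred by dividing the article's 4-MCP totals by four."""
    pcie_lanes: int = 20        # 80 lanes / 4 MCPs
    pcie_connections: int = 4   # 16 physical connections / 4 MCPs
    sata_channels: int = 4      # 16 SATA 3Gb/s channels / 4 MCPs
    gbe_interfaces: int = 1     # 4 GbE interfaces / 4 MCPs


def platform_totals(mcp_count: int, per_mcp: McpResources = McpResources()) -> dict:
    """Sum the I/O resources of `mcp_count` MCPs (one 2200 plus 2050s)."""
    if not 1 <= mcp_count <= 4:
        raise ValueError("the article describes between 1 and 4 MCPs per system")
    return {
        "pcie_lanes": mcp_count * per_mcp.pcie_lanes,
        "pcie_connections": mcp_count * per_mcp.pcie_connections,
        "sata_channels": mcp_count * per_mcp.sata_channels,
        "gbe_interfaces": mcp_count * per_mcp.gbe_interfaces,
    }


# Maximum server configuration: one 2200 plus three 2050s.
print(platform_totals(4))
# Dual-processor workstation configuration: one 2200 plus one 2050.
print(platform_totals(2))
```

The same helper reproduces the workstation figures quoted later in the article when called with two MCPs.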

Keep in mind that these features are all part of MCPs connected directly to physical processors with 1GHz HyperTransport connections. Other onboard solutions with massive I/O have provided multiple GbE, SATA, and PCIe lanes through a south bridge, or even the PCI bus. The potential for onboard scalability with zero performance hit is staggering. We wonder if spreading out all of this I/O across multiple HyperTransport connections and processors may even help to increase performance over the traditional AMD I/O topology.

Server configurations can drop an MCP off of each processor in an SMP system.

The fact that NVIDIA's RAID can span all of the devices in the system (all 16 SATA 3Gb/s devices and the 4 PATA devices from the 2200) is quite interesting as well. Performance will be degraded by mixing PATA and SATA devices (as the system will have to wait longer on PATA drives), and most will likely want to keep RAID limited to SATA. We are excited to see what kind of performance we can get from this kind of system.
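To illustrate why a slower member drags down a mixed array, here is a deliberately simplified model of a striped (RAID 0 style) read: every member transfers an equal share of each stripe, so the stripe completes only when the slowest drive finishes. The function name and the drive rates are hypothetical placeholders, not measurements of any real drive or of NVIDIA's RAID implementation.

```python
def stripe_throughput_mb_s(member_rates_mb_s):
    """Simplified striping model: all members move equal shares of each stripe,
    so the array runs at (member count) x (rate of the slowest member)."""
    if not member_rates_mb_s:
        raise ValueError("an array needs at least one member drive")
    return len(member_rates_mb_s) * min(member_rates_mb_s)


# Hypothetical sustained rates in MB/s, chosen only for illustration.
sata_only = [60, 60, 60, 60]
mixed = sata_only + [40]  # add one slower (e.g. PATA) drive

print(stripe_throughput_mb_s(sata_only))  # four equal drives
print(stripe_throughput_mb_s(mixed))      # five drives, limited by the slowest
```

Under these assumed numbers, adding a fifth, slower drive actually lowers the modeled array throughput, which is the effect the paragraph above describes. Real arrays are affected by caching, queuing, and controller behavior that this sketch ignores.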

So, after everyone catches their breath from the initial "wow" factor of the maximum nForce Pro configuration, keep in mind that this will only be used in very high end servers using Infiniband connections, 10 GbE, and other huge I/O interfaces. Boards will be configured with multiple x8 and x4 PCIe slots. This will be a huge boon to the high end server market. We've already shown the scalability of the Opteron at the quad level to be significantly higher than Intel's Xeon (single front side bus limitations hurt large I/O), but unless Intel has some hidden card up their sleeve, this new boost will put AMD based high end servers well beyond the reach of anything that Intel could put out in the near term.

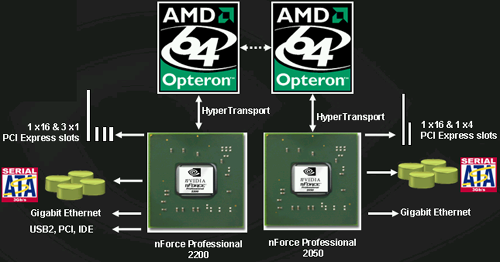

On the workstation side, NVIDIA will be pushing dual processor platforms with one 2200 and one 2050 MCP. This will allow the following:

- 40 PCI Express lanes across 8 physical connections

- 8 SATA 3Gb/s channels

- 2 GbE interfaces

This is the workstation layout for a DP NFPro system.

Again, it may be interesting to see if a vendor will come out with a single processor workstation board requiring an Opteron 2xx to enable a 2200 and 2050. This way, a single processor workstation could be had with all the advantages of the system.

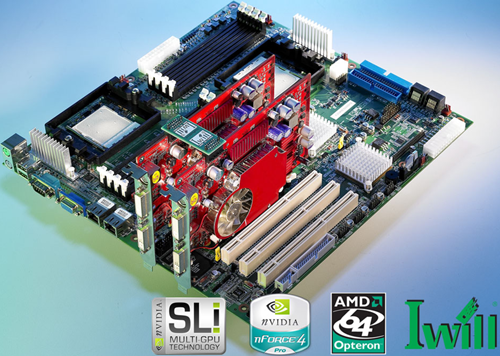

IWill is shipping their DK8ES as we speak and should have availability soon.

While NVIDIA hasn't announced SLI versions of Quadro or support for them, boards based on the nForce Pro will support SLI versions of Quadro when available. And the most attractive feature of the nForce Pro when talking about SLI is this: 2 full x16 PCI Express slots. Not only do both physical x16 slots have all x16 electrical connections, but NVIDIA has enough headroom left for an x4 slot and some x1 slots as well. In fact, this feature is big enough to be a boon without the promise of SLI in the future. The nForce Pro offers full bandwidth and support for multiple PCI Express cards, meaning that multimonitor support on PCI Express just got a shot in the arm.

One thing that's been missing since the move away from PCI graphics cards has been multiple card support in systems. Due to the nature of AGP, any second card has had to be PCI: running a PCI card alongside an AGP card works, but PCI cards offer much lower performance. Finally, those who need multiple graphics cards will have a solution with support for more than one of the latest and greatest graphics cards. This means two 9MP monitors for some, and more than two monitors for others. And each graphics card has a full x16 connection back to the rest of the system.

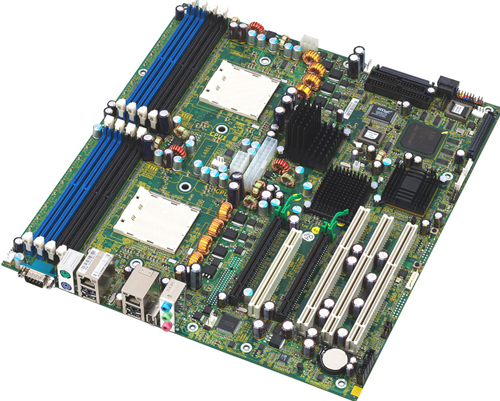

Tyan motherboards based on nForce Pro are also shipping soon according to NVIDIA.

The two x16 connections will also become important for workstation and server applications that wish to take advantage of GPUs for scientific, engineering, or other vector-based applications that lend themselves to huge arrays of parallel floating point hardware. We've seen plenty of interest in doing everything from fluid flow analysis to audio editing on graphics cards. It's the large bi-directional bandwidth of PCI Express that enables this, and now that we'll have two PCI Express graphics cards with full bandwidth, workstations have even more potential.
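For a sense of scale, the raw per-direction bandwidth of a PCI Express link can be estimated from its lane count. The sketch below assumes first-generation PCI Express signaling (2.5 GT/s per lane with 8b/10b encoding), which the article does not state explicitly; the function name is ours, and the result is a raw link rate before any packet or protocol overhead.

```python
# Assumption: first-generation PCI Express signaling.
TRANSFERS_PER_SECOND = 2_500_000_000  # 2.5 GT/s per lane, per direction
ENCODED_BITS = 10                     # 8b/10b: 10 bits on the wire...
PAYLOAD_BITS = 8                      # ...carry 8 bits of data


def link_bandwidth_mb_s(lanes: int) -> int:
    """Raw bandwidth in MB/s, per direction, for a link of the given width."""
    if lanes not in (1, 2, 4, 8, 12, 16, 32):
        raise ValueError("not a standard PCI Express link width")
    data_bits_per_second = TRANSFERS_PER_SECOND * PAYLOAD_BITS // ENCODED_BITS
    bytes_per_second = data_bits_per_second // 8
    return lanes * bytes_per_second // 1_000_000


for width in (1, 2, 4, 8, 16):
    print(f"x{width}: {link_bandwidth_mb_s(width)} MB/s per direction")
```

Because PCI Express is full duplex, the same figure applies simultaneously in each direction, which is what makes reading results back from a graphics card practical.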

Again, workstations will be able to have RAID across all 8 SATA devices and 4 PATA devices if desired. We can expect mid-range and lower end workstation solutions only to have a single nForce 2200 MCP on them. NVIDIA sees only the very high end of the market looking at pairing the 2200 with 2050 in the workstation space.

Perhaps the only downside to the huge number of potential PCI Express lanes is that NVIDIA's MCPs cannot share lanes with one another, along with a slight lack of physical PCI Express connections. In other words, it will not be possible to have a motherboard with 5 x16 PCI Express slots or 10 x8 PCI Express slots, even though there are 80 lanes available. Of course, having 4 x16 slots is nothing to sneeze at. On the flip side, it's not possible to put 1 x16, 2 x4, and 6 x1 PCIe slots on a dual processor workstation: those nine slots would use only 30 of the 40 lanes, leaving a good 10 PCI Express lanes available, but there are only eight physical connections to be had. Again, this is an extreme example, and not likely to be a real world problem. But it's worth noting nonetheless.
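The two constraints can be checked mechanically. The sketch below assumes each MCP offers 20 lanes across at most 4 physical connections (inferred by dividing the article's totals by the MCP count) and that a slot must draw all of its lanes from a single MCP. The function `fits` is a hypothetical helper of ours, using a small backtracking search to try every assignment of slots to MCPs.

```python
LANES_PER_MCP = 20        # assumption: 80 lanes / 4 MCPs
CONNECTIONS_PER_MCP = 4   # assumption: 16 physical connections / 4 MCPs


def fits(slot_widths, mcp_count):
    """Return True if every slot can be assigned to a single MCP without
    exceeding any MCP's lane budget or physical connection count."""
    slots = sorted(slot_widths, reverse=True)  # place the widest slots first
    lanes_left = [LANES_PER_MCP] * mcp_count
    conns_left = [CONNECTIONS_PER_MCP] * mcp_count

    def place(i):
        if i == len(slots):
            return True
        tried = set()
        for m in range(mcp_count):
            state = (lanes_left[m], conns_left[m])
            if state in tried:
                continue  # an MCP in an identical state was already tried
            tried.add(state)
            if lanes_left[m] >= slots[i] and conns_left[m] >= 1:
                lanes_left[m] -= slots[i]
                conns_left[m] -= 1
                if place(i + 1):
                    return True
                lanes_left[m] += slots[i]  # undo and try the next MCP
                conns_left[m] += 1
        return False

    return place(0)


print(fits([16] * 5, 4))                           # 5 x16 on four MCPs
print(fits([8] * 10, 4))                           # 10 x8 on four MCPs
print(fits([16] * 4, 4))                           # 4 x16 on four MCPs
print(fits([16, 4, 4, 1, 1, 1, 1, 1, 1], 2))       # 1 x16, 2 x4, 6 x1 on two MCPs
print(fits([16, 16, 4, 1, 1, 1], 2))               # 2 x16, 1 x4, 3 x1 on two MCPs
```

Under these assumptions, the first two layouts fail on per-MCP lane budgets and the fourth fails on connection count, while the 4 x16 server layout and the dual x16 workstation layout both fit.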

55 Comments

Googer - Tuesday, January 25, 2005

From what I have gathered, TCQ and NCQ are similar but not exactly the same thing. Kind of like how SCSI and IDE HDDs are similar but not the same.

tumbleweed - Monday, January 24, 2005

I've read before that NCQ as implemented by SATA is equivalent to the 'simple mode' of SCSI's TCQ, rather than being the same thing.

DerekWilson - Monday, January 24, 2005

#30: you cannot run 32-bit 33MHz cards at 66MHz ... There are 32-bit PCI cards that can be dropped into 64-bit 33MHz PCI slots. Not 64-bit/66MHz, and not PCI-X.

DerekWilson - Monday, January 24, 2005

When using two separate displays, 2 x2 PCIe connections are fine for two graphics cards. The system can't saturate graphics cards.

The fact that NVIDIA uses both over the top and PCIe to send data for SLI means that bandwidth does impact SLI to a point. We haven't yet seen the impact of two x16 SLI slots, but the article I linked to about NF4 Ultra modding that Wes wrote shows that x16 + x2 and x8 + x8 are close, but there is a difference.

We'll be sure to test as much as we can -- hopefully someone will stick PCIe lane configuration controls in their BIOS.

Googer - Monday, January 24, 2005

#31 TCQ has been a feature of Hitachi/IBM PATA drives for many years now, since the 120GXP, and the only controller that supports PATA TCQ is Pacific Digital's "Discstaq" ATA 100 controller with proprietary cables.

Googer - Monday, January 24, 2005

#26 sound storm lives! Chaintech nFORCE4 http://www.newegg.com/app/ViewProductDesc.asp?desc...

Googer - Monday, January 24, 2005

#12 Why wouldn't you want PCI-X for your existing PCI cards? Since it can run legacy 32-bit PCI at 66MHz instead of 33MHz, you are doubling your bandwidth. It (PCI-X) is something I am looking for on my next motherboard, along with an x16 and an x4 PCI-E slot for skt 939.

Here is an AnandTech article on the motherboard you were referring to.

http://www.anandtech.com/news/shownews.aspx?i=2370...

Jeff7181 - Monday, January 24, 2005

Jesus... 2 16X PCI Express slots... that's nutty! Yay to AMD and nVidia for building in more parallelism!

Dubb - Monday, January 24, 2005

Kris: x2 is sufficient? I thought things started to drop off around x4...coulda sworn I saw that somewhere.

I have a question though: does the scenario change if you're running separate cards as opposed to SLI? If I had the funds, I'd be looking to power a couple 9MP displays (or a 9 MP + 30" cinema) off separate 3400s or 4400s.

I'm pretty sure the 2895 (K8WE) was confirmed 16 + 16... their website claims "two x16 slots...with x16 signals"

If I was actually looking to buy though, I'd be looking to the tyan regardless - I like the layout and features better.

KristopherKubicki - Monday, January 24, 2005

Dubb: I do not even believe that the Tyan is a "true" dual 16 lane configuration, but I sent them an email and am awaiting a response.

Of course - to be honest - it doesn't matter. Two two-lane solutions are enough for modern SLI to scrape by - dual x4 or dual x8 are more than enough bandwidth for symmetric vector processing. I have a feeling full saturation of 16 lane PCIe, particularly for graphics, is a long way away.

Kristopher