NVIDIA's Scalable Link Interface: The New SLI

by Derek Wilson on June 28, 2004 2:00 PM EST - Posted in GPUs

Introduction

The major trend in computing as of late has been toward parallelism. We are seeing Hyperthreading and the promise of dual core CPUs on the main stage, and supporting technologies like NCQ that will optimize the landscape for multitasking environments. Even multi-monitor support has caught on everywhere from the home to the office.

One of the major difficulties with this is that most user space applications (especially business applications like word processors and such) are not inherently parallel. Sure, we can throw in things like check-as-you-type spelling and grammar, and we can make the OS do more and more things in the background, but the number of things going at once is still relatively small.

Performance in this type of situation is dominated by the single threaded (non-parallel) case: how fast the system can accomplish the next task that the user has issued. Expanding parallelism in this type of computing environment largely contributes to smoothness, and the perception that the system is highly responsive to the user.

But since the beginning of the PC, and for the foreseeable future, there is an aspect of computing that benefits almost linearly with parallelism: 3D graphics.

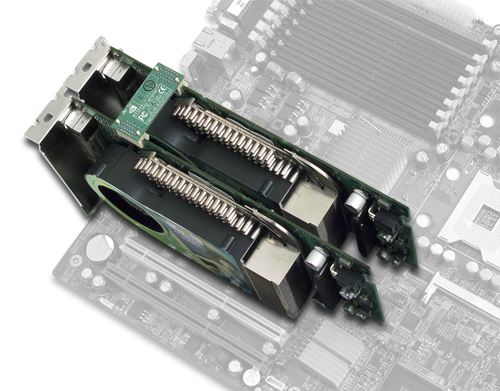

NVIDIA 6800 Ultras connected in parallel.

For an average 3D scene in a game, there will be a bunch of objects on the screen, all of which need to be translated, rotated, or scaled as required for the next frame of animation. These objects are made up of anywhere from just a few to thousands of triangles. Every triangle that we can't eliminate (clip) as unseen has to be projected onto the screen, working from back to front so that objects further from the viewer are covered by closer ones.
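To make the per-vertex work concrete, here is a minimal Python sketch of the translate, rotate, scale, and project steps described above. It is an illustration only, not how any particular GPU or driver does it: the function names, the rotation about the Y axis, the camera looking down +z, and the `focal_length` parameter are all assumptions made for the example.

```python
import math

def transform(vertex, scale, angle_y, translation):
    """Scale, rotate about the Y axis, then translate one (x, y, z) vertex.

    All names and conventions here are hypothetical, chosen for illustration.
    """
    x, y, z = (c * scale for c in vertex)
    cos_a, sin_a = math.cos(angle_y), math.sin(angle_y)
    # Rotation about the Y axis leaves y unchanged.
    x, z = x * cos_a + z * sin_a, -x * sin_a + z * cos_a
    tx, ty, tz = translation
    return (x + tx, y + ty, z + tz)

def project(vertex, focal_length=1.0):
    """Perspective-project a vertex onto the screen plane.

    Assumes the viewer sits at the origin looking down +z; points at or
    behind the viewer are rejected (returned as None).
    """
    x, y, z = vertex
    if z <= 0:
        return None
    return (focal_length * x / z, focal_length * y / z)

# Each vertex is processed independently of every other vertex,
# which is why this kind of work spreads so well across parallel hardware.
triangle = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (-1.0, 0.0, 0.0)]
moved = [transform(v, 2.0, 0.0, (0.0, 0.0, 5.0)) for v in triangle]
on_screen = [project(v) for v in moved]
```

Nothing in one vertex's result depends on another's, so the list comprehensions above could be split across as many processing units as are available.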

What really puts it into perspective (pardon the pun) is resolution. At 1600x1200, 1,920,000 pixels need to be drawn to the screen. And we haven't even mentioned texture mapping or vertex and pixel shader programs. And all that stuff needs to happen in less than 1/60th of a second to satisfy most discriminating gamers. In this case, performance is dominated by how many things can get done at one time rather than how fast one thing can be done.
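The arithmetic behind those numbers can be sketched in a few lines of Python. This is a rough lower bound under simplifying assumptions (each pixel drawn exactly once per frame, no overdraw or antialiasing); the function names are made up for the example.

```python
def pixels_per_frame(width: int, height: int) -> int:
    """Number of pixels in one full-screen frame."""
    return width * height

def pixels_per_second(width: int, height: int, fps: int) -> int:
    """Pixels drawn per second, assuming every pixel is drawn once per frame."""
    return pixels_per_frame(width, height) * fps

def frame_budget_ms(fps: int) -> float:
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps

frame = pixels_per_frame(1600, 1200)        # 1,920,000 pixels
rate = pixels_per_second(1600, 1200, 60)    # 115,200,000 pixels per second
budget = frame_budget_ms(60)                # roughly 16.7 ms per frame
```

Real workloads touch many pixels more than once and run shader programs on each, so the actual work per frame is higher than this simple count suggests.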

And if you can't pack any more parallelism onto a single bit of silicon, what better way to garner more power (and better performance) than by strapping two cards together?

40 Comments

Wonga - Monday, June 28, 2004 - link

Hey hadders, I was thinking the same thing. Surely if these cards need such fancy cooling, they need a little bit of room to actually get some air to that cooling??? And to think I used to get worried putting a PCI card next to a Voodoo Banshee...

DigitalDivine - Monday, June 28, 2004 - link

does anyone have any info if nvidia will be doing this for low end cards as well?

klah - Monday, June 28, 2004 - link

"But it is hard for us to see very many people justifying spending $1000 on two NV45 based cards even for 2x the performance of one very fast GPU"

Probably the same number of people who spend $3k-$7k on systems from Alienware, FalconNW, etc.

Alienware alone sells ~30,000 units/yr.

http://money.cnn.com/2004/03/18/commentary/game_ov...

hadders - Monday, June 28, 2004 - link

Whoops. duh. Admittedly all that hot air is being exhausted via the cooling vent at the back, but still my original thought was overall ambient temperature. I guess there would be no reason why they couldn't put that second PCIe slot further down the boards.

hadders - Monday, June 28, 2004 - link

Hmmm, to be honest I hope they would intend to widen the gap between video cards. I wouldn't think the air flow particularly good on the "second" card if it's pushed up hard against the other? And where is all that hot air being blown?

DerekWilson - Monday, June 28, 2004 - link

The thing about NVIDIA SLI is that the technology is part of the die ... It's on 6800UE, 6800U, 6800GT, and 6800 non-ultra ... It is possible that they disable the technology on lower clocked versions just like one of the quad pipes is disabled on the 12 pipe 6800 ...

The bottom line is that it wouldn't be any easier or harder for NVIDIA to implement this technology for lesser GPUs based on the NV40 core. It's a question of whether they will. It seems at this point that they aren't planning on it, but demand can always influence a company's decisions.

At the same time, I wouldn't recommend holding your breath :-)

ET - Monday, June 28, 2004 - link

Even with current games you can easily make yourself GPU limited by running 8x AA at high resolutions (or even less, but wouldn't you want the highest AA and resolution, if you could get them). Future games will be much more demanding.

What I'm really interested in is whether this will be available only at the high end, or at the mid-range, too. Buying two mid-range cards for a better-than-single-high-end result could be a nice option.

Operandi - Monday, June 28, 2004 - link

Cool idea, but aren't these high end cards CPU limited by themselves, let alone paired together?

DerekWilson - Monday, June 28, 2004 - link

I really would like some bandwidth info, and I would have mentioned it if they had offered.

That second "bare bones" PCB you are talking about is kind of what I meant when I was speaking of a dedicated slave card. Currently NVIDIA has given us no indication that this is the direction they are heading in.

KillaKilla - Monday, June 28, 2004 - link

Did they give any info as to the bandwidth between cards?

Or perhaps even to the viability of dual core cards? (Say having a standard card, and adding a separate PCB with just the bare minimum, say GPU, RAM and an interface? Figuring that this would cut a bit of the cost off of manufacturing an entirely separate card.)