NVIDIA's Scalable Link Interface: The New SLI

by Derek Wilson on June 28, 2004 2:00 PM EST, posted in GPUs

Introduction

The major trend in computing as of late has been toward parallelism. Hyperthreading and the promise of dual-core CPUs have taken the main stage, along with supporting technologies like NCQ that optimize systems for multitasking environments. Even multi-monitor support has caught on everywhere from the home to the office. One of the major difficulties with this is that most user-space applications (especially business applications like word processors) are not inherently parallel. Sure, we can throw in things like check-as-you-type spelling and grammar, and we can make the OS do more and more in the background, but the number of things going on at once is still relatively small.

Performance in this type of situation is dominated by the single threaded (non-parallel) case: how fast the system can accomplish the next task that the user has issued. Expanding parallelism in this type of computing environment largely contributes to smoothness, and the perception that the system is highly responsive to the user.

But since the beginning of the PC, and for the foreseeable future, there has been one aspect of computing whose performance scales almost linearly with parallelism: 3D graphics.

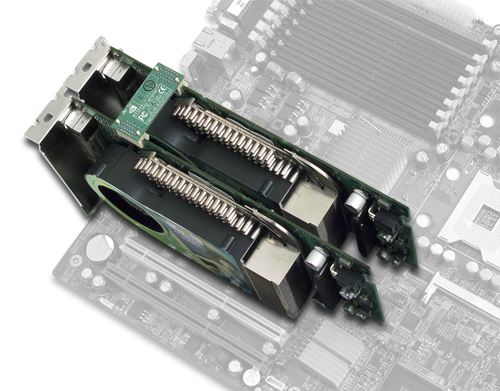

NVIDIA 6800 Ultras connected in parallel.

For an average 3D scene in a game, there will be a bunch of objects on the screen, all of which need to be translated, rotated, or scaled as the next frame of animation requires. Each object is made up of anywhere from a few to thousands of triangles. Every triangle that we can't eliminate (clip) as unseen has to be projected onto the screen and drawn from back to front, so that objects farther from the viewer are covered by closer ones.
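The per-frame work described above (transform each vertex, throw away what can't be seen, project the rest, then draw back to front) can be sketched in a few lines. This is a minimal illustration under stated assumptions: a camera at the origin looking down +z, rotation about a single axis, and whole-triangle culling in place of true clipping (a real clipper splits triangles that straddle the view volume). All function names are hypothetical. The far-to-near sort is the painter's ordering the paragraph describes; GPUs of this generation resolve most visibility with a per-pixel depth (Z) buffer rather than by sorting.

```python
import math

def rotate_y(v, angle):
    """Rotate point v = (x, y, z) about the vertical axis by `angle` radians."""
    x, y, z = v
    c, s = math.cos(angle), math.sin(angle)
    return (c * x + s * z, y, -s * x + c * z)

def to_world(v, scale, angle, offset):
    """Scale, rotate, then translate one model-space vertex."""
    x, y, z = rotate_y((v[0] * scale, v[1] * scale, v[2] * scale), angle)
    return (x + offset[0], y + offset[1], z + offset[2])

def project(v, focal, width, height):
    """Perspective-project a camera-space point onto a width x height screen."""
    x, y, z = v
    # Divide by depth: distant points land closer to the screen center.
    return (width / 2 + focal * x / z, height / 2 - focal * y / z)

def draw_order(triangles, near=0.1):
    """Assumption: drop any triangle reaching behind the near plane (crude
    culling, not real clipping), then sort the rest far-to-near."""
    visible = [t for t in triangles if all(v[2] > near for v in t)]
    return sorted(visible, key=lambda t: sum(v[2] for v in t) / 3, reverse=True)

if __name__ == "__main__":
    near_tri = ((0, 0, 2), (1, 0, 2), (0, 1, 2))
    far_tri = ((0, 0, 10), (1, 0, 10), (0, 1, 10))
    behind = ((0, 0, -1), (1, 0, 2), (0, 1, 2))
    for tri in draw_order([near_tri, behind, far_tri]):
        print([project(v, 800, 1600, 1200) for v in tri])
```

Every one of these steps is independent per vertex or per triangle, which is exactly why the work spreads so well across many pipelines, or across two cards.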

What really puts it into perspective (pardon the pun) is resolution. At 1600x1200, 1,920,000 pixels need to be drawn to the screen. And we haven't even mentioned texture mapping or vertex and pixel shader programs. All of that needs to happen in less than 1/60th of a second to satisfy the most discriminating gamers. In this case, performance is dominated by how many things can get done at one time rather than how fast one thing can be done.
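The arithmetic behind those numbers is worth spelling out. The sketch below counts each pixel exactly once, so it is a lower bound: overdraw, texturing, and shader work only add to it. The helper names are illustrative.

```python
def pixels_per_frame(width, height):
    return width * height

def pixels_per_second(width, height, fps):
    # Every pixel must be produced once per frame, fps times a second.
    return pixels_per_frame(width, height) * fps

def frame_budget_ms(fps):
    # Time available to finish one whole frame.
    return 1000.0 / fps

if __name__ == "__main__":
    rate = pixels_per_second(1600, 1200, 60)
    print(pixels_per_frame(1600, 1200))          # 1920000
    print(rate)                                  # 115200000
    print(f"{frame_budget_ms(60):.2f} ms per frame")
    print(f"{1e9 / rate:.2f} ns per pixel if done one at a time")
```

At under 9 ns per pixel, doing them one after another is hopeless; the only practical way to hit the target is to work on many pixels at once.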

And if you can't pack any more parallelism onto a single bit of silicon, what better way to garner more power (and better performance) than by strapping two cards together?

40 Comments


SpeekinSfear - Tuesday, June 29, 2004

I also prefer NVIDIA 6800s over ATI X800s (especially the GT model), but requiring two video cards to get the best performance is an inconsiderate progression. They're even encouraging devs to design stuff specially for this. It almost seems like they can't make better video cards anymore, or else like they don't care enough to try hard. Almost like they wanna slow down the video card performance pace, get everyone to buy two cards, and make money from quantity over quality. NVIDIA better ease up if they know what's good for them. They're already pushing us hard enough to get PCIe x16 mobos. If they get their heads too high up in the clouds, they may start to lose business because no one will be willing to pay for their stuff. Or maybe I'm just reading too much into this. :)

Jeff7181 - Tuesday, June 29, 2004

I thought it was a really big deal when they started combining VGA cards and 3D accelerator cards into an "all-in-one" package. Now to get peak performance you're going to have two cards again... sounds like a step back to me, not to mention a HUGE waste of hardware. If they want the power of two NV4x GPUs, make a GeForce 68,000 Super Duper Ultra Extreme that's a dual-GPU configuration.

NFactor - Tuesday, June 29, 2004

In my opinion, NVIDIA's new series of chips is more impressive than ATI's. ATI may be faster, but NVIDIA is adding new technology like an on-chip video encoder/decoder and this SLI technology. I look forward to seeing it in action.

SpeekinSfear - Tuesday, June 29, 2004

DerekWilson, I get what you're sayin'. I just think it's crazy! I try to stay somewhat up to pace, but this is just too much.

DerekWilson - Tuesday, June 29, 2004

SpeekinSfear: If you've got the money to grab a workstation board and two 6800 Ultras, I think you can spring for a couple-hundred-dollar workstation power supply. :-)

SpeekinSfear - Tuesday, June 29, 2004

I'm sorry, but I thought lots of people were having a hard enough time powering up one 6800 Ultra. Either this is absurd or I don't know something. What kind of PSU are we gonna need to pull this off?

TrogdorJW - Monday, June 28, 2004

The CPU is already doing a lot of work on the triangles. Doing a quick analysis that determines where to send a triangle shouldn't be too hard. The only difficulty is the overlapping triangles that need to be sent to both cards, and even that isn't very difficult. The load balancing is going to be of much greater benefit than the added computation, I think. Otherwise, you risk instances where 75% of the complexity is in the bottom or top half of the screen, so the actual performance boost of a solution like Alienware's would only be 33% instead of 100% (the card with the busier half still does 75% of the work, and 1/0.75 ≈ 1.33).

At one point, the article mentioned the bandwidth necessary to transfer half of a 2048x1536 frame from one card to the other. At 32-bit color, it would be 6,291,456 bytes, or 6 MB. If you were shooting for 100 FPS, the bandwidth would need to be 600 MB/s: more than PCIe x2 but less than PCIe x4, if it were run at the same clock speed as PCIe.

If the connection is something like 16 bits wide (looking at the images, that seems like a good candidate: there are 13 pins on each side, I think, so 26 pins with 10 being for grounding or other data seems like a good estimate), then the connection would need to run at 300 MHz to transfer 600 MB/s. It might simply run at the core clock speed, then, so it would handle 650 MB/s on the 6800, 700 MB/s on the GT, and 750+ MB/s on the Ultra and Ultra Extreme. Of course, how many of us even have monitors that can run at 2048x1536? At 1600x1200, you would need to be running at roughly 177 FPS or higher to max out a 650 MB/s connection.

With that in mind, I imagine benchmarks with older games like Quake 3 (games that run at higher frame rates due to lower complexity) aren't going to benefit nearly as much. I believe we're seeing well over 200 FPS at 1600x1200 with 4xAA in Q3 on high-end systems, and somehow I doubt that the SLI connection is going to be able to push enough information to enable rates of 400+ FPS. (Half of a 1600x1200x32 frame at 400 FPS would need about 1,465 MB/s of bandwidth between the cards just for the frames, not to mention any other communication.) Not that it really matters, though, except for bragging rights. :) More complex, GPU-bound games like Far Cry (and presumably Doom 3 and Half-Life 2) will probably be happy to reach even 100 FPS.
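TrogdorJW's estimates can be checked with a short sketch. It assumes a split-frame scheme with the screen cut at one horizontal line, that one card ships its finished half of every frame to the other at 32 bits per pixel, and that "MB" means 2^20 bytes (which is what makes 6,291,456 bytes exactly 6 MB). The PCIe figure is the PCIe 1.x rate of 250 MB/s per lane per direction. All function names are hypothetical; nothing here describes how NVIDIA's driver or bridge actually works.

```python
MIB = 2**20           # the comment's "MB": 6,291,456 bytes == 6 MB
PCIE1_LANE = 250e6    # PCIe 1.x: 250 MB/s per lane, per direction

def route_triangle(screen_ys, split_y):
    """Which half (or both) needs a triangle, given its vertices' screen y
    (0 = top). Straddling triangles go to both cards."""
    targets = set()
    if min(screen_ys) < split_y:
        targets.add("top")
    if max(screen_ys) >= split_y:
        targets.add("bottom")
    return targets

def split_speedup(top_share):
    """Speedup over one card when the top half holds `top_share` of the work:
    the frame is done only when the busier card finishes."""
    return 1.0 / max(top_share, 1.0 - top_share)

def half_frame_bytes(width, height, bytes_per_pixel=4):
    return width * height * bytes_per_pixel // 2

def link_bandwidth(width, height, fps, bytes_per_pixel=4):
    """Bytes/s needed to copy half of every frame across the link."""
    return half_frame_bytes(width, height, bytes_per_pixel) * fps

def clock_for(bytes_per_s, bus_bits):
    """Link clock (Hz) needed to move bytes_per_s over a bus_bits-wide link."""
    return bytes_per_s / (bus_bits / 8)

def max_fps(link_bytes_per_s, width, height, bytes_per_pixel=4):
    return link_bytes_per_s / half_frame_bytes(width, height, bytes_per_pixel)

if __name__ == "__main__":
    print(sorted(route_triangle((590, 610, 600), 600)))
    print(f"{split_speedup(0.75):.2f}x")  # 1.33x, i.e. a 33% gain, not 100%
    bw = link_bandwidth(2048, 1536, 100)
    print(f"{bw / MIB:.0f} MB/s; between PCIe x2 and x4: "
          f"{2 * PCIE1_LANE < bw < 4 * PCIE1_LANE}")
    print(f"{clock_for(600e6, 16) / 1e6:.0f} MHz")
    print(f"{max_fps(650 * MIB, 1600, 1200):.0f} FPS (binary MB), "
          f"{max_fps(650e6, 1600, 1200):.0f} FPS (decimal MB)")
    print(f"{link_bandwidth(1600, 1200, 400) / MIB:.0f} MB/s at 400 FPS")
```

Run as-is, the numbers line up with the comment: a 75/25 split yields only a 1.33x gain, the 2048x1536 case needs 600 MB/s, and a 16-bit link needs about 300 MHz to carry 600 million bytes per second. The ~177 FPS ceiling holds only with binary megabytes; with decimal megabytes it drops to about 169. The 400 FPS Quake 3 case works out to roughly 1,465 MB/s.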

glennpratt - Monday, June 28, 2004

Uhh, there's still the same number of triangles. If this is to be transparent to the games, then the cards themselves will likely split up the information. You come to some pretty serious conclusions based on exactly zero fact or logic.

hifisoftware - Monday, June 28, 2004

How much CPU load does it add? As I understand it, every triangle is analyzed to determine where it will end up (top or bottom), and then sent to the appropriate video card. This will add a huge load on the CPU. Is this thing going to be faster at all?

ZobarStyl - Monday, June 28, 2004

I completely agree with the final thought: if someone can purchase a dual-PCI-E board and a single SLI-enabled card with the plan of grabbing an identical card later on, then this will definitely work out well. Plus, once a system gets old and is relegated to other purposes (secondary rigs), you could still separate the two and have two perfectly good GPUs. I seriously hope this is what nV has in mind.